Why this theme matters now

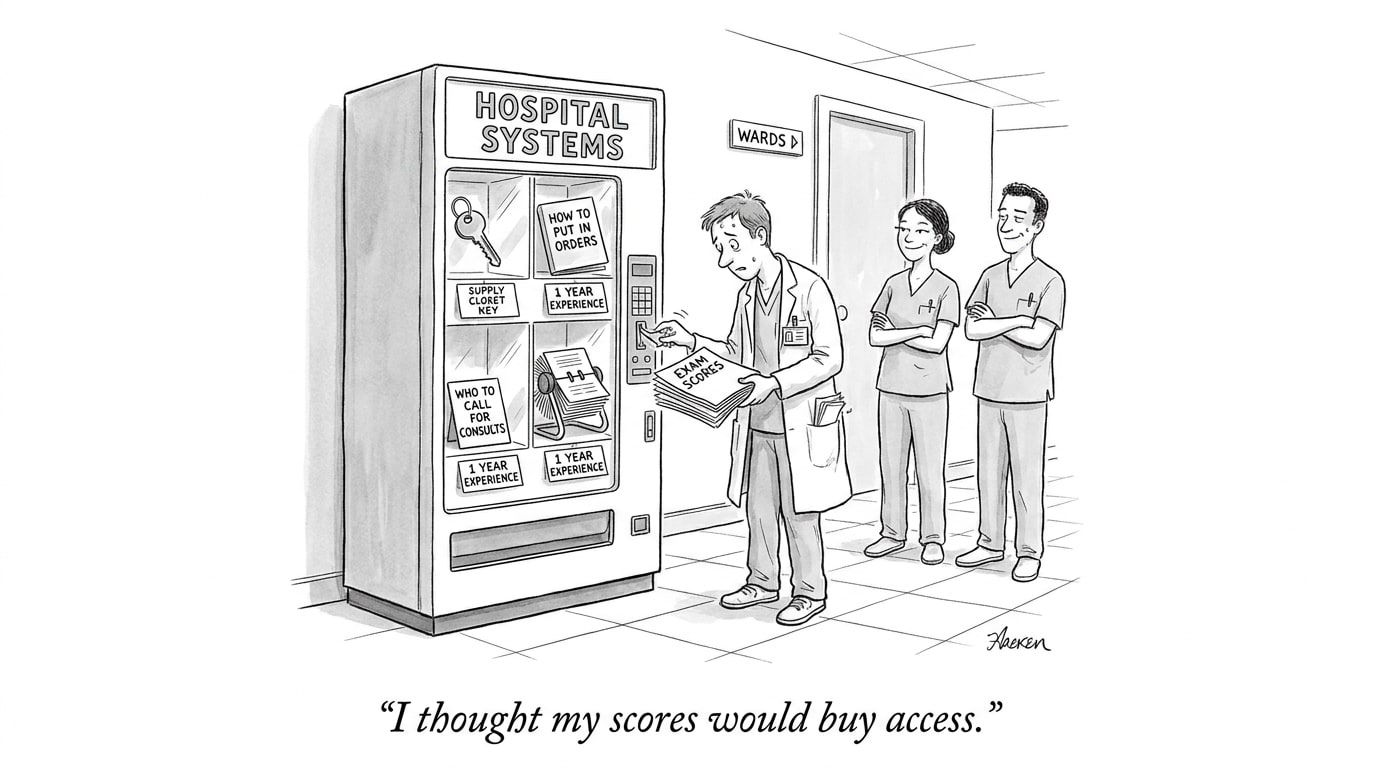

AI tools for mental health and broader clinical applications are moving from research prototypes into products and point-of-care tools. That momentum is outpacing both formal regulation and robust, standardized safety practices. At the same time, concerns about algorithmic bias and unequal outcomes are intensifying: systems trained on incomplete or skewed data can amplify disparities in access, diagnosis, and treatment. For employers, health systems, and talent teams hiring into AI-driven roles, this creates immediate operational, ethical, and reputational risks that require practical responses.

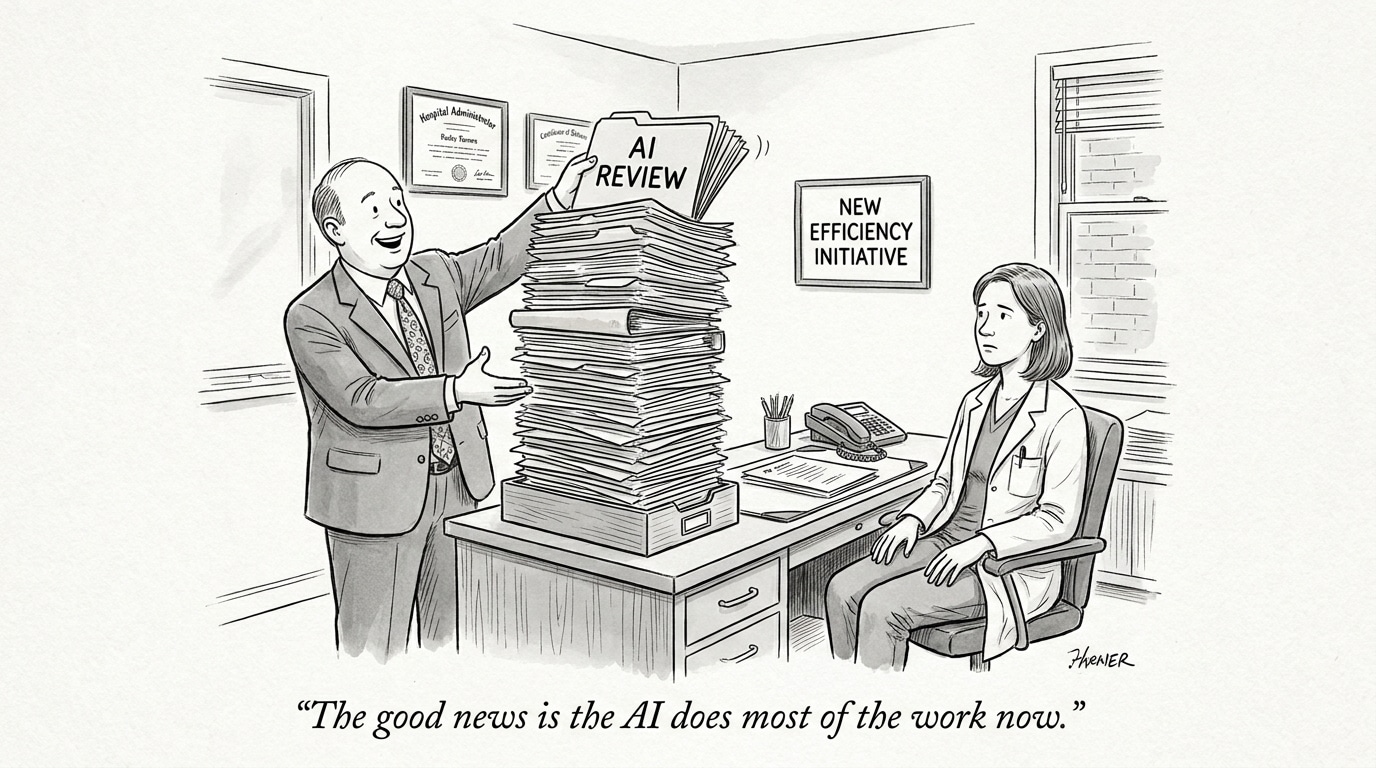

Deployment speed versus oversight gaps

Clinical AI is being adopted in settings with varying levels of clinician supervision and regulatory scrutiny. Where mental health chatbots and therapy-support systems are directly accessible to patients—sometimes without clinician triage—the margin for error narrows. Fast-moving product rollouts and commercialization can leave gaps in validation, monitoring, and escalation pathways for adverse events.

From a governance perspective, these gaps require organizations to shift from one-off approvals toward continuous oversight. That means implementing post-deployment monitoring, incident reporting, and clear escalation protocols to clinical teams. Recruitment teams and hiring managers should expect new role requirements: professionals who can operationalize safety monitoring, translate clinical risk into engineering specifications, and coordinate cross-disciplinary incident response.
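As a rough illustration of what continuous oversight could look like in code, the sketch below tracks a rolling window of sessions and signals escalation when the share of adverse events crosses a threshold. The class name `SafetyMonitor`, the window size, and the 20% threshold are all hypothetical choices for this example; real thresholds would be set by clinical governance, not by engineers alone.

```python
from collections import deque


class SafetyMonitor:
    """Hypothetical rolling-window monitor for adverse events (illustrative only)."""

    def __init__(self, window_size=100, max_adverse_rate=0.05):
        self.window_size = window_size
        self.max_adverse_rate = max_adverse_rate
        # Only the most recent `window_size` outcomes are kept.
        self.outcomes = deque(maxlen=window_size)
        # Session IDs of every adverse event, kept for incident reporting.
        self.incidents = []

    def adverse_rate(self):
        """Share of sessions in the current window flagged as adverse."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_escalate(self):
        """Escalate only once the window is full and the rate exceeds the limit."""
        window_full = len(self.outcomes) == self.window_size
        return window_full and self.adverse_rate() > self.max_adverse_rate

    def record(self, session_id, adverse):
        """Log one session outcome and report whether to escalate to clinicians."""
        self.outcomes.append(bool(adverse))
        if adverse:
            self.incidents.append(session_id)
        return self.should_escalate()


# Assumed example data: ten sessions, the last three flagged as adverse.
monitor = SafetyMonitor(window_size=10, max_adverse_rate=0.2)
flags = [False] * 7 + [True] * 3
results = [monitor.record(f"s{i}", flag) for i, flag in enumerate(flags)]
```

In this sketch the escalation signal stays off until the window is full, which avoids alarms from a handful of early sessions. A production system would likely also escalate immediately on any single severe event rather than wait for a rate to build up.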

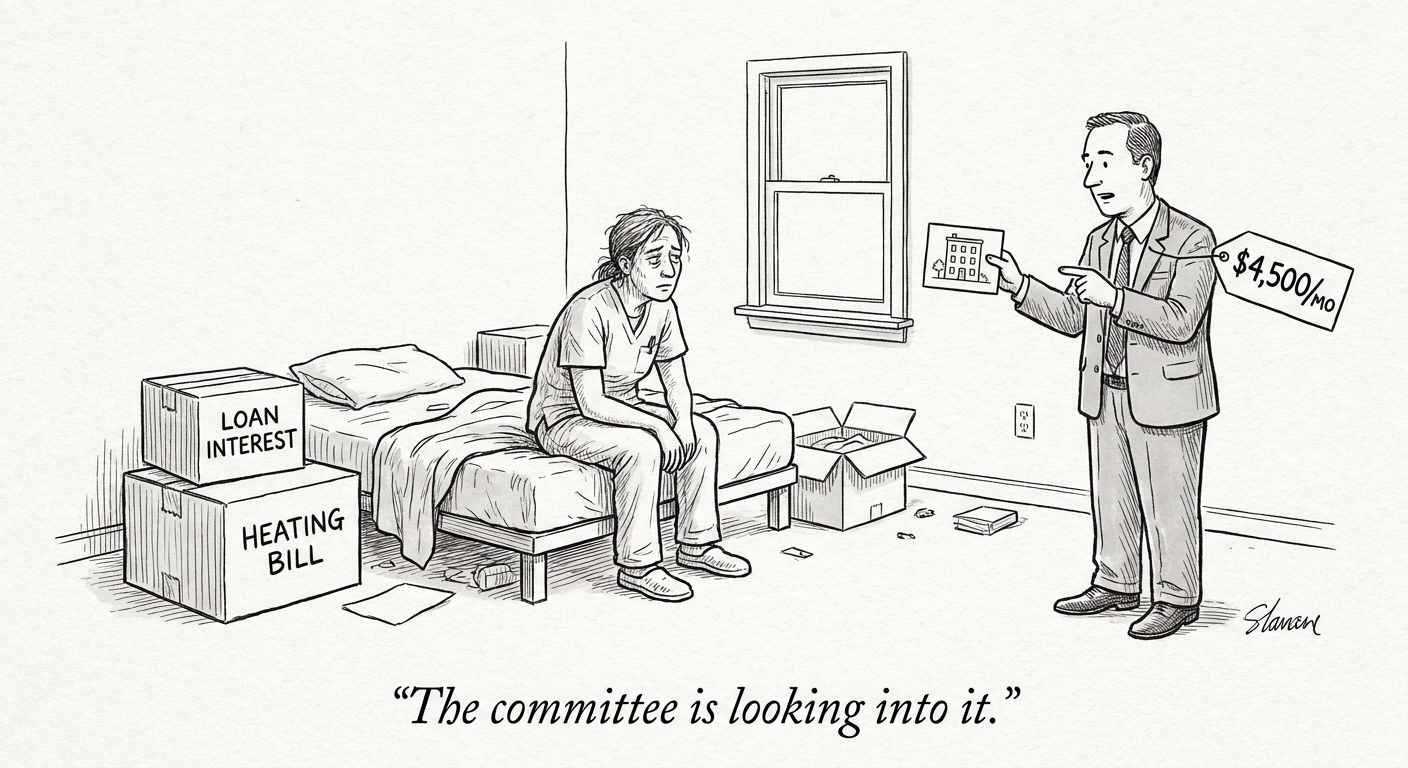

Hidden biases and inequitable outcomes

Bias in AI models is not only a technical problem; it is a systems problem. Models trained on data that underrepresent certain populations will reflect those blind spots in predictions and recommendations. In mental health, where cultural context, language nuance, and socioeconomic factors shape presentation, these blind spots are especially consequential. Unequal model performance can lead to misdiagnoses, missed crises, or inappropriate recommendations for specific demographic groups.

Addressing bias requires three complementary actions: diversifying training and validation datasets; developing metrics that go beyond aggregate accuracy to measure subgroup performance; and instituting governance that ties performance thresholds to deployment decisions. Practically, hiring practices must evolve: teams should include experts in fairness evaluation, sociotechnical analysis, and community engagement alongside data scientists and clinicians.
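To make the second and third actions concrete, here is a minimal sketch of a subgroup metric tied to a deployment decision. It computes recall (sensitivity) per subgroup and blocks deployment if any subgroup falls below a floor or the gap between subgroups is too wide. The function names, the group labels, the sample records, and the thresholds (`min_recall`, `max_gap`) are assumptions made for illustration, not values drawn from any standard.

```python
from collections import defaultdict


def subgroup_recall(records):
    """Return recall (sensitivity) per subgroup.

    Each record is a (group, y_true, y_pred) tuple with binary labels,
    where 1 means the condition (for example, a crisis flag) is present.
    Groups with no positive cases are omitted from the result.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def deployment_gate(recalls, min_recall=0.8, max_gap=0.1):
    """Pass only if every subgroup clears the floor and the spread is small."""
    worst = min(recalls.values())
    best = max(recalls.values())
    return worst >= min_recall and (best - worst) <= max_gap


# Hypothetical validation records: (group, true label, predicted label).
records = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1),
    ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0),
    ("group_b", 1, 1), ("group_b", 0, 0),
]

recalls = subgroup_recall(records)
approved = deployment_gate(recalls)
overall_accuracy = sum(y == p for _, y, p in records) / len(records)
```

With this sample data the overall accuracy is 80%, yet the model catches every positive case in `group_a` and only half of those in `group_b`, so the gate rejects deployment. That is the kind of disparity an aggregate metric hides.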

Call Out — Practical fairness: Quantifiable subgroup performance benchmarks and routine fairness audits should be treated as clinical safety measures, not optional analytics exercises. This reframes bias mitigation as part of clinical quality assurance.

Community-led guardrails and safety frameworks

Local organizations, clinicians, and advocacy groups are increasingly stepping into the governance vacuum to design pragmatic safety rules. Community-driven frameworks often focus on transparency about capabilities and limitations, informed consent, clear signposting to human support, and mechanisms for users to report harm or inaccuracies. These interventions are valuable because they translate ethical principles into operational controls that are enforceable at the product level.

For healthcare employers and platform operators, the lesson is to partner with stakeholders early: patient groups, front-line clinicians, legal counsel, and ethicists. Incorporating community feedback into product requirements reduces the likelihood of blind spots and increases legitimacy. Recruiting must therefore prioritize professionals skilled in stakeholder engagement, regulatory affairs, and human-centered design to ensure safeguards are both technically sound and socially acceptable.

Call Out — Governance staffing: Expect demand for hybrid roles—clinical safety engineers, AI risk officers, and community engagement leads—that bridge product teams and health systems. These roles translate governance into daily operational practice.

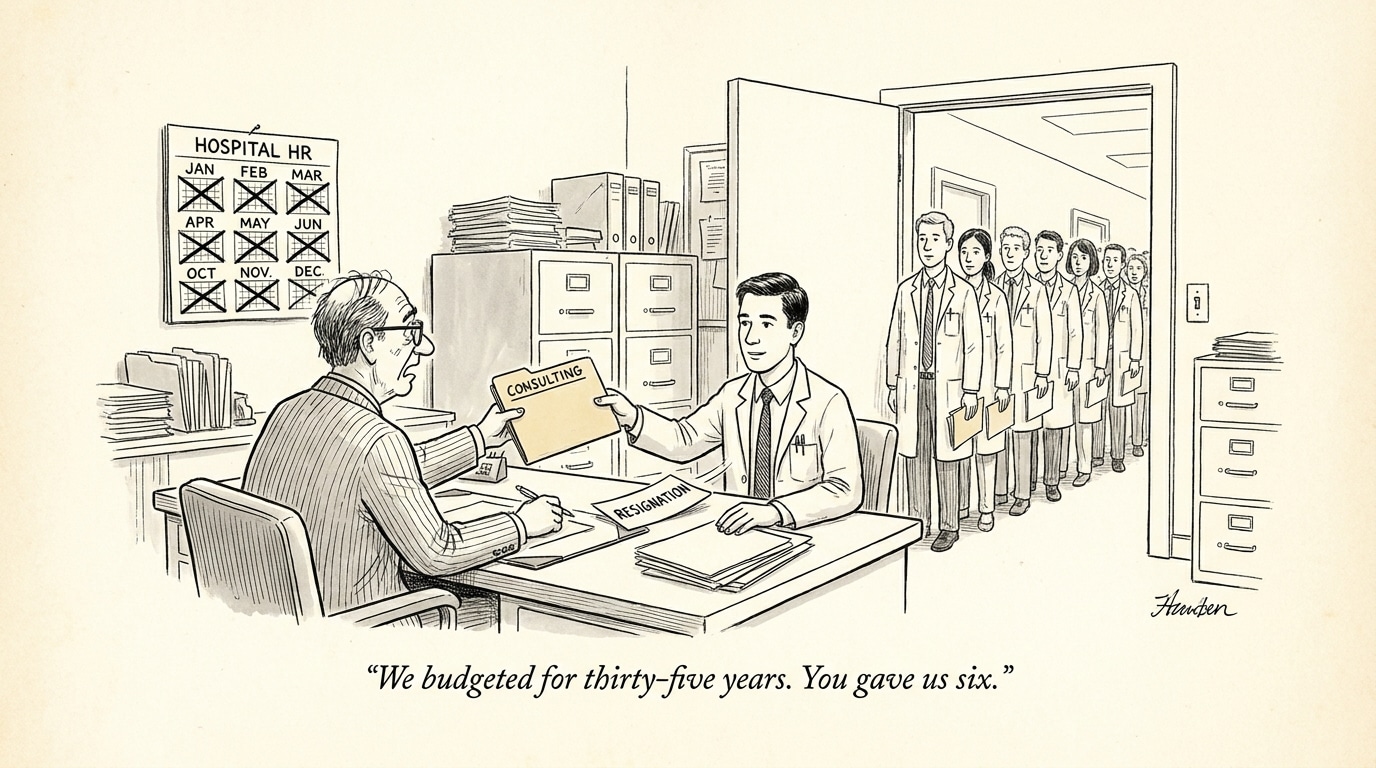

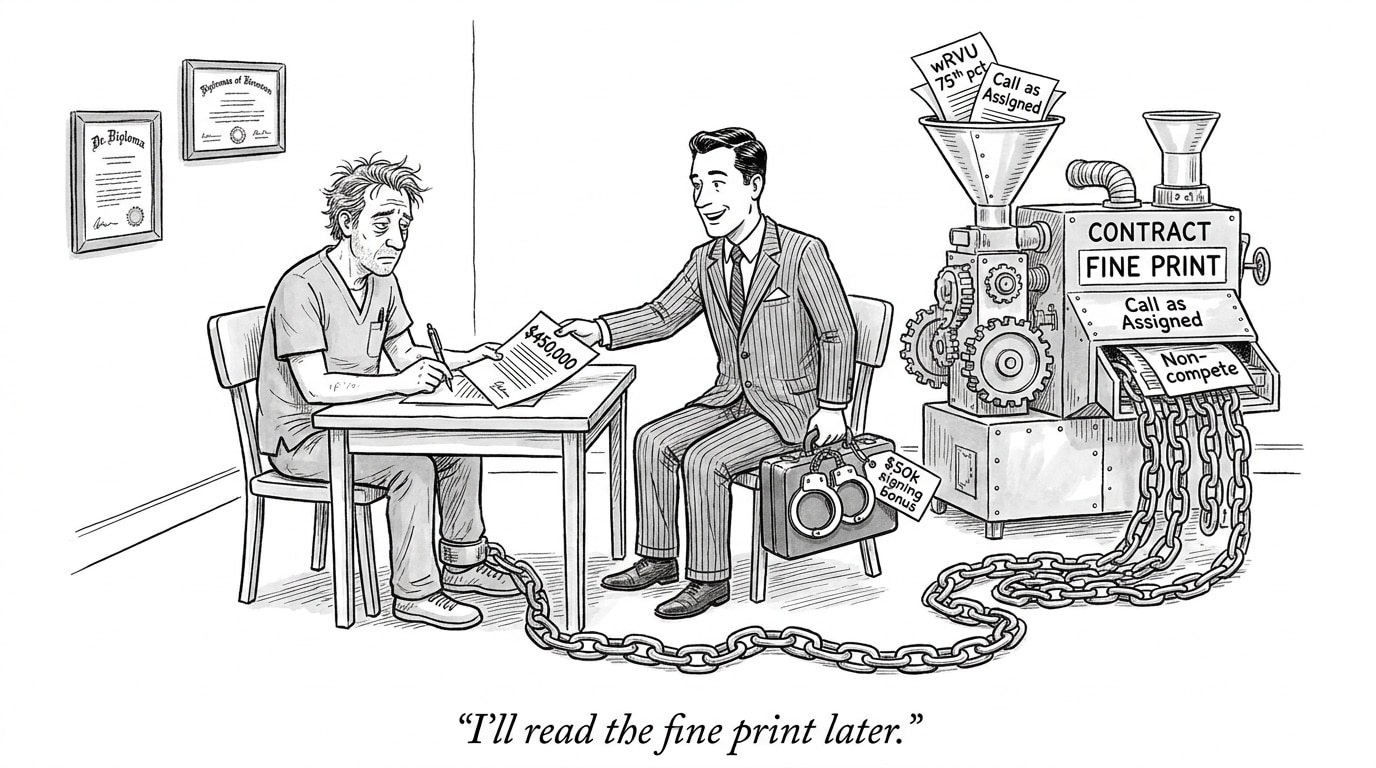

Operational and hiring implications for healthcare and recruiting

Organizations that adopt AI for mental health and other clinical use cases will need to reconfigure hiring, onboarding, and continuing education. Key capabilities to prioritize include:

- Model monitoring and incident management — staff who can detect, investigate, and remediate model failures in real time.

- Fairness evaluation and data stewardship — roles dedicated to data curation, subgroup testing, and privacy-preserving practices.

- Clinical governance and ethics — clinicians who can set safety thresholds and approve escalation workflows.

- Community and regulatory liaison — personnel who operationalize transparency obligations and manage stakeholder engagement.

Conclusion — From ad hoc fixes to institutionalized guardrails

AI’s promise in mental health is real, but so are the safety and equity risks. Addressing these risks requires moving beyond reactive measures to institutionalized governance: continuous monitoring, explicit fairness metrics, community-informed safety rules, and new cross-functional roles. Health systems and employers will need to invest in both technical capacity and governance processes to ensure AI improves care without exacerbating disparities.

For recruiting and workforce strategy, the imperative is clear: hire for the interfaces, meaning people who can translate clinical safety into engineering specs, operationalize fairness evaluations, and embed community perspectives into product life cycles. Organizations that build these guardrails now will reduce clinical risk, strengthen public trust, and better deliver on AI’s potential to augment, rather than undermine, equitable care.

Sources

Seattle nonprofit helps set safety rules as AI therapy booms – Axios