This analysis synthesizes 12 sources published the week ending May 12, 2026. Editorial analysis by the PhysEmp Editorial Team.

Headlines claiming that AI now equals or beats doctors at diagnosis have created predictable panic. What gets less attention is how these capabilities will change what employers expect from physicians, how they pay them, and what counts as clinical work. Those issues sit at the center of AI in Physician Employment & Clinical Practice, where diagnostic automation collides with workforce economics and professional leverage.

What diagnostic parity actually looks like

Recent studies show AI systems reaching physician-level accuracy on many clinical benchmarks. But there’s a difference between matching performance in controlled tests and operating in a clinic. These systems are strong at pattern recognition—reading images, combining lab results, producing differentials from structured inputs. They struggle with contextual judgment: the patient who downplays symptoms, a housing situation that makes a treatment plan impossible, or the uneasy feeling a clinician gets when something doesn’t add up.

That gap matters for employed physicians. Across governance proposals, state rules like Georgia’s human-oversight law, and polls of practicing clinicians, the message is consistent: AI supplements clinical judgment; it does not substitute for it. For doctors considering jobs, the issue becomes how organizations will fold these tools into productivity targets, documentation requirements, and compensation formulas.

Physicians entering AI-integrated settings should ask how diagnostic automation will change expected patient volumes, who will bear the work of validating AI outputs, and whether pay models recognize that validation as skilled clinical labor.

The productivity paradox

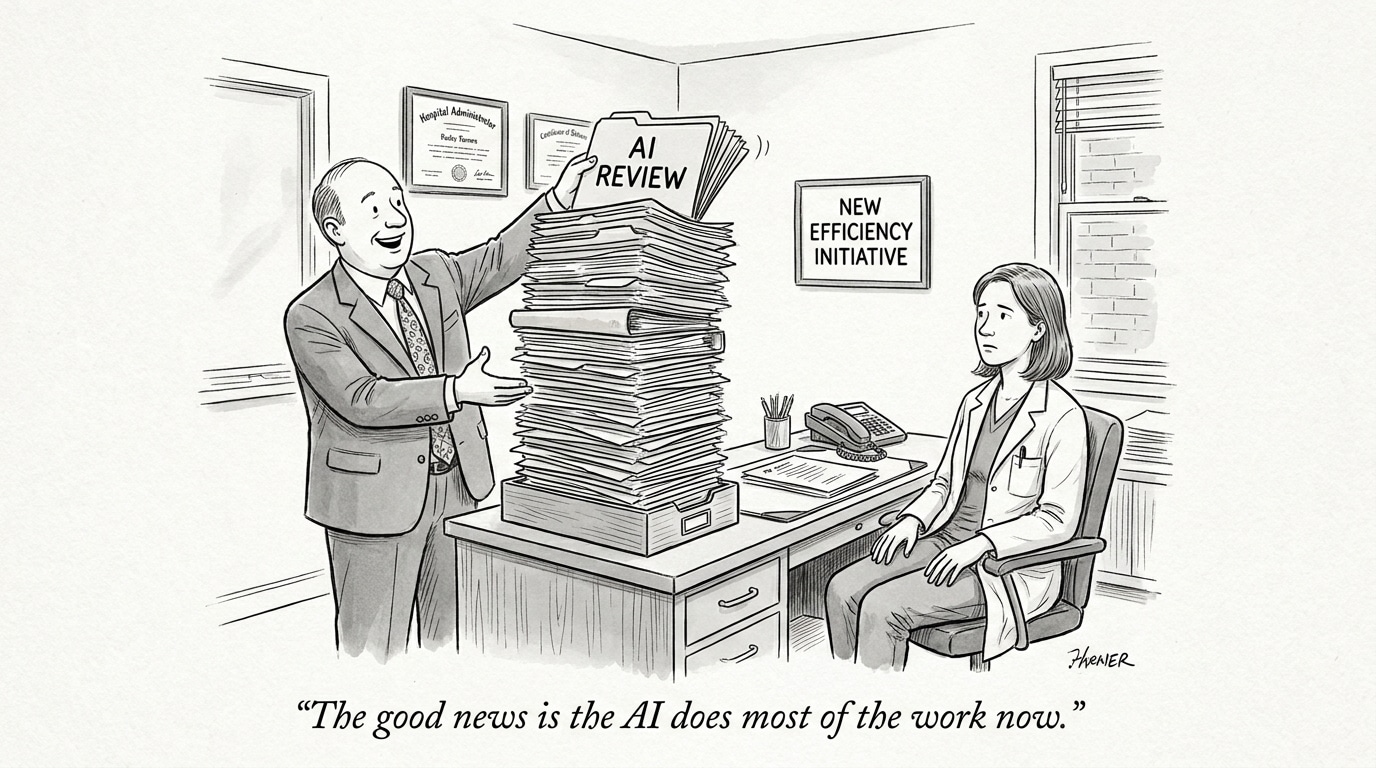

AI can speed up parts of diagnosis and thus increase possible throughput. Administrators may see an easy efficiency win. Clinicians see new work: reviewing AI suggestions, spotting edge cases, and retaining responsibility for decisions. That review takes time and focus; it doesn’t vanish because an algorithm produced the first pass.

A Medical Economics survey of 103 physicians shows resistance to being reduced to rubber stamps. UC Davis Health has argued that human review is the step that catches AI failures before they cause harm. Health systems must decide whether the gains they hope for will come with adequate checks, or whether oversight will be trimmed away in pursuit of volume.

Hiring implications are immediate. Roles in AI-enabled practices increasingly demand comfort with validating tools, knowing when to override a recommendation, and documenting those choices. Those are different skills from traditional diagnostic training, and recruitment and onboarding should reflect that.

Governance and accountability

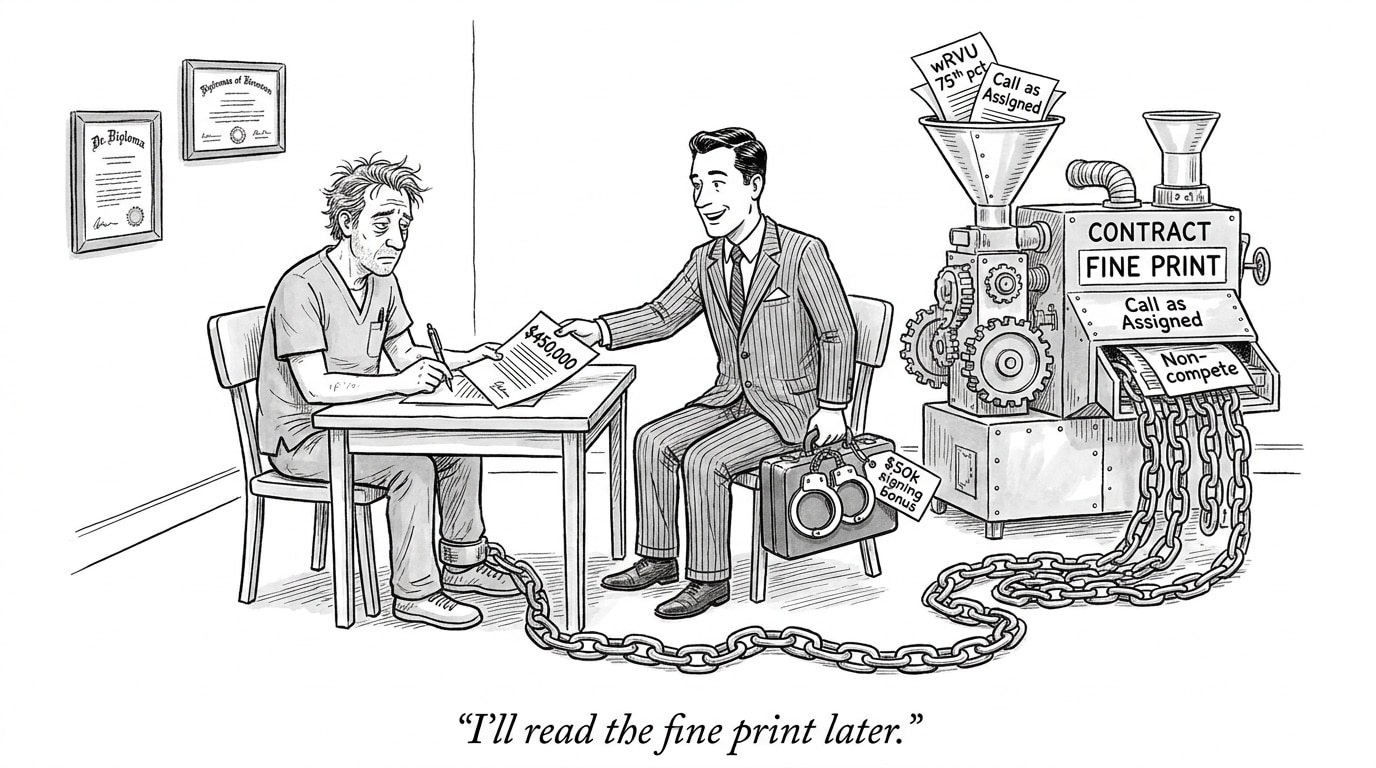

Talk of licensing diagnostic algorithms—treating them like entities requiring credentialing—signals practical change. If software needs formal oversight protocols, someone has to provide it. That could boost physician leverage if employers fund the work and adjust pay. Or it could become an unfunded mandate, quietly added to job descriptions.

Georgia’s move to keep humans involved in AI healthcare decisions offers a template others may copy. Requirements like this protect jobs from pure automation while creating new layers of responsibility. Physicians negotiating contracts today should be explicit about how liability and oversight duties will be shared when AI recommendations enter the record.

Doctors who press for clear terms around AI oversight—and for compensation that reflects the cognitive and legal burden of validating algorithmic output—will be better off than those who accept vague language about “working with AI.”

How compensation models will be tested

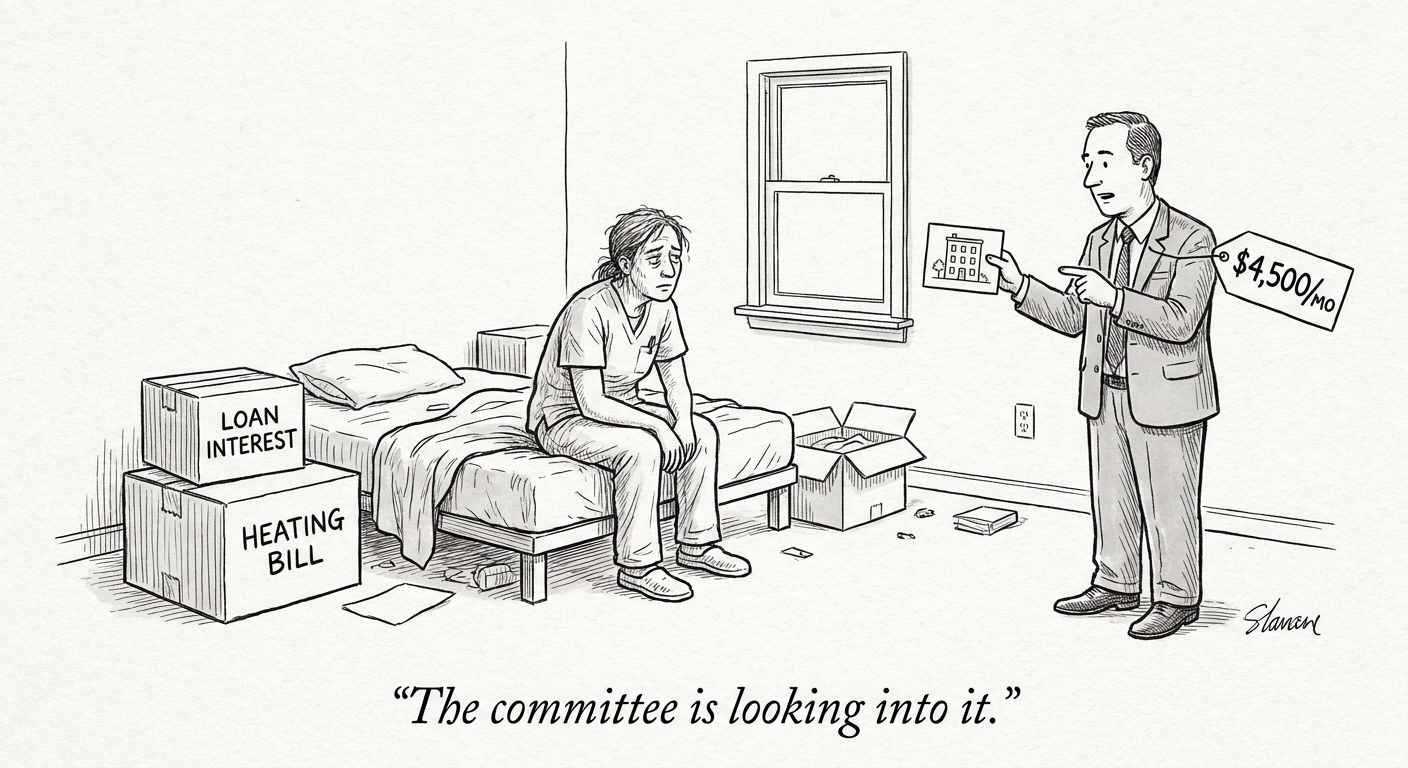

Most current pay systems, whether built on relative value units (RVUs), salaries, or hybrids, assume that humans do the work. If an algorithm provides the initial diagnosis, who gets credit for that productivity? If physicians spend more time checking AI work than producing assessments from scratch, how should their pay change?

Some employers will treat AI as a pure efficiency play and expect equal or higher productivity without extra pay. Others will acknowledge oversight as skilled clinical work and pay for it. Candidates should be prepared to ask employers specific questions: which tools are in use, how oversight tasks are allocated, and whether compensation covers AI-validation time.

How physicians can position themselves

There’s a window now, before AI integration becomes routine. Physicians who learn how these tools work—their strengths, their failure modes, and where they need human correction—will have bargaining power. That doesn’t mean embracing every new feature uncritically. It means informed engagement: pushing for reasonable productivity expectations, documented oversight roles, and pay that reflects the full scope of augmented clinical work.

Physician arguments that AI can’t replace judgment aren’t nostalgia. They’re a practical stance about the work health systems will need to support if they want safe deployment rather than firefighting after avoidable errors.

Looking ahead

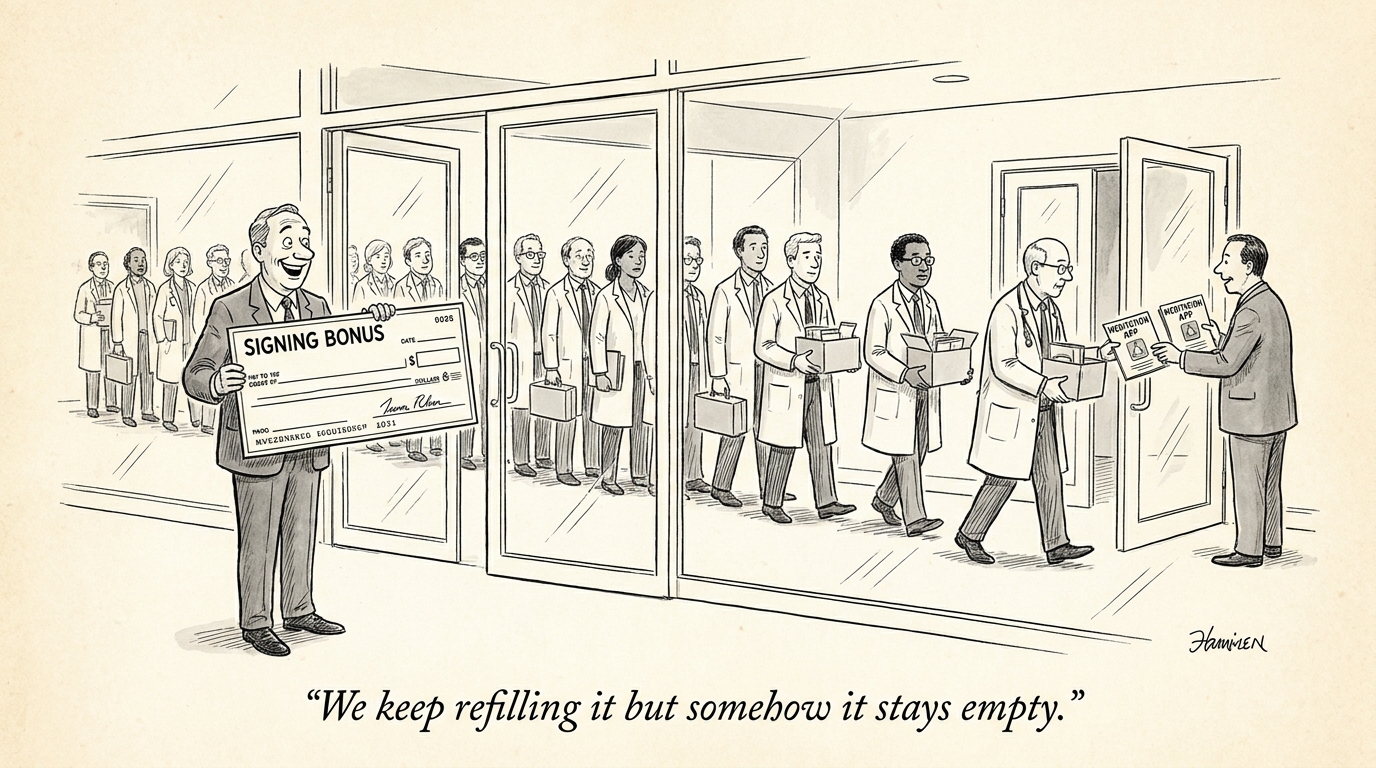

Diagnostic AI parity is an inflection point. Employment structures, pay models, and hiring tactics will shift as the tools improve and oversight rules harden. Some physicians will be squeezed by employers that treat AI as a way to boost numbers without investing in oversight. Others will win clearer contracts and new compensation lines for AI-validation work.

Health system leaders face a choice: invest in oversight infrastructure, update pay models, and recruit clinicians who can do augmented work—or accept the legal and clinical friction that follows when technology outpaces organizational design. Expect disputes in meetings, late-night workflow rewrites, and at least one malpractice case that forces everyone to get specific about who did what and why.

Picture a night intern scrolling through an AI-generated differential while an IV pump beeps in the corner. The outputs are fast; the decisions are not. That mess is where the next phase of clinical practice will be decided.

Sources

Did AI Really Beat Doctors at Diagnosis? – STAT

AI diagnostic reasoning nears physician performance – News-Medical.net

Medical AI matches doctors on diagnoses — but that doesn’t make it safe – Earth.com

AI in the emergency department: promising, powerful but still unproven – The Conversation

Why human review is key to the success of AI in health care – UC Davis Health

Physician Argues AI Cannot Replace Doctors’ Judgment – LetsDataScience

What 103 Physicians Really Think About AI in Medicine – Medical Economics

Georgia moves to keep humans involved in AI healthcare decisions – WSB Radio

Risk of keeping humans in healthcare AI’s loop – Healthcare Finance News

AI ‘doctors’ should be licensed — here’s a framework to do it – HealthLeaders Media

AI Diagnostic Reasoning Nears Physician Performance – Rama on Healthcare