Why this theme matters now

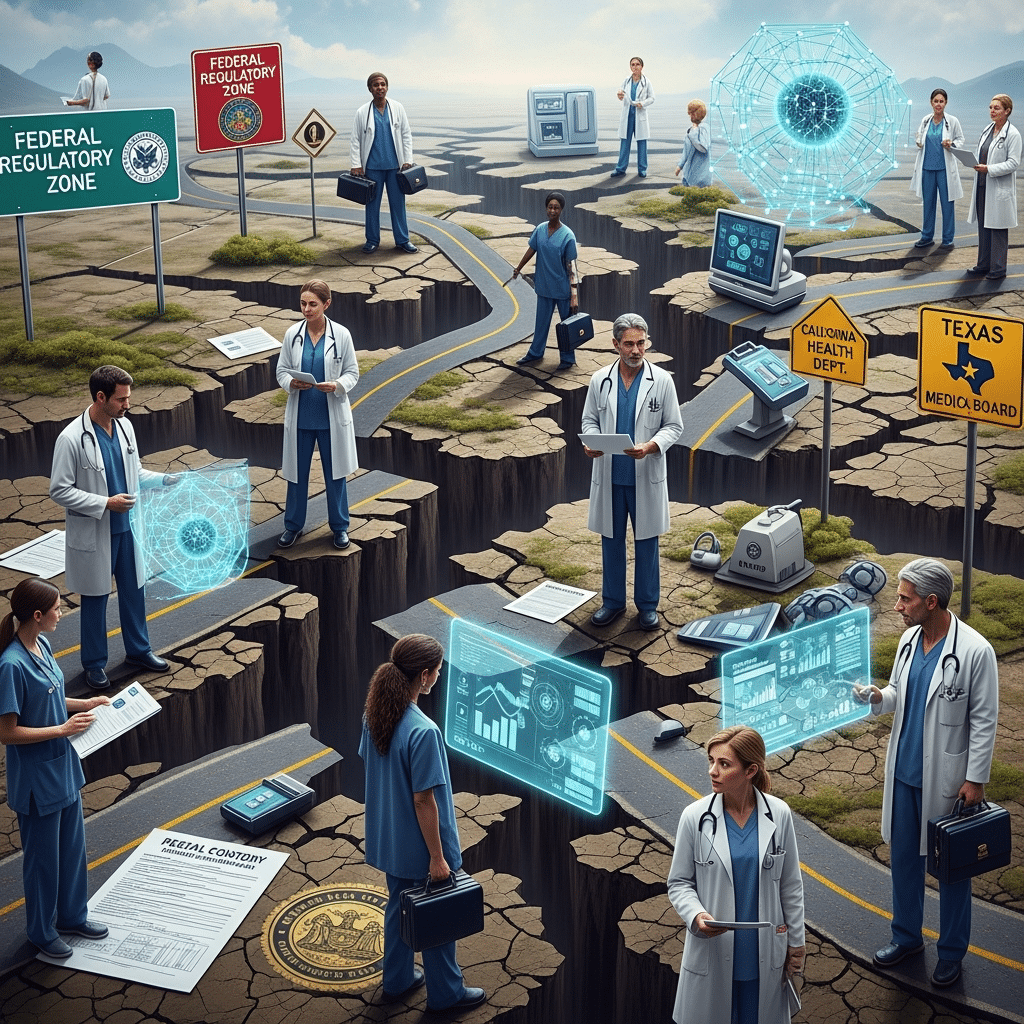

Healthcare organizations are being pulled in two directions: federal health authorities are signaling support for faster AI adoption across clinical workflows, while state and local authorities are introducing numerous, divergent rules that change how AI can be purchased, configured, and used. That tension has immediate operational consequences for CIOs, compliance teams, clinicians, and recruiters trying to staff AI programs. The coming 12–24 months will likely decide whether institutions can safely scale clinical AI or whether regulatory friction leaves promising systems on the shelf.

Federal momentum vs. state fragmentation

At the national level, health regulators, including HHS, are emphasizing pathways to bring AI into clinical workflows by creating incentives, issuing guidance, and setting clearer expectations around safety and efficacy. That direction reduces one category of uncertainty (whether national regulators will support clinical AI at all) and encourages vendors and provider organizations to prioritize AI-enabled care models.

At the same time, state legislatures and local regulators are advancing an array of distinct mandates covering explainability, data use, algorithmic auditing, consumer notification, and restrictions on certain types of automated decision-making. The result is a multijurisdictional mosaic: a single vendor may face different operational constraints in neighboring states, and a health system that spans multiple states may need region-specific deployments or feature flags.

Call Out: Federal support removes some national-level uncertainty, but regulatory diversity across states imposes operational and legal complexity that can negate these federal incentives unless systems are designed for jurisdictional variability.

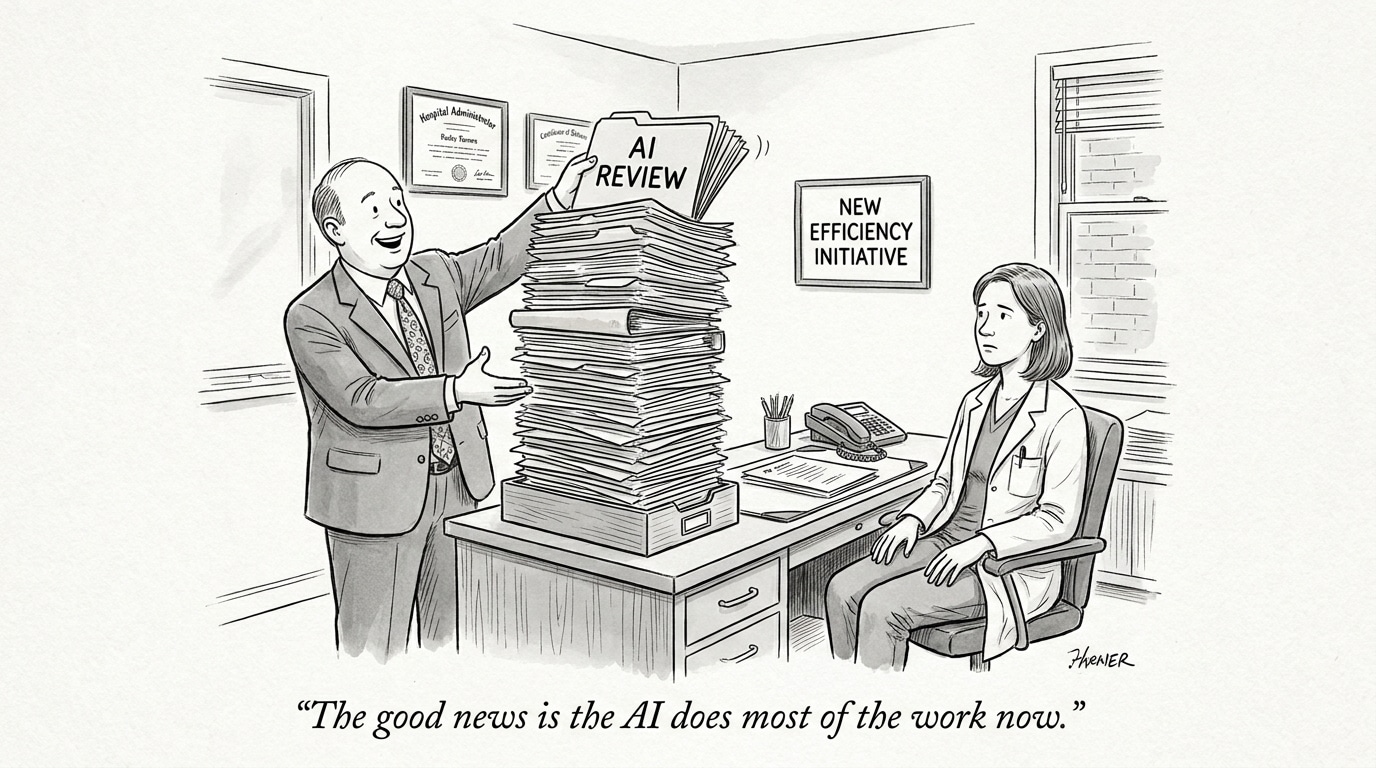

Operational impact on CIOs and technology procurement

Practical consequences for technology leaders include configuration complexity, fractured testing regimes, expanded legal review, and higher total cost of ownership. When states impose conflicting requirements (for example, different standards for model explainability, or disparate documentation and reporting obligations), CIOs face three blunt options: maintain multiple versions of the same system; restrict deployments to a single lowest-common-denominator configuration that satisfies the strictest rule everywhere; or halt rollouts until legal clarity emerges.

Each option has trade-offs. Multiple versions increase engineering and validation work and create branching technical debt. A lowest-common-denominator approach limits clinical utility and undermines ROI. Deployment delays, meanwhile, slow clinical learning and reduce how quickly organizations can iterate on performance and safety.
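To make the lowest-common-denominator option concrete, the sketch below merges several jurisdictions' requirements into one configuration by keeping the most restrictive value of each setting. It is a minimal illustration under stated assumptions: the `Policy` fields, the `STATE_A`/`STATE_B`/`STATE_C` codes, and their values are hypothetical placeholders, not a summary of any actual state law.

```python
from dataclasses import dataclass
from functools import reduce


# Hypothetical per-jurisdiction requirements; field names are illustrative,
# not drawn from any actual statute.
@dataclass(frozen=True)
class Policy:
    require_explanation: bool        # must clinicians see a model rationale?
    require_patient_notice: bool     # must patients be told AI was used?
    audit_interval_days: int         # maximum days between performance audits
    allow_automated_decision: bool   # may the model act without human sign-off?


def stricter(a: Policy, b: Policy) -> Policy:
    """Keep the more restrictive value of each field."""
    return Policy(
        require_explanation=a.require_explanation or b.require_explanation,
        require_patient_notice=a.require_patient_notice or b.require_patient_notice,
        audit_interval_days=min(a.audit_interval_days, b.audit_interval_days),
        allow_automated_decision=a.allow_automated_decision and b.allow_automated_decision,
    )


def lowest_common_denominator(policies: dict[str, Policy]) -> Policy:
    """Return one configuration that satisfies every listed jurisdiction."""
    if not policies:
        raise ValueError("at least one jurisdiction is required")
    return reduce(stricter, policies.values())


# Placeholder jurisdictions and values, for illustration only.
policies = {
    "STATE_A": Policy(True, False, 180, True),
    "STATE_B": Policy(False, True, 90, True),
    "STATE_C": Policy(False, False, 365, False),
}

merged = lowest_common_denominator(policies)
# merged: every requirement switched on, 90-day audits, automated decisions off
print(merged)
```

This merge only works when every rule can be ordered from lenient to strict. Requirements that genuinely conflict (for instance, one jurisdiction restricting the use of demographic data that another expects in fairness analyses) have no single strictest value, which is when the multiple-version or delay options come back into play.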

Clinical deployment, safety, and equity trade-offs

Clinical AI systems typically require continuous monitoring, post-deployment auditing, and access to high-quality data. Varied state rules can affect what data can be used for retraining, how audits must be conducted, and whether certain demographic factors can be considered in fairness analyses. That variation can undermine both the ability to detect model drift and the capacity to remediate performance gaps that affect vulnerable populations.

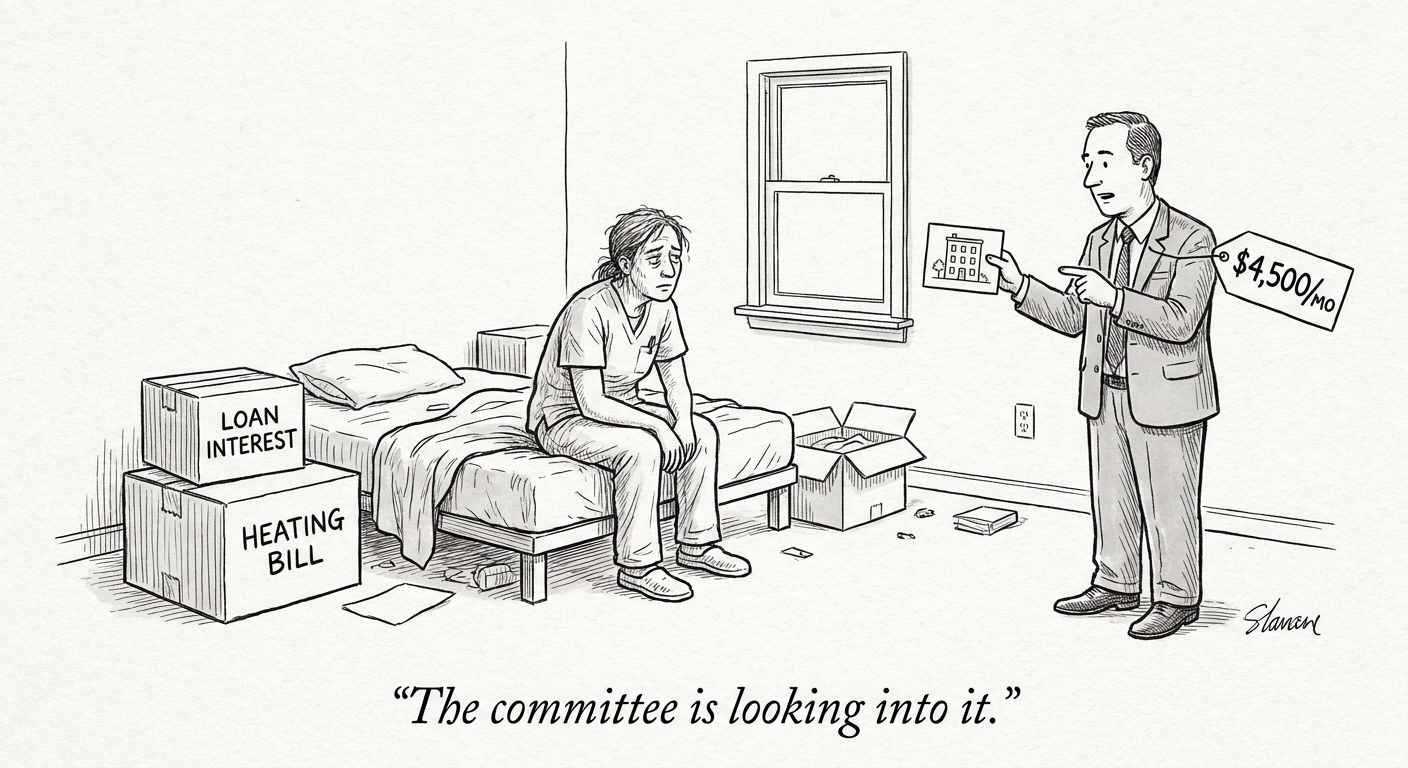

Moreover, inconsistent notification and consent rules shape clinician workflows and patient interactions. If some states require more extensive disclosure or opt-in processes, clinicians who practice across state lines must switch between different disclosure and consent procedures, raising the risk of confusion, workflow friction, and clinician burnout.

Call Out: Divergent state requirements can force difficult clinical trade-offs: either constrain model capabilities to meet the strictest rule, or accept operational complexity that increases risk and cost. Neither path is neutral for patient safety or equity.

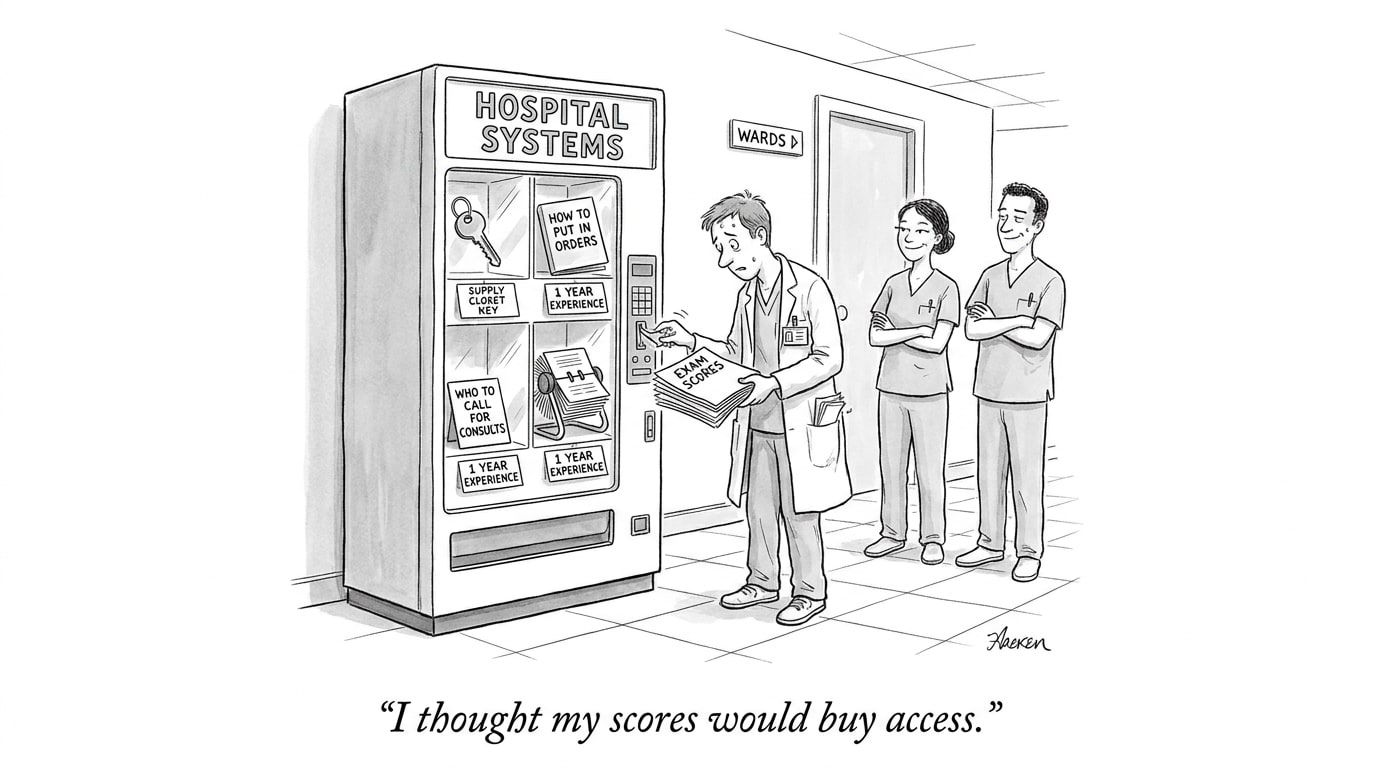

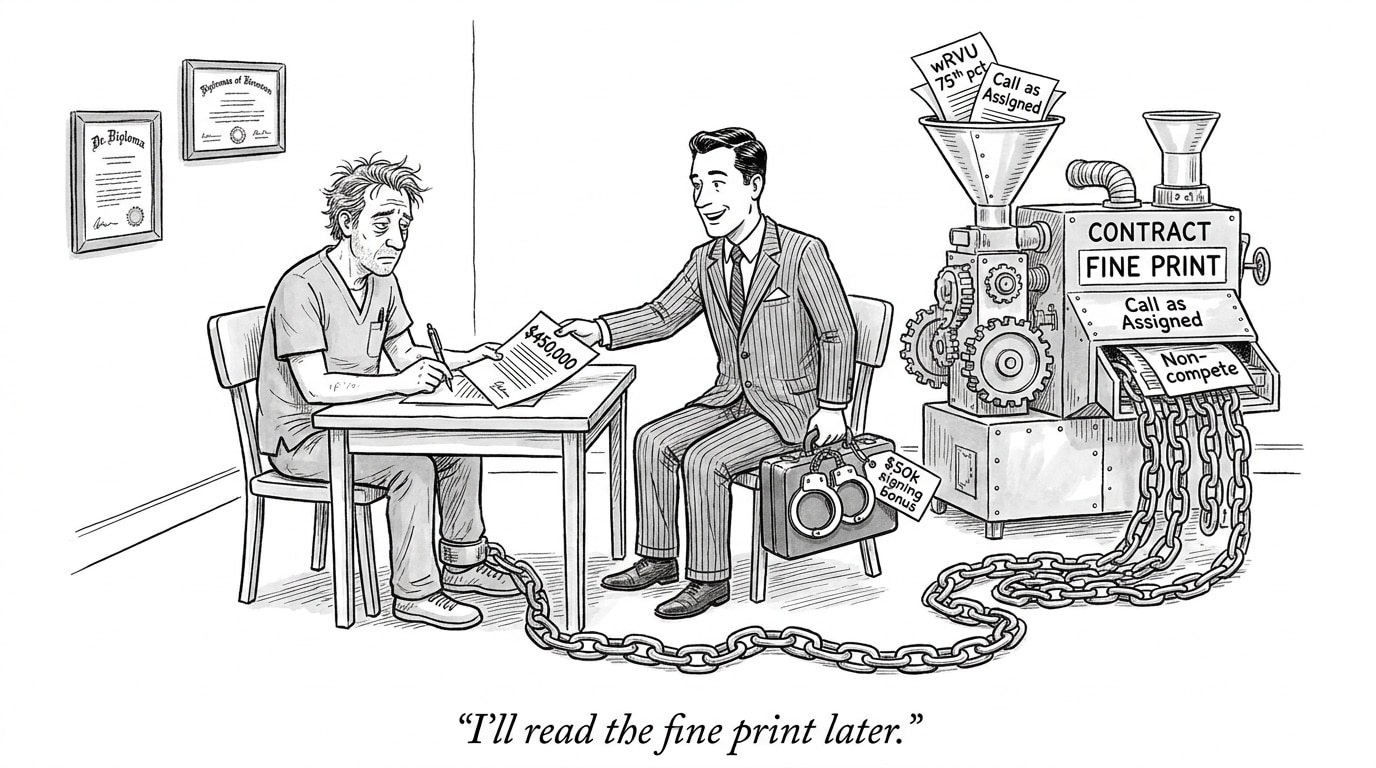

Workforce and recruiting implications

The regulatory patchwork creates a new demand profile for healthcare teams. Organizations will need people who combine clinical domain knowledge with legal and technical fluency: clinician-operators who understand the clinical context and regulatory requirements; compliance specialists who can translate jurisdictional rules into system-level guardrails; ML engineers and MLOps professionals who design configurable, auditable deployments; and procurement leaders who can negotiate contracts that account for multi-jurisdiction compliance.

For recruiting, this means two shifts. First, the profile of “must-have” skills will broaden: cross-functional communication and policy literacy will be as valuable as model-building expertise. Second, talent demand will be geographically uneven: organizations in states with more restrictive rules will compete for staff who can implement complex, audited systems. That creates opportunities for centralized hiring strategies, cross-state talent pools, and platforms that quickly match candidates to specialized AI-healthcare roles.

Policy design, vendor strategies, and strategic options

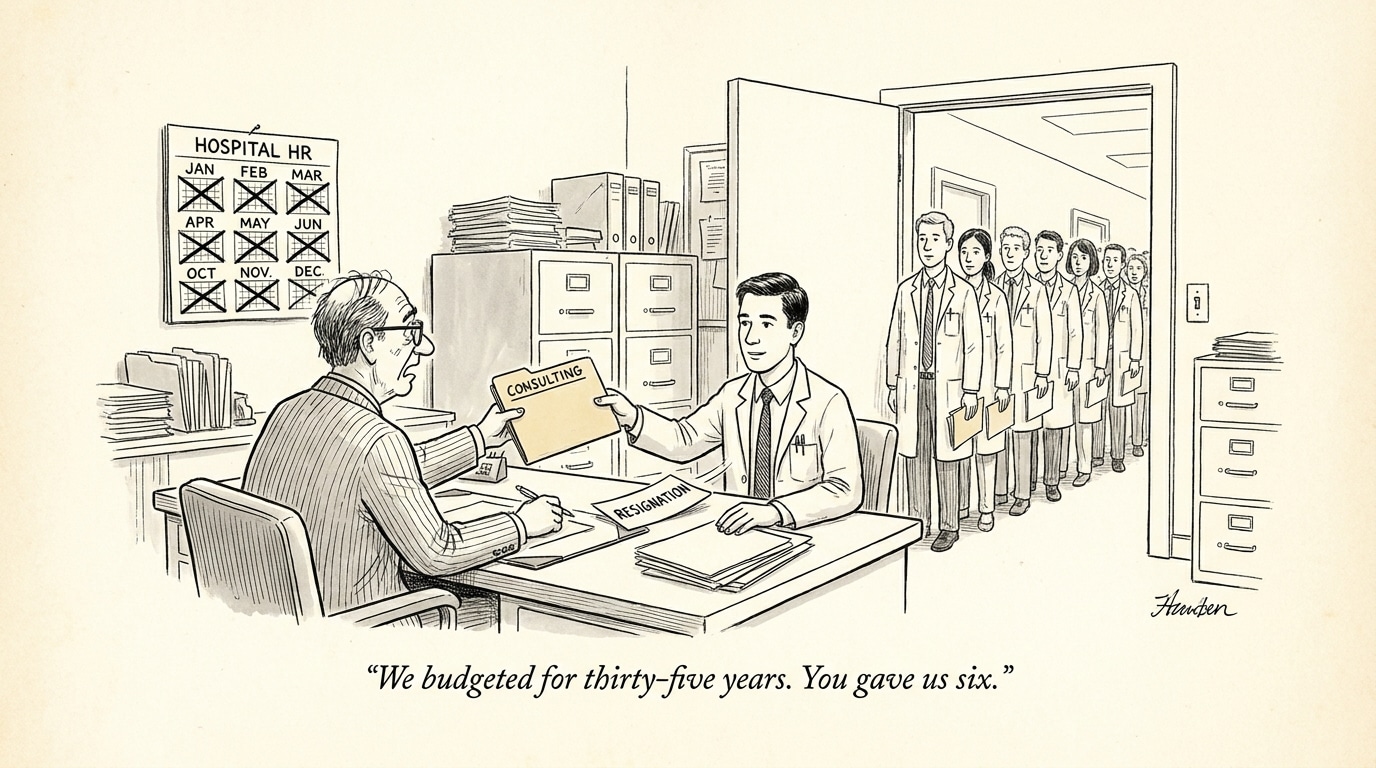

Vendors and health systems have several strategic responses. Some will standardize on the strictest common requirements and market safety-first products, which are easier for multi-state health systems to adopt but less differentiated clinically. Others will build modular, jurisdiction-aware architectures that support feature toggles and locale-specific auditing, at the cost of greater engineering complexity. A third path is active policy engagement: working with coalitions, trade groups, and regulators to advocate for harmonized rules or interoperable compliance frameworks.
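A jurisdiction-aware architecture can be as simple as layering per-jurisdiction overrides on a shared set of feature flags and recording which configuration each deployment received. The Python sketch below is a minimal illustration, assuming hypothetical flag names (`sepsis_risk_score`, `auto_triage_suggestions`, and so on), placeholder jurisdiction codes, and an in-memory audit log standing in for whatever durable audit store a real deployment would use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical feature flags for a clinical AI deployment (illustrative names only).
BASE_FLAGS = {
    "sepsis_risk_score": True,
    "auto_triage_suggestions": True,
    "show_model_rationale": False,
    "patient_ai_disclosure": False,
}

# Hypothetical per-jurisdiction overrides layered on top of BASE_FLAGS.
JURISDICTION_OVERRIDES = {
    "STATE_A": {"show_model_rationale": True},
    "STATE_B": {"auto_triage_suggestions": False, "patient_ai_disclosure": True},
}


@dataclass
class AuditLog:
    """In-memory stand-in for a real, durable audit store."""
    entries: list[dict] = field(default_factory=list)

    def record(self, jurisdiction: str, flags: dict[str, bool]) -> None:
        # Store a copy so later changes to `flags` cannot rewrite history.
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "jurisdiction": jurisdiction,
            "flags": dict(flags),
        })


def resolve_flags(jurisdiction: str, audit: AuditLog) -> dict[str, bool]:
    """Apply a jurisdiction's overrides to the base flags and log the result."""
    overrides = JURISDICTION_OVERRIDES.get(jurisdiction, {})
    unknown = set(overrides) - set(BASE_FLAGS)
    if unknown:
        # Reject misspelled or unsupported flag names instead of ignoring them.
        raise KeyError(f"unknown flags for {jurisdiction}: {sorted(unknown)}")
    flags = {**BASE_FLAGS, **overrides}  # later dict wins, so overrides take precedence
    audit.record(jurisdiction, flags)
    return flags


audit = AuditLog()
flags_b = resolve_flags("STATE_B", audit)
flags_x = resolve_flags("STATE_X", audit)  # no overrides listed: base flags apply
print(flags_b["auto_triage_suggestions"], flags_x["auto_triage_suggestions"])  # False True
print(len(audit.entries))  # 2
```

Falling back to the base flags for a jurisdiction with no overrides (`STATE_X` here) is a design choice, not a requirement; a more cautious deployment might fall back to the strictest known configuration instead. Rejecting unknown flag names keeps a typo in an override table from silently leaving a required safeguard switched off.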

From a policy standpoint, closer federal-state coordination or more uniform rules across states would reduce operational friction. In the interim, organizations should prioritize flexible architectures, invest in robust governance, and hire talent versed in both regulation and system design.

Implications for the healthcare industry and recruiting (conclusion)

The interplay between federal encouragement and state-level fragmentation is reshaping the economics, operational design, and workforce needs of clinical AI. Without pragmatic coordination, whether through harmonized rules or industry-driven standards, there is a real risk that compliance complexity will slow clinical adoption, increase costs, and shift competitive advantage toward well-resourced vendors and health systems able to absorb the burden.

Recruiters and hiring managers must anticipate this reality now: build teams with hybrid skill sets, prioritize hires who can operationalize regulatory requirements, and adopt procurement strategies that clearly assign compliance responsibilities in contracts. For job platforms and staffing partners, the market prize will go to services that can rapidly match organizations with candidates who bridge clinical, technical, and policy domains.

Sources

HHS Signals Policy Direction to Accelerate Adoption of AI in Clinical Care – JD Supra

State AI regulations could leave CIOs with unusable systems – InformationWeek