Why this matters now

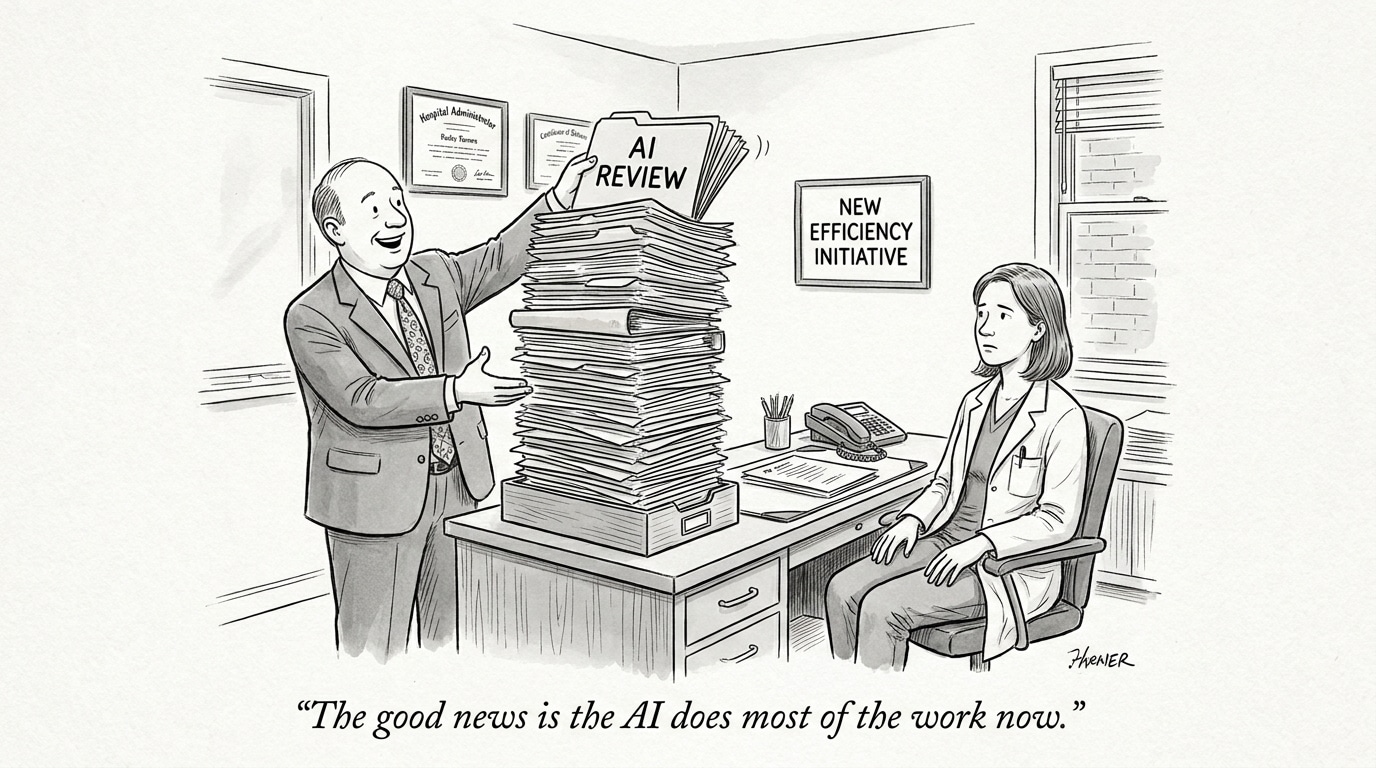

Federal action and congressional debate over artificial intelligence have moved from conceptual framing to concrete policy design, with direct implications for how health systems, vendors, and clinical workforces deploy AI tools and how that use is governed. In early 2026, two competing policy impulses are colliding: one advocating accelerated federal adoption and infrastructure buildout, the other pressing for tighter guardrails around data use, workplace deployment, and institutional accountability.

The resolution of this tension will influence patient privacy protections, clinician liability exposure, procurement standards, and hiring criteria across the healthcare sector. These developments sit squarely within the broader evolution of AI in Physician Employment & Clinical Practice, where federal rulemaking directly shapes clinical authority boundaries, workforce expectations, and the governance architecture underpinning AI-enabled care.

Federal momentum versus fragmented rulemaking

One arm of the current federal picture is administrative momentum: agencies are moving quickly to adopt AI tools, update procurement practices, and embed automation into workflows. That creates incentives for vendors and health systems to accelerate pilots and large-scale rollouts to qualify for government contracts or meet new performance expectations.

At the same time, legislative and regulatory debates remain divided. Congressional hearings show sharply different views on how tightly to regulate workplace AI—ranging from calls for sector-specific oversight to arguments that broad innovation mandates will deliver benefits faster. This split creates a near-term environment of uncertainty: federal agencies will continue piloting and procuring, while statutory regulation may lag or emerge unevenly across jurisdictions.

Call Out

Accelerated federal adoption can standardize procurement requirements but also amplifies the risk of regulatory mismatch: agencies may require tools before statutes clarify liability, creating a compliance gap for health systems and vendors alike.

Data governance, privacy, and cybersecurity pressures

Health data sits at the crossroads of privacy law and AI utility. As policy attention turns to model training data, data sharing, and adversarial risks, two practical pressures emerge. First, demands for stronger de-identification, provenance tracking, and consent mechanisms will reshape how synthetic and real-world data are used to train clinical models. Second, cybersecurity expectations will rise, both for protecting training data and for ensuring model integrity, with regulators and purchasers increasingly requiring security attestations and breach-resistant architectures.

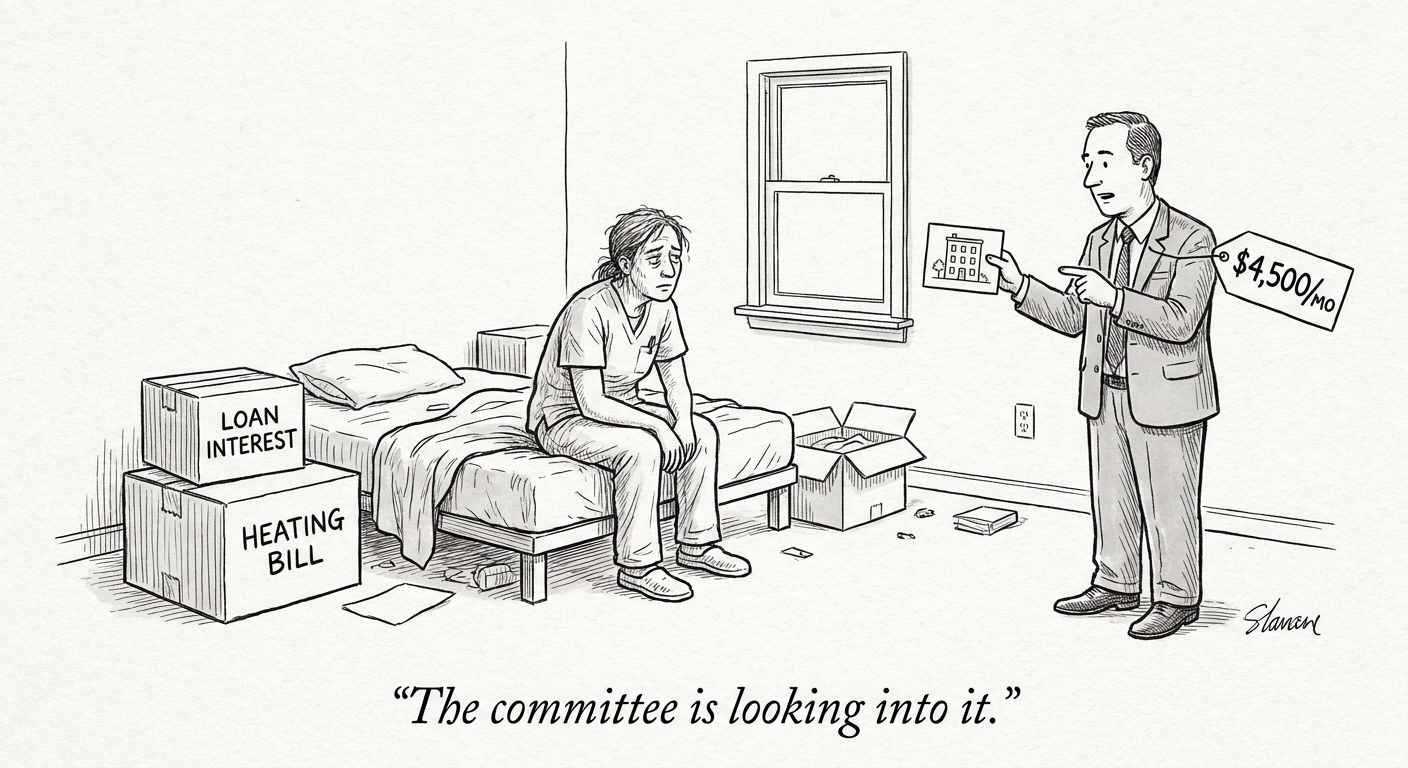

For healthcare organizations this means technical and legal workstreams must align: security teams, data governance committees, clinical informatics, and legal counsel will need shared standards for data minimization, logging, and third-party oversight. Smaller providers face particular strain because implementing these controls is resource-intensive but necessary to participate in vendor ecosystems and to meet payer and federal expectations.

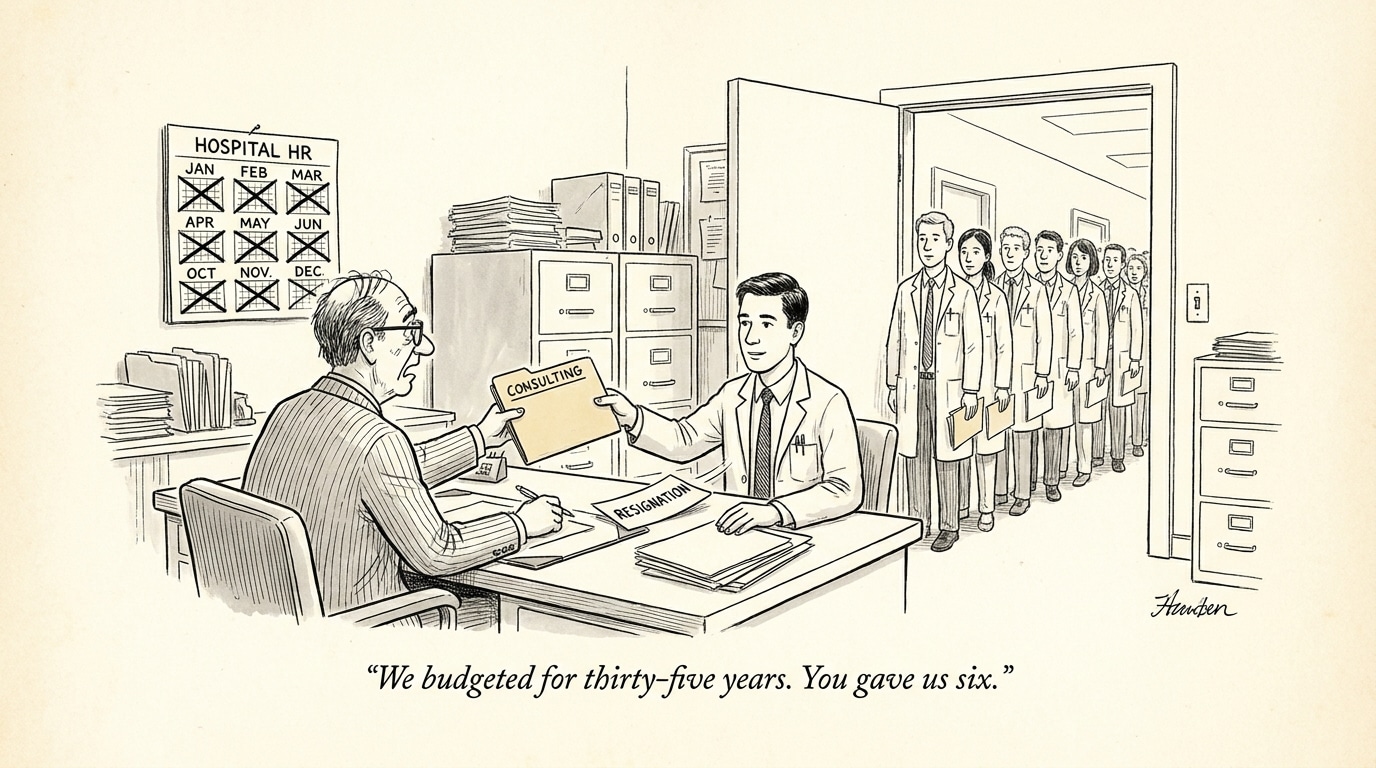

Workforce impacts: accountability, oversight, and new credentialing

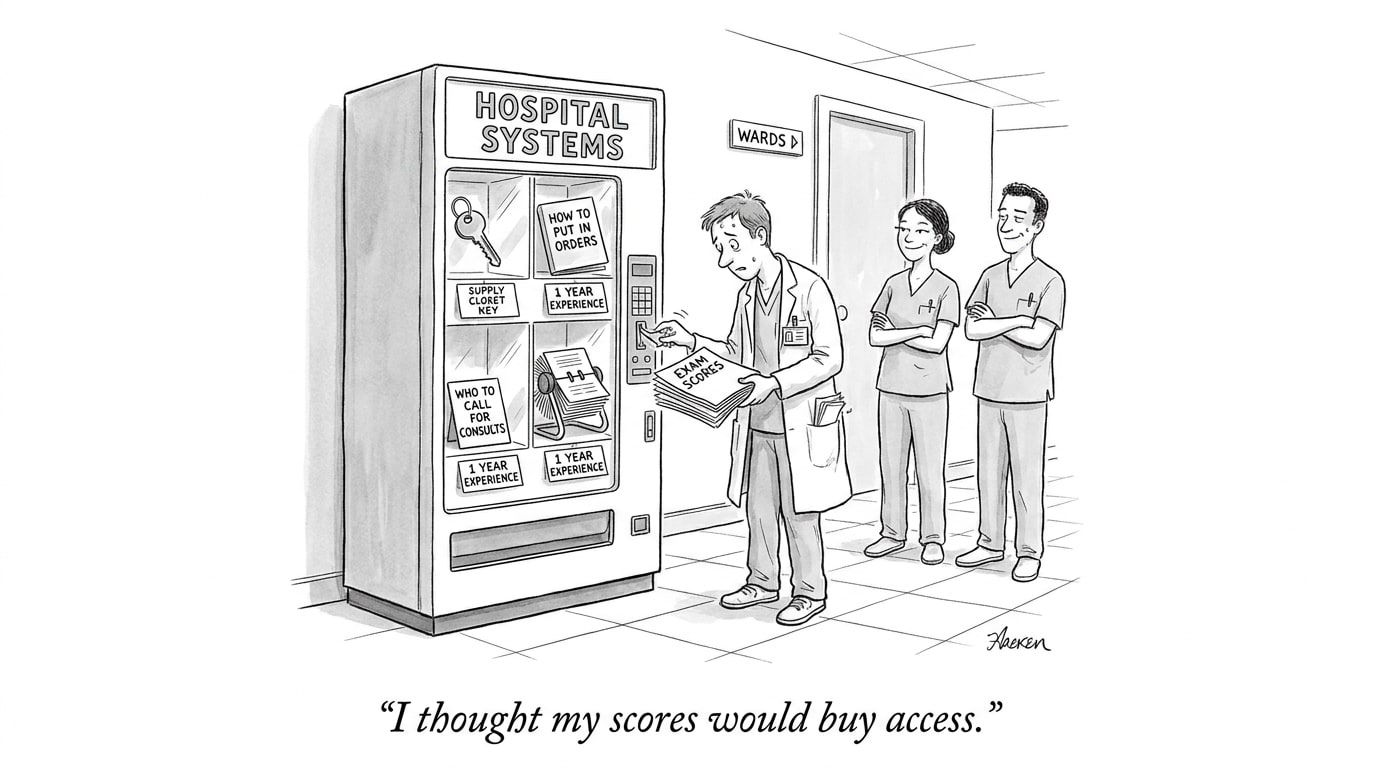

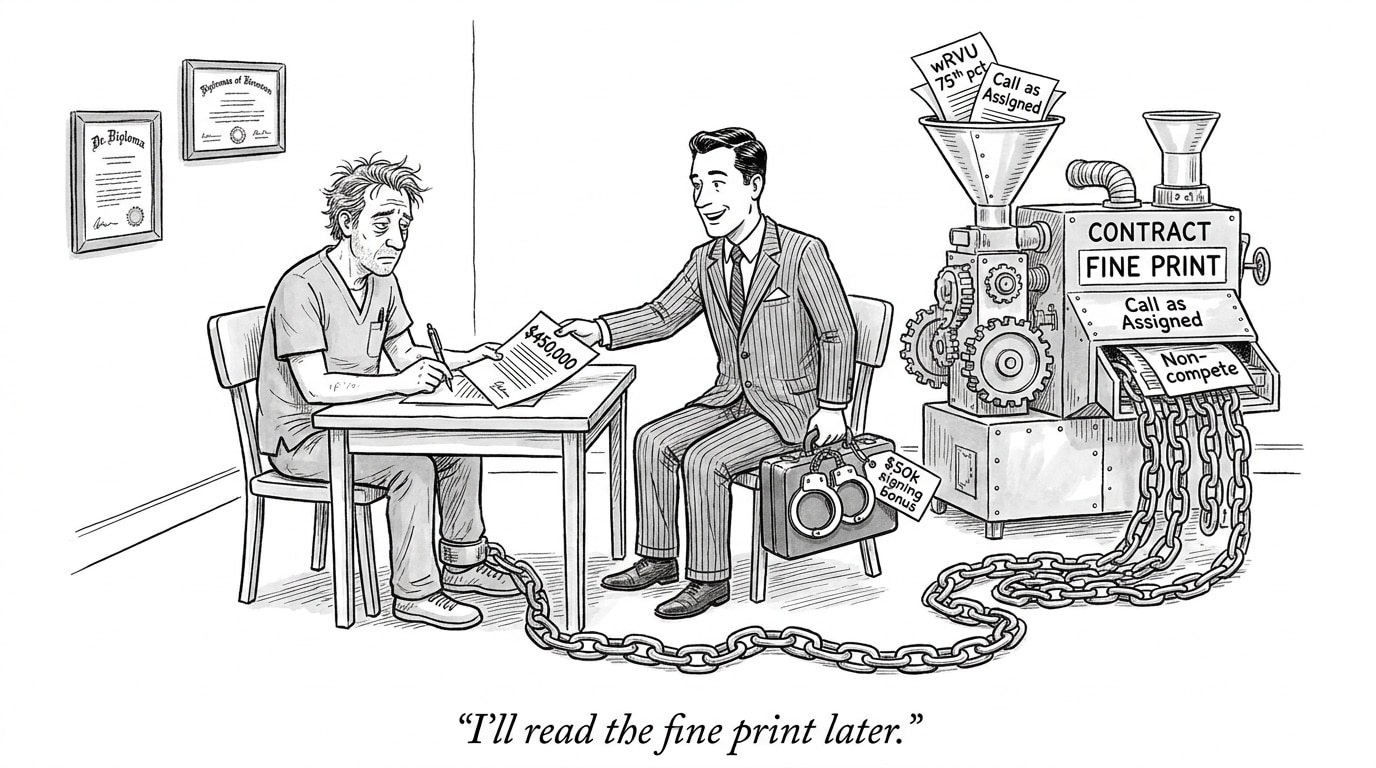

Congressional testimony has focused heavily on workplace impacts—how AI influences hiring, monitoring, and worker protections. In healthcare, those issues take specific shapes: AI-driven scheduling, productivity monitoring, clinical decision support, and automated documentation change how roles are defined and how performance is assessed. Policy choices about transparency, human-in-the-loop requirements, and auditability will influence whether these tools augment clinicians or become sources of new regulatory risk.

Operationally, healthcare employers will need to balance automation gains against professional standards and malpractice exposure. Expect credentialing processes to expand: organizations will begin to assess AI literacy, documented supervision practices, and familiarity with model limitations as part of hiring and privileging. Recruiting platforms and workforce marketplaces that surface these capabilities will gain strategic importance.

Call Out

AI competency will quickly become a hiring baseline in clinical roles: employers will prioritize candidates who can explain model outputs, manage AI-augmented workflows, and document human oversight—shifting job descriptions and recruitment priorities.

Compliance costs, market structure, and adoption trade-offs

Tighter expectations around data governance and transparency will raise compliance costs for vendors and health systems. That dynamic favors larger technology suppliers and health systems that can amortize security and regulatory engineering across many deployments. Conversely, innovators and smaller vendors risk being squeezed out or forced into partnerships with larger firms that can shoulder compliance burdens.

However, federal engagement also offers potential benefits: common procurement standards and government-backed validation processes could create clearer pathways to market and reduce duplication of compliance work across states. The net effect on innovation will depend on whether policymakers prioritize interoperable standards and certification pathways that are accessible to smaller actors.

Implications for healthcare recruiting and operations

For health system leaders and recruiters, the immediate implication is to treat AI policy as a workforce and operational issue, not solely a technology one. Job qualifications will increasingly incorporate AI governance familiarity, and talent sourcing must align with new oversight needs—embedding roles for model stewards, data governance officers, and clinician–AI translators.

Recruiters and job boards should update role definitions and evaluation criteria to reflect these changing requirements. Platforms that connect employers with professionals skilled in both clinical practice and AI governance will capture growing demand. An AI-powered healthcare job board sits at that intersection, positioned to highlight candidates who bring both clinical expertise and documented experience in AI-augmented care delivery.

What leaders should do now

Operational leaders should take three practical steps: (1) inventory AI uses and the data flows that support them; (2) update hiring criteria and training programs to include AI oversight skills; and (3) plan procurement strategies that require vendor transparency, security controls, and audit trails. Policy uncertainty is not an excuse to pause—prepared organizations will convert regulatory compliance into a competitive advantage.
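For teams that want a concrete starting point on step (1), the inventory can begin as a simple structured record per AI use, checked against the procurement expectations in step (3). The sketch below is illustrative only: the `AIUseRecord` fields, the `find_gaps` helper, and the sample entries are hypothetical and do not reflect any regulatory schema or vendor standard.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIUseRecord:
    """One entry in a hypothetical AI-use inventory (field names are assumptions)."""
    name: str
    purpose: str
    data_flows: list = field(default_factory=list)  # e.g. "source -> destination"
    vendor_transparency: bool = False   # vendor documents model limits and training data
    security_controls: bool = False     # security attestation on file
    audit_trail: bool = False           # outputs and overrides are logged
    oversight_owner: Optional[str] = None  # named human responsible for review


def find_gaps(record: AIUseRecord) -> list:
    """Return the governance items this record is still missing."""
    gaps = []
    if not record.data_flows:
        gaps.append("data flows not documented")
    if not record.vendor_transparency:
        gaps.append("vendor transparency missing")
    if not record.security_controls:
        gaps.append("security controls not attested")
    if not record.audit_trail:
        gaps.append("audit trail missing")
    if record.oversight_owner is None:
        gaps.append("no named oversight owner")
    return gaps


# Sample entries, invented for illustration.
inventory = [
    AIUseRecord(
        name="automated documentation",
        purpose="draft clinical notes",
        data_flows=["encounter audio -> vendor service", "draft note -> EHR"],
        vendor_transparency=True,
        security_controls=True,
        audit_trail=True,
        oversight_owner="clinical informatics lead",
    ),
    AIUseRecord(name="shift scheduling", purpose="AI-driven scheduling"),
]

gap_report = {record.name: find_gaps(record) for record in inventory}

for name, gaps in gap_report.items():
    status = "complete" if not gaps else "; ".join(gaps)
    print(f"{name}: {status}")
```

Keeping the record flat makes it easy to maintain in a spreadsheet or a small database first; the same gap list can later feed hiring and training priorities, since a missing oversight owner is a staffing question as much as a technical one.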

Sources

Congress hears split testimony on AI regulation and the workplace – HR Executive

Data Privacy, Cybersecurity, AI developments shaping 2026 – Nixon Peabody

Healthy AI: 2025 Year in Review – JD Supra

Trump set off a surge of AI in the federal government. See what happened. – The Washington Post