Why this theme matters now

Artificial intelligence is moving from research labs and decision-support dashboards into the operating room, part of a broader push to deploy AI across high-acuity healthcare settings. As clinical teams adopt machine learning tools for intraoperative guidance, image interpretation, and robotic assistance, reports of these systems producing incorrect or misleading outputs are becoming more visible. This matters because the operating room is among the highest-stakes clinical environments: an algorithmic mistake can translate quickly into a surgical error with lasting harm to the patient. For health systems, device makers, and clinical leaders, the growing number of reported AI-related surgical mishaps is an urgent signal to reassess readiness, governance, and workforce capabilities.

Types of AI failures unfolding in the OR

Recent episodes follow a consistent pattern: machine learning (ML) systems designed to augment surgeons have produced incorrect, ambiguous, or misleading outputs at critical moments. These failures fall into two broad categories. The first is perceptual error, in which a system misidentifies anatomy or pathology; such mistakes can alter incision sites, resections, or implant placement. The second is generative or interpretive error, in which the AI offers unsupported recommendations or synthesizes visual information that does not match reality.

Both failure modes stem from the same underlying vulnerability: models trained on imperfect, biased, or insufficient data encounter distribution shift in live clinical settings. Intraoperative lighting, unexpected bleeding, anatomical variation, or novel devices can produce inputs the model never saw during development. Under these edge conditions, prototypes that performed well in testing can become unreliable assistants at exactly the moments when there is little time to catch and correct a mistake.
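One way to make distribution shift concrete is to compare a simple property of each incoming input against what the model saw during development. The Python below is a minimal sketch, assuming a single hypothetical scalar feature (for example, mean frame brightness) and an arbitrary z-score threshold of 3; the names `ReferenceStats`, `fit_reference`, and `is_out_of_distribution` are invented for illustration, and a real intraoperative system would need far richer, clinically validated out-of-distribution detection.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class ReferenceStats:
    """Summary of one input feature as seen during training (hypothetical feature)."""
    mean: float
    std: float


def fit_reference(training_values: list[float]) -> ReferenceStats:
    # Summarize the distribution of the feature across the development dataset.
    return ReferenceStats(mean=mean(training_values), std=stdev(training_values))


def is_out_of_distribution(value: float, ref: ReferenceStats,
                           z_threshold: float = 3.0) -> bool:
    # Flag an input whose feature lies more than z_threshold standard
    # deviations from the training mean (threshold is an assumption).
    if ref.std == 0:
        return value != ref.mean
    z = abs(value - ref.mean) / ref.std
    return z > z_threshold


if __name__ == "__main__":
    # Hypothetical brightness values from development footage.
    ref = fit_reference([0.50, 0.52, 0.48, 0.51, 0.49])
    for frame_brightness in (0.51, 0.20):
        flagged = is_out_of_distribution(frame_brightness, ref)
        print(frame_brightness, "FLAG for review" if flagged else "within training range")
```

A frame far darker than anything in the development data would be surfaced to the team rather than silently processed. A single-feature check like this catches only gross shifts such as a lighting change, not subtle anatomical variation.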

Call Out: The OR magnifies model error. Even infrequent AI failures can cause serious patient harm because the consequences of surgical decisions compound in real time. Relying on unvalidated or poorly monitored models in the OR risks turning occasional errors into catastrophic outcomes.

Why conventional validation falls short

Typical evaluation pipelines for surgical AI emphasize retrospective accuracy on curated datasets and selected metrics. Retrospective benchmarks, however, do not capture intraoperative variability, the dynamics of human-machine interaction, or the cascading effect an AI suggestion can have on team behavior. Validation that omits simulation under real-world stressors, such as unanticipated anatomy, instrument occlusion, or disrupted telemetry, overestimates operational safety.

Equally important is the evaluation of failure modes: how the interface communicates uncertainty, whether the system flags low-confidence outputs, and how clinicians can override or ignore guidance without workflow friction. Systems that present outputs as authoritative recommendations, rather than probabilistic support, change the cognitive load and decision-making heuristics of surgical teams, increasing the likelihood of automation bias.
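The difference between presenting an output as an authoritative directive and presenting it as probabilistic support can be sketched in a few lines. The Python below is a hypothetical illustration, not any vendor's interface: it assumes the model reports a calibrated confidence between 0 and 1, uses an arbitrary 0.85 threshold, and the `Prediction` type and `present` function are invented names. Low-confidence outputs are explicitly flagged, and every message leaves the final decision with the surgeon.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str          # hypothetical anatomy label, e.g. "cystic duct"
    confidence: float   # model-reported probability, assumed calibrated, in [0, 1]


def present(pred: Prediction, threshold: float = 0.85) -> str:
    """Render a model output as probabilistic support rather than a bare directive."""
    if not 0.0 <= pred.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    pct = round(pred.confidence * 100)
    if pred.confidence < threshold:
        # Below the (assumed) threshold: flag explicitly and defer to the surgeon.
        return f"LOW CONFIDENCE ({pct}%): possible {pred.label}; verify before acting"
    # Above the threshold: still framed as a suggestion the surgeon can override.
    return f"Suggestion ({pct}%): {pred.label}; surgeon confirms or overrides"


if __name__ == "__main__":
    print(present(Prediction("cystic duct", 0.62)))
    print(present(Prediction("cystic duct", 0.93)))
```

In practice the threshold would have to be chosen and validated against clinical data, and the displayed percentages are only meaningful if the model's confidence scores are well calibrated.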

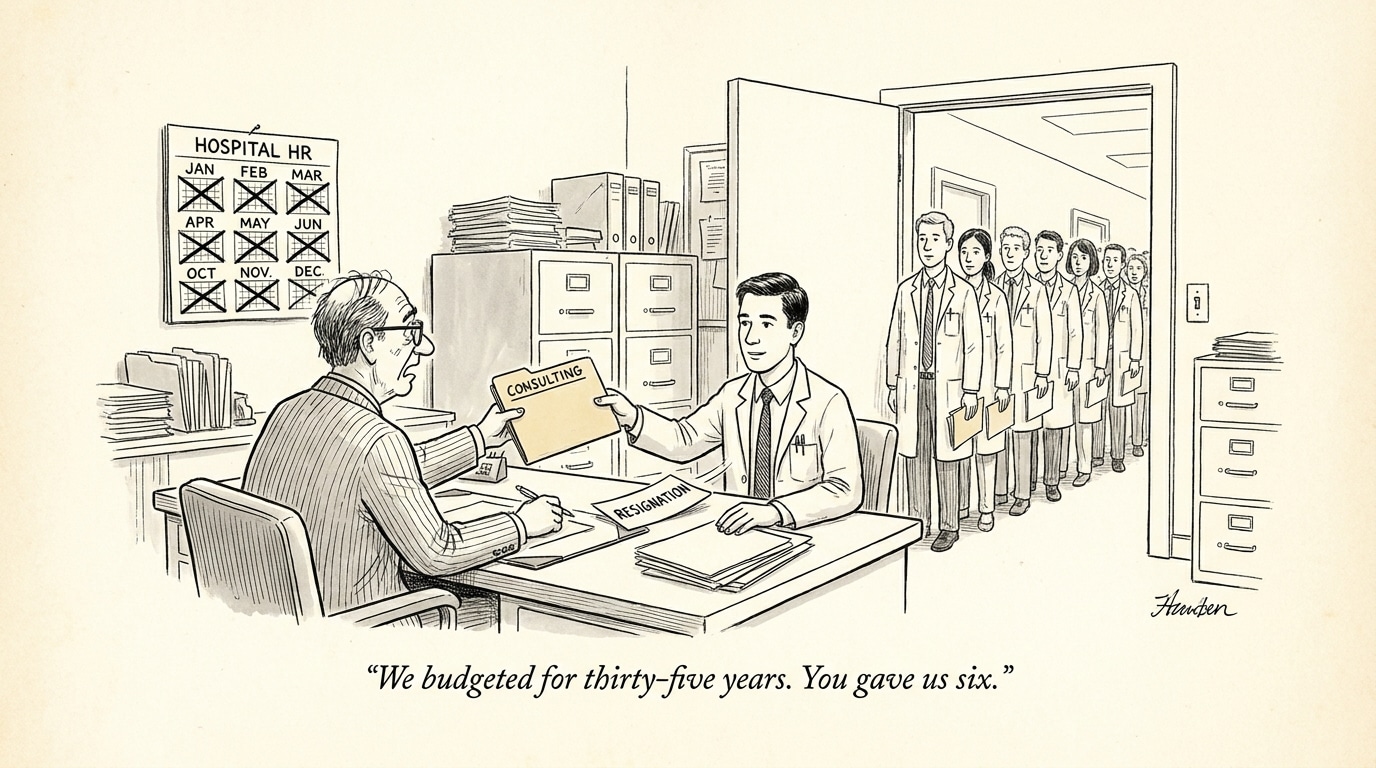

Governance gaps: oversight, monitoring, and accountability

Current regulatory and institutional frameworks are uneven when it comes to intraoperative AI. Software that qualifies as a medical device often undergoes premarket review, but requirements for post-deployment monitoring and for continuously learning systems remain inconsistent. Clinical governance, moreover, rarely extends to collecting real-time telemetry or independently auditing AI behavior in live cases.

Effective governance requires three elements: robust pre-deployment clinical validation under realistic conditions, mandated post-market surveillance with incident reporting tied to device behavior, and standardized protocols for human override and escalation when the AI behaves unexpectedly. Without these, institutions risk introducing tools that operate beyond their claimed performance envelope.

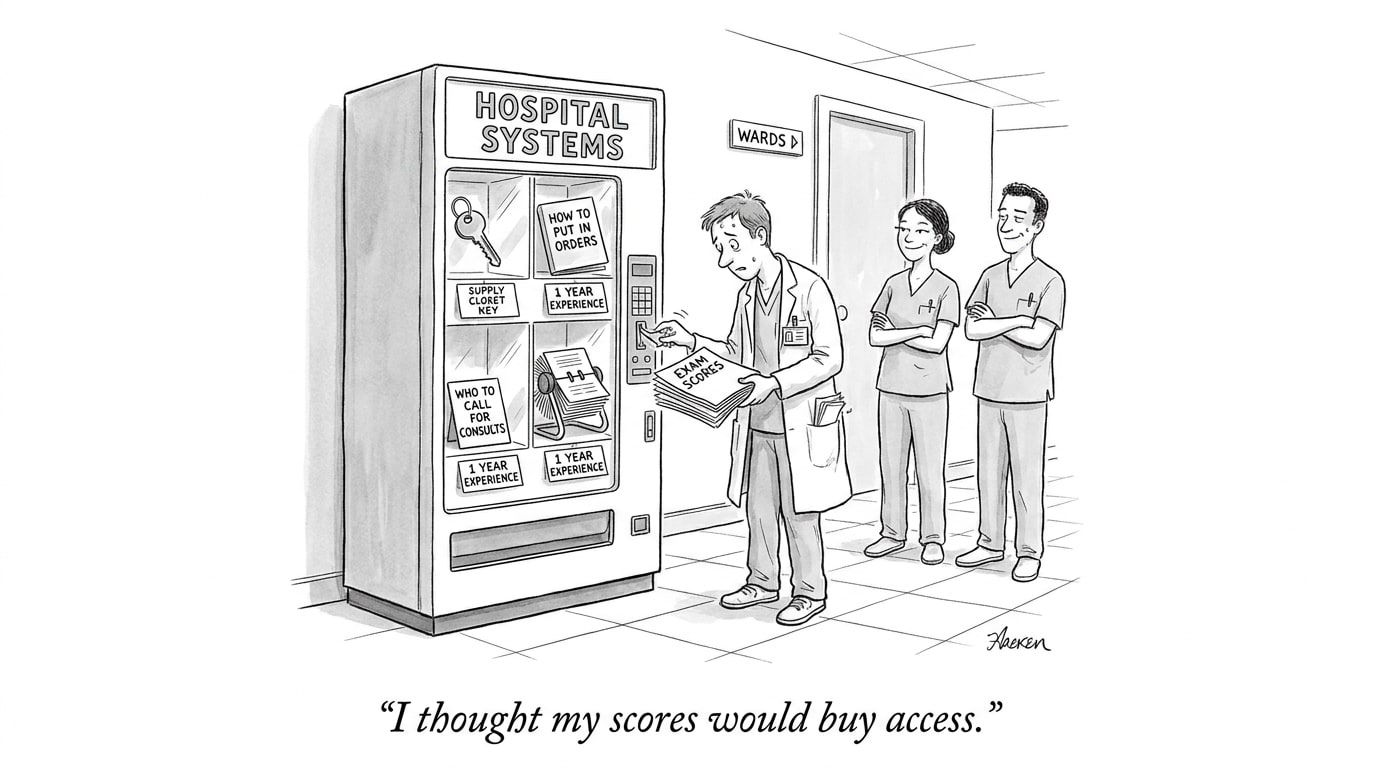

Workforce and operational implications

Surgeons and OR staff will need new skills to integrate AI safely into practice. Technical literacy about model limitations, familiarity with how uncertainty is represented, and training scenarios that simulate AI failure are all necessary to prevent overreliance. Hospitals should consider new or adapted roles, such as AI safety officers, intraoperative AI supervisors, and engineers embedded in perioperative services, to bridge the gap between developers and clinicians.

Call Out: Hiring for AI-ready surgery isn’t optional. Health systems should recruit clinicians with AI literacy and support staff who can manage, monitor, and interpret intraoperative models; this reduces risk and preserves trust in high-stakes teams.

Implications for device developers and vendors

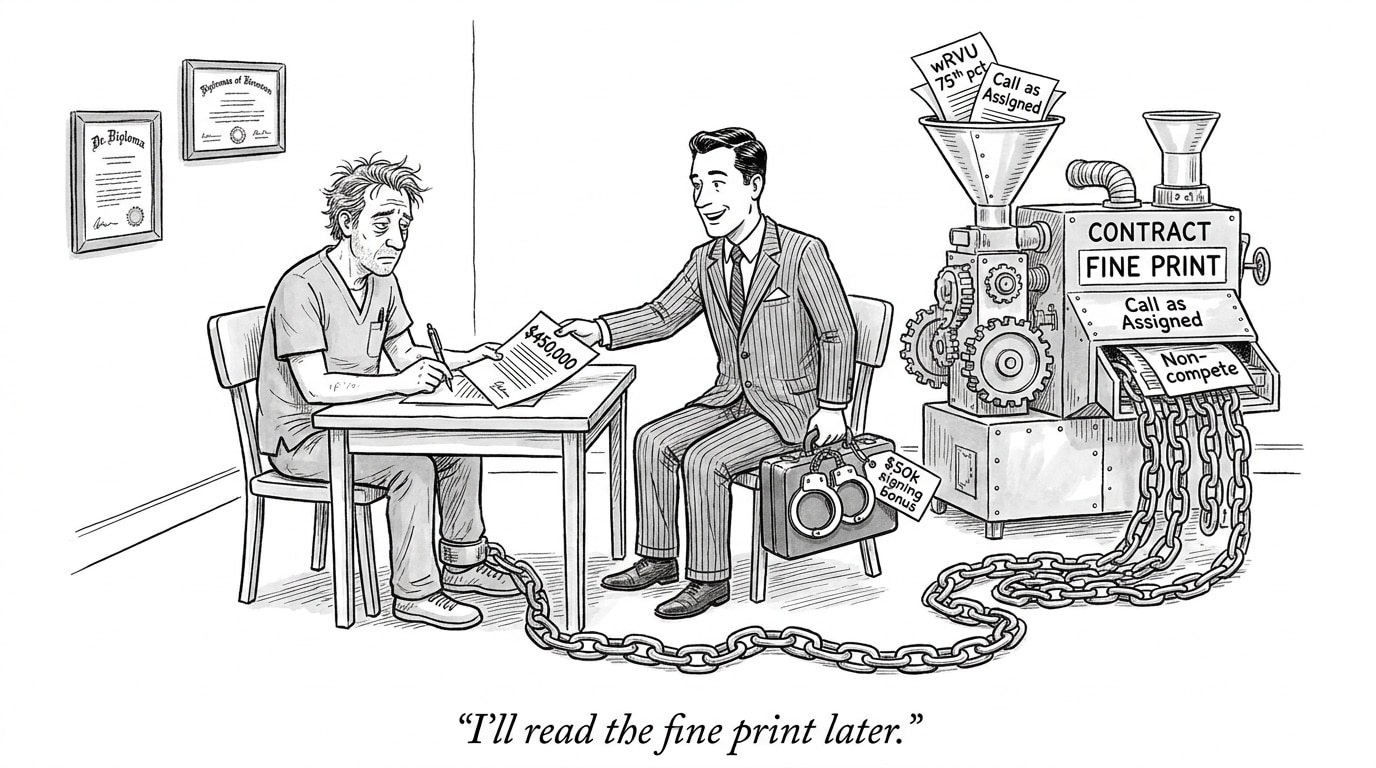

Manufacturers must prioritize robustness: stress-testing models across the breadth of intraoperative conditions, documenting failure modes, and engineering clear user interfaces that surface confidence and provenance. Where models adapt post-deployment, change-control processes and continuous validation loops are essential. Transparency around training data, intended use cases, and limitations must be part of labeling and clinician education.

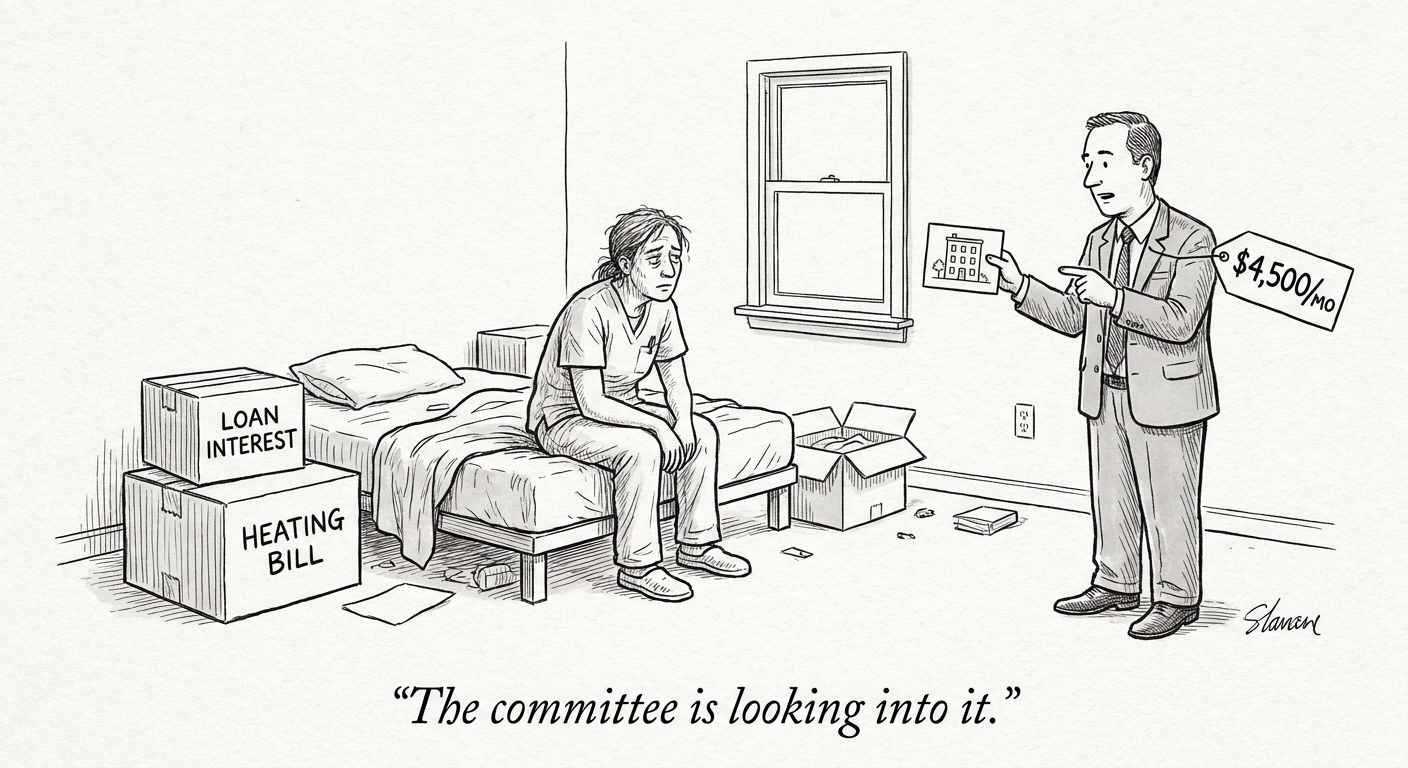

From a procurement perspective, hospitals should require demonstrable evidence of robustness, including prospective clinical evaluation, realistic simulation results, and independent safety audits. Contract language should address liability, update cadence, and incident response expectations for AI-enabled devices.

What this means for recruiting and workforce strategy

As an AI-powered healthcare job board, PhysEmp sees a practical path forward: recruitment strategies must prioritize cross-disciplinary capabilities. Candidates should demonstrate not only clinical excellence but also an understanding of algorithmic risk, data provenance, and human factors. Roles that blend clinical operations with data science, such as clinical informaticians, surgical data stewards, and intraoperative AI analysts, will be central to safer adoption.

Employers can reduce operational risk by investing in simulation-based hiring and ongoing education. Integrating AI competency assessments into credentialing and privileging processes will help ensure clinicians can safely calibrate reliance on machine outputs.

Conclusion: readiness requires humility and systems design

The increasing visibility of AI-related surgical errors is a sobering reminder that technological capability does not equal clinical readiness. Models that perform well in controlled settings can still fail unpredictably in the OR. Closing that gap demands systems thinking: rigorous, context-aware validation; transparency and monitoring; redesigned workflows that respect human oversight; and a workforce equipped to detect and respond to AI failure. For recruiting teams and clinical leaders, the immediate work is practical: hire for AI literacy, demand evidence of robustness from vendors, and build the governance infrastructure that can catch problems before they reach patients.

Sources

The Last ‘Person’ You Want Handling Your Surgery Is a Hallucinating Robot – Gizmodo