Why this theme matters now

Large language models (LLMs) are moving out of research demos and into clinical workflows as part of a broader deployment of AI in healthcare. Recent work shows that off‑the‑shelf chat systems can improve access to basic diagnostic reasoning in low‑resource contexts, while specialty‑tuned models are beginning to support complex decision making in fields such as cardiology and otolaryngology. That convergence of affordability and growing clinical sophistication makes it timely to assess what these tools can actually deliver, where they may fall short, and how health systems should prepare.

Reaching underserved settings: low‑cost clinical chatbots

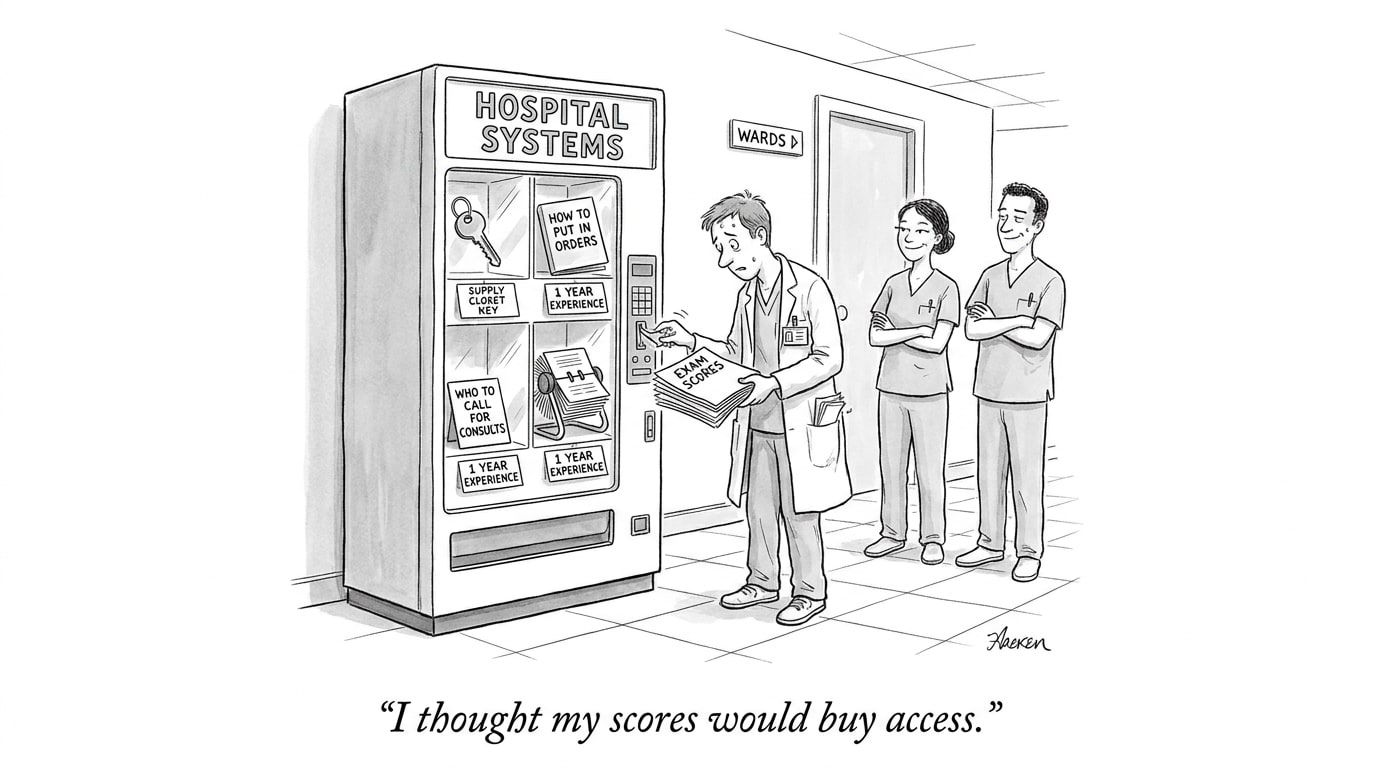

Affordable conversational agents are proving useful where clinician access is limited. When deployed as symptom‑checkers and triage aids, these systems can structure patient histories, surface likely diagnoses, and recommend next steps — potentially shortening the time to appropriate care. Their low marginal cost and lightweight infrastructure requirements mean they can be scaled to remote clinics or community health posts where specialist expertise is absent.

Yet performance is uneven. Accuracy depends on the model’s training data, how well it handles local disease prevalence and language variants, and whether it can safely escalate urgent cases. Implementation considerations — network connectivity, device availability, local regulation, and patient privacy — shape whether low‑cost chatbots actually improve outcomes rather than just generating more uncertain recommendations.

Call Out — Access vs. Accuracy: Low‑cost chatbots lower the barrier to clinical triage, but their impact depends on contextual validation: models must be calibrated against local disease patterns, language, and care pathways to avoid false reassurance or unnecessary referrals.
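Why does local calibration matter so much? Part of the answer is base‑rate arithmetic: the same triage flag means different things in populations with different disease prevalence. The minimal sketch below applies Bayes' theorem to a hypothetical triage tool. The function names and the 90% sensitivity and specificity figures are illustrative assumptions, not values from any of the cited studies or from a specific chatbot.

```python
# Illustrative sketch: how disease prevalence changes what a triage flag means.
# All figures are hypothetical assumptions, not results from the cited studies.

def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Probability that a flagged patient truly has the condition (Bayes' theorem)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def negative_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Probability that an unflagged patient truly does not have the condition."""
    true_neg = specificity * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    return true_neg / (true_neg + false_neg)


if __name__ == "__main__":
    # Same hypothetical tool (90% sensitivity, 90% specificity) in two settings.
    for prevalence in (0.01, 0.20):
        ppv = positive_predictive_value(0.90, 0.90, prevalence)
        npv = negative_predictive_value(0.90, 0.90, prevalence)
        print(f"prevalence={prevalence:.2f}  PPV={ppv:.2f}  NPV={npv:.3f}")
```

With these assumed figures, only about 8% of positive flags are correct at 1% prevalence (most referrals would be unnecessary), versus about 69% at 20% prevalence, where the negative predictive value also slips from roughly 99.9% to roughly 97.3% (a "no concern" result becomes less reassuring). Real validation would use locally measured performance rather than these placeholder numbers.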

Specialty‑grade support: cardiology‑focused LLMs

Where medicine is complex and data‑rich, specialty‑focused LLMs are emerging as assistive copilots. Tailored models trained on cardiology literature, guidelines, and de‑identified records can synthesize diverse clinical inputs, such as problem lists, laboratory results, and imaging summaries, to propose diagnostic differentials, risk stratification, and management options. Early evaluations show promise in structuring complex care plans and suggesting guideline‑concordant actions, particularly when paired with clinician oversight.

However, specialty models are not substitutes for domain expertise. Their strengths include rapid synthesis of disparate data and consistent application of guideline language; their limitations include sensitivity to input errors, potential to over‑apply recommendations without accounting for nuanced patient preferences, and the need for prospective evaluation to demonstrate outcome improvements rather than just concordance with expert opinion.

Otolaryngology: opportunities and current limits

Applications in otolaryngology illustrate both pragmatic benefits and discipline‑specific constraints. LLMs can automate documentation, generate patient education materials, and assist with differential diagnosis for common ENT presentations. They can also help standardize clinician communication and free time for procedural care.

But surgical specialties involve fine‑grained anatomical judgment, procedural planning, and intraoperative decision making that current language models cannot perform. For ENT, model utility is greatest in preoperative counseling, longitudinal symptom tracking, and decision support for routine management. Tasks that require imaging interpretation, tactile assessment, or real‑time procedural adjustments remain clinician responsibilities.

Call Out — Specialty Fit Matters: Language‑based models best augment tasks that are text‑centric — documentation, guideline synthesis, patient communication — while procedural decision making and image‑dependent judgments still demand human expertise and validated algorithms tailored to those modalities.

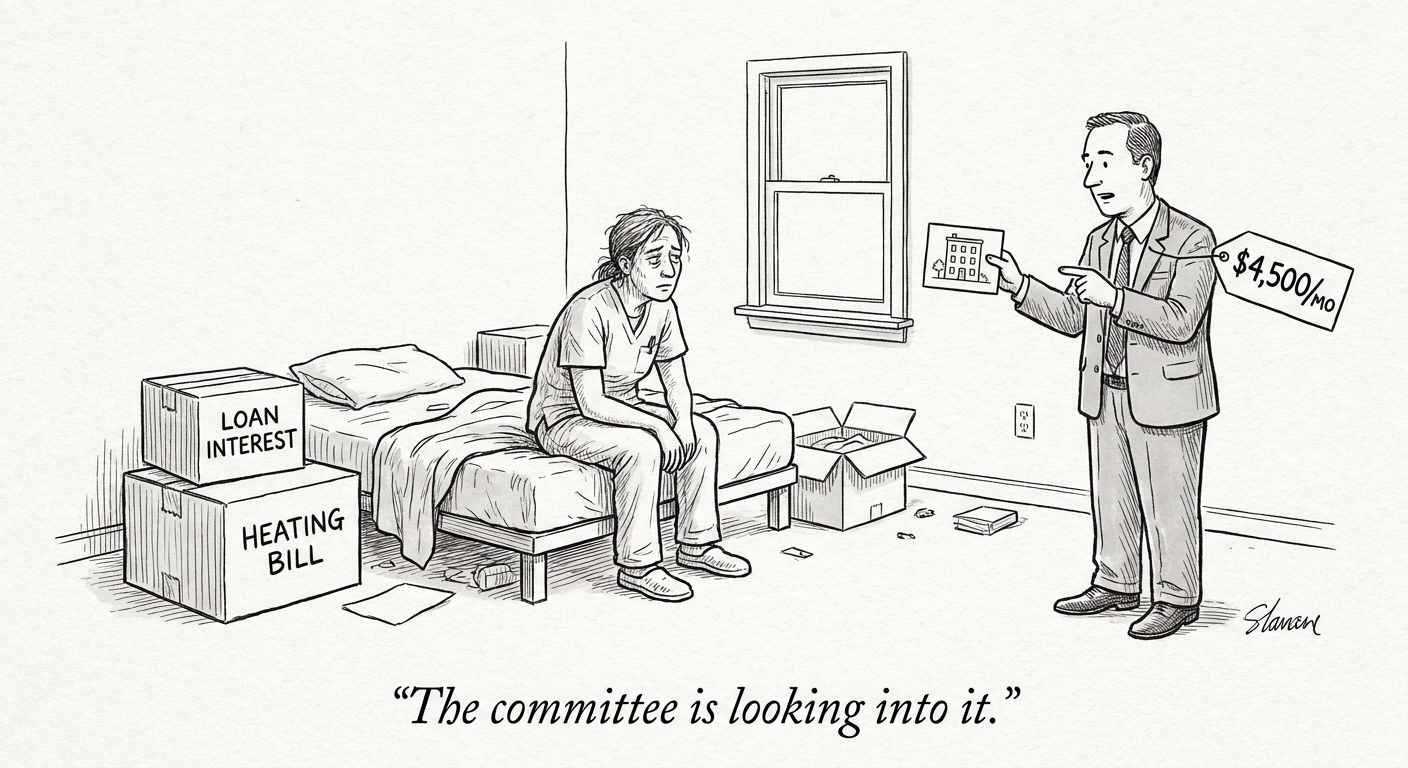

Validation, safety, and integration challenges

Across settings and specialties, three convergent challenges determine whether LLMs are clinically useful: validation, safety, and workflow integration. Validation requires prospective trials and context‑specific benchmarks rather than retrospective concordance alone. Safety means managing hallucinations and biases in training data, and reliably recognizing scenarios that require escalation. Integration encompasses interoperability with electronic health records, human‑in‑the‑loop designs, user training, and regulatory compliance.

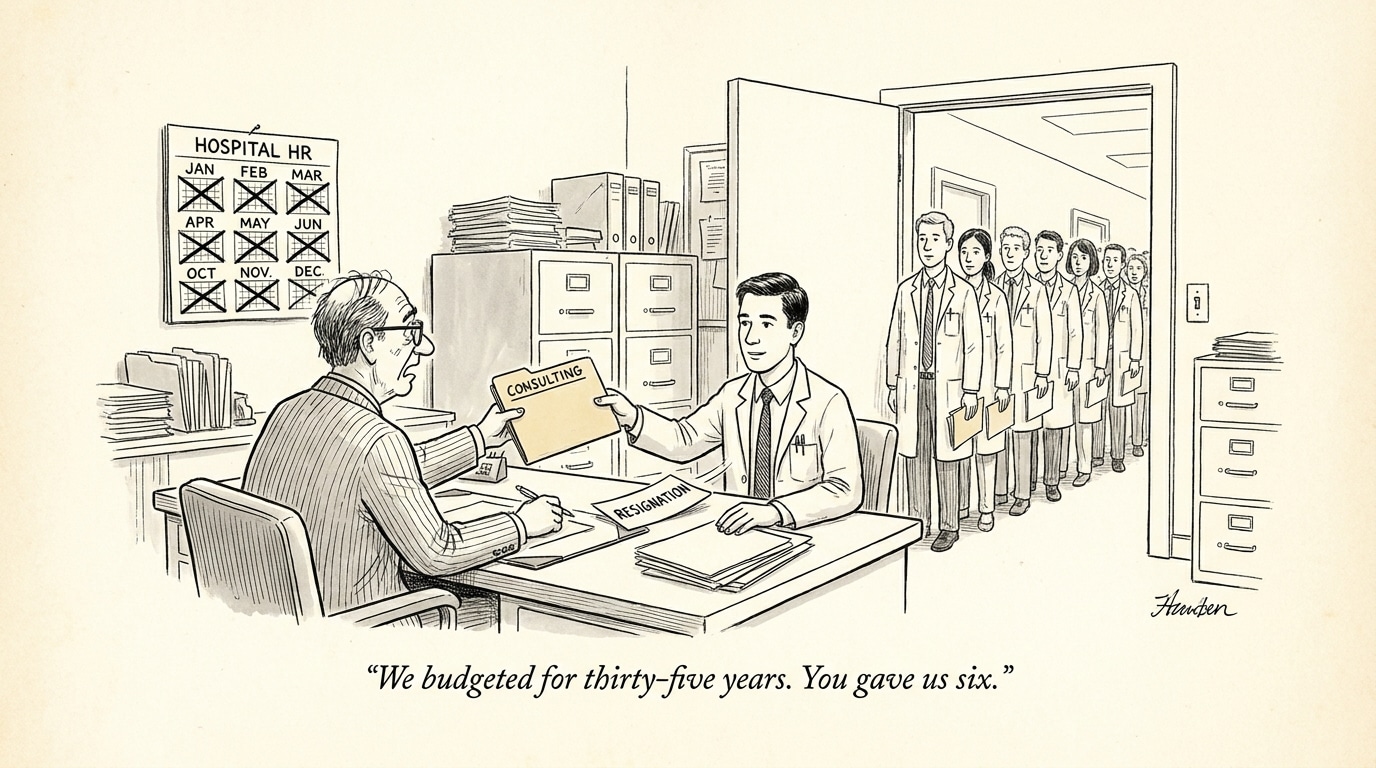

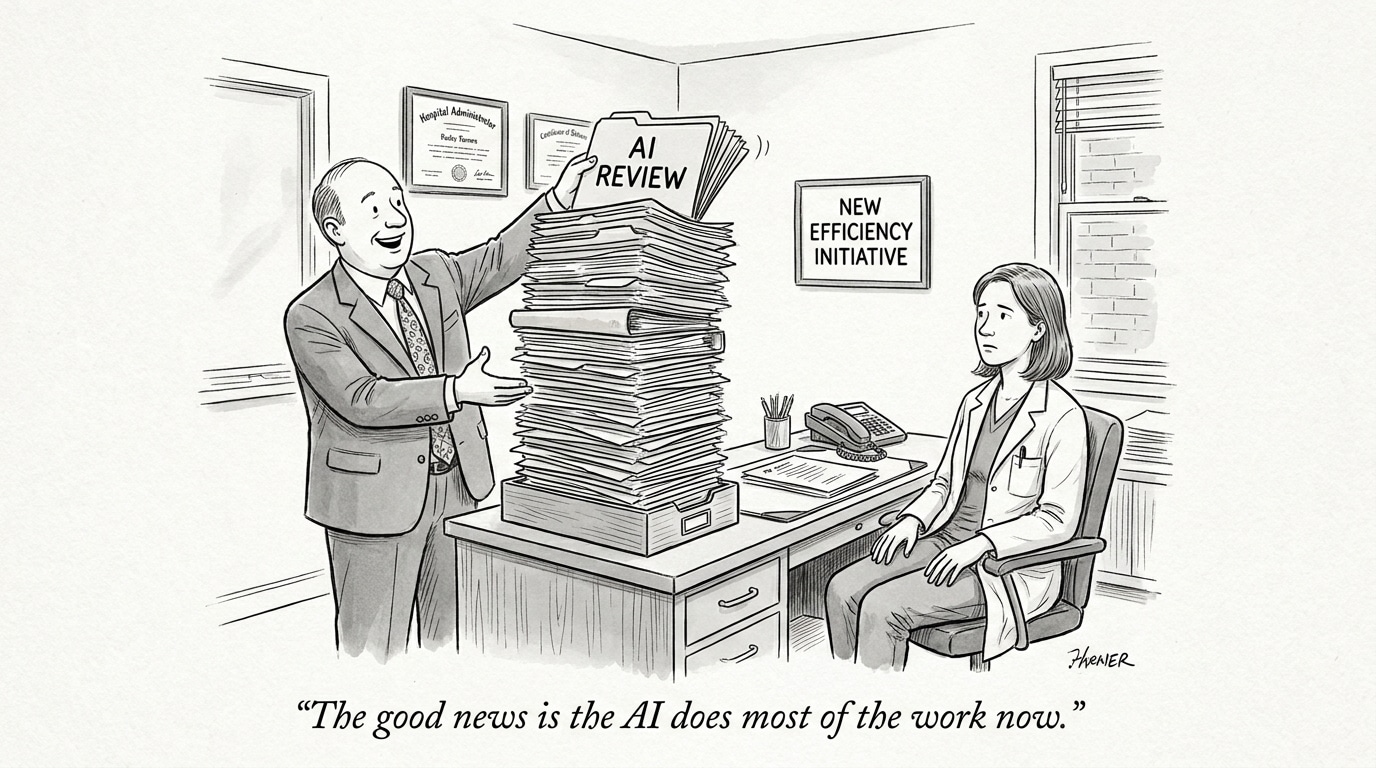

Operationalizing these models also raises workforce and governance questions: who is accountable when an AI‑informed recommendation is followed, how model updates and drift are managed, and which metrics are tracked after deployment. Answering these is as much organizational design and policy work as it is technical model refinement.

Implications for healthcare systems and recruiting

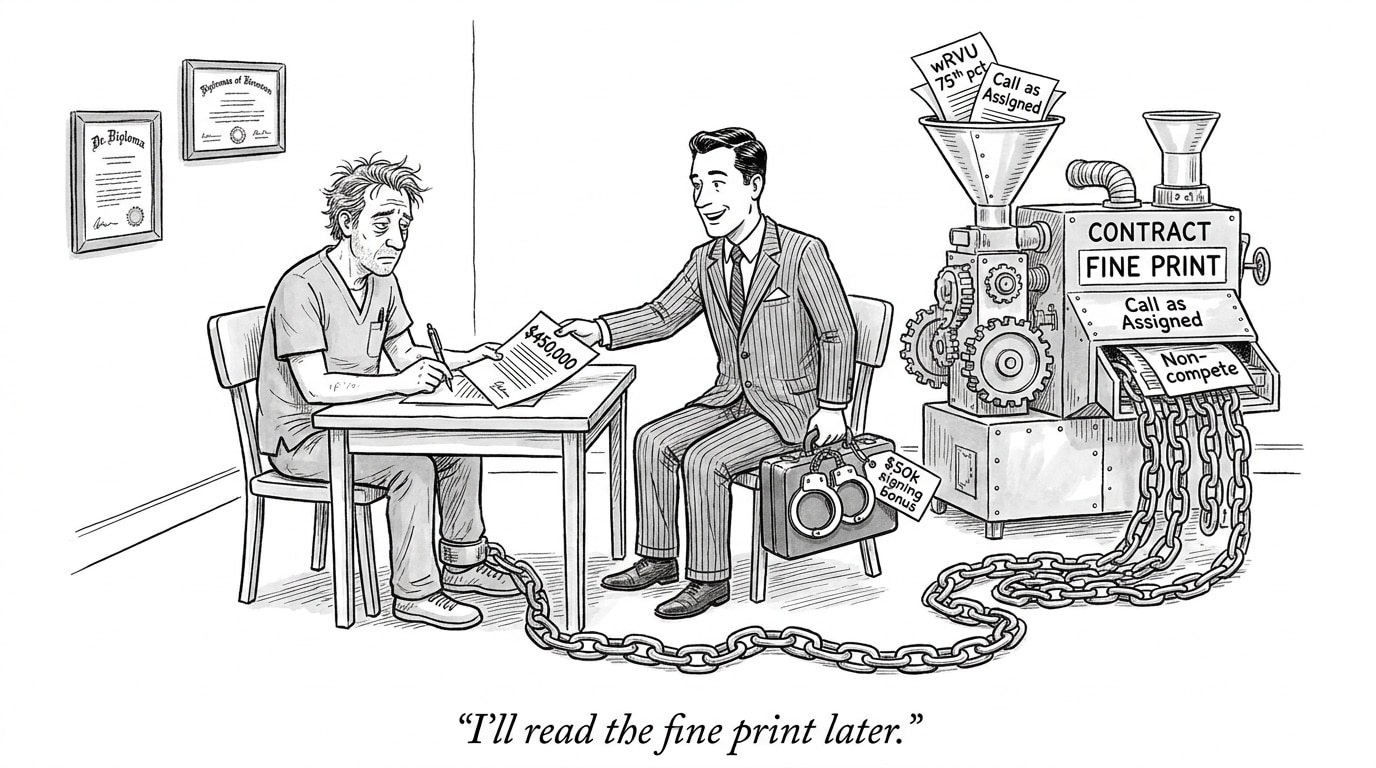

For health systems, the near term holds three action items. First, prioritize pilots that answer outcome‑oriented questions (does the tool reduce missed diagnoses, unnecessary referrals, or clinician time spent on low‑value tasks?). Second, establish governance that pairs technical oversight with clinical leadership to manage safety and compliance. Third, invest in clinician training so staff can use, interpret, and challenge model outputs effectively.

Recruiting priorities will shift. Employers will seek clinicians fluent in AI interpretation, informaticists who can bridge model behavior and clinical meaning, and engineering talent skilled in clinical data pipelines. Job platforms and hiring teams should highlight AI literacy and experience integrating models into care delivery.

Conclusion

LLMs are expanding what’s possible in both basic diagnostic access and specialty support. Their value is contextual: inexpensive chatbots can broaden access when carefully validated for local use; specialty models can accelerate complex care decisions when integrated with clinician oversight and robust evaluation. Success depends less on hype and more on rigorous testing, clear governance, and workforce development to ensure these tools augment care rather than introduce new risks.

Sources

Cheap AI chatbots transform medical diagnoses in places with limited care – Nature

A large language model for complex cardiology care – Nature Medicine

Large Language Models and Otolaryngology: A Review – JAMA Otolaryngology–Head & Neck Surgery