Why this matters now

2026 is emerging as a defining year for clinical artificial intelligence and digital health platforms. Regulators are refining definitions, tightening oversight thresholds, and clarifying the distinction between low-risk wellness applications and regulated clinical decision support systems. Expectations for validation rigor, labeling transparency, and post-market surveillance are becoming more explicit, particularly for prognostic and decision-influencing AI models.

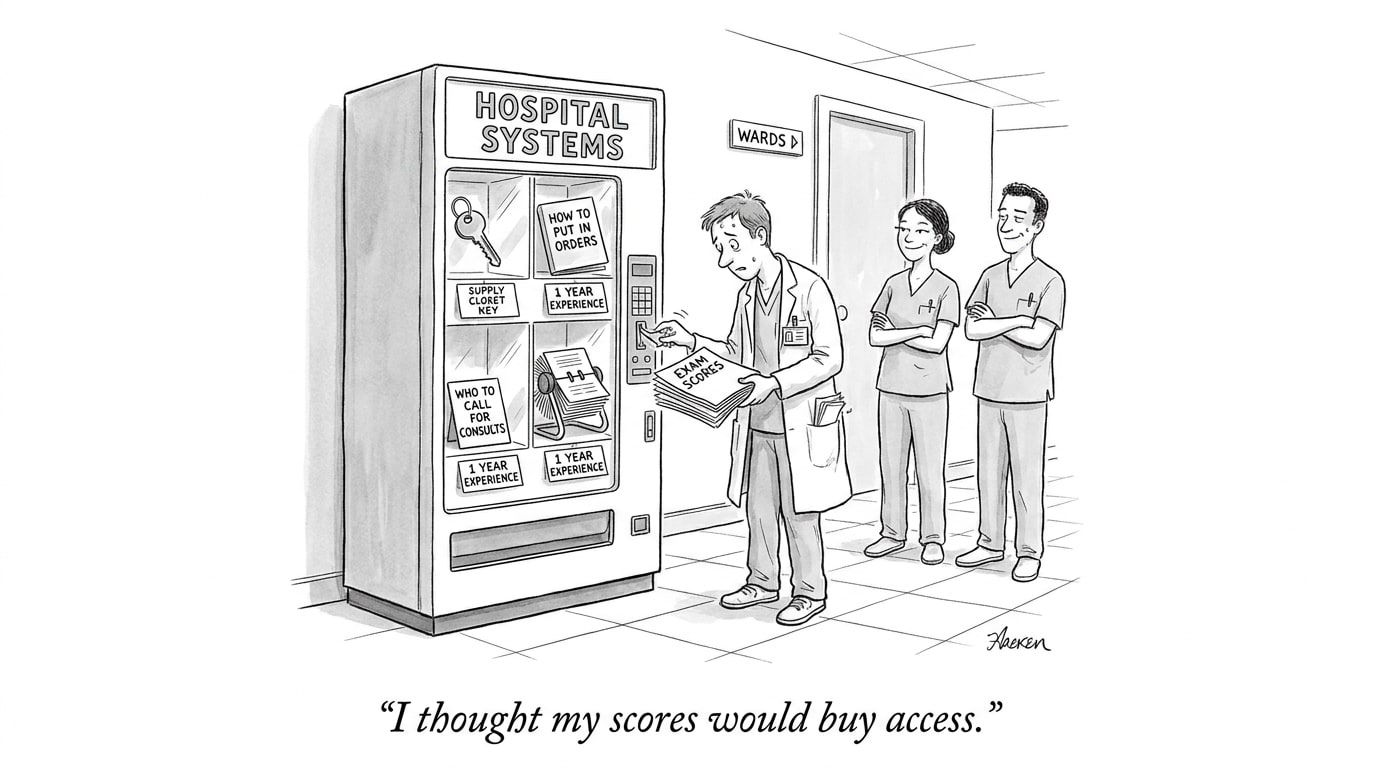

Healthcare organizations that deployed machine learning tools under comparatively permissive early-2020s guidance now face heightened authorization requirements and expanded compliance obligations. The operational implication is clear: the era of informal pilots and loosely governed experimentation is closing. Institutions must now integrate regulatory strategy directly into vendor selection, procurement workflows, credentialing standards, and frontline clinical operations.

These developments sit squarely within the broader transformation of AI in Physician Employment & Clinical Practice, where evolving regulatory expectations reshape liability exposure, workforce roles, and the governance infrastructure required for scalable AI deployment.

Regulatory axes shaping AI in healthcare

1) Risk-based classification is the dominant principle

Regulators are consolidating around a risk-tiered approach: the greater the potential for patient harm from erroneous outputs, the higher the regulatory scrutiny. That trend affects everything from premarket evidence requirements to allowable update mechanisms. For planners, the key is early identification of model use cases that could alter clinical decisions or workflows and therefore attract higher regulatory burdens.

2) The wellness vs. clinical boundary is sharper

Authorities are refining what counts as mere wellness support versus software that meets the legal definition of a medical device. This has direct procurement implications: products positioned as lifestyle or informational may avoid device pathways, but small changes in labeling, claims, or integration with electronic health records can push a product across the line into regulated space. Legal teams and clinicians must control public-facing claims and how tools are embedded in care pathways.

3) Authorization and evidence expectations for prognostic AI

Prognostic algorithms (those predicting outcomes or trajectories) are receiving specific scrutiny. Regulators expect robust external validation, transparent performance metrics stratified by key subgroups, and prospective demonstrations of clinical utility where possible. For vendors, well-documented validation plans and reproducible performance reports are no longer optional; for buyers, due diligence must include scrutiny of validation cohorts and post-market monitoring commitments.
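
To make "performance metrics stratified by key subgroups" concrete, here is a minimal sketch of what such a report might compute. It assumes binary outcomes and binary predictions; the function name `stratified_metrics` and the record layout are illustrative assumptions, not a regulatory format or any vendor's API.

```python
from collections import defaultdict


def stratified_metrics(records):
    """Compute sensitivity and specificity per subgroup.

    `records` is an iterable of (subgroup, y_true, y_pred) tuples with
    binary labels (0 or 1). The layout is a hypothetical example.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for subgroup, y_true, y_pred in records:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "fp" if y_pred == 1 else "tn"
        counts[subgroup][key] += 1

    report = {}
    for subgroup, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        report[subgroup] = {
            "n": positives + negatives,
            # None signals that the metric is undefined for this subgroup.
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return report


if __name__ == "__main__":
    sample = [
        ("age<65", 1, 1), ("age<65", 1, 0), ("age<65", 0, 0),
        ("age>=65", 1, 1), ("age>=65", 0, 1),
    ]
    for group, metrics in stratified_metrics(sample).items():
        print(group, metrics)
```

A buyer reviewing a vendor's validation report could ask for this kind of per-subgroup breakdown, along with the subgroup sample sizes, since a metric computed on a handful of patients says little about real-world performance.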

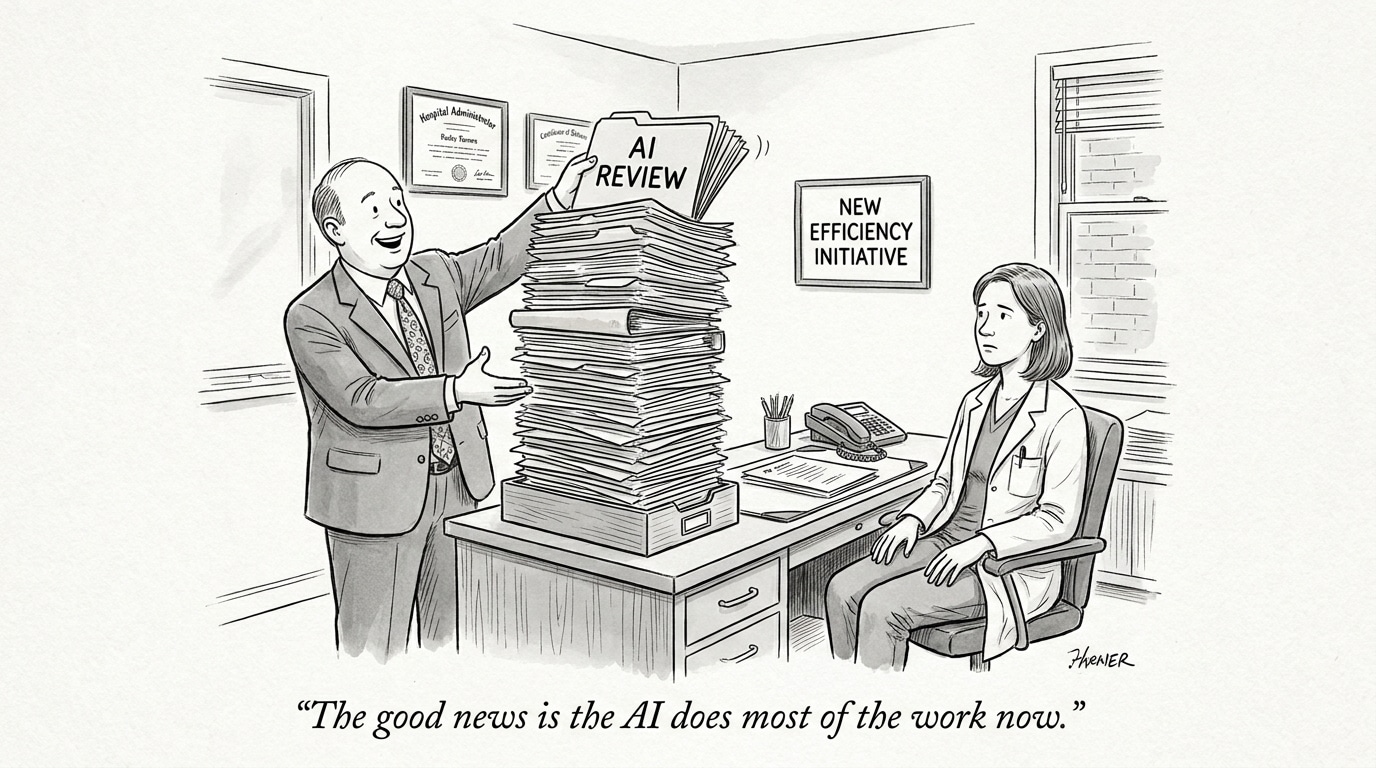

Operationalizing compliance: what leaders must do

Moving from awareness to action requires concrete governance and technical controls. Start with a comprehensive inventory that maps algorithms to clinical use, data inputs, and decision consequences. Establish a cross-functional AI governance committee with representation from clinical leadership, regulatory affairs, legal, data science, and cybersecurity. Require vendors to provide reproducible validation artifacts and clear update protocols for models that change over time.
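
One way to picture the inventory described above is as a simple structured record per algorithm. The sketch below is an assumption-laden illustration: the class names, the three-level `DecisionImpact` scale, and the field names are all hypothetical and do not correspond to any regulator's classification scheme.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class DecisionImpact(Enum):
    """Hypothetical scale for how strongly a tool influences care decisions."""
    INFORMATIONAL = 1
    SUPPORTS_DECISION = 2
    DRIVES_DECISION = 3


@dataclass
class AlgorithmRecord:
    name: str
    vendor: str
    clinical_use: str
    data_inputs: List[str]
    decision_impact: DecisionImpact
    has_validation_artifacts: bool = False
    has_update_protocol: bool = False


def open_items(record: AlgorithmRecord) -> List[str]:
    """List the vendor deliverables still missing for one algorithm."""
    missing = []
    if not record.has_validation_artifacts:
        missing.append("validation artifacts")
    if not record.has_update_protocol:
        missing.append("update protocol")
    return missing


def review_queue(inventory: List[AlgorithmRecord]) -> List[AlgorithmRecord]:
    """Order the inventory so higher-impact tools are reviewed first."""
    return sorted(inventory, key=lambda r: r.decision_impact.value, reverse=True)


if __name__ == "__main__":
    inventory = [
        AlgorithmRecord("sleep-tips", "ExampleVendor", "wellness messaging",
                        ["self-reported sleep"], DecisionImpact.INFORMATIONAL,
                        True, True),
        AlgorithmRecord("sepsis-risk", "ExampleVendor", "early sepsis warning",
                        ["vitals", "labs"], DecisionImpact.DRIVES_DECISION,
                        has_validation_artifacts=True),
    ]
    for rec in review_queue(inventory):
        print(rec.name, rec.decision_impact.name, open_items(rec))
```

Even a lightweight structure like this gives the governance committee a shared view of which tools affect clinical decisions and which vendor commitments are still outstanding.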

Call Out: Regulatory readiness starts with classification. Misclassifying an AI tool as low-risk because it appears “informational” can create costly retrofits; treat classification as a strategic control, not an administrative checkbox.

Key technical controls and documentation

Regulators are increasingly interested in lifecycle evidence: premarket design controls, bias and fairness testing, performance stratification, and post-market surveillance plans. Implement continuous monitoring that captures real-world performance drift and adverse events. Strengthen data governance to ensure provenance, consent alignment, and representative sampling. Maintain a clear change-management pathway for software updates, including trigger-based notification to regulators when changes affect safety or performance.
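
As a rough illustration of continuous monitoring for performance drift, the sketch below tracks accuracy over a rolling window of recent cases and flags when it falls more than a set tolerance below the validated baseline. The class name `DriftMonitor`, the choice of accuracy as the metric, and every threshold value are assumptions for illustration only; real programs would choose metrics and triggers with clinical and regulatory input.

```python
from collections import deque


class DriftMonitor:
    """Rolling-window accuracy check against a validated baseline.

    All defaults are hypothetical placeholders, not regulatory thresholds.
    """

    def __init__(self, baseline_accuracy, tolerance=0.05, window=100, min_samples=30):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        self.min_samples = min_samples
        # deque with maxlen discards the oldest outcome automatically.
        self._outcomes = deque(maxlen=window)

    def record(self, y_true, y_pred):
        """Store whether one prediction matched the confirmed outcome."""
        self._outcomes.append(1 if y_true == y_pred else 0)

    def current_accuracy(self):
        """Return rolling accuracy, or None until enough cases have accrued."""
        if len(self._outcomes) < self.min_samples:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def drift_detected(self):
        """True when rolling accuracy falls below baseline minus tolerance."""
        accuracy = self.current_accuracy()
        if accuracy is None:
            return False
        return (self.baseline_accuracy - accuracy) > self.tolerance


if __name__ == "__main__":
    monitor = DriftMonitor(baseline_accuracy=0.90, window=50, min_samples=10)
    for _ in range(10):
        monitor.record(1, 1)   # correct predictions
    print("drift after correct cases:", monitor.drift_detected())
    for _ in range(40):
        monitor.record(1, 0)   # missed positives
    print("drift after degraded cases:", monitor.drift_detected())
```

A flag from a check like this would feed the change-management pathway: it prompts investigation and, where safety or performance is affected, the notification steps the organization has defined.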

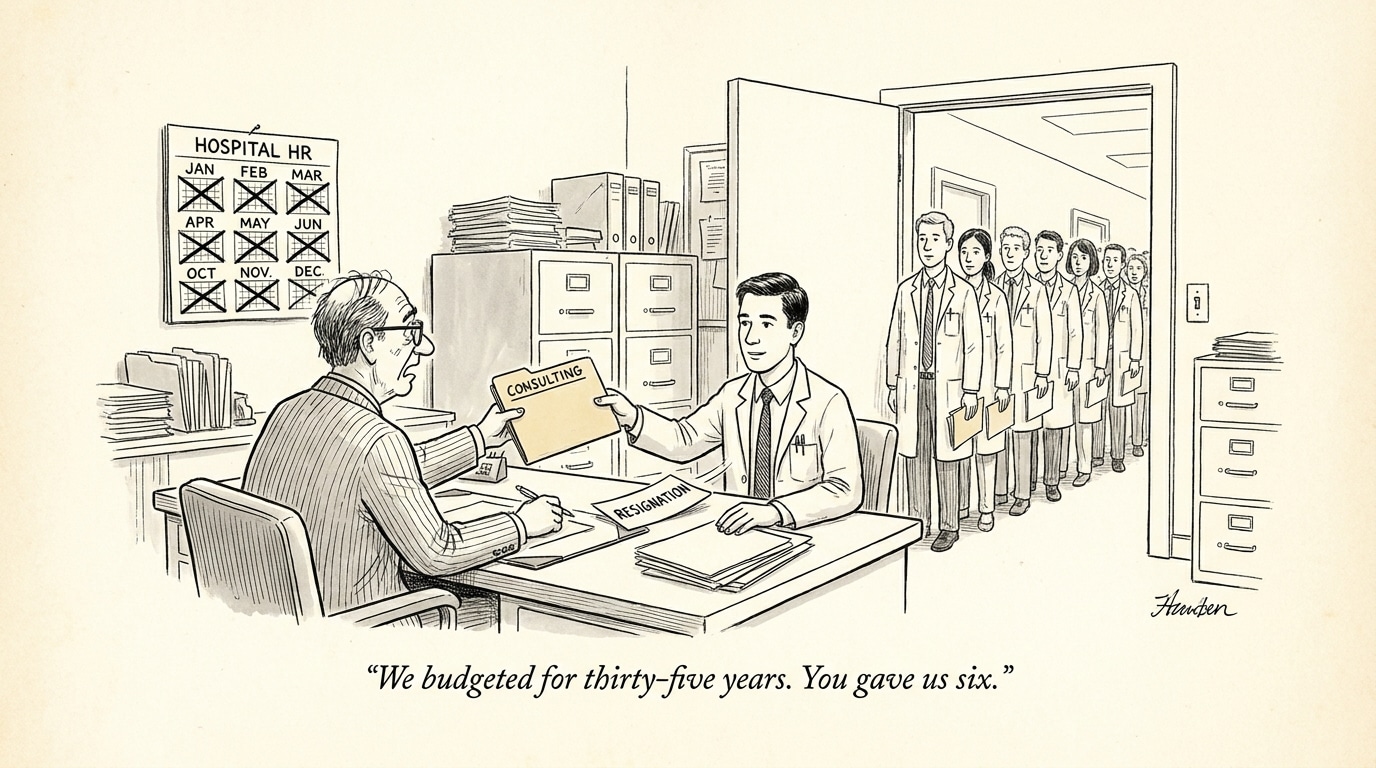

Workforce and recruiting implications

The compliance shift turns regulatory and quality expertise into a strategic hiring priority. Expect higher demand for professionals who can bridge domains: regulatory scientists with machine learning literacy, clinicians experienced in AI-enabled workflows, and data engineers versed in auditability and lineage. Organizations will also need program managers to operationalize evidence generation and post-market surveillance.

Call Out: Hiring for regulatory-AI hybrids is now a competitive differentiator. Teams that combine clinical insight, regulatory acumen, and data engineering will execute compliant deployment faster and with less operational risk.

Recruiters and hiring teams should update role descriptions to include regulatory-compliance competencies, familiarity with medical device definitions, and experience in longitudinal performance monitoring. For healthcare institutions and vendors alike, partnerships with specialized job platforms can shorten time-to-hire for these rare hybrids.

Implications for the healthcare industry and recruiting (conclusion)

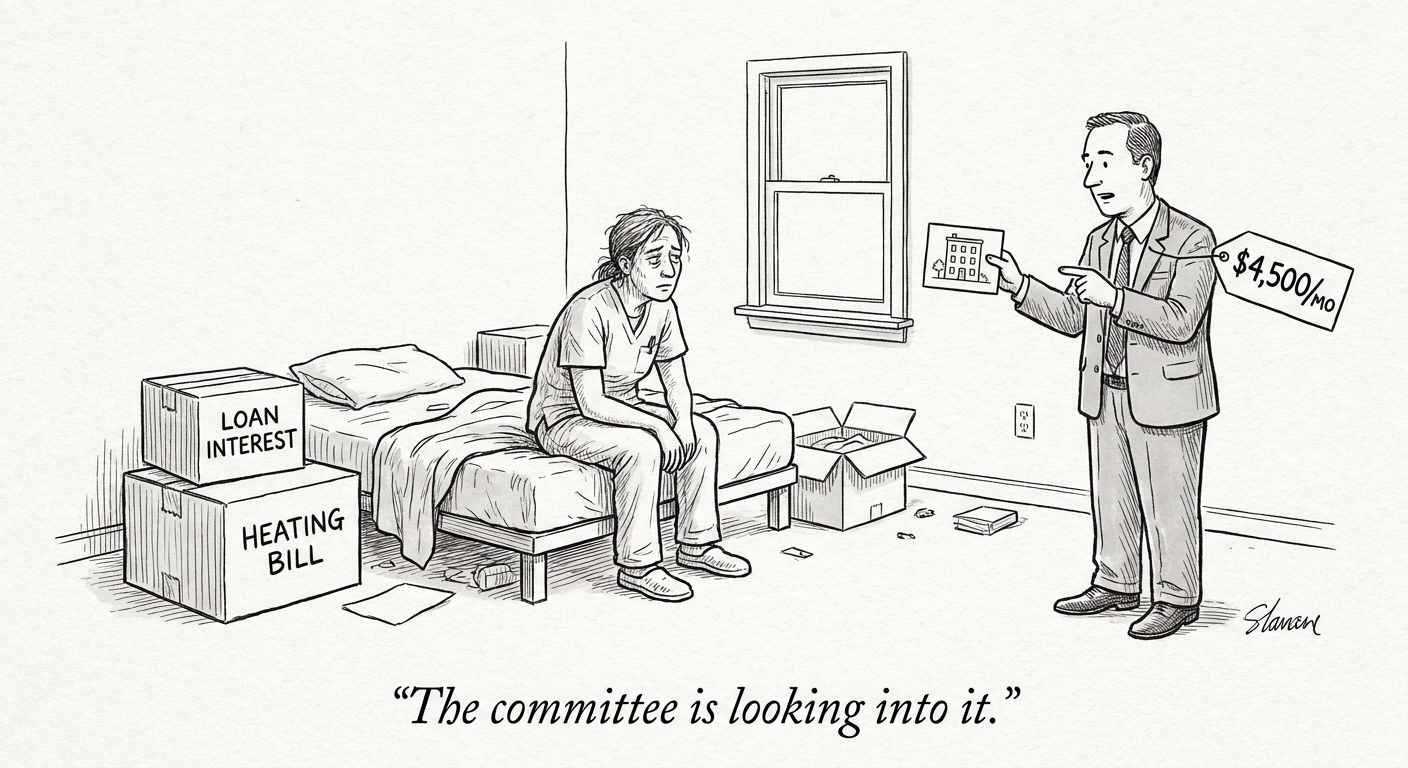

The 2026 regulatory environment raises the bar for evidence, transparency, and lifecycle control of AI and digital health tools. Healthcare leaders must move from episodic, point-in-time validation efforts to sustained, auditable governance across design, deployment, and post-market phases. Practically, that means investing in new roles, revamping procurement and vendor-contract terms, and embedding monitoring into clinical operations.

For recruiting teams, the mandate is clear: prioritize interdisciplinary candidates who can operationalize compliance and translate regulatory expectations into reproducible technical and clinical processes. Organizations that act now to align governance, talent, and procurement strategies will avoid disruptive rework and position themselves to safely scale AI-driven care.

Sources

Regulating Digital Health Technologies – The Regulatory Review

The AI Regulation Landscape for 2026: What Legal and Compliance Leaders Need to Know – JD Supra

Authorization of prognostic AI medical devices – Nature Machine Intelligence

New Year, New Guidance: FDA Revisits Wellness and CDS Boundaries – Morgan Lewis