Why this theme matters now

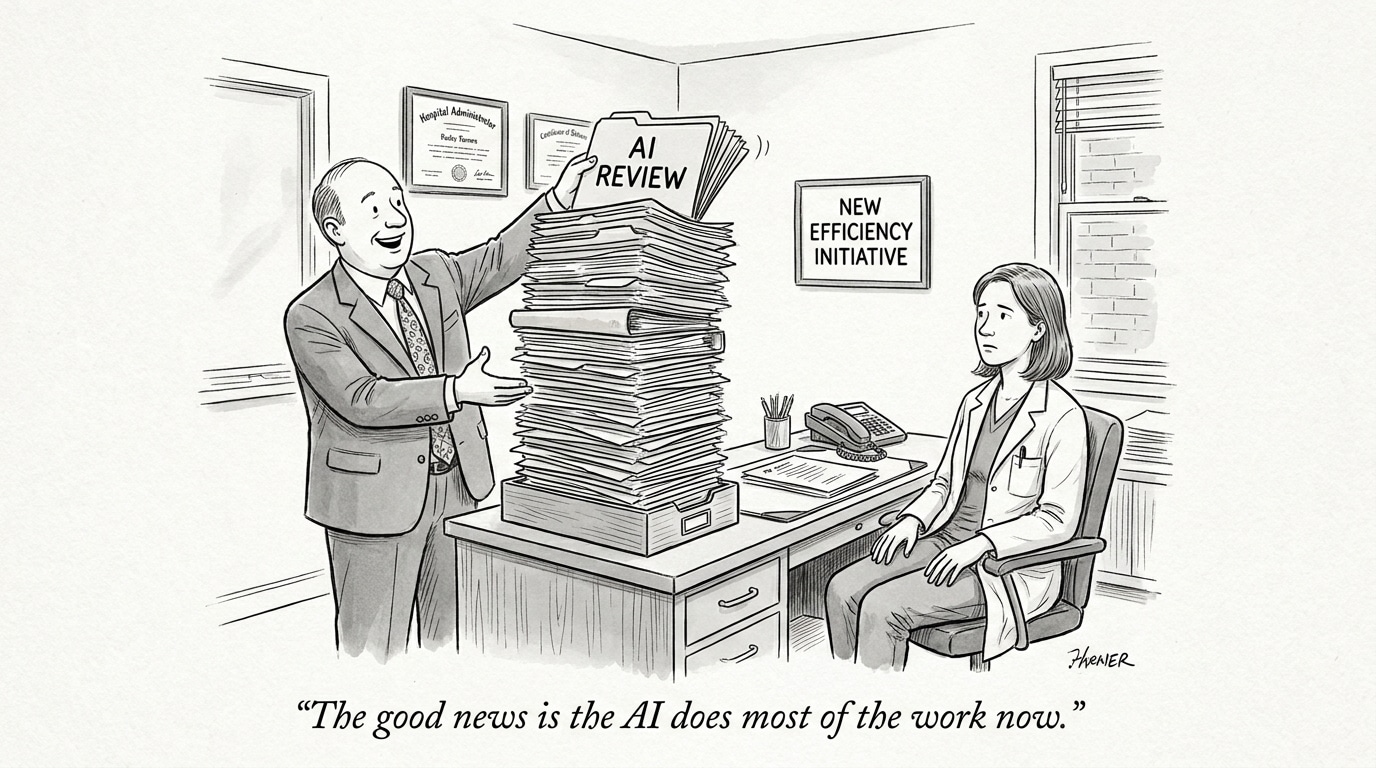

The debate over artificial intelligence in healthcare has shifted from abstract optimism to a contest between two realities: an overheated market with inflated expectations, and an operational landscape where intelligent automation could materially address persistent care-delivery failures. This tension matters because health systems must decide whether to slow, regulate, or accelerate AI deployment, and those choices affect patient safety, workforce roles, capital deployment, and the evolution of care outside acute settings. The question is no longer just whether AI can do more, but how organizations prioritize investments that yield measurable clinical and operational returns.

AI investment alone is not a cure: systems must align technical capabilities with workflow redesign and governance to avoid wasted spend and safety risks.

Disentangling hype from utility

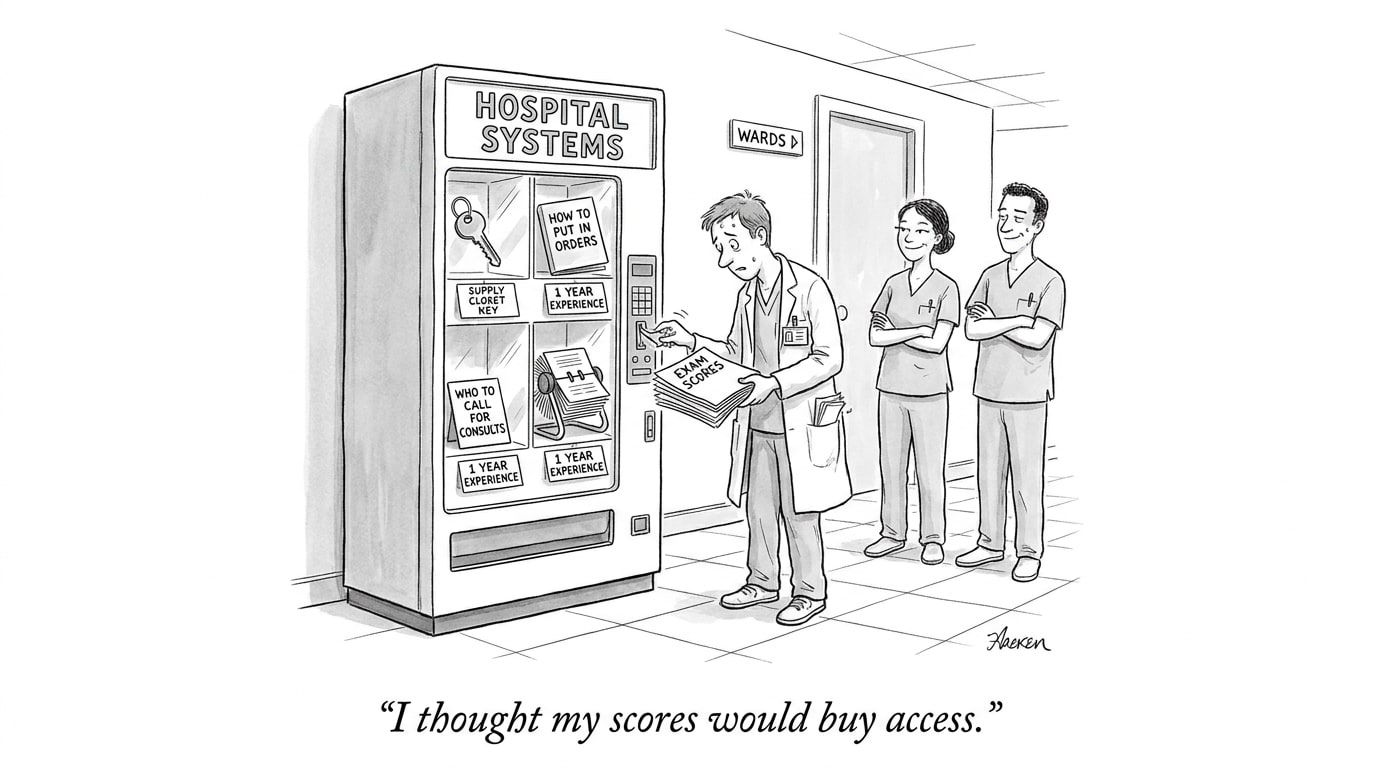

Hype cycles are intrinsic to emerging technologies. The healthcare sector has experienced rapid inflows of capital and publicity for AI models promising diagnostic accuracy, administrative efficiency, and clinical decision support. Yet enthusiasm has outpaced validated impact: many tools perform well in constrained retrospective studies but falter when integrated into complex clinical workflows, where user adoption is inconsistent and improvement in outcomes remains uncertain.

Distinguishing legitimate promise from overreach requires three filters: (1) prospective clinical validation in representative care settings; (2) measurable patient-centered outcomes, not just model metrics; and (3) integration pathways that preserve clinician agency and liability clarity. When products meet these criteria, they move beyond proof-of-concept toward sustainable deployment. When they do not, continued investment risks an unsustainable bubble that could erode trust and invite heavy-handed regulation.

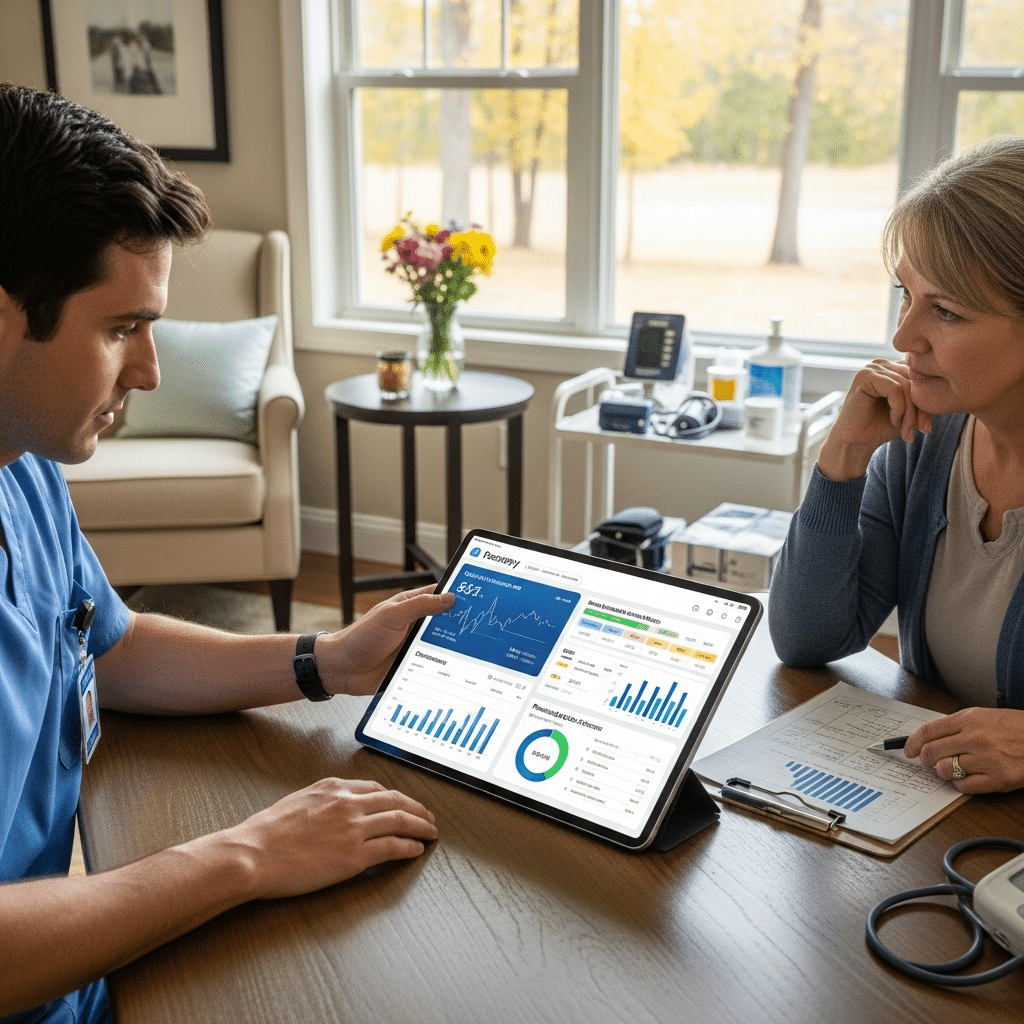

Agentic AI and the case of post-acute care

Post-acute settings—skilled nursing, home health, rehabilitation—face chronic staffing shortages, fragmented information flows, and high readmission rates. These are precisely the domains where automation that can act with some autonomy (agentic AI) could produce value: dynamically scheduling visits, coordinating medication reconciliation, detecting clinical deterioration earlier, and routing tasks to the right clinician or caregiver.

However, the gap between potential and practice is substantial. Post-acute environments are heterogeneous, often lacking standardized data infrastructure. Agentic systems that assume continuous, high-fidelity data streams face reliability challenges. There are also ethical and legal trade-offs: delegating actions to AI agents raises questions about responsibility for errors and how to maintain human oversight without negating efficiency gains.

Agentic AI can amplify workforce capacity in understaffed post-acute care, but only with standardized data inputs, transparent decision rules, and clear accountability structures.

Comparing risk profiles: overhype vs under-adoption

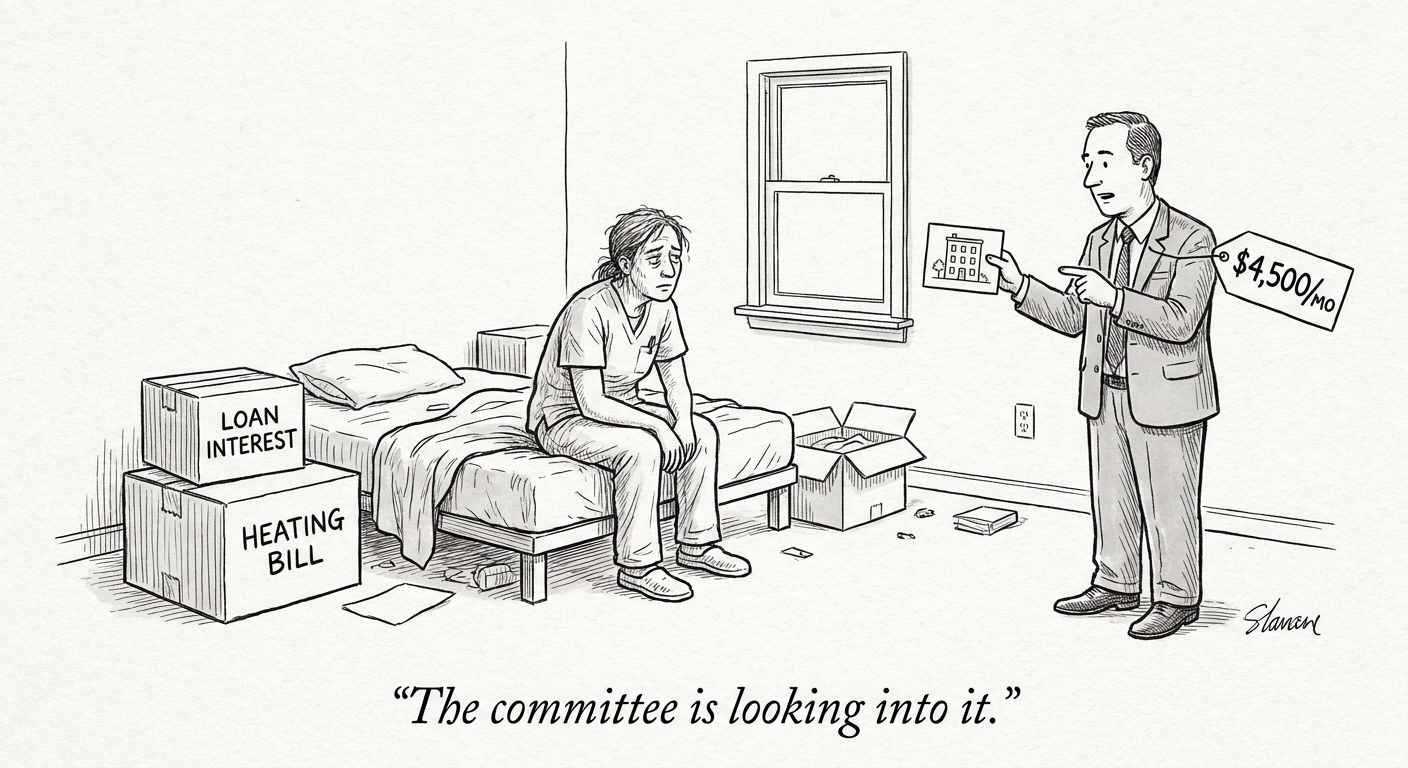

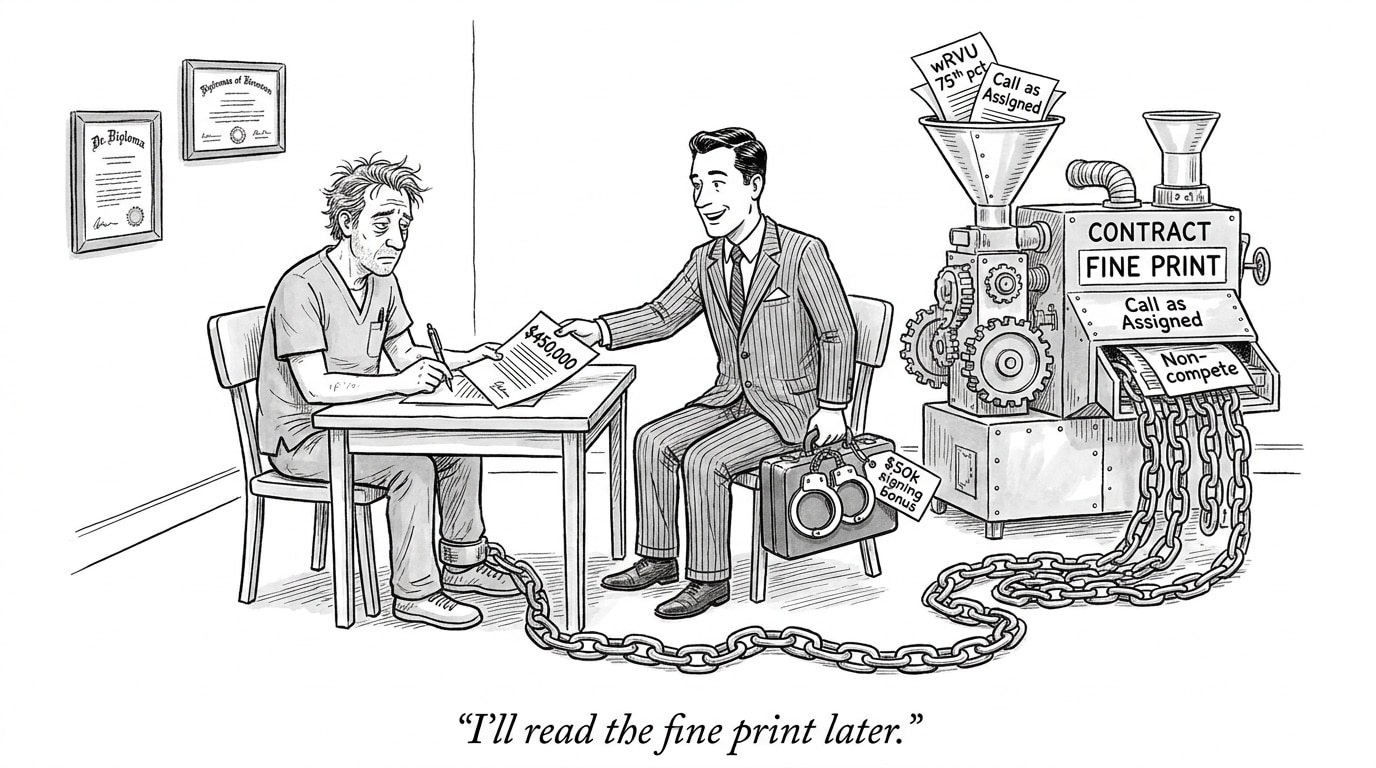

Both extremes—unchecked hype and reflexive resistance—carry risks. Overhyping AI inflates valuations, channels resources to immature products, and risks patient harm when systems are deployed prematurely. It can also produce regulatory clampdowns that slow legitimate innovation.

Conversely, under-adoption can perpetuate inefficiencies and safety gaps. Older adults, patients in complex care transitions, and community-based services could benefit from targeted automation that augments scarce clinical capacity. The optimal stance for health systems is strategic pragmatism: adopt where evidence and operational readiness align, iterate with rigorous monitoring, and be prepared to decommission tools that fail to deliver.

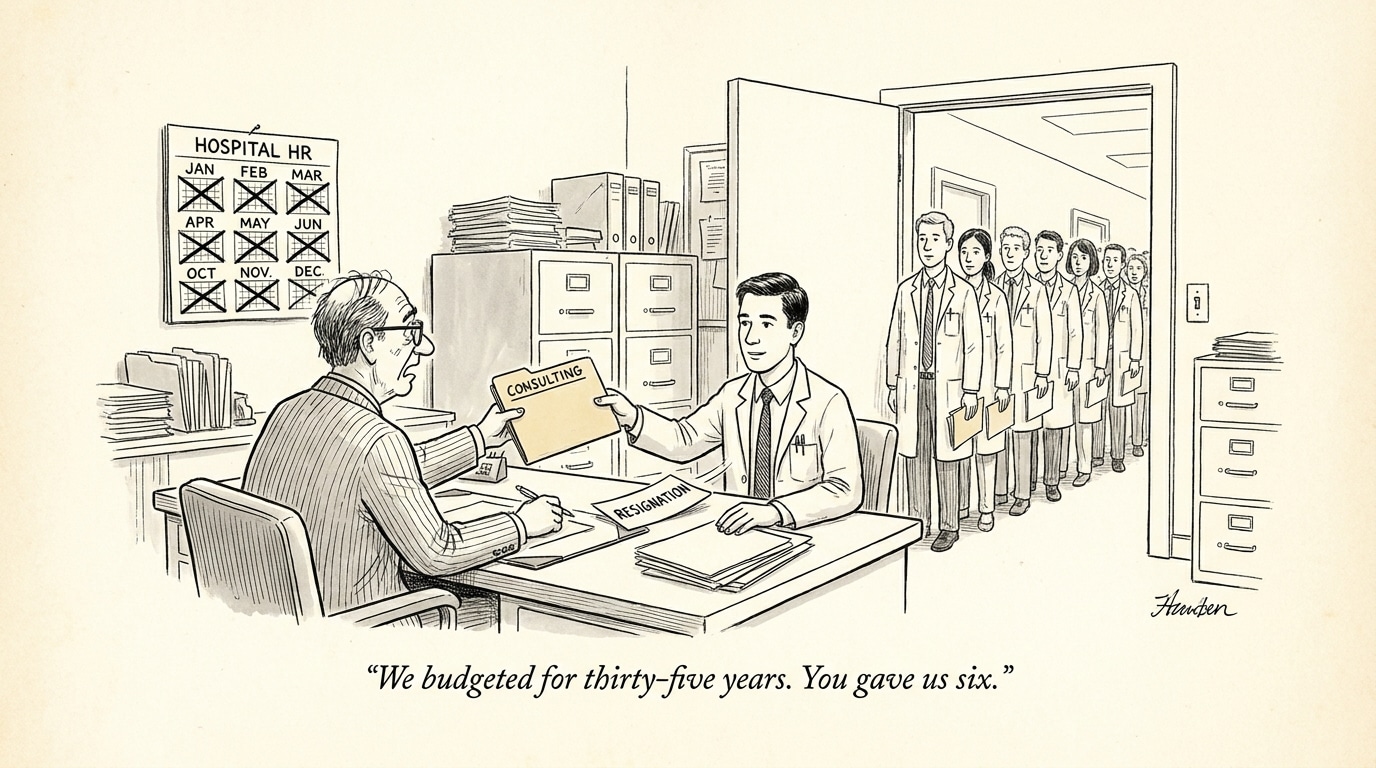

Operational and workforce implications for healthcare organizations

Decisions to adopt AI should be treated as enterprise transformation initiatives, not point-tool procurements. Successful implementations need cross-disciplinary teams (clinical leaders, informatics, operations, legal, and human resources) to redesign workflows, retrain staff, and reassign roles. Recruiting priorities will shift toward hybrid skill sets: clinicians who understand digital workflows, data scientists with domain experience, and implementation specialists who can bridge product engineering and frontline practice.

Implications for purchasers and policymakers

Health system leaders must demand transparent evidence, insist on interoperability, and require post-deployment monitoring tied to contractual recourse. Payers and regulators should encourage field trials with real-world outcomes and create incentives for shared-data ecosystems that reduce deployment friction. Policy levers that reward outcome-based payment models will also make investments in dependable, task-oriented AI more attractive.

Conclusion: a pragmatic roadmap

The current moment calls for calibrated action. Stakeholders can avoid the extremes of blind enthusiasm and paralyzing skepticism by prioritizing validated use cases, building infrastructure for reliable data capture in non-acute settings, aligning incentives across payers and providers, and retooling the workforce for hybrid human–AI workflows. When these elements converge, AI becomes less a speculative bubble and more a practical set of tools that address entrenched gaps in care delivery, especially in post-acute services where the human and economic need is greatest.

Sources

Viewpoint: Healthcare needs AI ‘bubble to burst’ – Becker’s Hospital Review