Why this theme matters now

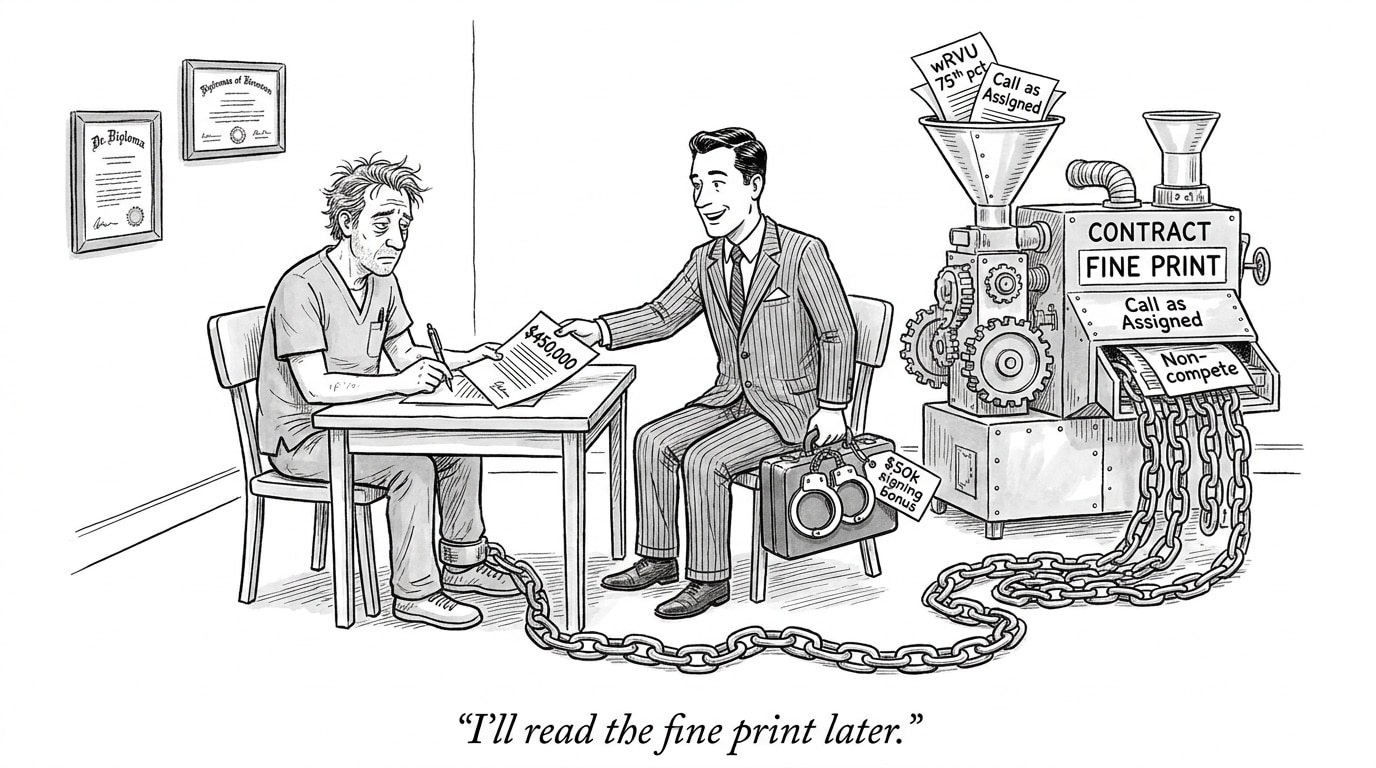

Health systems are accelerating AI pilots and procurement, driven by promises of faster diagnosis, operational efficiency, and personalized care. At the same time, high-profile demonstrations that models can leak patient information, and that algorithms can perform unevenly across populations, have put ethical risks squarely on executives’ agendas. The stakes are not hypothetical: privacy breaches and algorithmic harm can erode patient trust, invite regulatory action, and amplify existing health inequities. Institutions that want to deploy AI at scale must embed safeguards in structured governance frameworks that protect patient data and guard against algorithmic bias.

Technical tools to protect data — options and tradeoffs

Several technical approaches can reduce the risk of exposing identifiable data while still enabling model training and inference. Federated learning keeps patient records on local systems and transmits only model updates; differential privacy adds calibrated statistical noise (to released statistics or to training updates) to bound how much any single patient's record can influence, and therefore reveal through, the results; synthetic data generation and de-identification aim to provide usable datasets without direct identifiers; and cryptographic methods such as homomorphic encryption and secure multiparty computation allow computation on encrypted or secret-shared inputs without exposing the underlying values.
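To make the federated idea concrete, here is a minimal sketch of the aggregation step, assuming each hospital sends only a flat list of model weights plus its local record count and the coordinator averages them weighted by record count (the FedAvg scheme). The function name, data shapes, and example numbers are hypothetical and do not reflect any particular framework's API; a real deployment would add secure aggregation, authentication, and encrypted transport.

```python
from typing import List, Tuple

# Each site's update: (local model weights, number of local training records).
SiteUpdate = Tuple[List[float], int]


def federated_average(updates: List[SiteUpdate]) -> List[float]:
    """Combine per-site weight vectors into a global model (FedAvg-style).

    Only weights and record counts leave each site; raw patient records never do.
    """
    if not updates:
        raise ValueError("need at least one site update")
    dim = len(updates[0][0])
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total record count must be positive")
    global_weights = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all sites must share the same model shape")
        # Weight each site's contribution by its share of all training records.
        for i, w in enumerate(weights):
            global_weights[i] += w * n / total
    return global_weights


# Hypothetical example: three hospitals training the same two-parameter model.
hospital_updates = [
    ([0.2, 1.0], 100),
    ([0.4, 0.8], 300),
    ([0.1, 1.2], 100),
]
print(federated_average(hospital_updates))
```

Weighting by record count keeps a small site from pulling the global model as hard as a large one, but it also means small sites (often those serving under-represented populations) have less influence, which is one reason federated training still needs the subgroup checks discussed below.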

None of these is a turnkey solution. Federated learning limits central visibility into data quality and requires robust orchestration, plus secure aggregation, to reduce the risk that individual sites' updates are used to reconstruct training data. Differential privacy depends on carefully calibrated noise, which can degrade clinical model performance if applied too aggressively. Synthetic data can fail to capture rare but clinically important patterns. Cryptographic schemes often carry substantial computational cost and engineering complexity. Choosing an approach therefore requires an explicit tradeoff analysis that weighs privacy guarantees against clinical validity and operational feasibility.
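The privacy/accuracy tradeoff of differential privacy can be seen in its simplest form, the Laplace mechanism for releasing a single count. This sketch assumes a counting query (sensitivity 1, since adding or removing one patient changes the count by at most 1) and uses hypothetical values for the count and for epsilon. Training-time differential privacy (for example DP-SGD) works differently, and production systems should rely on a vetted DP library rather than Python's `random` module.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Noise scale is sensitivity / epsilon: a smaller epsilon (stronger privacy)
    means more noise and a less accurate released value.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = sensitivity / epsilon
    return true_count + laplace_noise(scale, rng)


rng = random.Random(0)
true_count = 42  # hypothetical number of patients with a given diagnosis
for eps in (0.1, 1.0, 10.0):
    noisy = [private_count(true_count, eps, rng) for _ in range(1000)]
    mean_abs_error = sum(abs(x - true_count) for x in noisy) / len(noisy)
    # Expected absolute error of Laplace noise equals its scale (1 / epsilon here).
    print(f"epsilon={eps}: mean absolute error ~ {mean_abs_error:.2f}")
```

Each tenfold tightening of epsilon multiplies the expected error tenfold; for a small patient cohort, an error of about 10 on a count of 42 may make the released figure clinically useless, which is exactly the calibration problem described above.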

Call Out: Privacy strategies are not one-size-fits-all—health systems must align the strength of privacy guarantees with use-case criticality, expected model performance, and the operational capacity to implement and audit complex cryptographic or distributed solutions.

Spotting and correcting algorithmic bias

Algorithmic fairness starts upstream. Training data that under-represents subgroups, encodes historical treatment differences, or reflects flawed labels will likely produce models that perform unequally. Robust bias mitigation requires a combination of actions: rigorous cohort analysis to identify performance disparities, use of fairness-aware metrics (e.g., subgroup sensitivity, predictive parity), adversarial testing to probe hidden failure modes, and targeted retraining or augmentation where gaps exist.
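The cohort analysis described above amounts to computing the same metrics separately for each subgroup. This sketch assumes predictions have already been thresholded to 0/1 and tagged with a subgroup label; the record format, group names, and data are hypothetical. It reports sensitivity (the share of truly positive patients the model catches) and positive predictive value, the quantity that predictive parity compares across groups.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each record: (subgroup label, true outcome 0/1, model prediction 0/1).
Record = Tuple[str, int, int]


def subgroup_metrics(records: Iterable[Record]) -> Dict[str, Dict[str, float]]:
    """Compute sensitivity (recall) and PPV (precision) for each subgroup."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for group, y_true, y_pred in records:
        c = counts[group]  # creates the group entry even for true negatives
        if y_pred == 1 and y_true == 1:
            c["tp"] += 1
        elif y_pred == 1 and y_true == 0:
            c["fp"] += 1
        elif y_pred == 0 and y_true == 1:
            c["fn"] += 1
    results = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        flagged = c["tp"] + c["fp"]
        results[group] = {
            # Sensitivity: share of truly positive patients the model flagged.
            "sensitivity": c["tp"] / positives if positives else float("nan"),
            # PPV: share of flagged patients who were truly positive.
            "ppv": c["tp"] / flagged if flagged else float("nan"),
        }
    return results


# Hypothetical toy data for two subgroups.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 0),
]
for group, m in sorted(subgroup_metrics(records).items()):
    print(f"{group}: sensitivity={m['sensitivity']:.2f}, ppv={m['ppv']:.2f}")
```

In this toy data, group B has perfect PPV but misses two of its three positive patients, a disparity an aggregate accuracy figure would hide. When outcome prevalence differs between groups, a model generally cannot equalize both metrics at once, so choosing which one to prioritize is a clinical and ethical decision as much as a technical one.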

Operationalizing fairness also means instituting pre-deployment checks and post-deployment monitoring with stratified performance dashboards. Explainability tools and model cards provide clinicians and auditors with context about intended uses and limitations. Importantly, fairness work should involve clinical domain experts and representatives of impacted communities to surface real-world harms that purely technical tests might miss.
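A model card can double as the input to a pre-deployment check. The sketch below is a hypothetical minimal card (the field names, model name, and metric values are invented for illustration, not results) with one check: flag any subgroup whose sensitivity trails the best-performing subgroup by more than an assumed tolerance, so that the gap is reviewed before go-live.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelCard:
    """Hypothetical minimal model card; fields loosely follow the model-card idea."""
    name: str
    intended_use: str
    out_of_scope_uses: List[str]
    known_limitations: List[str]
    subgroup_sensitivity: Dict[str, float] = field(default_factory=dict)

    def fairness_gaps(self, max_gap: float) -> List[str]:
        """Return subgroups whose sensitivity trails the best subgroup by more than max_gap."""
        if not self.subgroup_sensitivity:
            return []
        best = max(self.subgroup_sensitivity.values())
        return sorted(g for g, s in self.subgroup_sensitivity.items() if best - s > max_gap)


card = ModelCard(
    name="sepsis-risk-v1",  # hypothetical model
    intended_use="Early warning for adult inpatients; supports, not replaces, clinician review.",
    out_of_scope_uses=["pediatric patients", "emergency department triage"],
    known_limitations=["Validated at a single academic medical center"],
    # Illustrative numbers only, not measured results.
    subgroup_sensitivity={"female": 0.84, "male": 0.86, "age_75_plus": 0.71},
)

flagged = card.fairness_gaps(max_gap=0.05)
print("Subgroups needing review before deployment:", flagged)
print(json.dumps(asdict(card), indent=2))
```

Keeping the intended use, exclusions, and stratified metrics in one serializable record means the same artifact can feed a clinician-facing summary, an auditor's file, and a stratified performance dashboard.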

Governance: translating ethics into procurement, contracts, and practice

Ethical AI requires organizational structures that assign clear responsibilities and decision rights. Effective governance spans vendor selection criteria, contractual clauses that set data handling expectations, continuous validation requirements, and incident response plans. Procurement teams must insist on vendor transparency about training data provenance, documented fairness testing, and technical documentation enabling third-party audits.

In practice this means cross-functional committees—combining clinical leaders, privacy officers, legal counsel, data scientists, and patient-representation advisors—who review new AI deployments against risk thresholds. Contracts should embed rights to audit, data-use limitations, and model-update governance, ensuring downstream changes do not silently degrade privacy or fairness.
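Model-update governance can be backed by an automated comparison that runs before any vendor update is accepted. This is a hypothetical sketch: it assumes the incumbent and candidate models have been evaluated on the same local validation set, that the metric is one where higher is better (such as sensitivity), and that the committee has set a tolerance (0.02 here is an arbitrary example). It blocks updates that degrade any subgroup even when the overall number improves.

```python
from typing import Dict, List

Metrics = Dict[str, float]  # subgroup -> metric where higher is better (e.g. sensitivity)


def update_regressions(incumbent: Metrics, candidate: Metrics,
                       tolerance: float = 0.02) -> List[str]:
    """List subgroups where the candidate is worse than the incumbent by more than tolerance.

    A subgroup missing from the candidate's evaluation is also reported, since an
    unevaluated group cannot be shown to be unharmed.
    """
    problems = []
    for group, old in sorted(incumbent.items()):
        new = candidate.get(group)
        if new is None:
            problems.append(f"{group}: not evaluated in candidate")
        elif old - new > tolerance:
            problems.append(f"{group}: {old:.2f} -> {new:.2f}")
    return problems


def approve_update(incumbent: Metrics, candidate: Metrics, tolerance: float = 0.02) -> bool:
    """Hypothetical gate: reject an update that silently degrades any subgroup."""
    regressions = update_regressions(incumbent, candidate, tolerance)
    for line in regressions:
        print("Regression:", line)
    return not regressions


# Illustrative metrics: overall improves while group_a gets worse.
current = {"overall": 0.85, "group_a": 0.83, "group_b": 0.82}
proposed = {"overall": 0.87, "group_a": 0.78, "group_b": 0.83}
print("Approve update:", approve_update(current, proposed))
```

Contract language giving the institution the right to run such a check (and to refuse or roll back an update that fails it) is what turns the check from a report into an enforceable control.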

Call Out: Governance is the operational bridge between ethical intent and real-world outcomes—without enforceable procurement standards, auditing rights, and multi-disciplinary oversight, technical safeguards rarely translate into sustained trust.

Operationalizing trust: monitoring, explainability, and contingency planning

Deploying a model is the start, not the finish. Continuous monitoring for data drift, subgroup-level performance degradation, and potential privacy leakage is essential. Explainability mechanisms that are clinically meaningful, rather than raw “feature importance” scores, help clinicians interpret model recommendations and guard against misapplication.
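One common way to watch for input drift is the population stability index (PSI), which compares how a feature is distributed in recent data against the training baseline. This sketch assumes fixed bin edges and uses hypothetical patient ages; the 0.1 and 0.25 cut-offs are a widely used rule of thumb borrowed from credit-risk modeling, not a clinical standard, and each institution would need to set its own alert thresholds.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin defined by sorted edges (outer bins are open-ended)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)  # number of edges at or below v
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]


def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               edges: Sequence[float], eps: float = 1e-6) -> float:
    """PSI = sum over bins of (a - e) * ln(a / e); eps avoids log(0) for empty bins."""
    psi = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical ages: training population vs. patients seen in the last month.
training_ages = [30] * 25 + [50] * 25 + [70] * 25 + [85] * 25
recent_ages = [30] * 10 + [50] * 20 + [70] * 35 + [85] * 35

psi = population_stability_index(training_ages, recent_ages, edges=[40, 60, 80])
status = "stable" if psi < 0.1 else "moderate shift" if psi < 0.25 else "major shift"
print(f"PSI={psi:.3f} ({status})")
```

Running the same computation separately within each subgroup, alongside the stratified performance metrics, helps distinguish a general population shift from drift that affects one patient group in particular.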

Institutions should also run tabletop exercises that simulate privacy incidents and biased outcomes to stress-test response protocols. These exercises reveal gaps in notification procedures, remediation steps, and patient communication strategies—practical elements that regulators increasingly expect to see documented.

Implications for healthcare organizations and recruiting

Meeting the dual imperatives of privacy and fairness requires investment in people and processes as much as in technology. Health systems need privacy engineers, MLOps professionals skilled in secure model deployment, fairness and validation specialists, and governance leads who can translate technical assessments into policy. Procurement and clinical informatics teams must learn to critically evaluate vendor claims about privacy-preserving training and fairness testing.

For recruiting, this means expanding role definitions and hiring pipelines. Candidates must combine technical depth with domain fluency: a privacy engineer who understands HIPAA and clinical workflows; a model validator skilled in statistical fairness techniques and clinical evaluation; and an AI ethics officer who can coordinate cross-functional governance. Platforms that connect health systems with professionals experienced in privacy-preserving ML and bias mitigation will be strategically valuable.

Sources

How Hospitals Can Use AI Without Exposing Patient Data – Newswise