Why this matters now

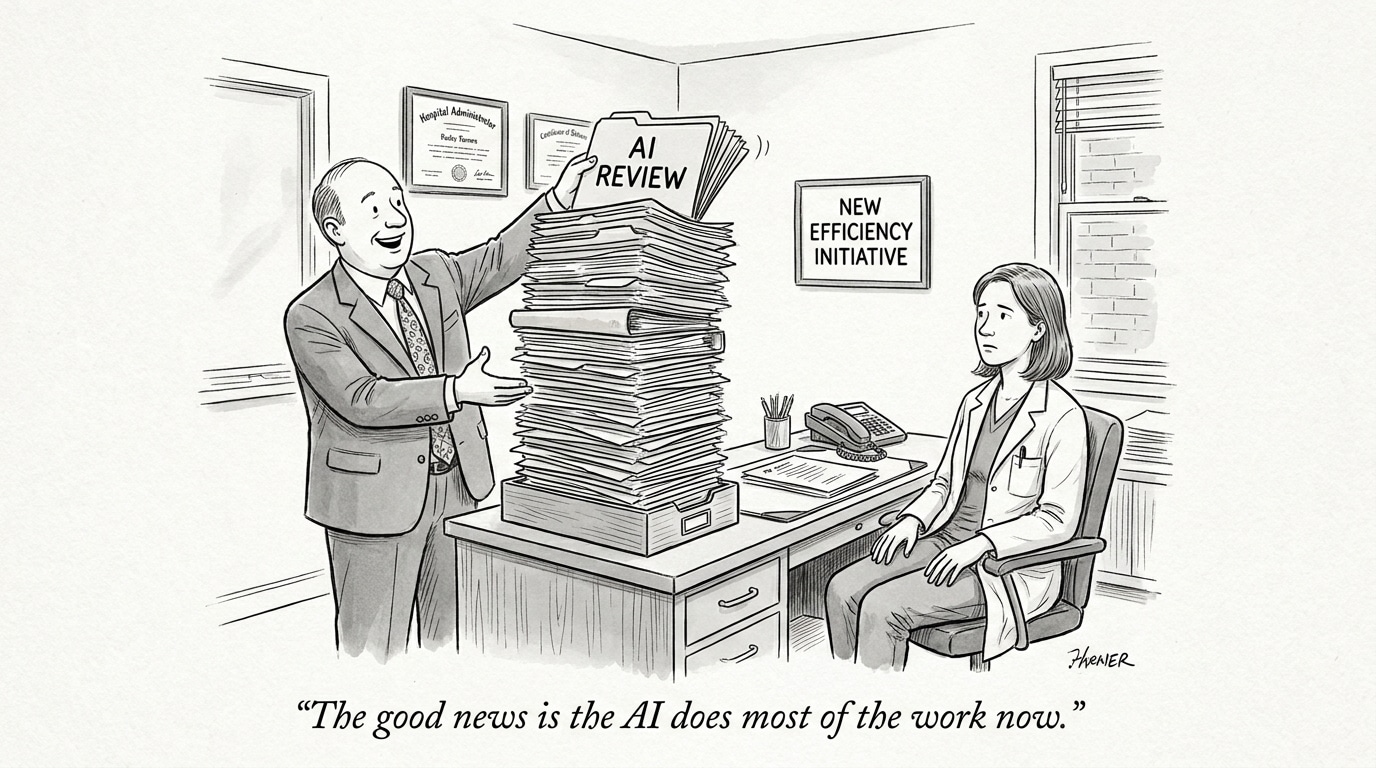

Investment in healthcare AI accelerated rapidly over the past five years, driven by expectations of streamlined workflows, fewer diagnostic errors, and lower costs. Now a mix of disappointing returns, implementation friction, and uneven digital skills among clinicians and administrators is creating a more skeptical environment. This shift matters because organizations must decide whether to double down on scaled deployments, pause to rebuild foundations, or shift to selective, measurable pilots. The choices made in the next 12–24 months will determine whether AI becomes an operational asset or an expensive cautionary tale.

The hype cycle and its consequences

Like other sectors, healthcare experienced a surge of supplier claims that AI would quickly transform care delivery. When early pilots produced incremental rather than revolutionary gains, leaders began questioning the broad promises. That reaction is not just rhetorical: tightening budgets and regulatory scrutiny are forcing health systems to examine vendor performance more closely and to demand clearer proof of value before committing to enterprise-wide rollouts.

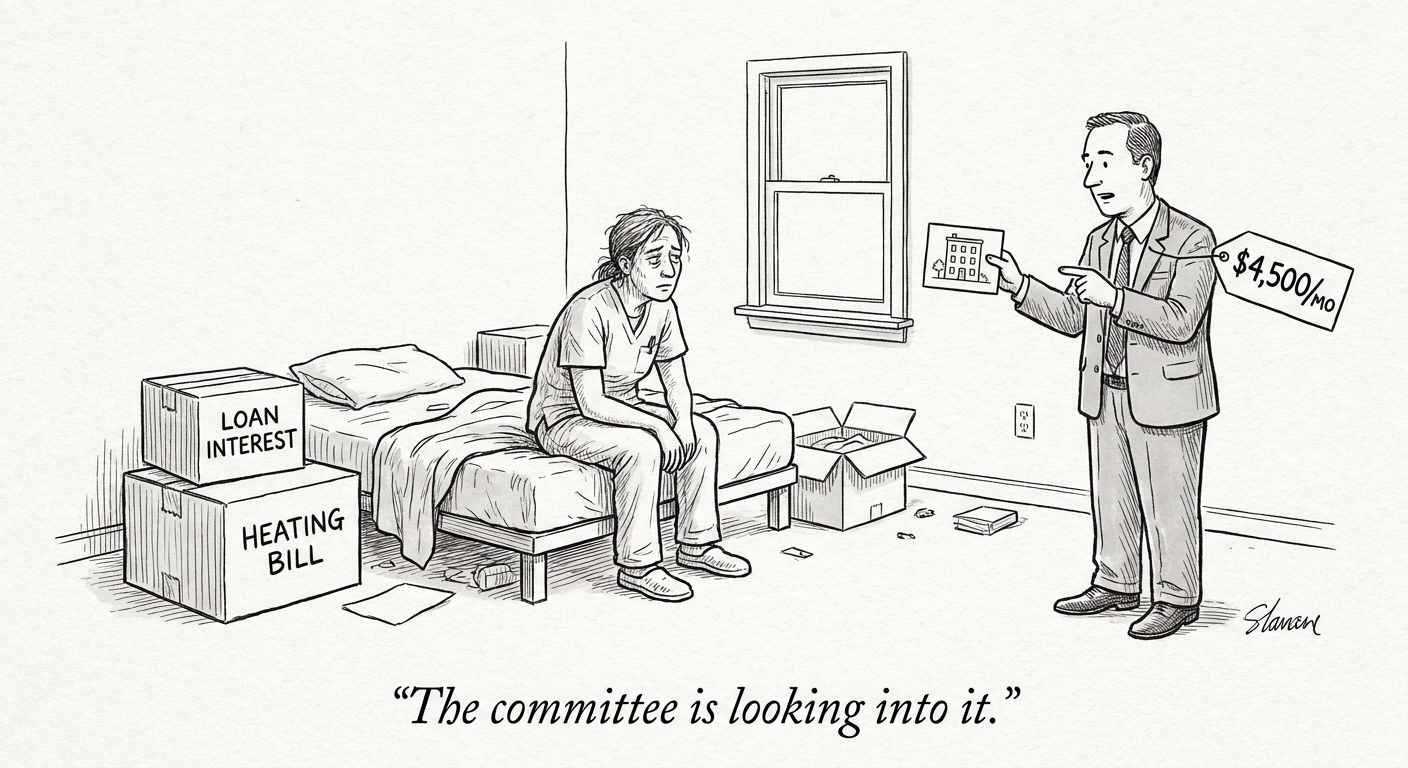

Why ROI often falls short

Failure to achieve projected returns usually reflects a mix of measurement gaps and mismatched expectations. Vendors often present accuracy or throughput metrics from controlled settings; translating those metrics into sustained cost savings or capacity gains requires additional system changes. Hidden costs—data engineering, integration with electronic health records, clinician training, and ongoing validation—can outstrip initial estimates. Moreover, improvements in a narrow metric (e.g., model sensitivity) do not automatically convert to downstream efficiencies without associated workflow redesign and governance.
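As a rough illustration of how hidden costs can erase a projected return, the sketch below compares a license-only ROI estimate with a full-cost estimate over the same period. Every figure, the `CostLine` type, and the `full_cost_roi` function are hypothetical and chosen only to show the arithmetic; they are not drawn from any real deployment.

```python
from dataclasses import dataclass


@dataclass
class CostLine:
    """One cost category (hypothetical structure for illustration)."""
    name: str
    one_time: float  # implementation cost, paid once
    annual: float    # recurring cost per year


def full_cost_roi(annual_benefit, cost_lines, years):
    """Return ROI as (total benefit - total cost) / total cost."""
    total_cost = sum(c.one_time + c.annual * years for c in cost_lines)
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    total_benefit = annual_benefit * years
    return (total_benefit - total_cost) / total_cost


# Hypothetical figures: the vendor quote covers only the license.
license_only = [CostLine("vendor license", 0, 200_000)]

# The same project with the hidden costs named in the text added in.
full_cost = license_only + [
    CostLine("data engineering", 150_000, 50_000),
    CostLine("EHR integration", 100_000, 20_000),
    CostLine("clinician training", 60_000, 15_000),
    CostLine("ongoing validation", 0, 40_000),
]

ANNUAL_BENEFIT = 400_000  # assumed savings per year
YEARS = 3

roi_quoted = full_cost_roi(ANNUAL_BENEFIT, license_only, YEARS)
roi_full = full_cost_roi(ANNUAL_BENEFIT, full_cost, YEARS)
print(f"License-only ROI: {roi_quoted:.1%}")
print(f"Full-cost ROI:    {roi_full:.1%}")
```

With these assumed numbers, the license-only view shows a 100% return over three years, while the full-cost view turns slightly negative. The point is the sensitivity of the result to which cost lines are counted, not the specific values.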

Call Out: Real ROI arrives when AI is measured end-to-end—from data curation and clinician adoption to downstream operational effects—not just by performance on test sets or isolated metrics.

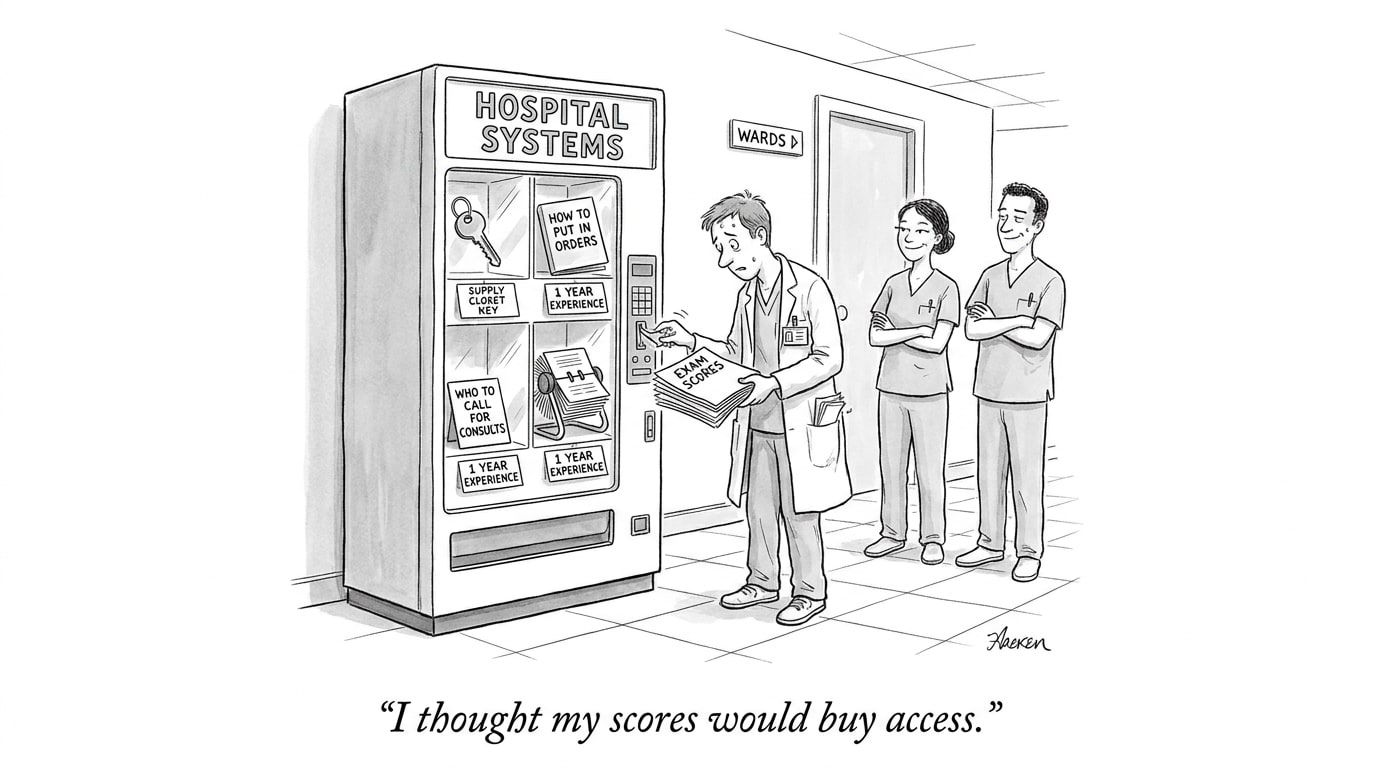

Digital literacy as the implementation linchpin

Adoption challenges frequently trace back to uneven digital literacy among frontline staff and leadership. Clinicians who lack familiarity with how models are built, validated, and updated are less likely to incorporate outputs into decision-making. Administrative teams without analytics fluency struggle to translate model scores into operational triggers. Investing in foundational digital skills—data interpretation, model limitations, and error modes—enables more informed acceptance testing and faster troubleshooting when AI behaves unexpectedly.

Call Out: Building digital literacy across clinical and administrative roles reduces dependence on external vendors and accelerates meaningful, sustainable AI adoption.

Practical interventions that close the gap

Several concrete practices reduce the gap between promise and delivery:

- Start with measurable use cases: Select opportunities where the causal chain between an AI prediction and an operational outcome is clear and trackable (e.g., task prioritization that shortens time-to-intervention).

- Define full-cost ROI: Budget for data pipelines, integration, human-in-the-loop processes, and ongoing monitoring when computing expected returns.

- Adopt phased scaling: Use tightly controlled rollouts with pre-specified success criteria before expanding scope.

- Invest in governance: Establish multidisciplinary oversight that includes clinicians, IT, data scientists, compliance, and operations to manage model lifecycle and risk tolerance.

- Measure clinician adoption and behavior: Track how often outputs are used, ignored, or overridden, and correlate usage with outcomes to determine real-world value.
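The last practice, tracking whether outputs are used, ignored, or overridden and relating that to outcomes, can be sketched in a few lines. The event format, the action labels, and the time-to-intervention values below are hypothetical; a real system would pull them from EHR audit logs or application telemetry, and the schema would differ.

```python
from collections import Counter

ACTIONS = ("used", "ignored", "overridden")

# Hypothetical log: (clinician action on the AI output, minutes to intervention)
events = [
    ("used", 30), ("used", 35), ("ignored", 60),
    ("overridden", 50), ("used", 25), ("ignored", 55),
]


def adoption_summary(events):
    """Return (share of events per action, mean outcome per action)."""
    if not events:
        raise ValueError("no events to summarize")
    counts = Counter(action for action, _ in events)
    rates = {a: counts[a] / len(events) for a in ACTIONS}

    # Group the outcome measure by action, then average each group.
    outcomes = {}
    for action, minutes in events:
        outcomes.setdefault(action, []).append(minutes)
    mean_outcome = {a: sum(v) / len(v) for a, v in outcomes.items()}
    return rates, mean_outcome


rates, mean_minutes = adoption_summary(events)
for action in ACTIONS:
    print(f"{action:<11} share={rates[action]:.0%} "
          f"mean minutes={mean_minutes.get(action)}")
```

A gap in mean outcome between "used" and "ignored" cases is descriptive only. Cases where clinicians follow the output may differ systematically from cases where they do not, so a comparison like this is a starting point for investigation rather than evidence of causal benefit.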

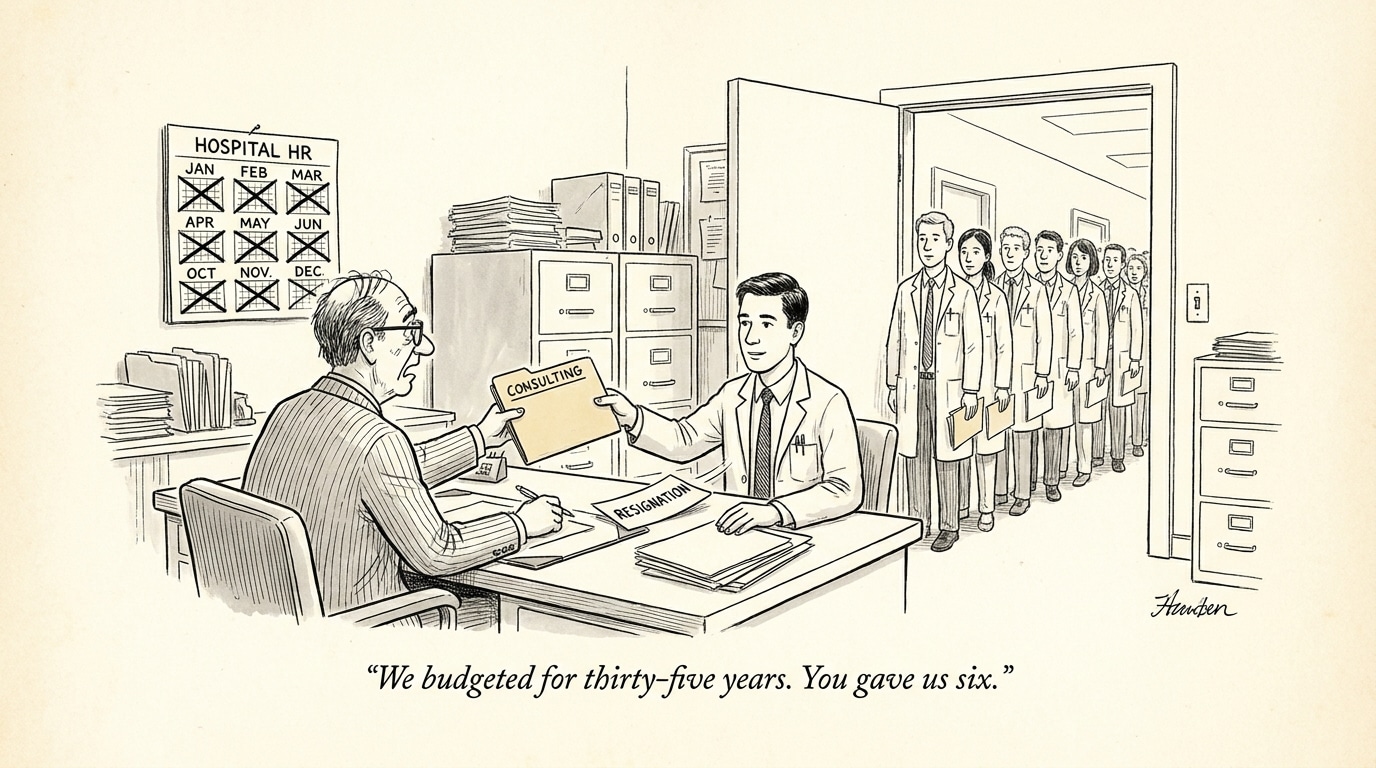

Recruiting and workforce implications

For hiring and talent strategy, the present moment shifts priorities. Rather than hiring only for algorithmic expertise, organizations should recruit for roles that bridge domains: implementation engineers who can integrate models into EHRs, clinical informaticists who can translate model outputs into care pathways, and upskilling leads who can deliver digital literacy programs. Employers should also seek candidates with experience in measuring operational impact and leading change management, not just in ML model development. Job descriptions and evaluation criteria must evolve to emphasize cross-functional delivery over isolated technical achievement.

Conclusion: a pragmatic path forward

AI in healthcare is at an inflection point where enthusiasm must be balanced with operational rigor. The most costly mistake now is one of timing—either abandoning promising technologies because early pilots underperformed due to avoidable implementation failures, or expanding prematurely without the people, processes, and measurement capabilities required to capture value. Organizations that slow down to shore up digital skills, governance, and realistic ROI accounting are more likely to convert AI investments into durable improvements.

For healthcare recruiters and hiring managers, this means adjusting talent plans to prioritize interdisciplinary implementers and educators. Job boards and marketplaces that understand these nuanced role requirements—linking clinical domain knowledge with integration and change-management skills—will play a pivotal role in helping systems get from pilot to scale.

Sources

Viewpoint: Healthcare needs AI ‘bubble to burst’ – Becker’s Hospital Review

AI adoption in healthcare requires digital literacy – MobiHealthNews