Why this theme matters now

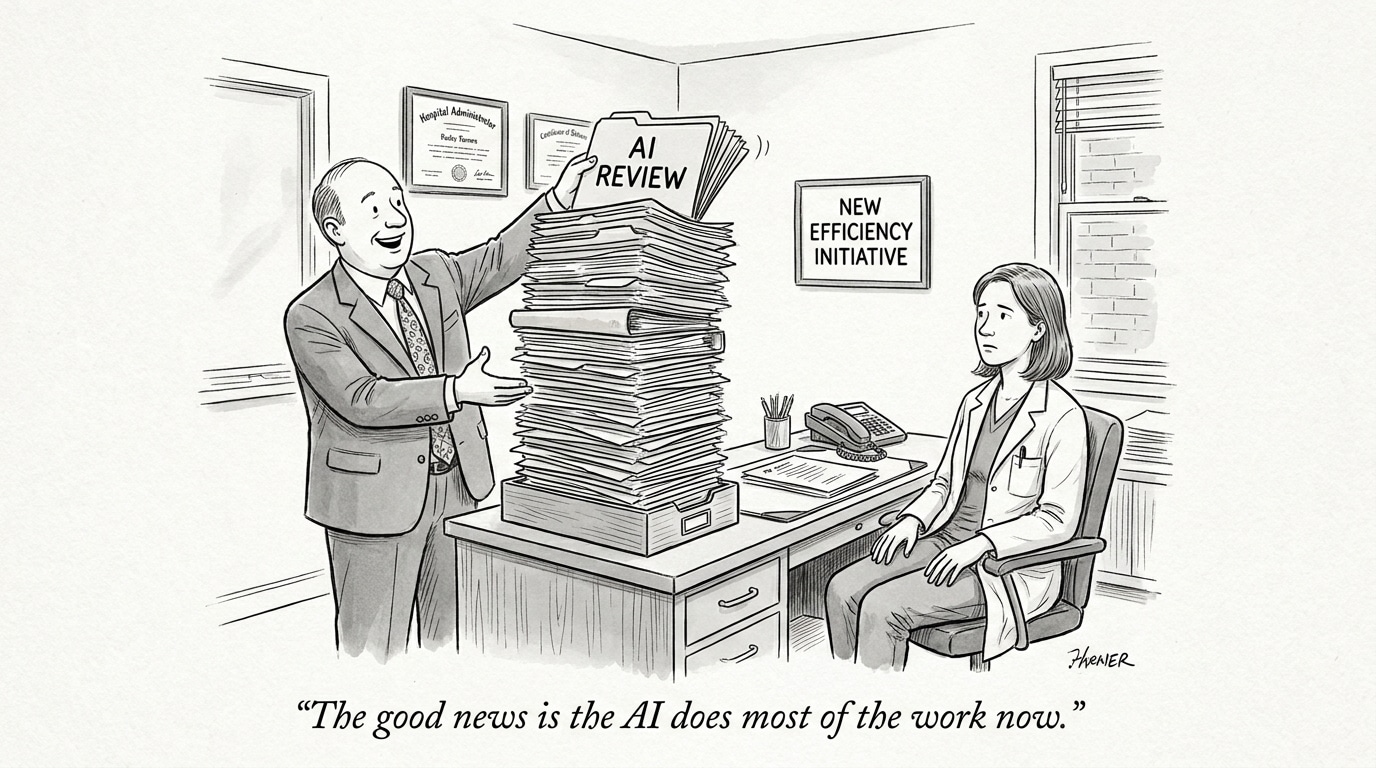

AI tools have moved from research labs into clinical workflows and consumer-facing channels at breakneck speed. That rapid diffusion has created a paradox: the potential for improved diagnosis, triage, and administrative efficiency now sits alongside a growing number of reported safety incidents, regulatory questions, and misuse cases. The signals are converging, and they indicate that the sector has not yet matched innovation with structured governance capable of ensuring safe and accountable deployment. For health systems, technology vendors, and talent teams, the risk is not only technological failure but also reputational and legal exposure that could slow adoption and harm patients.

Patterns of misuse and emergent risks

Recent incidents highlight multiple failure modes: consumer chatbots delivering inappropriate medical guidance, pilot programs deploying algorithmic prescribing without transparent validation, and clinical tools being adopted before robust real-world evaluation. These are not isolated bugs but symptomatic of three common conditions: vendors optimizing for rapid deployment rather than exhaustive testing; buyers assuming general-purpose models generalize to specific clinical contexts; and clinicians or patients using AI outputs without clear guardrails. The result is a widening gap between capability and safe, accountable use.

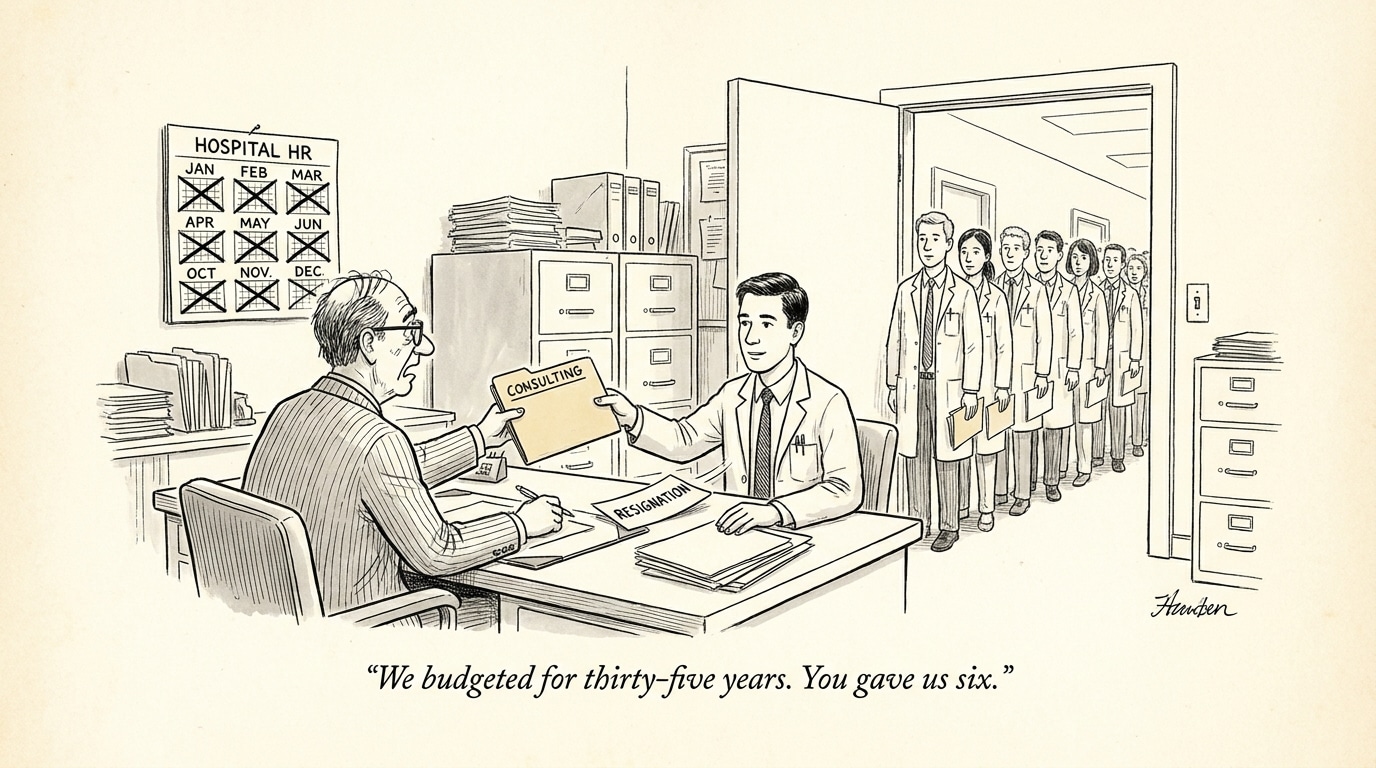

What’s driving the overreach

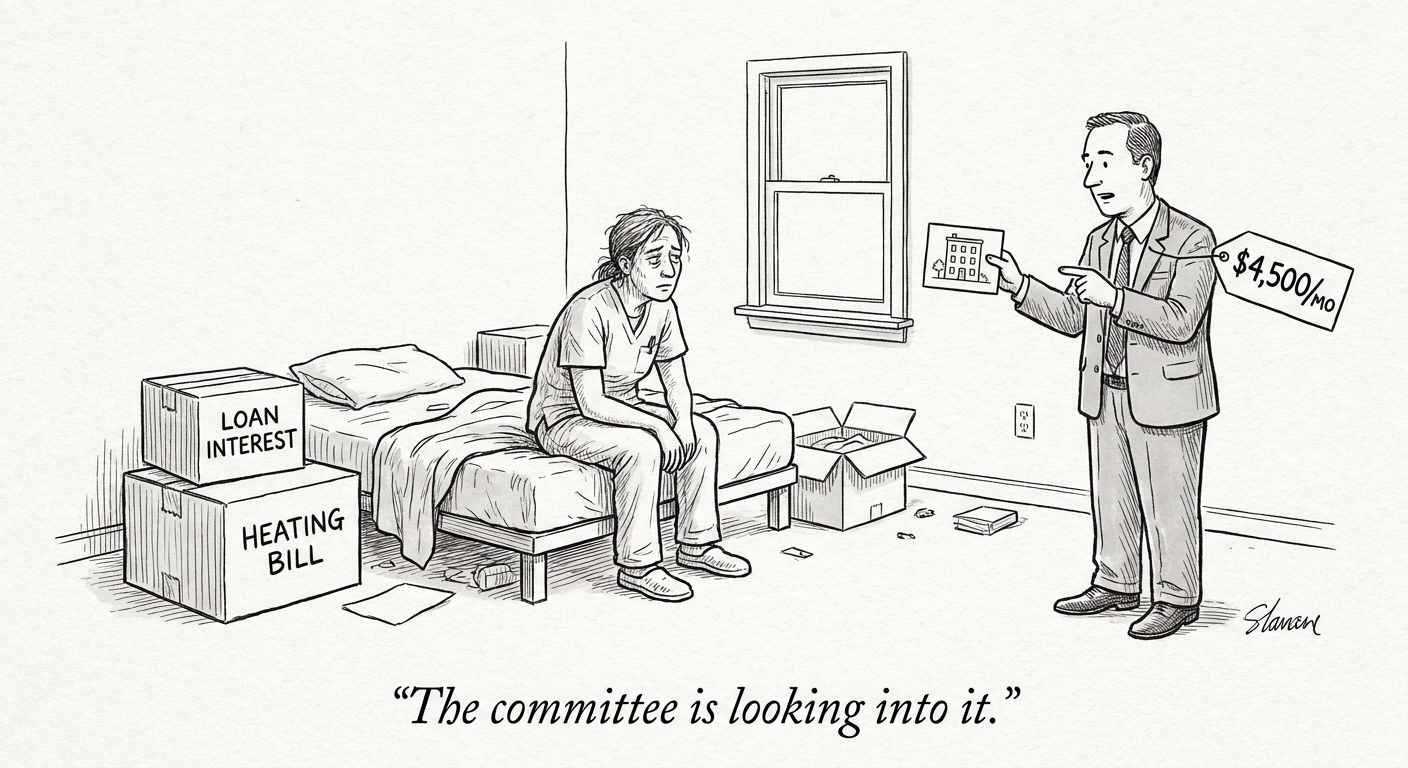

Several systemic drivers explain why health AI has outpaced governance. First, strong commercial incentives reward first movers and broad claims of clinical benefit. Second, a cultural appetite within health systems for efficiency gains and labor relief encourages fast adoption. Third, regulatory frameworks and procurement processes have lagged technical advances, creating ambiguity over liability, validation standards, and post-deployment monitoring. Together, these factors create an environment where imperfect models are integrated into care pathways and consumer channels before their limits are fully understood.

Call Out: Patient safety and momentum are in tension. Unchecked deployment of loosely validated models shifts risk from vendors to frontline clinicians and patients. Robust validation and ongoing surveillance must be treated as core investments, not optional features.

Comparing responses: regulation, critique, and media attention

Responses across stakeholders are diverging. Some regulators and oversight bodies are probing specific pilots and products, asking for data on validation, bias, and adverse outcomes. Health system leaders and clinicians are increasingly vocal about the need for independent evaluation and conservative deployment strategies. Meanwhile, investigative reporting has raised public awareness of concrete harms and gaps, which amplifies political and legal scrutiny. This combination of bottom-up clinician caution, top-down regulatory inquiry, and public scrutiny is forcing a recalibration of how health AI is procured and governed.

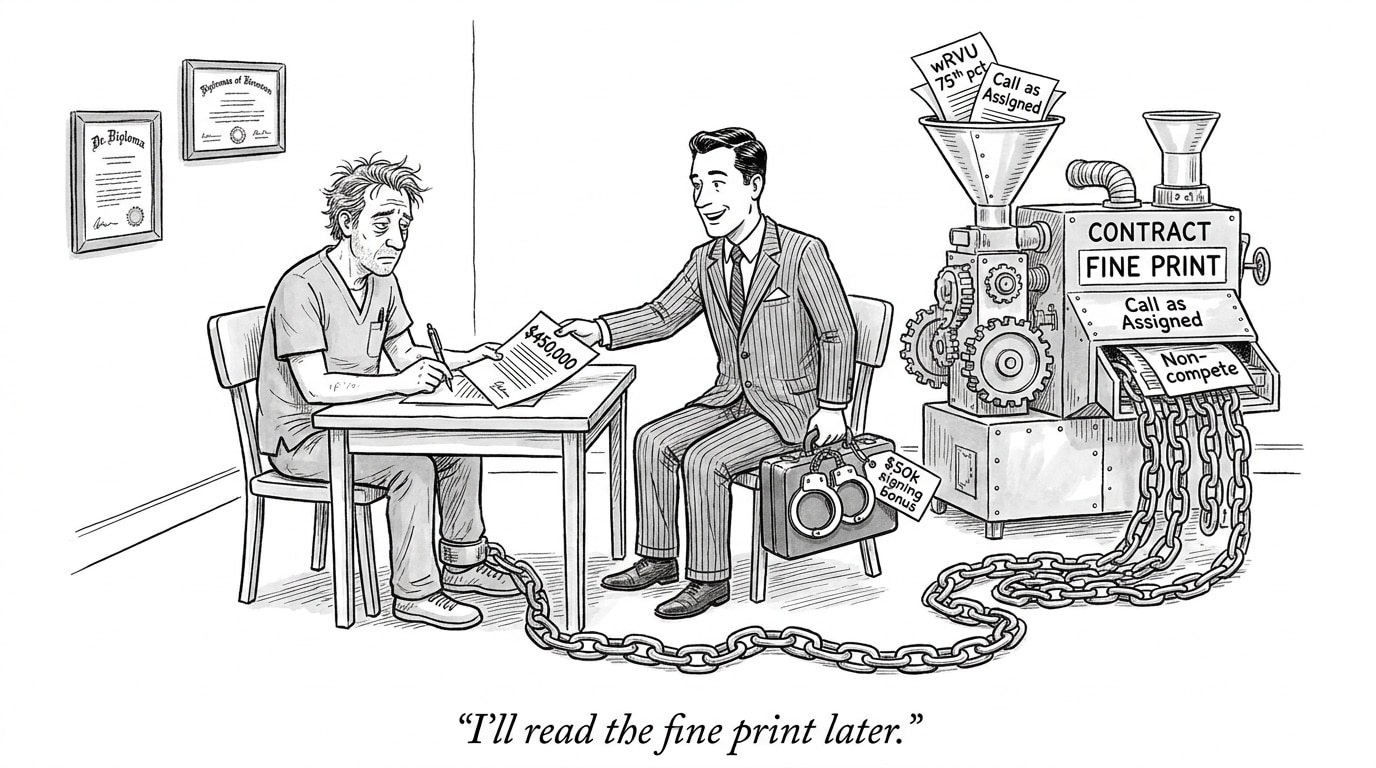

Call Out: Expect a new procurement playbook: explicit evidence thresholds, clinical risk classification, and post-market surveillance will be prerequisites for major deployments. Talent teams must value governance literacy alongside technical skills.

Practical course corrections for health systems and vendors

To move from reactive to proactive, organizations should adopt a layered strategy. First, require transparent, context-specific validation data: not only performance metrics but also documentation of the training data, known failure modes, and subgroup performance. Second, implement staged rollouts with active clinician oversight and mechanisms for rapid rollback when anomalies appear. Third, build continuous monitoring pipelines that capture both technical drift and clinical outcomes. Fourth, clarify accountability in contracts: who owns adverse outcomes, how liability is apportioned, and what remedial actions are mandated. Finally, invest in workforce training so clinicians and procurement teams can critically evaluate vendor claims and operationalize safeguards.
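
The staged-rollout and monitoring steps above can be sketched in code. What follows is a minimal, hypothetical Python illustration, not a standard or a vendor method. It assumes a model that emits risk scores between 0 and 1, a baseline score sample captured during validation, and clinician override counts as a rough proxy for clinical problems. The stage names, `StagedRollout`, `review_window`, and both thresholds are illustrative assumptions; the drift measure is the Population Stability Index (PSI), one common choice among several.

```python
import math
from dataclasses import dataclass, field

# Hypothetical rollout stages, from no clinical effect (shadow) to full deployment.
STAGES = ["shadow", "single_unit", "department", "system_wide"]


def population_stability_index(baseline, current, bins=10):
    """Population Stability Index between two samples of scores in [0, 1].

    Larger values mean the current score distribution has drifted further
    from the baseline captured during validation.
    """
    eps = 1e-6  # floor for empty bins so log() is never taken of zero

    def proportions(scores):
        if not scores:
            raise ValueError("score sample must be non-empty")
        counts = [0] * bins
        for s in scores:
            if not 0.0 <= s <= 1.0:
                raise ValueError(f"score out of range: {s}")
            counts[min(int(s * bins), bins - 1)] += 1  # 1.0 falls in the top bin
        return [max(c / len(scores), eps) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


@dataclass
class StagedRollout:
    baseline_scores: list
    psi_threshold: float = 0.2        # assumed drift threshold (rule of thumb)
    max_override_rate: float = 0.15   # assumed tolerance for clinician overrides
    stage_index: int = 0
    history: list = field(default_factory=list)

    @property
    def stage(self):
        return STAGES[self.stage_index]

    def review_window(self, window_scores, clinician_overrides, n_outputs):
        """Review one monitoring window; return "advance", "hold", or "rollback"."""
        psi = population_stability_index(self.baseline_scores, window_scores)
        override_rate = clinician_overrides / n_outputs if n_outputs else 0.0
        if psi > self.psi_threshold or override_rate > self.max_override_rate:
            # Anomaly: drop back to shadow mode so outputs no longer reach care.
            self.stage_index = 0
            action = "rollback"
        elif self.stage_index < len(STAGES) - 1:
            self.stage_index += 1
            action = "advance"
        else:
            action = "hold"  # already fully deployed and still within limits
        self.history.append((action, self.stage, round(psi, 4), override_rate))
        return action


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    rollout = StagedRollout(baseline_scores=baseline)
    print(rollout.review_window(baseline, clinician_overrides=3, n_outputs=100))  # advance
    print(rollout.stage)  # single_unit
    print(rollout.review_window([0.95] * 100, clinician_overrides=2, n_outputs=100))  # rollback
    print(rollout.stage)  # shadow
```

A real pipeline would also track clinical outcomes, subgroup performance, and more than one drift metric. The design point of the sketch is that rollback criteria are written down in advance and checked on every monitoring window, rather than left to ad hoc judgment after something goes wrong.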

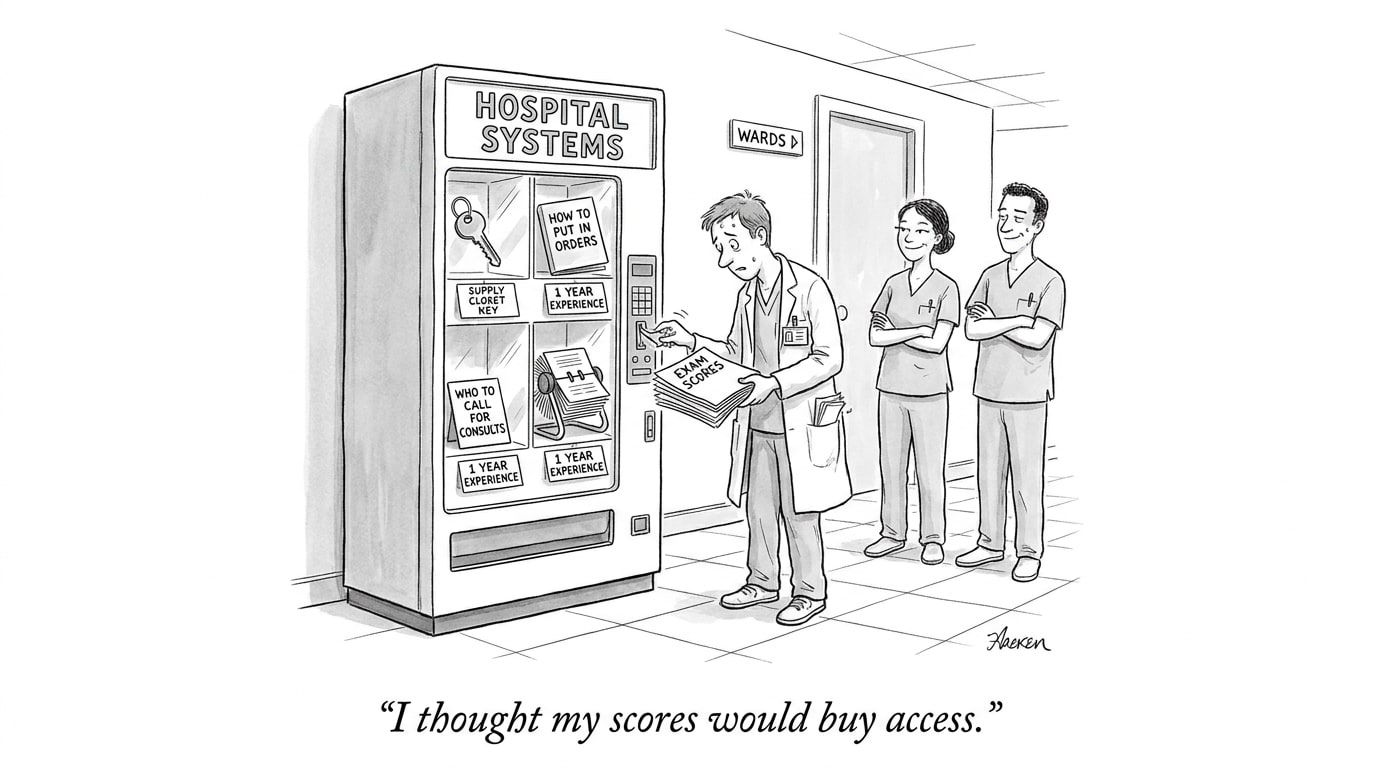

Implications for healthcare recruiting and workforce strategy

The evolving risk landscape changes the profile of talent health systems need. Beyond data scientists and ML engineers, organizations must hire or upskill professionals in AI governance, clinical safety engineering, post-deployment monitoring, and regulatory compliance. Job descriptions should explicitly require experience with real-world evidence generation, risk assessment frameworks, and cross-functional implementation — skills that bridge clinical, technical, and legal domains. For vendors, recruiting must emphasize clinical validation expertise and transparency practices to regain buyer trust.

Conclusion: rebuilding trust through governance

The current phase of AI in healthcare appears likely to produce a corrective moment: one driven by regulatory scrutiny, critical reviews, and high‑visibility failures. That correction can be constructive if it raises the bar for evidence, accountability, and operational controls. For organizations that anticipate and implement rigorous governance, the moment is an opportunity to differentiate on safety and credibility rather than speed to market. For recruiting leaders and workforce planners, the priority is clear: source talent that treats governance as a first-class competency and embeds monitoring and validation into the lifecycle of every deployed model.

Sources

Misuse of AI chatbots in health care tops 2026 Health Tech Hazard Report – Health Journalism

The Coming Clinical Correction: Why Health Care Needs Its AI Bubble To Burst – Health Affairs

The FDA questions underlying Utah’s AI prescription pilot – STAT