Why this theme matters now

The last 18 months have seen regulators, privacy officials, and industry groups converge on a shared realization: clinical and operational AI cannot be treated as experimental software. Pressure from health authorities and privacy commissioners reflects mounting evidence that AI systems affect diagnosis, treatment, patient privacy, and organizational liability. As vendors accelerate model releases and health systems embed AI into workflows, the window to put durable governance in place is closing. Organizations that move from reactive checklists to integrated governance will reduce risk and unlock real operational value.

Regulatory patchwork and emergent guardrails

Regulation is forming along multiple axes—privacy, product safety, and institutional accountability—rather than through a single harmonized law. Privacy authorities emphasize risk assessments and data-handling controls for models trained on patient data or that generate sensitive inferences. Health regulators and agencies responsible for medical devices are increasingly treating high-risk clinical decision support tools with device-like expectations for validation, transparency, and post-market surveillance. At the institutional level, guidance is pushing for governance that spans procurement, deployment, monitoring, and clinician oversight.

That multiplicity of frameworks creates complexity: compliance is not a single checkbox but a set of interoperable controls. For instance, a health system deploying an AI triage tool must complete privacy impact assessments, demonstrate clinical validity to oversight bodies, and maintain contractual controls over vendors. Organizations that design governance to map these overlapping obligations will be better positioned to avoid enforcement actions and patient harm.

Operationalizing compliance: from policy to practice

Translating regulatory expectations into daily operations requires three capabilities: risk stratification, evidence pipelines, and continual monitoring. Risk stratification determines which models require the strictest controls based on clinical impact and data sensitivity. Evidence pipelines collect and version datasets, document model performance across subpopulations, and create audit trails that mirror regulatory evidence requirements. Continual monitoring uses telemetry and outcome tracking to detect performance drift and emergent biases once models are live.
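To make risk stratification concrete, here is a minimal Python sketch. It assumes each model is scored on the two dimensions named above (clinical impact and data sensitivity) and takes the worse of the two as its tier. The names `Level`, `ModelProfile`, `risk_tier`, and the `CONTROLS` table are hypothetical illustrations, not drawn from any regulation or standard; a real program would define its own tiers and control sets.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3


@dataclass(frozen=True)
class ModelProfile:
    name: str
    clinical_impact: Level   # how directly outputs influence diagnosis or treatment
    data_sensitivity: Level  # how sensitive the data used or inferred is


# Hypothetical control sets per tier; an organization would substitute its own.
CONTROLS = {
    Level.LOW: ["vendor attestation", "annual review"],
    Level.MODERATE: ["privacy impact assessment", "quarterly performance review"],
    Level.HIGH: [
        "privacy impact assessment",
        "independent clinical validation",
        "continuous monitoring",
        "review board sign-off",
    ],
}


def risk_tier(model: ModelProfile) -> Level:
    """Assumed conservative rule: the tier is the worse of the two dimensions."""
    return max(model.clinical_impact, model.data_sensitivity)


def required_controls(model: ModelProfile) -> list[str]:
    """Look up the controls that apply to the model's tier."""
    return CONTROLS[risk_tier(model)]


if __name__ == "__main__":
    triage = ModelProfile("triage-assistant", Level.HIGH, Level.MODERATE)
    print(risk_tier(triage).name, required_controls(triage))
```

Taking the maximum of the two scores is only one possible design choice. It errs toward stricter controls when either dimension is high, which may suit clinical settings but could over-burden purely administrative tools.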

Technologies can assist, but organizational processes matter most. Cross-functional review boards that include clinicians, privacy officers, legal, and IT—backed by clear decision rights—shorten approval cycles while ensuring accountability. Procurement contracts must mandate access to model documentation and rights to audit. And incident response playbooks should integrate reporting to regulators when thresholds for patient safety or privacy are met.

Governance is operational: mapping models to risk, baking evidence collection into the development lifecycle, and running continuous post-deployment surveillance are non-negotiable components of a compliant AI program.
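As a rough illustration of post-deployment surveillance, the sketch below compares live accuracy per subpopulation against a validation baseline and flags any subgroup that has degraded beyond a tolerance. The `Outcome` record, the subgroup labels, and the 0.05 tolerance are assumptions made for the example. A production system would likely track additional metrics (calibration, sensitivity, specificity) and use statistically grounded thresholds.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class Outcome(NamedTuple):
    subgroup: str    # illustrative label, e.g. an age band or care site
    predicted: bool  # what the model recommended
    actual: bool     # what was later confirmed


def accuracy_by_subgroup(outcomes: Iterable[Outcome]) -> dict[str, float]:
    """Compute the fraction of correct predictions within each subgroup."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for o in outcomes:
        total[o.subgroup] += 1
        correct[o.subgroup] += int(o.predicted == o.actual)
    return {group: correct[group] / total[group] for group in total}


def drift_alerts(
    baseline: dict[str, float],
    live: dict[str, float],
    tolerance: float = 0.05,  # assumed threshold, chosen only for illustration
) -> list[str]:
    """Return subgroups whose live accuracy fell more than `tolerance` below baseline."""
    return sorted(
        group
        for group, acc in live.items()
        if group in baseline and baseline[group] - acc > tolerance
    )


if __name__ == "__main__":
    live_outcomes = [
        Outcome("site-A", True, True),
        Outcome("site-A", False, False),
        Outcome("site-B", True, False),
        Outcome("site-B", True, True),
    ]
    baseline = {"site-A": 0.95, "site-B": 0.90}
    print(drift_alerts(baseline, accuracy_by_subgroup(live_outcomes)))
```

An alert from a check like this would feed the incident response and escalation paths described above; the code only detects the deviation and does not decide what to do about it.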

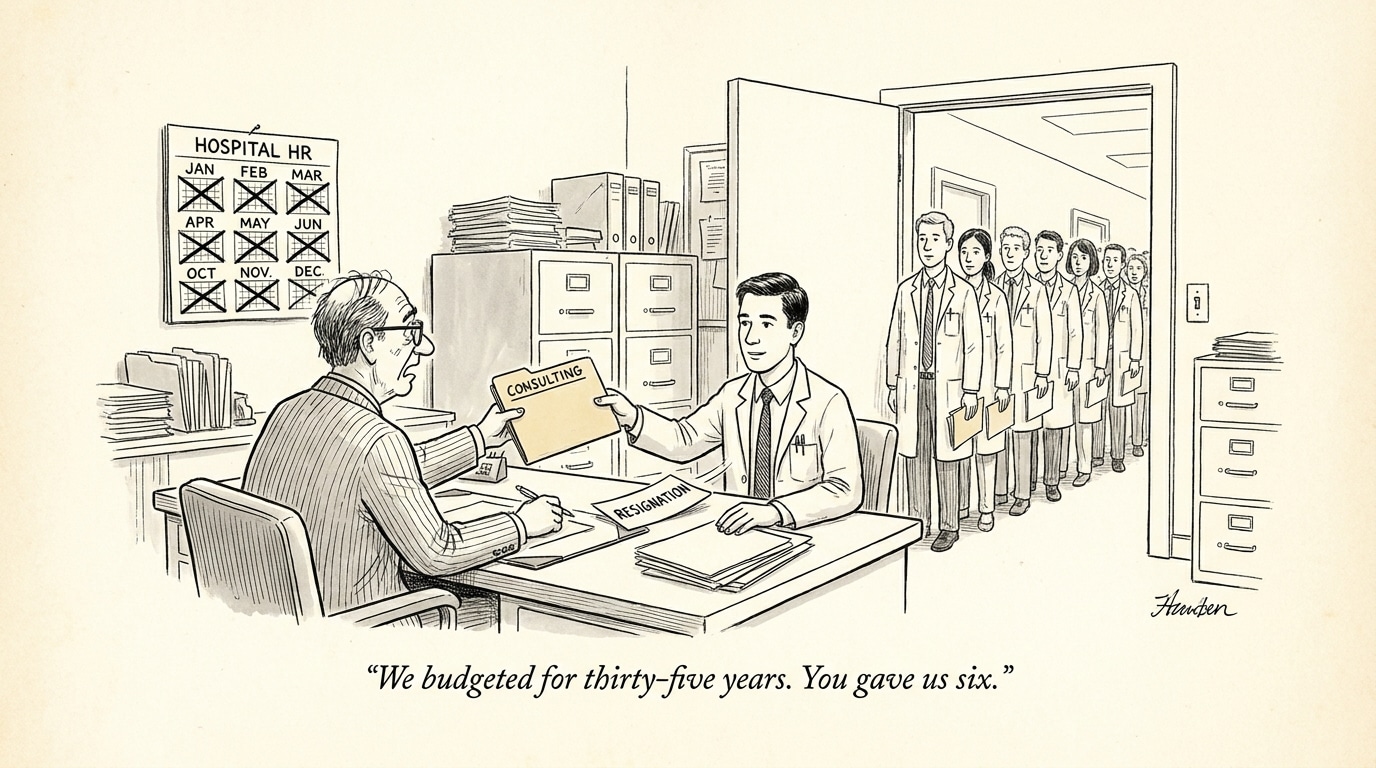

Legal risk, vendor management, and liability allocation

Legal exposure shifts as AI moves from pilot to production. Potential liabilities include regulatory sanctions, malpractice claims tied to clinical recommendations, and privacy enforcement for improper data use. Contractual terms (warranties, indemnities, audit rights, and data stewardship clauses) become primary tools for transferring and managing risk with vendors. However, contracts alone are insufficient: organizations must verify vendor claims through independent validation and maintain the capability to roll back or disable systems that deviate from expected performance.

Risk allocation also influences procurement strategy. For high-risk clinical applications, organizations may demand higher transparency, reproducibility, and access to model internals; for lower-risk administrative tools, standard vendor assurances might suffice. Legal and compliance teams therefore need tiered contract templates aligned to the organization’s risk framework.

Embedding audit rights, performance guarantees, and data provenance clauses into vendor contracts is essential—but equally important is the internal capability to validate and enforce those contractual commitments.

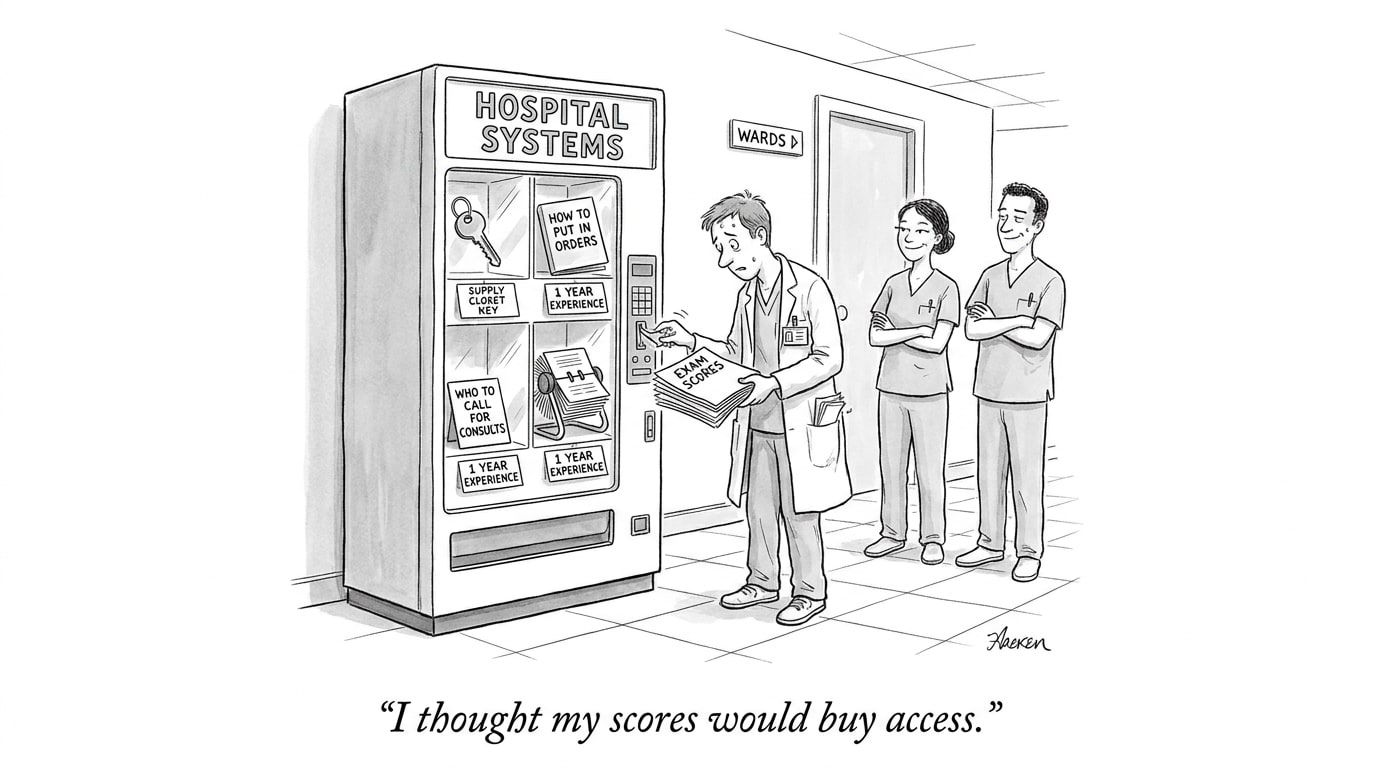

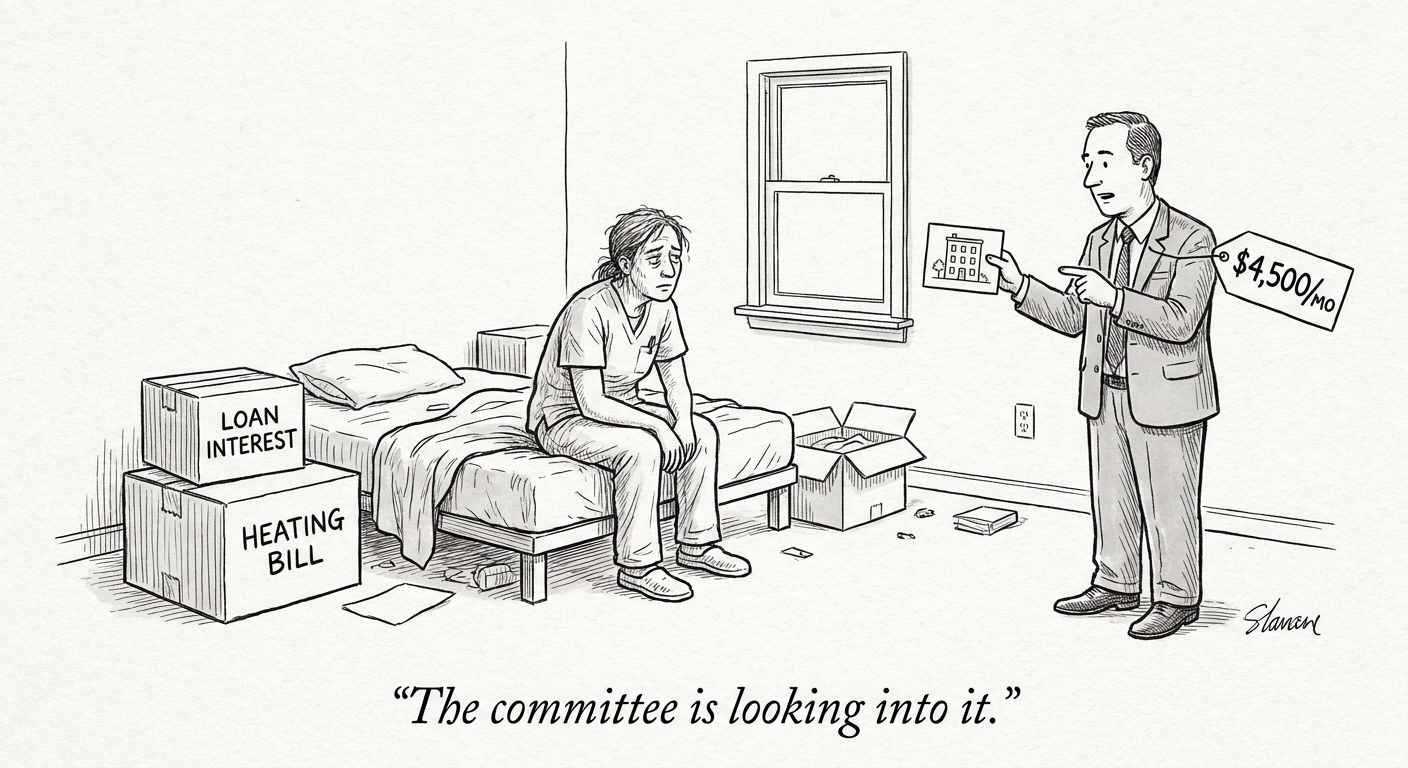

Workforce and recruiting implications for health systems

Robust governance demands new roles and new skills. Health systems will need clinical AI stewards, model risk officers, data governance leads, and privacy liaisons who can operate at the intersection of care delivery, data science, and regulation. Recruiting for these roles requires candidates who understand both clinical workflows and regulatory expectations, not just technical expertise.

For recruiters and job boards in the healthcare AI space, this trend alters demand signals: employers increasingly prioritize experience with regulatory evidence generation, clinical validation studies, and operational monitoring. Platforms such as PhysEmp that surface candidates with hybrid experience (clinical domain knowledge plus regulatory or implementation expertise) will be more valuable to hiring managers aiming to scale AI safely.

Implications for the industry and next steps

Healthcare organizations should move from ad hoc AI projects to a programmatic approach that links policy, technical controls, and legal safeguards. Immediate steps include: establishing a risk-tiering framework for AI, creating an evidence pipeline for model validation, revising procurement standards to require transparency and auditability, and building cross-disciplinary governance bodies with clear escalation paths.

Regulators are signaling that enforcement will follow guidance, so waiting for final laws is a risky strategy. Organizations that build governance now will not only reduce legal exposure but also accelerate adoption by giving clinicians and patients credible assurances that AI is safe, fair, and reliable.
