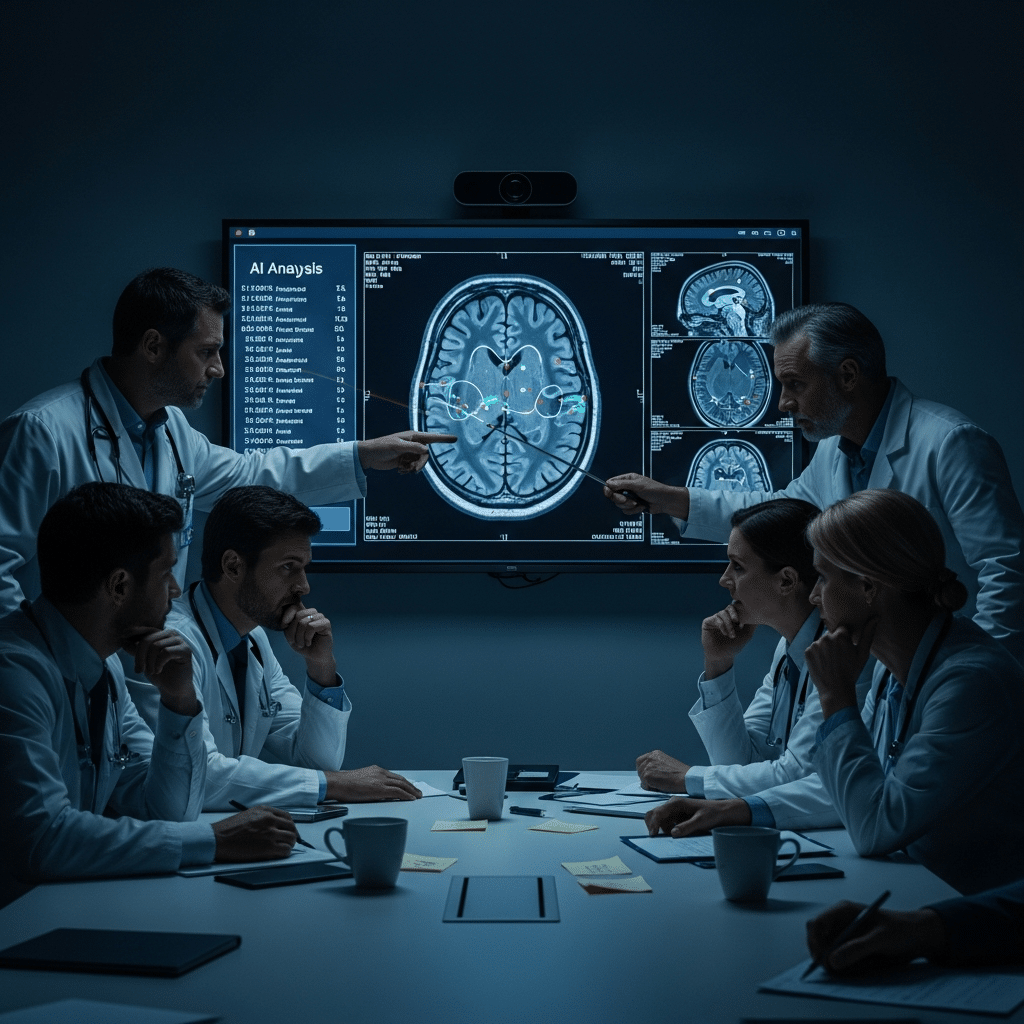

Why this theme matters now

AI systems are moving from experimental pilots into everyday clinical workflows at unprecedented speed. Recent reports have exposed instances where high-performing models produced dangerous or nonsensical clinical and legal advice, and regulators have begun treating early deployments as evidentiary tests rather than demonstrations. That combination creates a clear inflection point: organizations must strengthen governance and validation standards for AI in clinical practice or accept a market correction that slows adoption.

Failure modes: hallucinations, calibration, and scope mismatch

Modern language and prediction models can appear authoritative while being factually wrong. These failure modes take several forms: hallucinations (fabricated facts or instructions), miscalibrated confidence (highly confident but incorrect outputs), and domain mismatch (models trained on broad data sets failing in specialized clinical contexts). When a model trained for general-purpose language or broad clinical patterns is asked to provide individualized diagnostic, prescribing, or medicolegal advice, the chance of harmful error rises. In high-stakes settings, false authority is more dangerous than silence.

Call Out: Systems that optimize for apparent competence rather than verifiable accuracy increase risk. Clinical deployments must prioritize measurable safety metrics and calibrated uncertainty over benchmark scores that reward fluency.
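Miscalibrated confidence can be measured rather than only described. One common summary is expected calibration error (ECE), which bins predictions by stated confidence and compares each bin's average confidence with its observed accuracy. The following is a minimal sketch, not a validated clinical metric: the function name, the equal-width binning, and the default of 10 bins are illustrative assumptions, and it presumes each model output comes with a confidence score in [0, 1] and a reviewer-assigned correct/incorrect label.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,  # assumption: equal-width bins; other schemes exist
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("at least one prediction is required")
    if any(c < 0.0 or c > 1.0 for c in confidences):
        raise ValueError("confidences must lie in [0, 1]")

    # Group (confidence, outcome) pairs into equal-width confidence bins.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the top bin
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        # Each bin contributes its confidence/accuracy gap, weighted by its share.
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


# A model that claims 95% confidence but is right one time in four:
overconfident = expected_calibration_error(
    [0.95, 0.95, 0.95, 0.95], [True, False, False, False]
)
```

A fluent but unreliable model scores badly here even if its answers read well, which is the gap between apparent competence and verifiable accuracy described above.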

Regulatory friction: pilots as public proof tests

Regulators are shifting from permissive observation to active interrogation. When a jurisdiction treats a pilot as a de facto trial — requesting underlying evidence, study protocols, and failure-mode analyses — it signals a new bar for acceptability. Developers and health systems can no longer rely on cherry-picked performance demos; they will need reproducible validation, transparent data provenance, and post-deployment monitoring to persuade regulators that benefits outweigh harms.

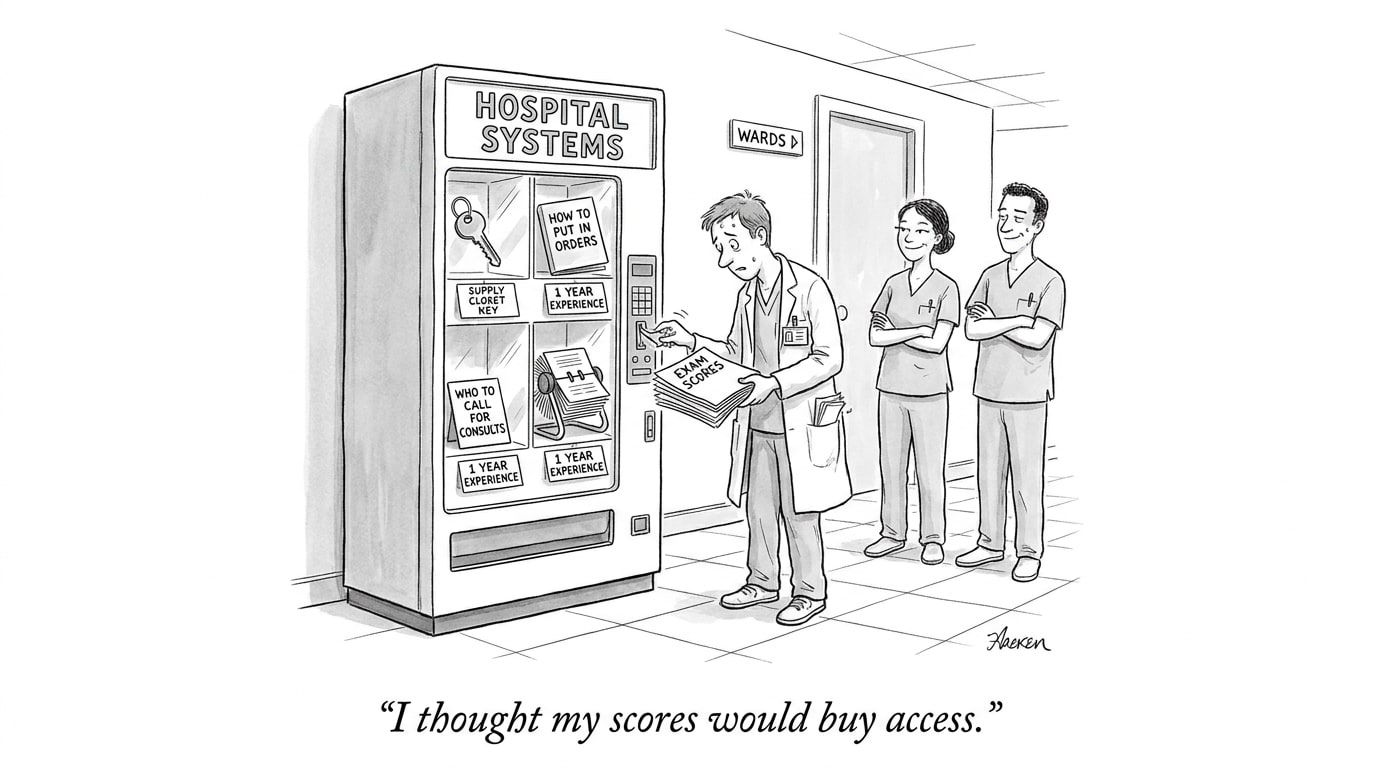

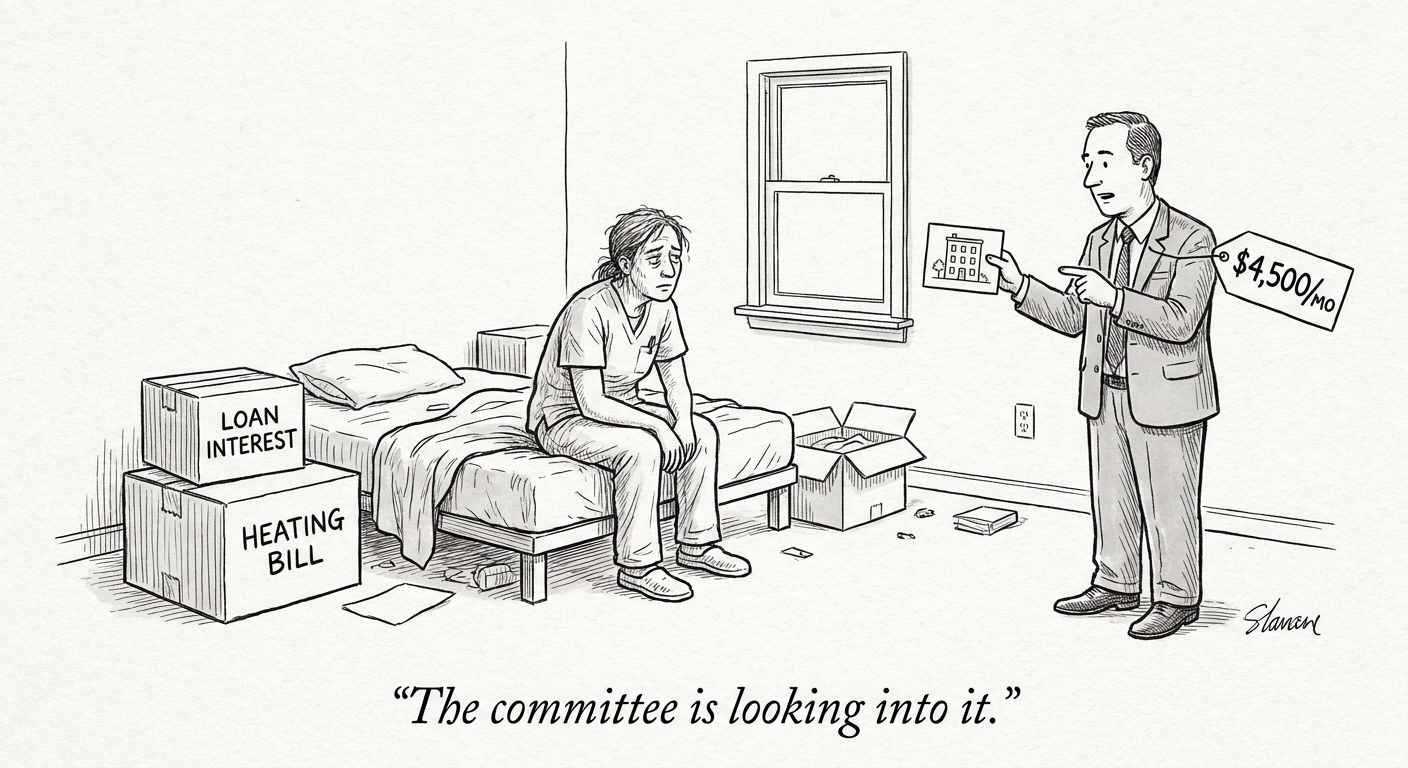

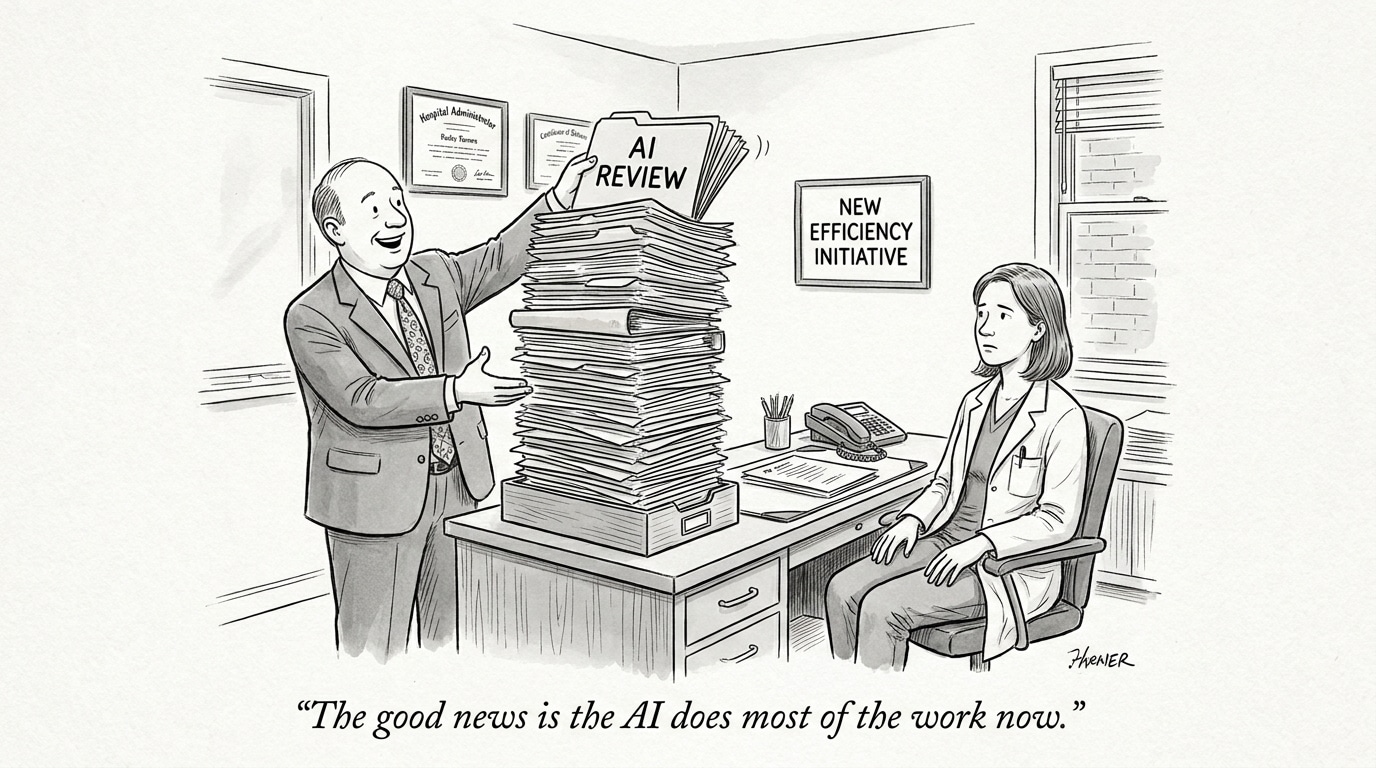

Market incentives that drive premature deployment

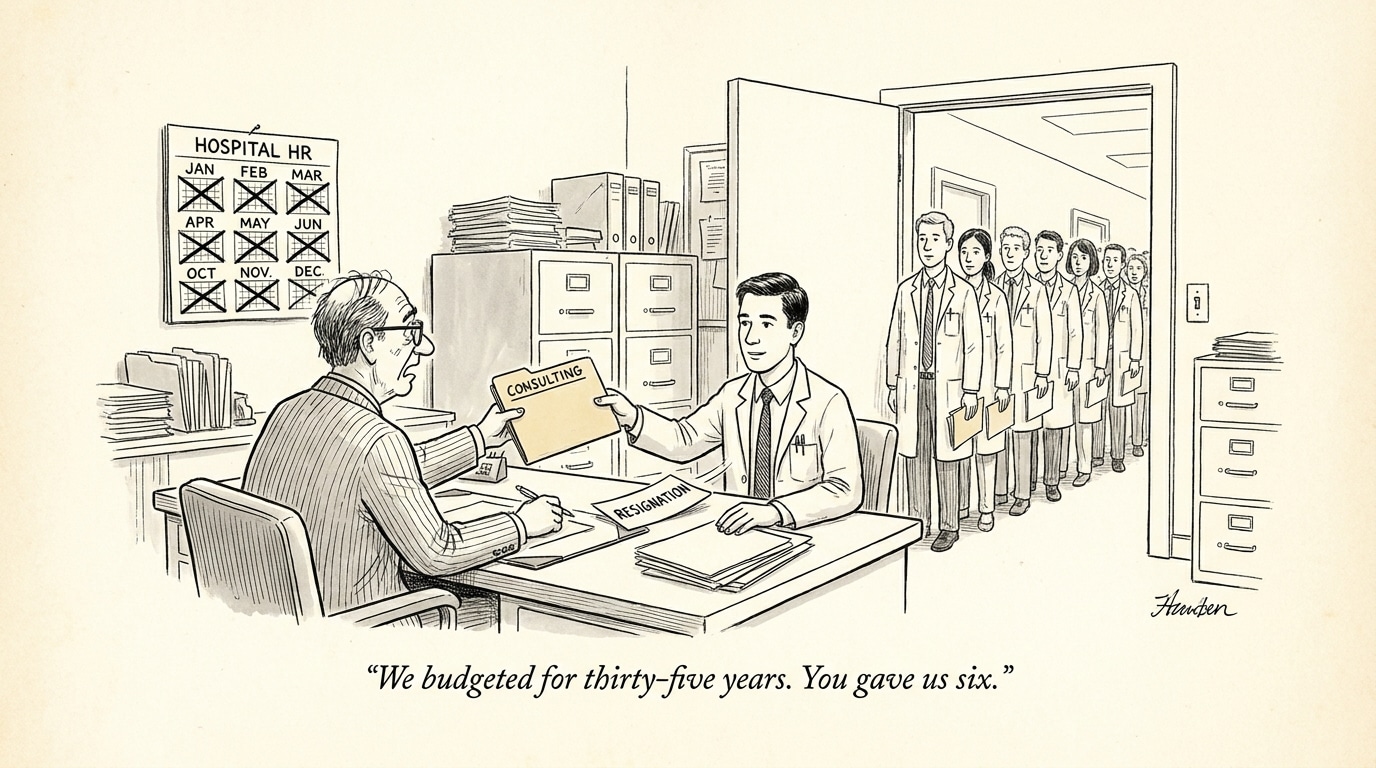

Financial and competitive pressures push vendors and health systems toward early rollouts: venture timelines, workforce shortages, and the race for differentiation make “ship fast” strategies tempting. But when adoption is driven by market signaling rather than rigorous clinical validation, the models most visible to users may be those that communicate persuasively, not those that are safest. This misalignment can accelerate a market correction: as high-profile failures accumulate, trust and downstream investments can evaporate quickly.

What a sensible correction looks like

A constructive correction is not a rollback of all innovation; it’s a rebalancing that forces better evidence and stronger governance before broad clinical use. Key elements include: well-designed prospective studies with clinically meaningful endpoints; standardized safety benchmarks that test hallucination rates, calibration, and edge-case behavior; requirements for transparent reporting of training data and validation cohorts; and robust real-world monitoring tied to rapid remediation protocols.
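As one illustration of monitoring tied to remediation, a deployment could audit a sample of outputs and escalate only when the evidence shows the error rate exceeds an agreed tolerance. The sketch below uses a Wilson score interval for that check. The function names, the 95% z-value, and the 5% tolerance are hypothetical choices for illustration, not a regulatory or clinical standard, and it assumes clinician reviewers have already labeled each sampled output as erroneous or not.

```python
import math
from typing import Tuple


def wilson_interval(errors: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for an error rate (z=1.96 is roughly 95%)."""
    if n <= 0 or errors < 0 or errors > n:
        raise ValueError("need 0 <= errors <= n and n > 0")
    p = errors / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the edges.
    return max(0.0, center - half), min(1.0, center + half)


def should_escalate(errors: int, n: int, max_rate: float = 0.05) -> bool:
    """Hypothetical rule: escalate when even the interval's lower bound
    exceeds the tolerated error rate (max_rate is an assumed threshold)."""
    lower, _ = wilson_interval(errors, n)
    return lower > max_rate


# 30 flagged outputs in 100 audited cases clearly exceeds a 5% tolerance;
# 6 in 100 is above 5% on its face but not yet statistically distinguishable.
clear_breach = should_escalate(30, 100)
borderline = should_escalate(6, 100)
```

A stricter policy could instead escalate whenever the upper bound exceeds the tolerance, which favors caution when the audited sample is small; which rule fits is a governance decision rather than a statistical one.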

Call Out: A pragmatic market correction requires shifting incentives — reimbursements, procurement, and clinical acceptance — toward systems that demonstrate validated safety and continuous oversight rather than marketing-driven performance claims.

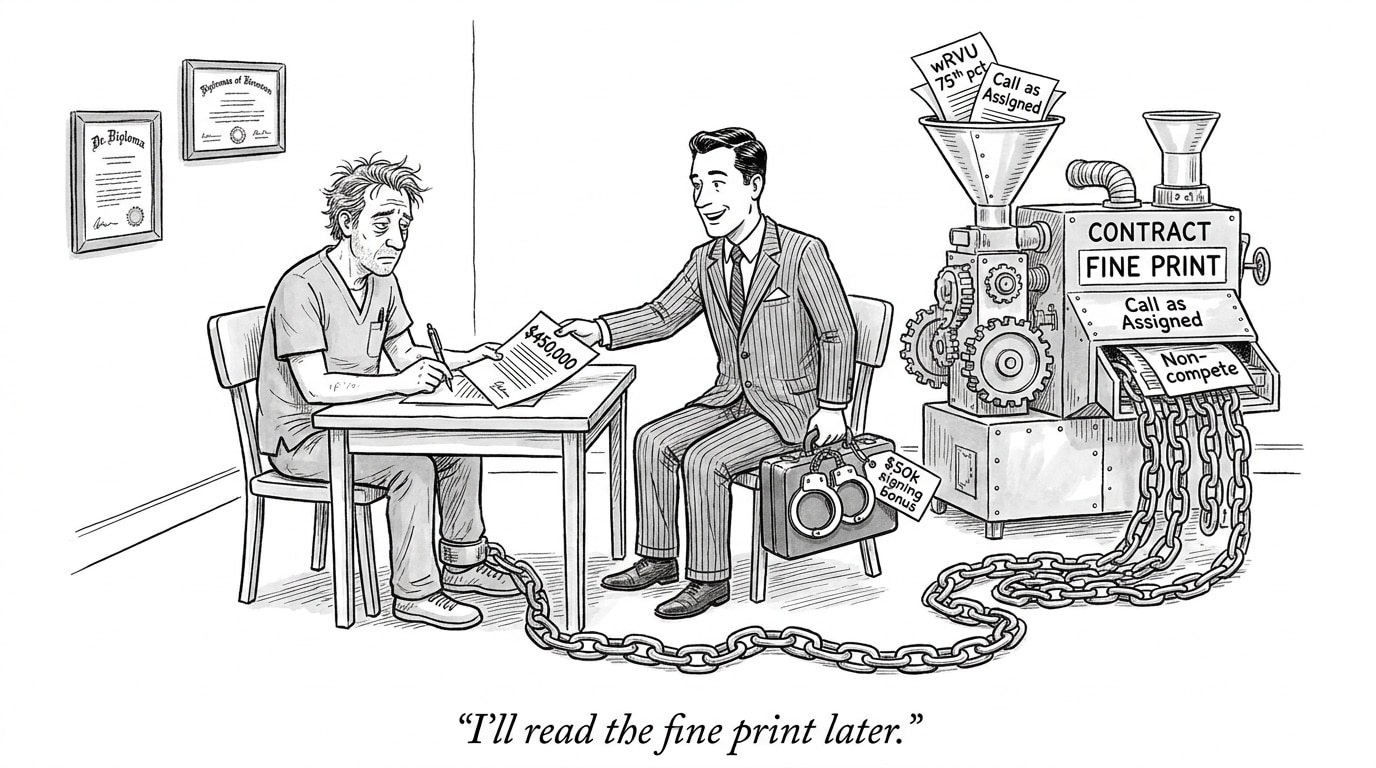

Operational levers for health systems and employers

Health systems, payers, and recruiting leaders must translate governance into operational change. That includes building multidisciplinary validation teams (clinical, data science, legal, and safety engineering), instituting staged rollouts with human-in-the-loop controls, and insisting on contractual rights to audit models and roll back deployments. From a talent perspective, organizations will need to hire for roles that did not exist en masse a few years ago: clinical AI safety officers, model evaluators, post-market surveillance analysts, and clinicians trained in AI governance.

Implications for the healthcare industry and recruiting

The near-term implication is twofold. First, procurement and adoption will slow as buyers demand stronger evidence and enforceable contractual terms. Second, the labor market will bifurcate: demand will rise for people who can operationalize AI safety, while roles that treat models as plug-and-play tools will meet growing skepticism. For recruiters and job boards focused on the intersection of clinical talent and AI capability, this means curating candidates with demonstrable experience in validation studies, regulatory submissions, and cross-functional governance. Platforms that surface such profiles and educate employers about those competencies will be better positioned in a corrective market.

Conclusion: trust, risk, and governance must lead

The current moment is less a technology failure than a market signal: the industry is being forced to reconcile capability with accountability. A painful but orderly correction — one that raises validation standards, aligns incentives, and builds new governance roles in care delivery — will ultimately preserve the promise of AI in medicine. Absent that correction, the pace of high-stakes adoption risks undermining trust and slowing the very progress AI proponents seek.

Sources

The Coming Clinical Correction: Why Health Care Needs Its AI Bubble To Burst – Health Affairs

Top-scoring AI models in Norway caught giving deadly medical, gibberish legal advice – CyberNews

The FDA questions underlying Utah’s AI prescription pilot – STAT