Why this theme matters now

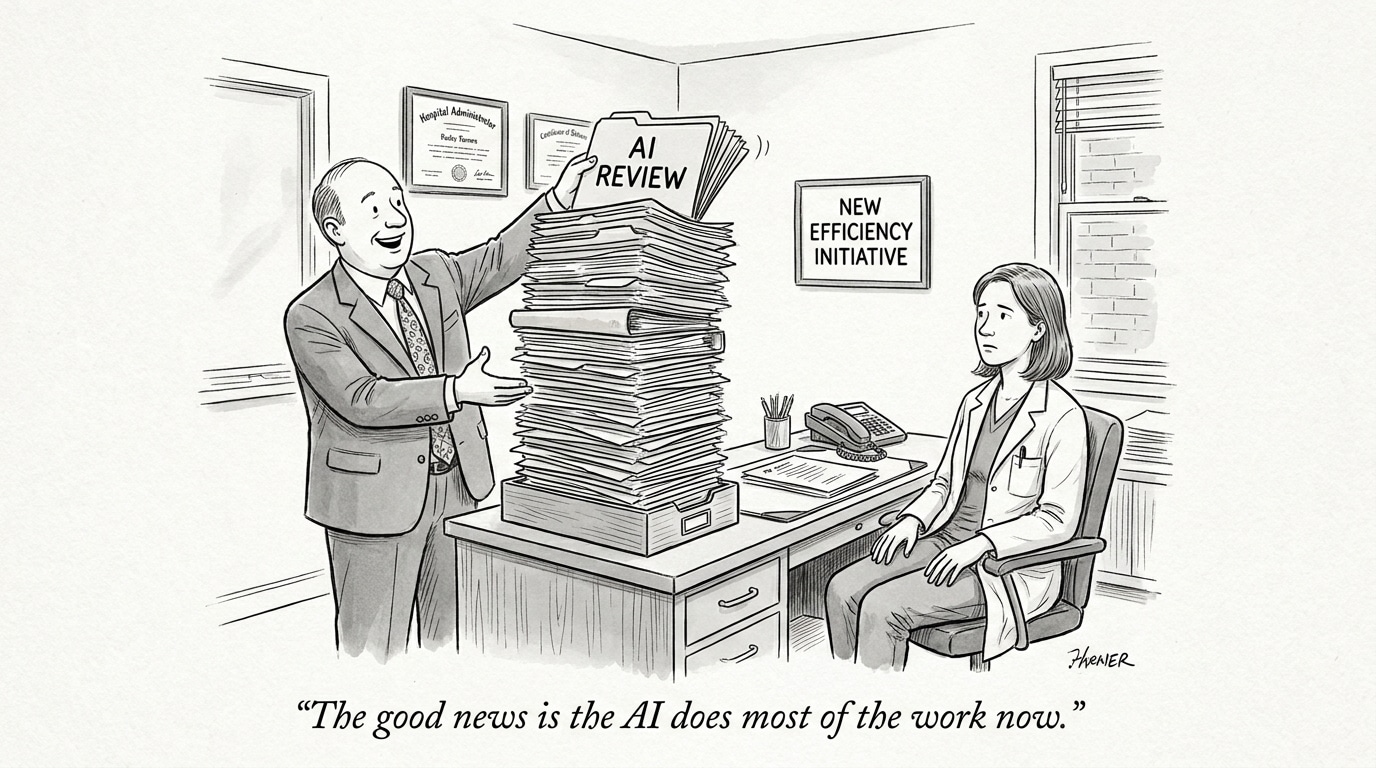

The rapid rollout of AI-driven recruitment tools has begun to reshape how employers find and filter talent, and how candidates find work. What started as automation to reduce administrative friction has evolved into opaque decision layers that can surface, bury, or block candidates before human eyes ever evaluate them. In physician recruiting and staffing, candidate quality, regulatory compliance, and timing are mission-critical. These shifts therefore raise operational, legal, and ethical questions that recruiters and healthcare leaders can no longer treat as peripheral, and they underscore the growing impact of AI on healthcare workforce systems and hiring infrastructure.

AI moves the funnel earlier: discovery, ranking, and invisibility

Modern hiring platforms do more than accept applications; they predict which openings a person might consider, which listings to show at the top of a feed, and which candidates to promote to employers’ dashboards. That pre-click personalization can improve relevance for many users but also creates invisible paths: candidates who don’t match opaque model signals never surface to the right roles, and employers never know what or whom they missed. For physician roles—where active searches are often time-sensitive and depend on licenses, specialties, and geographic constraints—this pre-filtering can quietly exclude qualified clinicians who simply don’t match a platform’s statistical profile.

Analytical implication

Recruiters must treat platform-driven discovery as a new layer of sourcing risk. Reliance on a single marketplace or algorithmic feed increases the chance that high-value candidates are never presented. Diversifying sourcing channels and insisting on transparent ranking signals will be essential to avoid inadvertent talent loss.

Call Out: AI triaging can save time but also create blind spots—especially for niche physician roles. Healthcare recruiters should assume candidate invisibility is a live risk and design sourcing processes that surface talent outside algorithmic defaults.

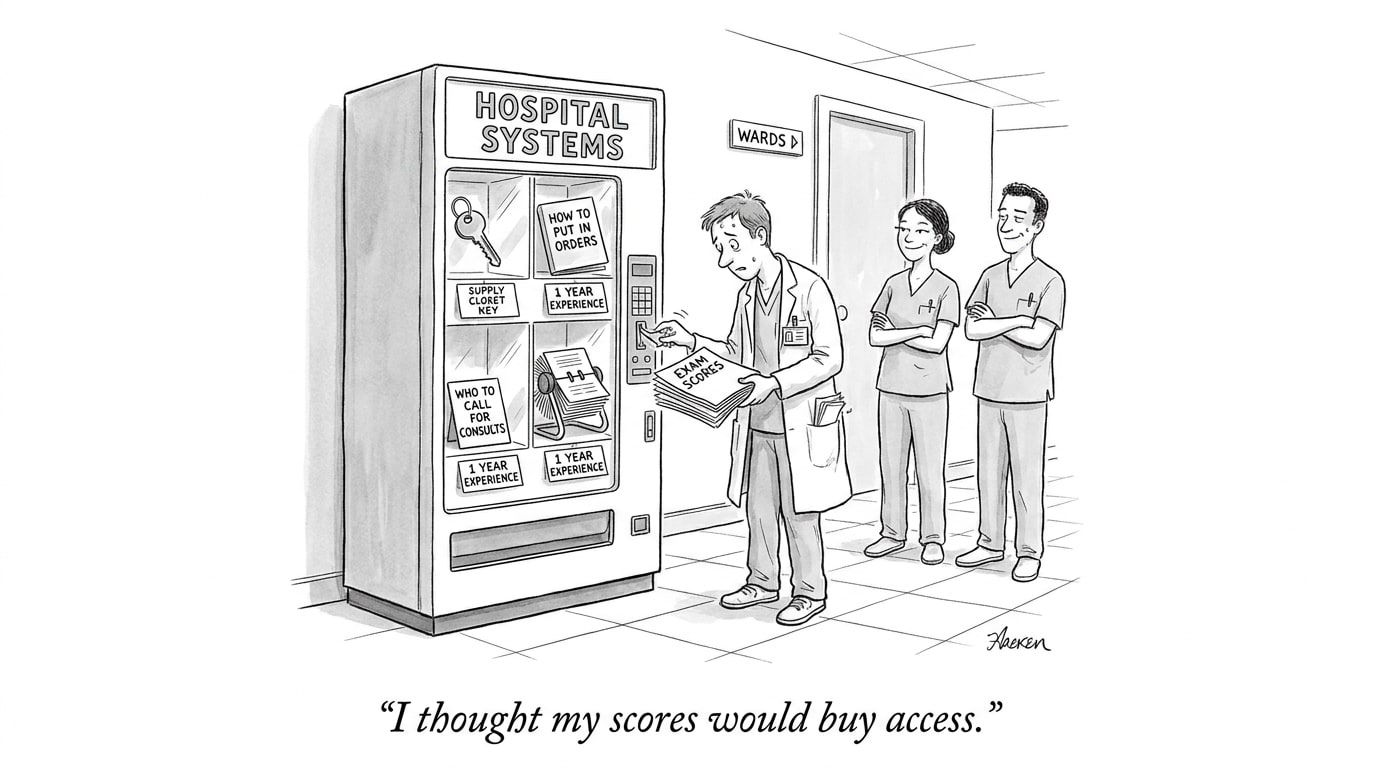

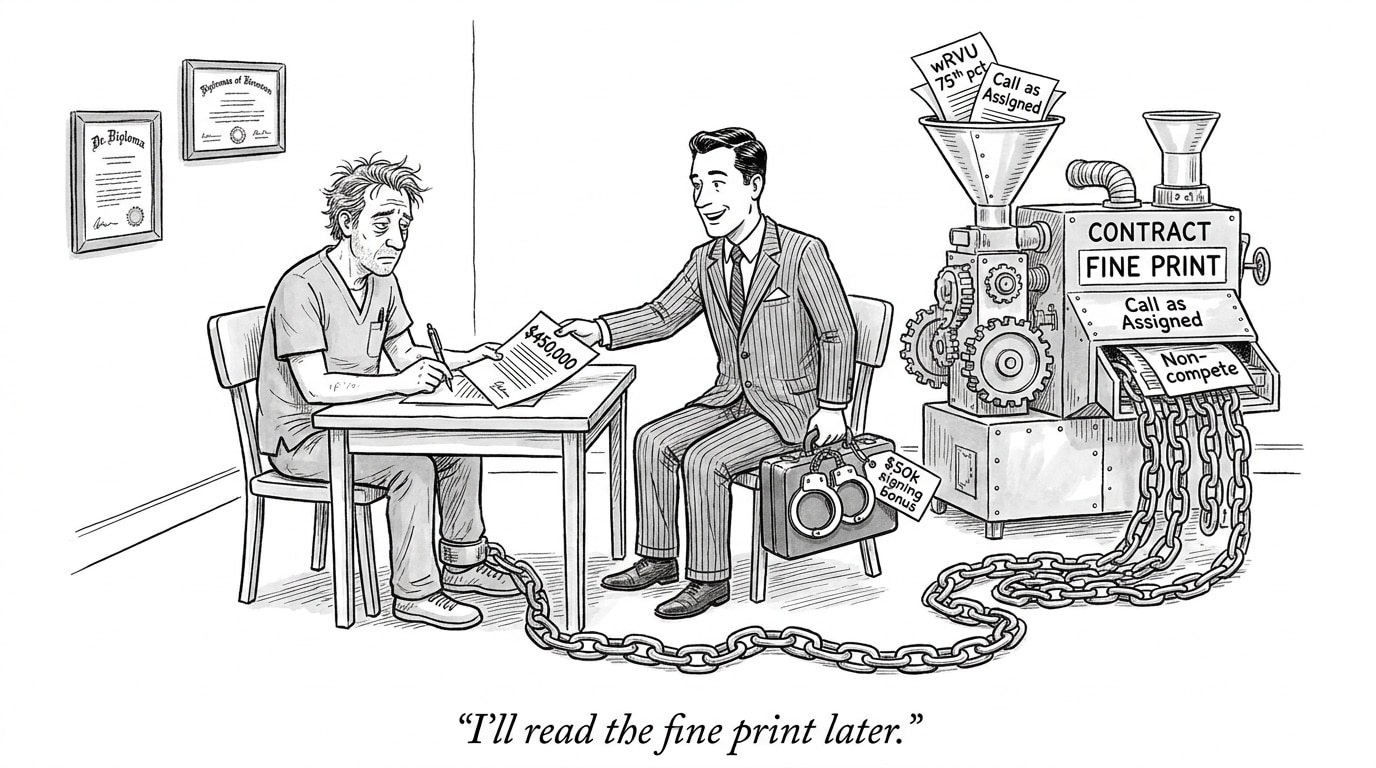

Opaque decisioning and legal exposure

Opacity is not just an inconvenience; it is an emergent legal and reputational risk. Lawsuits and regulatory attention are now testing whether algorithmic design or platform behavior can be treated as sabotaging job seekers or enabling discriminatory outcomes. For organizations that hire via third-party platforms, questions about accountability quickly surface: if a platform’s model reduces a qualified physician’s visibility because of proxy variables correlated with protected traits, who is responsible—the platform, the employer, or both?

Analytical implication

Healthcare systems and staffing firms must integrate vendor governance into hiring compliance. Contract clauses requiring fairness testing, model explainability, and remedial mechanisms are not optional; they’re risk mitigators. Staffing decisions for physicians carry quality-of-care and legal stakes that make defensive due diligence mandatory.

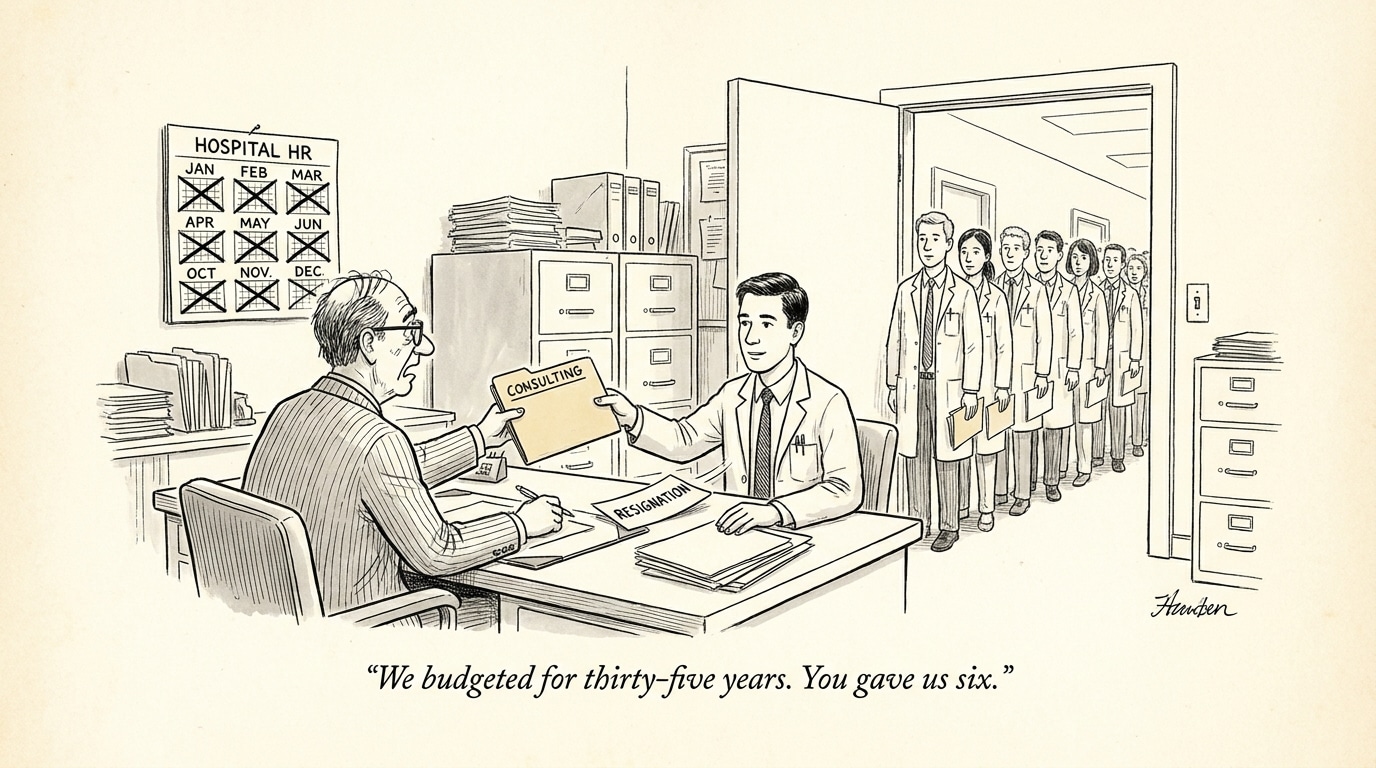

Market distortions: two-sided strain and efficiency illusions

AI-driven hiring platforms can create feedback loops that appear efficient while straining both sides of the market. Employers receive concentrated candidate lists optimized for short-term engagement metrics; candidates see highly curated lists that bias their perceptions of demand. The result is misaligned expectations, faster rejections, and more hidden re-sourcing activity: friction that looks like speed but can lengthen true time-to-fill in specialized markets such as physician staffing.

Analytical implication

Operational metrics must evolve. Time-to-hire and application-to-offer ratios still matter, but so do “discovery coverage” (the share of relevant candidates actually exposed to a role), auditability of ranking logic, and quality-adjusted fill rates. Employers that rely solely on platform-reported efficiency gains may be blind to growing long-tail sourcing costs.
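As a rough illustration, discovery coverage can be computed from two candidate lists: the clinicians a recruiter considers relevant to a role, and the clinicians a platform actually exposed to it. The sketch below is a minimal example under stated assumptions. The function names and candidate identifiers are hypothetical, and it assumes the recruiter can assemble the relevant pool independently of the platform (for example, from licensing data or direct outreach records).

```python
def discovery_coverage(relevant_ids, exposed_ids):
    """Share of relevant candidates who were actually exposed to the role."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant candidate pool is empty")
    # Only count exposures that fall inside the relevant pool.
    return len(relevant & set(exposed_ids)) / len(relevant)


def missed_candidates(relevant_ids, exposed_ids):
    """Relevant candidates the platform never exposed, sorted for manual review."""
    return sorted(set(relevant_ids) - set(exposed_ids))


# Hypothetical identifiers, for illustration only.
relevant = {"dr_a", "dr_b", "dr_c", "dr_d"}
exposed = {"dr_a", "dr_c", "dr_x"}  # dr_x was shown the role but is not in the relevant pool

print(f"{discovery_coverage(relevant, exposed):.0%}")  # 50%
print(missed_candidates(relevant, exposed))  # ['dr_b', 'dr_d']
```

A low coverage figure does not by itself show that a ranking model is at fault, but the list of missed candidates gives recruiters a concrete starting point for manual outreach.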

Call Out: Treat platform-provided efficiency metrics as provisional. Validate performance with independent sourcing audits and quality-adjusted hiring outcomes—especially for high-stakes physician placements.

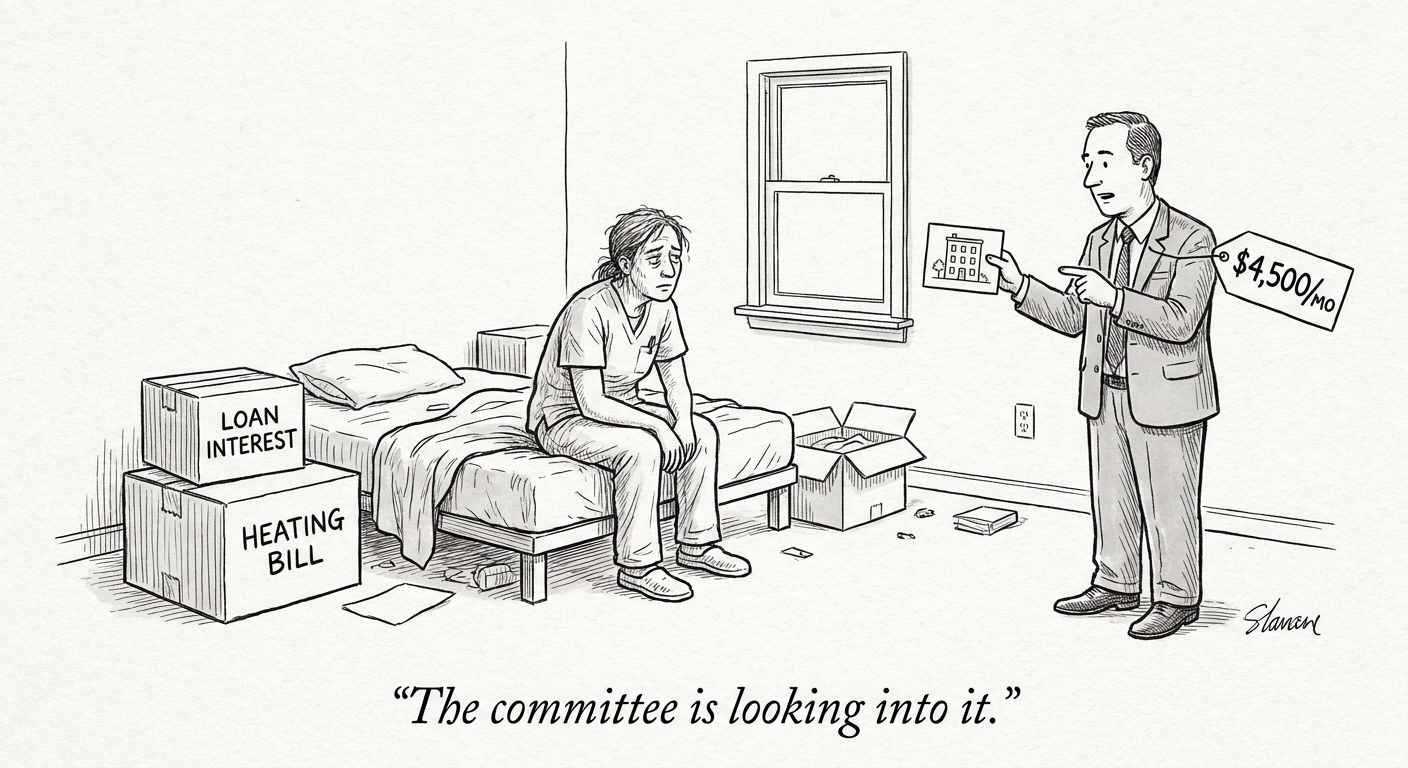

Practical steps for physician recruiters and healthcare leaders

AI in hiring isn’t going away. The strategic question for physician staffing is how to use these tools while preserving fairness, transparency, and clinical standards. Practical steps include:

- Vendor governance: Require model documentation, third-party audits for bias, and contractual remedies for harmful outcomes.

- Human-in-the-loop policies: Retain clinician or recruiter review gates for final shortlists and keep manual escalation channels for missed candidates.

- Metric redesign: Add discovery coverage, long-term retention, and quality-of-care proxies to hiring KPIs to detect hidden harms masked by surface efficiency.

- Candidate advocacy: Provide clear appeals and redress pathways when candidates believe algorithmic decisions harmed their prospects.

- Multi-channel sourcing: Combine platform feeds with direct outreach, specialized physician networks, and trusted job boards to reduce dependency on any single algorithmic gatekeeper.

Job boards and marketplaces that serve the physician market, “PhysEmp” included, should make explainability and clinician-centric workflows a differentiator. Platforms that offer transparency dashboards, audit logs, and recruiter controls will be better positioned to win trust in a sector where patient outcomes and regulatory compliance are on the line.

Conclusion: implications for healthcare hiring and recruiting

AI has the potential to make physician recruiting faster and more precise, but the arrival of pre-click personalization, opaque ranking, and platform incentives also introduces material risks. For healthcare organizations, the priority must shift from treating AI as an efficiency tool to treating it as an operationally critical system that requires governance, measurement, and human oversight. Recruiting teams should press vendors for transparency, expand sourcing strategies, and adopt new metrics that reveal hidden harms. Failure to adapt risks both talent shortages and legal exposure—while thoughtful integration can preserve clinical standards and improve placement quality.

Sources

Is the job market broken in 2026? How AI is straining hiring on both sides – Computerworld

Class action lawsuit claims AI platform sabotages job seekers – NBC San Diego

AI is reshaping job discovery before candidates even click – AI Group