This analysis synthesizes 15 sources published the week ending Apr 21, 2026. Editorial analysis by the PhysEmp Editorial Team.

The promise of ambient AI scribes—reduced documentation burden, reclaimed face time with patients, and streamlined clinical workflows—has driven rapid adoption across health systems. Yet emerging research now confirms what many physicians suspected: AI-generated clinical notes consistently underperform human-authored documentation on quality metrics. This tension between efficiency gains and documentation integrity is reshaping how health systems must structure physician oversight, redefine productivity expectations, and recalibrate compensation models tied to clinical output. For physicians navigating career decisions and executives building AI-enabled care teams, the implications extend far beyond technology adoption into fundamental questions about AI in Physician Employment & Clinical Practice.

The Quality Gap: What the Research Actually Shows

A convergence of studies published this week delivers a consistent verdict: physicians produce higher-quality clinical notes than AI scribes across multiple dimensions. Research findings indicate AI-generated documentation lags in accuracy, completeness, and clinical utility—the very attributes that determine downstream care quality, coding accuracy, and medicolegal defensibility. While AI scribes demonstrate modest reductions in documentation time, these efficiency gains come with measurable quality trade-offs that mainstream coverage of healthcare AI frequently overlooks.

The quality differential is not marginal. Studies reveal systematic gaps in how AI scribes capture nuanced clinical reasoning, document pertinent negatives, and maintain the specificity required for accurate coding and billing. In specialty practice, where documentation complexity increases substantially, these shortcomings become more pronounced. The implication is clear: ambient AI cannot simply replace physician documentation effort; it requires active physician oversight to meet quality thresholds.

Health systems deploying AI scribes without structured physician review protocols risk degraded documentation quality that compounds across coding accuracy, care continuity, and liability exposure—creating hidden costs that offset efficiency gains.

Reframing Physician Oversight as Essential Infrastructure

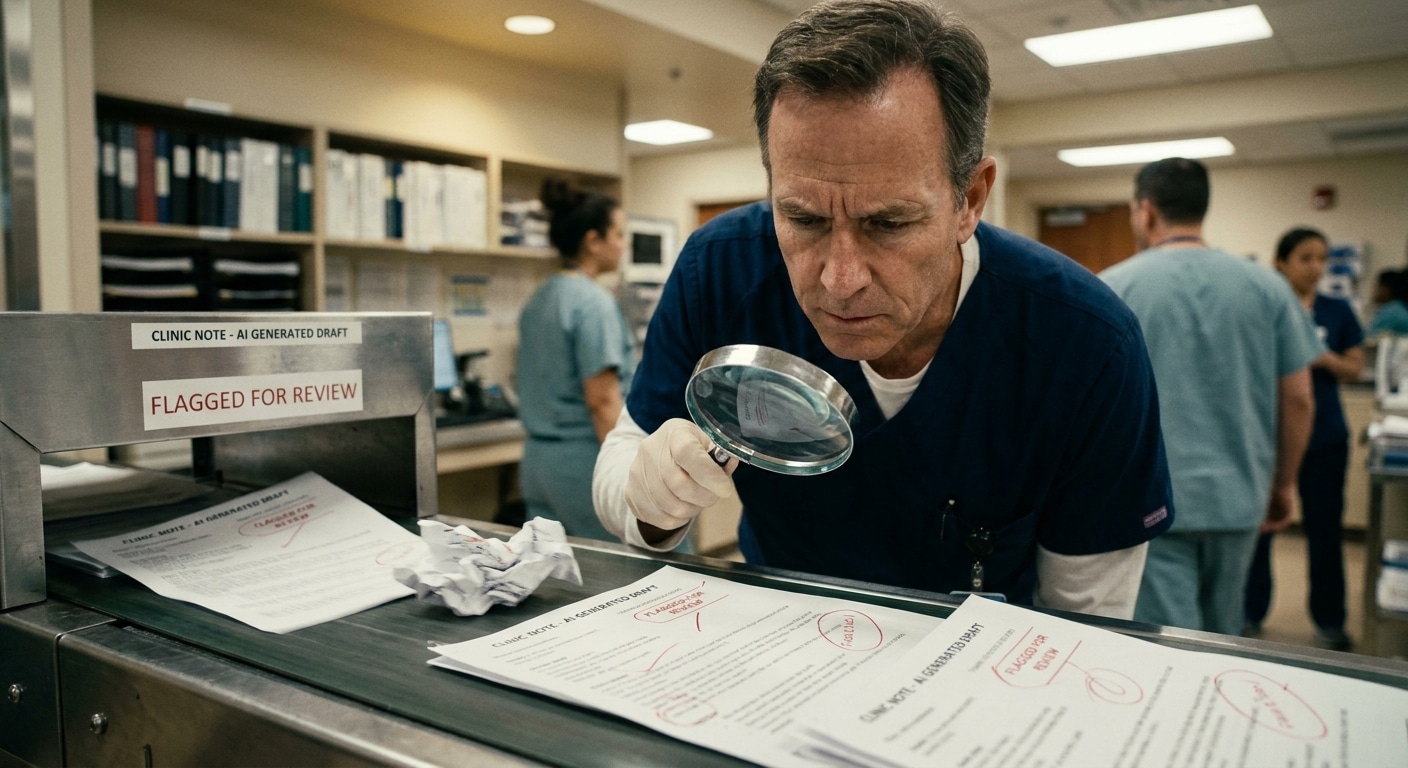

The dominant narrative around AI scribes positions them as physician burden reducers—tools that free clinicians from administrative tasks to focus on patient care. This framing, while appealing, obscures a critical operational reality: AI-assisted documentation workflows do not eliminate physician documentation responsibility; they restructure it. Physicians must now review, edit, and validate AI-generated content rather than create documentation from scratch.

This shift has profound implications for how health systems define physician productivity. If AI scribes reduce time spent typing but require equivalent time for quality review and correction, net efficiency gains diminish significantly. More importantly, the cognitive load of verifying AI output—scanning for errors, omissions, and mischaracterizations—differs fundamentally from the cognitive load of original documentation. Early evidence suggests this review burden may be underestimated in current workflow designs.
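The arithmetic behind that point can be made concrete with a rough sketch. The Python snippet below is illustrative only: the function name `net_minutes_saved` and every minute value are hypothetical assumptions chosen to show the shape of the trade-off, not figures from the cited studies.

```python
def net_minutes_saved(baseline_doc_min, review_min, correction_min):
    """Net documentation minutes saved per encounter with an AI scribe.

    baseline_doc_min: minutes a physician spends writing the note unaided
    review_min:       minutes spent reading and validating the AI draft
    correction_min:   minutes spent fixing errors and omissions in the draft
    All inputs are hypothetical placeholders, not measured values.
    """
    return baseline_doc_min - (review_min + correction_min)


# Hypothetical scenarios: (baseline, review, correction) in minutes per encounter
scenarios = {
    "light review": (10.0, 2.0, 1.0),
    "heavy review": (10.0, 5.0, 4.0),
    "review equals typing": (10.0, 6.0, 4.0),
}

for label, (baseline, review, correction) in scenarios.items():
    saved = net_minutes_saved(baseline, review, correction)
    print(f"{label}: {saved:.1f} min saved per encounter")
```

Under these assumed inputs, the saving shrinks from 7 minutes per encounter to zero as review and correction time approaches the original typing time. A health system could substitute its own measured values to test whether projected throughput gains survive the oversight step.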

Implications for Productivity Metrics

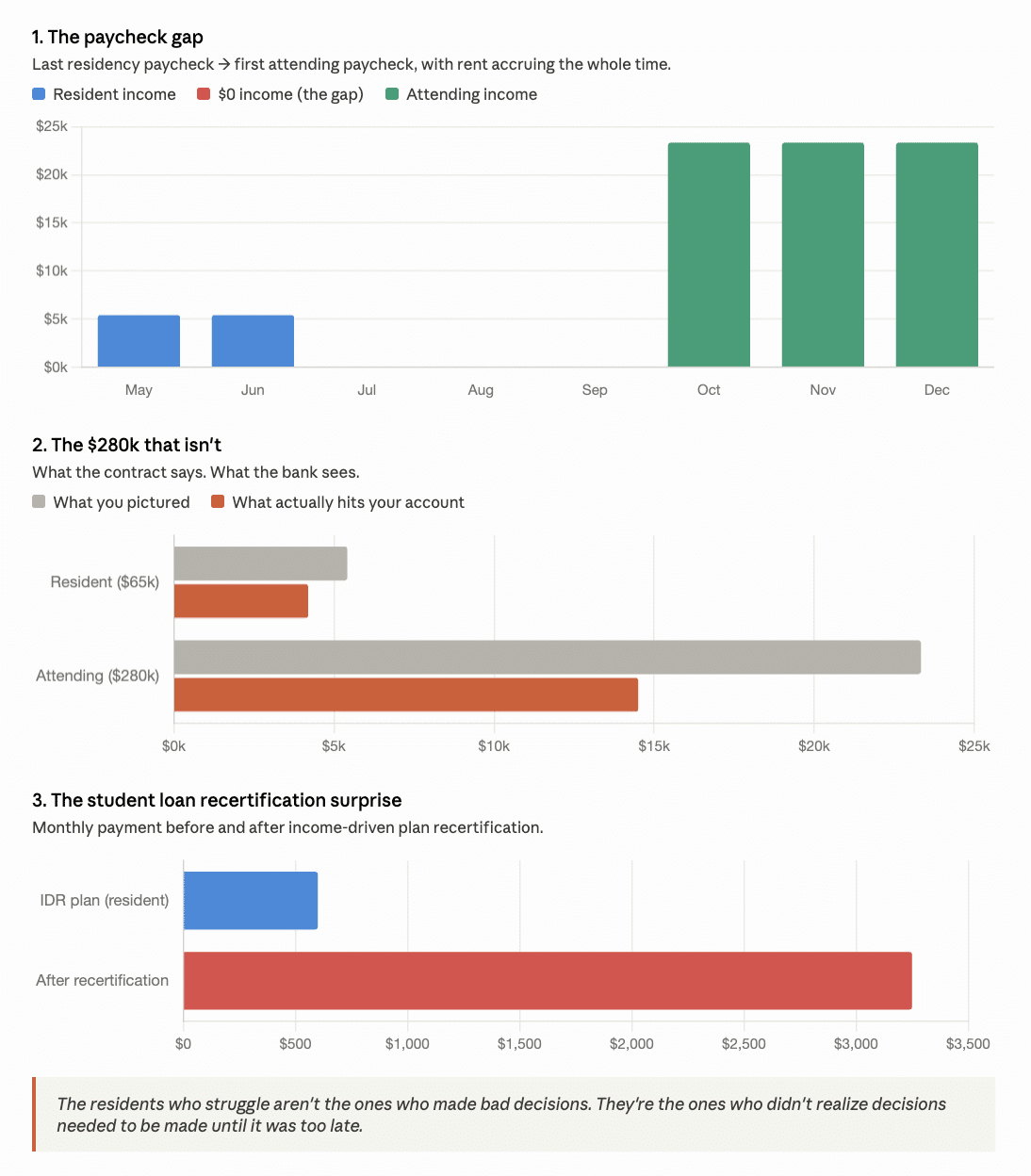

Health systems that have tied physician compensation to documentation-related productivity metrics face a recalibration challenge. If AI scribes were projected to increase patient throughput by reducing documentation time, but quality review requirements consume much of that saved time, productivity assumptions embedded in compensation models may prove overly optimistic. Physicians evaluating employment opportunities at AI-enabled organizations should scrutinize how productivity expectations account for oversight responsibilities—and whether compensation structures reflect the actual time demands of AI-assisted workflows.

The Documentation Quality-Compensation Connection

Clinical documentation directly drives revenue cycle performance. Incomplete or imprecise notes result in undercoding, denied claims, and compliance vulnerabilities. If AI-generated documentation systematically underperforms on specificity and completeness, health systems face revenue leakage that may exceed the cost savings from reduced documentation time. This creates a strategic tension: organizations seeking efficiency through AI adoption must simultaneously invest in physician oversight infrastructure to protect revenue integrity.

For physicians, this dynamic creates potential leverage in employment negotiations. As health systems recognize that AI scribes require skilled clinical oversight to function effectively, the physician’s role in documentation workflows becomes more—not less—essential. Physicians with demonstrated expertise in managing AI-assisted documentation, including efficient review protocols and quality assurance practices, may command premium positioning in recruitment conversations.

The research confirms that physician oversight isn’t a transitional requirement until AI improves—it’s a structural necessity that should be reflected in compensation models, productivity expectations, and staffing ratios for AI-enabled clinical environments.

Strategic Considerations for Health System Deployment

Health systems currently deploying or expanding ambient AI scribe programs face a strategic inflection point. The efficiency narrative that justified initial investments must now incorporate quality assurance costs. Several operational questions demand attention:

First, how will physician time for AI output review be accounted for in scheduling templates and productivity calculations? Organizations that simply layer AI scribes onto existing workflows without adjusting time allocations risk physician burnout and documentation quality degradation. Second, what training and competency standards will govern physician oversight of AI documentation? The skill set for effective AI review differs from traditional documentation competencies and may require dedicated development. Third, how will quality metrics evolve to capture AI-assisted documentation performance, and how will these metrics connect to compensation?

Recruiting and Retention Implications

For hospital executives and physician recruiters, the AI scribe quality gap introduces new dimensions to talent strategy. Recruiting physicians into AI-enabled environments requires transparency about workflow realities, including oversight expectations and quality review responsibilities. Organizations that oversell AI efficiency benefits without acknowledging review burdens risk early turnover and dissatisfaction. Conversely, health systems that thoughtfully integrate AI scribes with appropriate physician support structures can differentiate themselves as employers that use technology responsibly rather than as a cost-reduction mechanism.

Physicians evaluating opportunities should probe beyond surface-level AI adoption claims. Key questions include: What quality metrics govern AI-generated documentation? How is physician review time factored into productivity expectations? What happens when AI output requires substantial correction—is that time recognized in workload calculations? The answers reveal whether an organization views AI as a genuine physician support tool or as a mechanism to extract additional throughput without corresponding compensation.

The Incomplete Mainstream Narrative

Coverage of AI scribes in healthcare media overwhelmingly emphasizes time savings and physician satisfaction with reduced documentation burden. What this narrative consistently misses is the employment and labor-market dimension: AI scribes do not reduce the need for physician involvement in documentation; they transform it. The physician remains the essential quality guarantor, the source of the clinical judgment that validates AI output, and the professional whose oversight determines whether documentation meets care, coding, and compliance standards.

This distinction matters enormously for workforce planning. Health systems cannot staff AI-assisted clinical environments with fewer physicians on the assumption that AI handles documentation. They may, however, need to restructure how physician time is allocated, how productivity is measured, and how compensation reflects the oversight function that AI makes more—not less—critical.

Looking Ahead: Structural Shifts in Physician Employment

The AI scribe quality research arriving this week signals a maturation in how health systems must approach clinical AI deployment. The initial enthusiasm for efficiency gains is giving way to more nuanced operational realities that position physician oversight as irreplaceable infrastructure rather than transitional scaffolding.

For physician employment structures, this means compensation models must evolve to recognize oversight as a distinct value-generating activity. Productivity metrics must account for quality review time alongside patient encounters. Staffing ratios must reflect the reality that AI augments rather than substitutes for physician documentation judgment. And recruiting strategies must honestly represent the workflow realities of AI-enabled practice environments.

Physicians entering or navigating this landscape hold meaningful leverage: their clinical judgment remains the quality control mechanism that makes AI documentation viable. Health systems that recognize this reality—and structure employment terms accordingly—will be better positioned to attract and retain physician talent in an increasingly AI-integrated clinical environment.

Sources

6 Health Systems Enhancing Care Delivery With Ambient AI Scribes – American Hospital Association

Reclaiming the Exam Room: How ModMed Scribe 2.0 Empowers Specialists – Healthcare IT Today

The ROI of Ambient AI in Health Care and Autonomous Coding – KevinMD

Clinicians get the benefit of ambient AI — what about patients? – Healthcare IT News

AI scribe use modestly reduces clinical documentation time – Ophthalmology Advisor

AI Scribes Lower Quality – UW Medicine News

AI Scribes Lag Clinicians on Note Quality – Conexiant

Study: Human notes best, AI scribes across the board – Healio

How Artificial Intelligence Documentation Hurts Patients – KevinMD

The Hidden Risks of AI Documentation Tools in Clinical Practice – KevinMD

Clinicians produce higher-quality notes than AI scribes, study finds – Becker’s Hospital Review

Doctors make better clinical notes than AI scribes, new study finds – EMJ Reviews

Lower-quality scores seen for AI vs human-generated visit notes – EMPR

The Questions You Should Be Asking About AI Scribing Tools – Medical Economics

Physicians Beat AI Scribes on Clinical Note Quality, Study Shows – Rama on Healthcare