This analysis synthesizes 3 sources published February 2026. Editorial analysis by the PhysEmp Editorial Team.

Why this matters now

Artificial intelligence is transitioning from research environments into routine clinical settings, bringing intensified scrutiny to regulatory frameworks, operational protocols, and evolving workforce roles. As deployment expands, oversight structures are being tested in real time, forcing health systems to reconcile innovation velocity with compliance discipline and clinical accountability.

Two parallel dynamics are emerging. State governments are piloting concrete AI applications within care delivery environments, while federal agencies are investing in institutional capacity to supervise digital health technologies at scale. The result is a layered regulatory landscape that will directly shape adoption timelines, liability allocation, privileging standards, and workforce planning decisions.

These developments sit squarely within the broader transformation of AI in Physician Employment & Clinical Practice, where regulatory experimentation and governance infrastructure determine how AI reshapes clinical authority, staffing models, and long-term physician recruitment strategy.

State-level experimentation: operational pilots as policy laboratories

States are no longer passive implementers of federal digital health rules; they are running controlled operational pilots that place AI directly within clinical workflows. These pilots serve multiple functions simultaneously: they enable real-world validation of algorithmic behavior, surface gaps in clinical governance (for example, documentation and escalation protocols), and force clarity on scope—what tasks AI can perform autonomously, what requires human oversight, and what data sharing is permissible under state law.

From a regulatory-design perspective, pilots accelerate the translation of high-level principles into implementable guardrails. Observing model performance in situ lets regulators and systems test monitoring requirements, define acceptable error rates for low-risk tasks, and identify where workflow redesign is necessary. For provider organizations, procurement decisions will increasingly hinge on localized permissibility and pilot-derived outcomes as much as vendor claims or broad federal guidance.

Call Out — States as experimental regulators: Subnational pilots convert abstract AI principles into operational questions—who reviews model outputs, how liability is assigned, and what quality metrics matter. These practical answers will disproportionately determine near-term clinical AI adoption.

Federal capacity-building: institutionalizing digital health governance

At the federal level, creating or strengthening dedicated digital health leadership signals recognition that existing, distributed regulatory structures struggle with rapidly evolving AI technologies. Centralized digital health units concentrate technical expertise, maintain institutional memory, and improve coordination across premarket review, postmarket surveillance, and standards-setting activities.

Stronger federal capacity can yield more consistent expectations around evidence generation, transparency, and lifecycle monitoring. However, federal leadership must balance uniformity with flexibility: states will continue to act as laboratories, and a one-size-fits-all rulebook could stifle locally meaningful innovation. The interplay between federal guidance and state experimentation will define the de facto regulatory landscape for many clinical AI deployments.

Operational governance: connecting pilots and policy

The crucial governance question is how high-level rules translate into day-to-day operational practices at the clinician-system interface. Pilots expose where policy meets practice: inadequate escalation protocols can turn an approved tool into a safety risk, and poorly specified data provenance requirements can undermine postmarket monitoring.

- Task definition: delineate which clinical decisions the AI may initiate, which it may recommend, and where human sign-off is mandatory.

- Escalation protocols: define clear reviewer roles, timelines for human intervention, and documentation standards to support accountability.

- Monitoring and performance metrics: implement continuous evaluation of safety, equity, and clinically meaningful outcomes, not just algorithmic accuracy.

- Data stewardship: ensure consent, retention, and provenance frameworks align with both deployment and future model improvement needs.

Operational governance determines whether AI reduces clinician burden or simply shifts it. Pilots highlight friction points—such as where a model’s confidence metric does not map to clinicians’ decision thresholds or where state consent rules constrain necessary data flows for monitoring. Addressing those frictions requires combining clinical insight with engineering and regulatory expertise.
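To make the task-definition and escalation points above concrete, here is a minimal sketch in Python of how a health system might encode them as a routing rule. It is an illustration under stated assumptions, not a description of any pilot's actual implementation: the task names, reviewer roles, confidence thresholds, and deadlines are all hypothetical, and a real threshold would need to be calibrated against clinicians' own decision thresholds rather than taken from a model's raw confidence score.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    INITIATE = "initiate"    # AI may act without prior human review
    RECOMMEND = "recommend"  # AI suggests; a clinician decides
    SIGN_OFF = "sign_off"    # human sign-off is mandatory before any action


@dataclass(frozen=True)
class TaskPolicy:
    autonomy: Autonomy
    min_confidence: float         # assumed threshold set by clinical governance
    reviewer_role: str
    review_deadline_minutes: int  # assumed timeline for human intervention


@dataclass(frozen=True)
class Decision:
    action: str                   # "proceed", "review", or "escalate"
    reviewer: Optional[str]
    reason: str
    deadline_minutes: Optional[int] = None


# Hypothetical tasks and values, for illustration only.
POLICIES = {
    "appointment_reminder": TaskPolicy(Autonomy.INITIATE, 0.90, "care_coordinator", 1440),
    "medication_refill": TaskPolicy(Autonomy.RECOMMEND, 0.95, "pharmacist", 240),
    "imaging_triage": TaskPolicy(Autonomy.SIGN_OFF, 0.80, "radiologist", 60),
}


def route(task: str, confidence: float) -> Decision:
    """Decide whether an AI output proceeds, is queued for review, or is escalated."""
    policy = POLICIES.get(task)
    if policy is None:
        # Tasks without a defined policy are never automated.
        return Decision("escalate", "clinical_lead", "no policy defined for task")
    if confidence < policy.min_confidence:
        return Decision("escalate", policy.reviewer_role,
                        "confidence below threshold", policy.review_deadline_minutes)
    if policy.autonomy is Autonomy.INITIATE:
        return Decision("proceed", None, "within autonomous scope")
    # RECOMMEND and SIGN_OFF both require a named human reviewer and a deadline.
    return Decision("review", policy.reviewer_role,
                    "human decision required", policy.review_deadline_minutes)


print(route("medication_refill", 0.97).action)  # review
```

The design choice worth noting is that every returned decision carries a reason and, where a human is involved, a reviewer and a deadline, so the routing itself produces the documentation trail that accountability depends on.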

Call Out — Operational governance matters more than labels: A state-approved AI tool can still create risk without clear escalation paths, monitoring metrics, and data provenance controls. Governance design decides whether AI is a burden-shifter or a productivity lever.

Comparative dynamics: what distinguishes state and federal approaches

Speed versus scale

States can move faster to test narrow, context-specific uses of AI, producing actionable learnings in months. Federal entities, by contrast, aim for scale and consistency but move more deliberately. That trade-off means early adopters may find permissive states attractive testing grounds, while broader rollouts will likely await harmonized federal expectations.

Local nuance versus national interoperability

State pilots can embed local clinical workflows and legal nuances into design, improving immediate fit. Yet divergent state rules risk fragmenting markets and complicating vendor development. Federal coordination on interoperability and evidence standards could reduce that friction—if federal guidance is sufficiently attuned to operational realities surfaced by pilots.

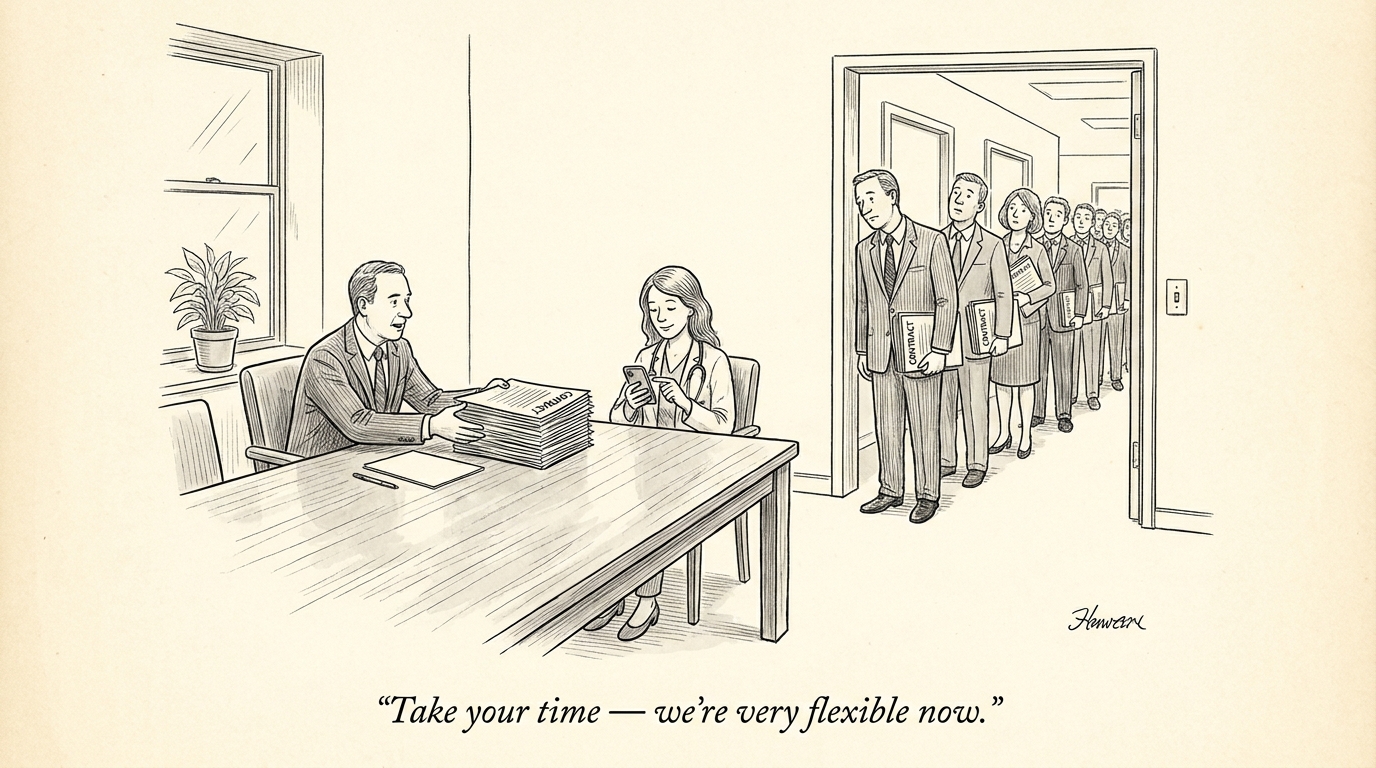

Implications for the healthcare industry and recruiting

Regulatory fragmentation and evolving federal capacity create concrete hiring and organizational imperatives. Health systems must build roles that bridge clinical judgment, technical validation, and regulatory compliance: physician-informaticists who can adjudicate AI recommendations, product managers who translate state conditions into deployment specifications, and quality engineers who design continuous monitoring programs.

Recruiting will emphasize hybrid skill sets—clinical credibility plus demonstrated experience with model evaluation, risk assessment, and real-world performance monitoring. Vendor selection criteria will expand beyond accuracy claims to include readiness for multi-jurisdictional pilots, robust postmarket surveillance capabilities, and clear data governance practices.

Strategic takeaways for leaders

1) Treat states as policy laboratories: monitor pilots and incorporate local learnings into deployment plans.

2) Invest in institutional capacity: hire and retain staff who can operationalize governance requirements.

3) Prioritize observable metrics: safety, clinician time savings, and equity measures will be the currency of sustainable adoption.

4) Design for portability: build modular governance and technical architectures that can be tuned to local requirements.
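One way to read "design for portability" is as a shared baseline policy with small, explicit overrides for each jurisdiction. The Python sketch below shows that pattern under stated assumptions: the setting names, the jurisdictions ("state_a", "state_b"), and every value are hypothetical placeholders, not actual state or federal requirements.

```python
# Baseline governance settings applied everywhere unless a jurisdiction overrides them.
# All keys and values are hypothetical.
BASE_POLICY = {
    "human_sign_off_required": True,
    "monitoring_interval_days": 30,
    "data_retention_days": 365,
    "share_data_for_model_improvement": False,
}

# Hypothetical per-jurisdiction differences, kept deliberately small.
JURISDICTION_OVERRIDES = {
    "state_a": {"monitoring_interval_days": 7},
    "state_b": {"data_retention_days": 180, "share_data_for_model_improvement": True},
}


def effective_policy(jurisdiction: str) -> dict:
    """Return the baseline policy with the jurisdiction's overrides applied."""
    overrides = JURISDICTION_OVERRIDES.get(jurisdiction, {})
    unknown = set(overrides) - set(BASE_POLICY)
    if unknown:
        # Reject settings the baseline does not define, so typos cannot pass silently.
        raise KeyError(f"unknown policy settings for {jurisdiction}: {sorted(unknown)}")
    return {**BASE_POLICY, **overrides}


print(effective_policy("state_a")["monitoring_interval_days"])  # 7
```

Keeping overrides separate from the baseline makes the differences between jurisdictions easy to audit, and a jurisdiction with no entry simply inherits the baseline.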

Conclusion

The interplay between state pilots and federal capacity-building is accelerating the maturation of clinical AI governance. For health systems and recruiters, the imperative is not only to adopt promising technologies but to operationalize governance: staffing the right roles, embedding monitoring systems, and designing escalation protocols that protect patients while enabling innovation. Systems and vendors that engage actively with both state pilots and federal guidance will hold a strategic advantage through this transition.