Why this matters now

AI-driven selection tools have moved from experimental to operational across healthcare hiring workflows, altering how employers screen, rank, and interview candidates. That shift matters acutely for physician recruiting and staffing, where small algorithmic biases or opaque decisions can change career trajectories and clinical-team composition.

Legal challenges and regulatory debate are converging on three core questions: how transparent automated hiring decisions are, whether existing legal frameworks cover algorithmic selection, and what practices employers should adopt now to reduce risk. For organizations hiring clinicians, these questions affect compliance, candidate experience, and the integrity of workforce pipelines.

Regulatory uncertainty: a moving compliance target

Regulatory frameworks for employment selection are in flux. As public authorities, courts, and legislators reassess how anti-discrimination laws and guidance apply to algorithmic systems, employers face an uncertain compliance horizon. That uncertainty raises operational trade-offs: continue using high-performance opaque models and accept legal exposure and candidate pushback, or adopt more transparent—but potentially less predictive—approaches that may slow hiring.

For physician recruiting teams, where credentialing and fairness are mission-critical, the stakes are elevated: algorithmic errors may not only lead to litigation but also degrade patient care if they distort specialty pipelines or geographic distribution of clinicians.

Call Out: Regulatory flux forces active risk management. Health systems must treat algorithmic hiring tools as governance-critical systems—subject to audit, documentation, and ongoing validation—to avoid legal and operational consequences.

Transparency and explainability: what employers will be asked to show

Legal actions and demands for disclosure are pushing transparency from a best practice toward an operational requirement. Courts and regulators are increasingly interested in whether candidates can learn why they were rejected by an AI-driven process and whether those systems embed proxies for protected characteristics. Employers may be compelled to produce decision logs, feature importances, and validation reports in discovery or regulatory inquiries.

That means recruitment teams must build evidence trails: test data sets, performance metrics across demographic groups, and human-in-the-loop controls that document when and how automated outputs were overridden. Absent these artifacts, organizations risk being unable to demonstrate that a system operates fairly or that disparate impacts were appropriately mitigated.
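One way to make "performance metrics across demographic groups" concrete is to compare selection rates between groups. The sketch below is illustrative only: it uses the four-fifths rule of thumb associated with the Uniform Guidelines on Employee Selection Procedures, which is one common screening heuristic and not a metric this article prescribes. The function names, the group labels, the 0.8 threshold default, and the sample data are all assumptions made for the example.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: share advanced} from (group, advanced) pairs."""
    totals = Counter()
    advanced = Counter()
    for group, was_advanced in records:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {g: advanced[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody advanced, so a ratio is undefined for every group.
        return {g: None for g in rates}
    return {g: r / top for g, r in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the threshold (assumed 0.8)."""
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Hypothetical, made-up screening outcomes: (group label, advanced past screen?)
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 76
)

rates = selection_rates(sample)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

A flagged group is a prompt for closer review, not a legal conclusion. Small samples in particular can produce ratios that look alarming or reassuring by chance, so an audit would normally pair a check like this with statistical testing and legal advice.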

Operational tension: trade-secret claims vs. disclosure expectations

Vendors frequently resist exposing model internals by citing intellectual property. But claimants and regulators view explainability as essential to adjudicating discrimination claims. Employers who embed third-party models therefore need contractual leverage to obtain sufficient transparency for compliance, or they must favor solutions designed for explainability over black-box performance.

Call Out: Contracts and procurement matter. Recruiters should insist on contractual rights to model documentation, subgroup validation results, and logging history to satisfy likely disclosure requests.

Candidate experience and market effects

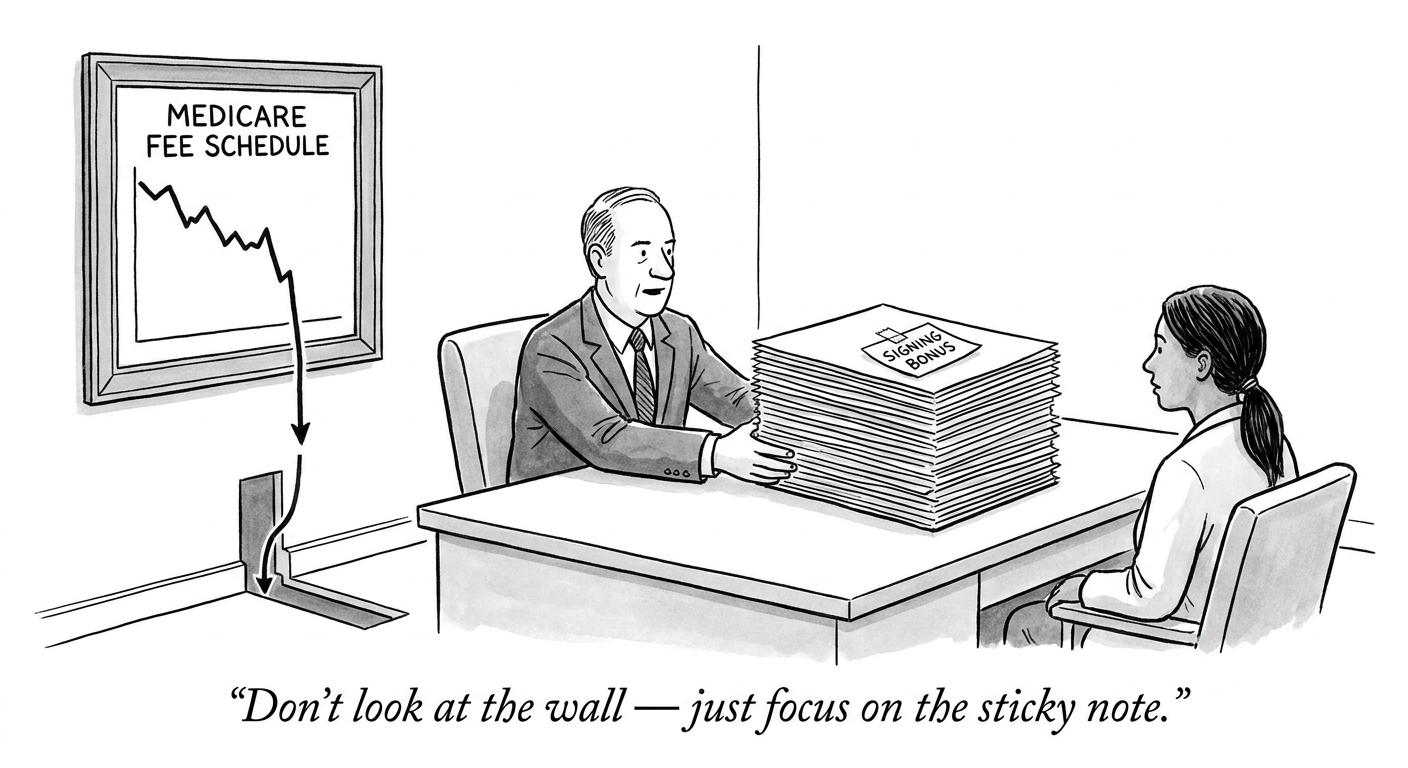

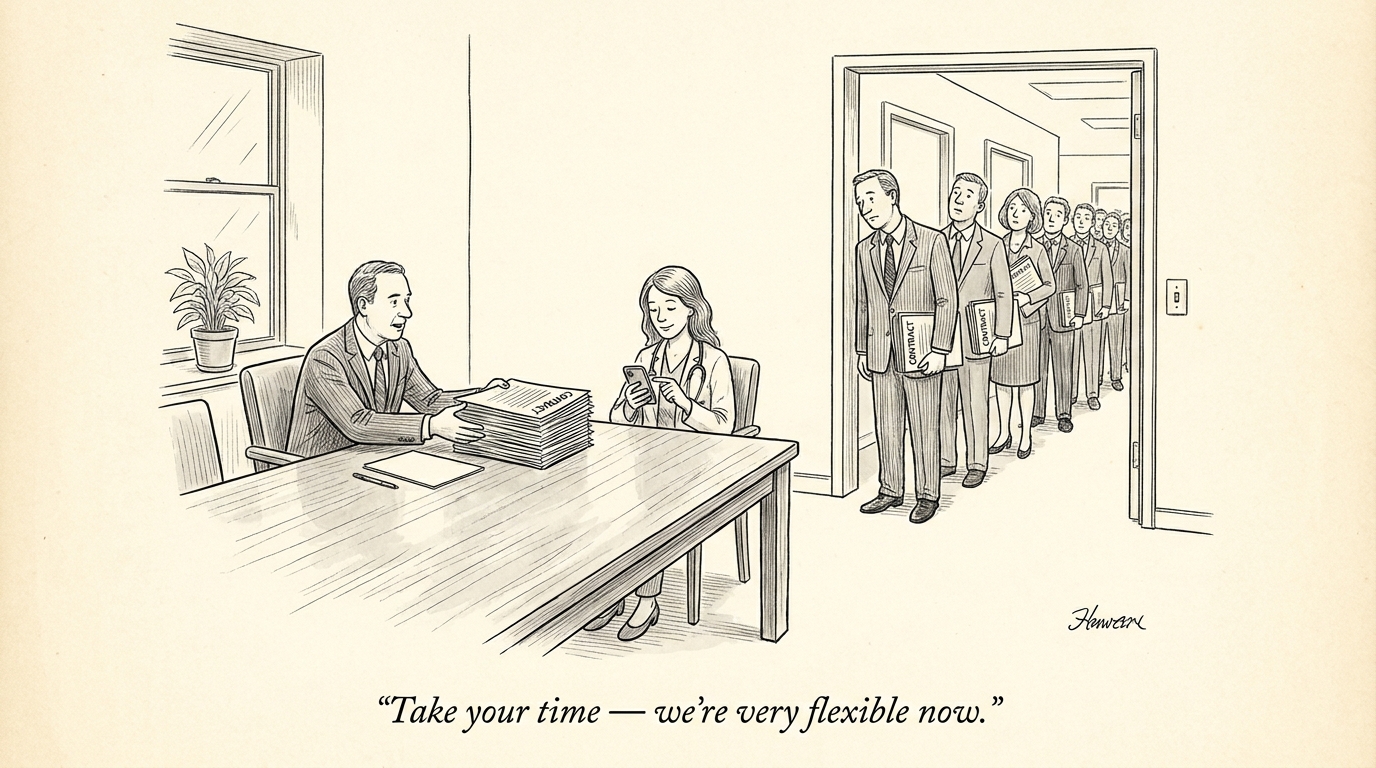

Beyond litigation risk, opaque hiring systems shape labor-market dynamics. Candidates denied visibility into automated evaluations are more likely to file complaints or litigate, and publicized disputes can reduce an employer’s attractiveness, particularly among high-value clinicians who compare offers from multiple health systems. For medical specialties in short supply, reputational and candidate-supply effects can be material.

Moreover, job seekers are adapting: they optimize for automated filters, tailor resumes to algorithmic preferences, and increasingly seek employers that offer transparent processes. This dynamic creates a two-way selection pressure: employers that favor opaque processes may gain short-term efficiency but face long-term recruitment challenges.

Designing hiring systems for healthcare: practical guardrails

Healthcare employers should take a proactive posture. Practical steps include:

- Demanding vendor transparency and access to validation artifacts during procurement.

- Implementing routine bias audits using representative clinical applicant populations.

- Maintaining human-review gates for decisions that remove a candidate from further consideration.

- Logging decision explanations and candidate touchpoints to meet potential disclosure needs.

These practices reduce legal exposure and preserve trust with talent pools. They also support continuous improvement: logged outcomes enable measurement of model drift and signal when revalidation or retraining is required.
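The human-review gate and decision logging described above can be sketched together as a single record type. This is a minimal illustration under stated assumptions, not a reference implementation: the `ScreeningDecision` fields, the `finalize` rule (no rejection becomes final without a named reviewer, and every override needs a recorded reason), and the JSON line format are all hypothetical choices made for the example.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ScreeningDecision:
    """One automated screening output plus the human review applied to it."""
    candidate_id: str
    model_version: str
    model_score: float
    model_recommendation: str          # "advance" or "reject"
    top_factors: List[str]             # explanation shown to the reviewer
    reviewer_id: Optional[str] = None
    final_outcome: Optional[str] = None
    override_reason: Optional[str] = None
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def finalize(decision, reviewer_id=None, reviewer_outcome=None, override_reason=None):
    """Apply the review gate and record the final outcome on the decision."""
    if decision.model_recommendation == "reject" and reviewer_id is None:
        raise ValueError("a rejection needs a human reviewer before it is final")
    decision.reviewer_id = reviewer_id
    decision.final_outcome = reviewer_outcome or decision.model_recommendation
    if decision.final_outcome != decision.model_recommendation:
        if not override_reason:
            raise ValueError("overrides must record a reason")
        decision.override_reason = override_reason
    return decision


def to_log_line(decision):
    """Serialize the decision as one JSON line for an append-only log."""
    return json.dumps(asdict(decision), sort_keys=True)


# Hypothetical example: the model recommends rejection, the reviewer overrides.
d = ScreeningDecision("cand-001", "v1.3", 0.31, "reject", ["board_certification_gap"])
finalize(
    d,
    reviewer_id="rev-17",
    reviewer_outcome="advance",
    override_reason="Certification verified manually",
)
line = to_log_line(d)
print(line)
```

Keeping the model version, the explanation factors, and the override reason in the same record means each line can answer three questions at once: what the system recommended, why, and what a person did about it. Retention periods, access controls, and candidate privacy are outside the scope of this sketch.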

Recruiters can operationalize these steps through technology and governance. For example, teams can integrate vendor dashboards into hiring operations or use compliance playbooks to evaluate candidate-impact metrics on a regular cadence. Additionally, PhysEmp’s AI-aware hiring dashboard and similar platforms can help surface transparency metrics and match clinicians with employers prioritizing auditable processes.

Implications for healthcare industry and recruiting

The combined legal, regulatory, and candidate pressures will reshape how health systems source clinicians. Expect three near-term industry shifts: procurement will prioritize explainability, legal teams will demand stronger documentation and oversight, and competitive advantage will accrue to organizations that balance algorithmic efficiency with demonstrable fairness. For physician recruiters, this means embedding technical and legal literacy into hiring workflows and investing in audit-capable systems.

Longer term, consistent standards and disclosure norms may emerge, reducing uncertainty. Until then, the practical imperative for employers is clear: treat algorithmic hiring tools like clinical decision support systems—document assumptions, validate performance rigorously, and retain human oversight to safeguard equity and operational resilience.

Sources

What Happens to AI Hiring When the Uniform Guidelines Disappear? – TLNT

Job applicants sue to open ‘black box’ of AI hiring decisions – The Seattle Times