Why this matters now

Over the past year, algorithmic tools have moved from proof-of-concept research toward clinically actionable outputs: models can now surface early biomarkers that predict prediabetes risk, and domain-specific algorithms are being built for oral health and other specialties. At the same time, clinicians and commentators are asking whether pattern recognition and probabilistic outputs are sufficient for care that depends on context, trust, and moral judgment. The result is a pivotal moment: healthcare organizations must decide how to deploy increasingly capable AI without undermining the aspects of medicine that remain fundamentally human.

From population prediction to individualized risk

Recent computational models demonstrate that AI can detect subtle, multivariate signals—molecular, imaging-derived, or behavioral—that precede clinical disease. When applied at scale, these predictive outputs offer new pathways for early intervention and risk stratification that were previously impractical.

For health systems, the analytic yield is twofold: better targeting of preventive services and more efficient allocation of limited resources. But the technical capacity to flag elevated risk does not automatically translate into improved outcomes. Predictive accuracy must be paired with workflows that convert prediction into action: timely confirmatory testing, culturally competent counseling, and accessible interventions for patients identified as high risk.
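A short worked example shows why a risk flag needs confirmatory testing behind it. The share of flagged patients who are truly at risk (positive predictive value) depends on how common the condition is, not only on model accuracy. The sensitivity, specificity, and prevalence figures below are hypothetical and chosen for illustration; they are not taken from any model discussed in this article.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Probability that a flagged patient truly has the condition (Bayes' rule)."""
    # Share of the screened population that is correctly flagged.
    true_pos_share = sensitivity * prevalence
    # Share of the screened population that is flagged but does not have the condition.
    false_pos_share = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos_share / (true_pos_share + false_pos_share)

# Hypothetical model with 90% sensitivity and 90% specificity.
ppv = positive_predictive_value(0.90, 0.90, prevalence=0.10)
ppv_low = positive_predictive_value(0.90, 0.90, prevalence=0.01)
print(f"PPV at 10% prevalence: {ppv:.2f}")     # 0.50
print(f"PPV at 1% prevalence: {ppv_low:.2f}")  # 0.08
```

Under these assumptions, half of flagged patients at 10% prevalence, and fewer than one in ten at 1% prevalence, would not actually have the condition. That gap is why the confirmatory testing and counseling steps matter as much as the model itself.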

Call Out — Translating prediction into practice: Predictive algorithms shrink the diagnostic window, but value emerges only when predictions trigger equitable, timely clinical pathways—testing, counseling, and support services—so that flagged risk becomes prevented disease, not just flagged data.

Specialty algorithms: focused gains, specific gaps

Algorithms tailored to narrow clinical domains, such as oral health, illustrate both promise and limitation. Narrow models require domain-specific inputs, curated labels, and representative training sets. When those conditions are met, a specialist algorithm can surface nuanced patterns that human inspection might miss, or screen at a volume that manual review programs could not sustain.

However, specialty tools are sensitive to data drift and population differences. An oral health model trained on a single academic center’s dataset may underperform when exposed to different demographics, care settings, or imaging equipment. Robust external validation, continuous monitoring, and mechanisms for local recalibration are essential.
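One way to make "continuous monitoring" concrete is to compare the distribution of a model's risk scores at a new care site against the scores it produced on its development data. The sketch below uses the population stability index (PSI), a general-purpose drift metric swapped in here for illustration; it is not described by any of the sources. The bin edges, example scores, and the 0.25 alert threshold (a commonly cited rule of thumb) are all assumptions that a local governance team would need to set.

```python
import math
from typing import Sequence

def bin_fractions(scores: Sequence[float], edges: Sequence[float], eps: float = 1e-6) -> list[float]:
    """Fraction of scores in each bin [edges[i], edges[i+1]).
    The final bin also includes its upper edge; scores outside the edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for s in scores:
        for i in range(len(edges) - 1):
            in_last_edge = i == len(edges) - 2 and s == edges[-1]
            if edges[i] <= s < edges[i + 1] or in_last_edge:
                counts[i] += 1
                break
    total = sum(counts)
    # Floor each fraction at eps so an empty bin does not produce log(0).
    return [max(c / total, eps) for c in counts]

def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               edges: Sequence[float]) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

DRIFT_THRESHOLD = 0.25  # assumed rule of thumb; set locally under governance

# Hypothetical risk scores in [0, 1]: development data vs. a new care site.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
development_scores = [0.05, 0.1, 0.15, 0.2, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8]
local_site_scores = [0.1, 0.2, 0.3, 0.45, 0.55, 0.6, 0.65, 0.7, 0.8, 0.9]

psi = population_stability_index(development_scores, local_site_scores, edges)
if psi > DRIFT_THRESHOLD:
    print(f"PSI {psi:.2f}: review local performance and consider recalibration")
```

A shift in score distribution does not by itself prove the model has become less accurate; it is a trigger to check calibration and error rates against local outcomes before deciding whether recalibration is needed.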

Call Out — Algorithmic stewardship matters: Specialty algorithms are not plug-and-play. They demand ongoing validation, diversity in training data, and governance structures that assign responsibility for monitoring model performance across care sites.

What makes a physician ‘real’ in an age of AI

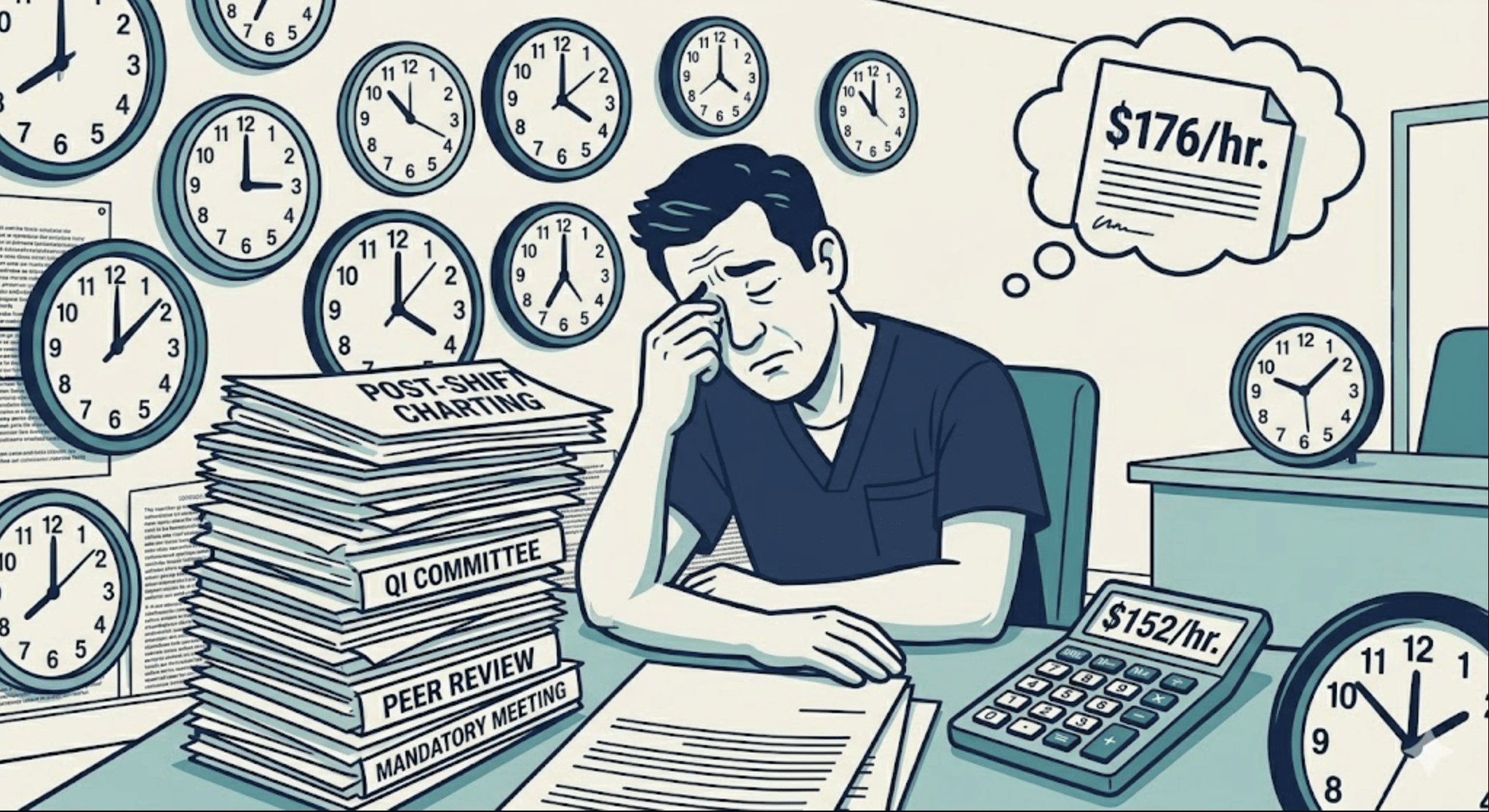

AI excels at pattern recognition, probability estimation, and surfacing associations across large datasets. This technical prowess reallocates certain cognitive tasks—triage, risk scoring, imaging pre-read—to machines. But the physician’s role extends beyond computation. Clinical practice weaves together diagnosis, values-based decision-making, shared deliberation, and the therapeutic relationship.

Two dimensions of physician value are especially resistant to automation. First, contextualization: clinicians synthesize social determinants, longitudinal knowledge of the patient, and nuanced symptom narratives that algorithms often cannot access. Second, moral and legal accountability: patients look to humans for responsibility when decisions have uncertain trade-offs or when adverse outcomes occur.

These dimensions do not make AI irrelevant; they reshape the physician’s remit. Instead of replacing clinicians, current and near-term AI will amplify their capacity, shifting time from repetitive pattern recognition toward patient-centered activities that demand empathy, negotiation, and complex judgment.

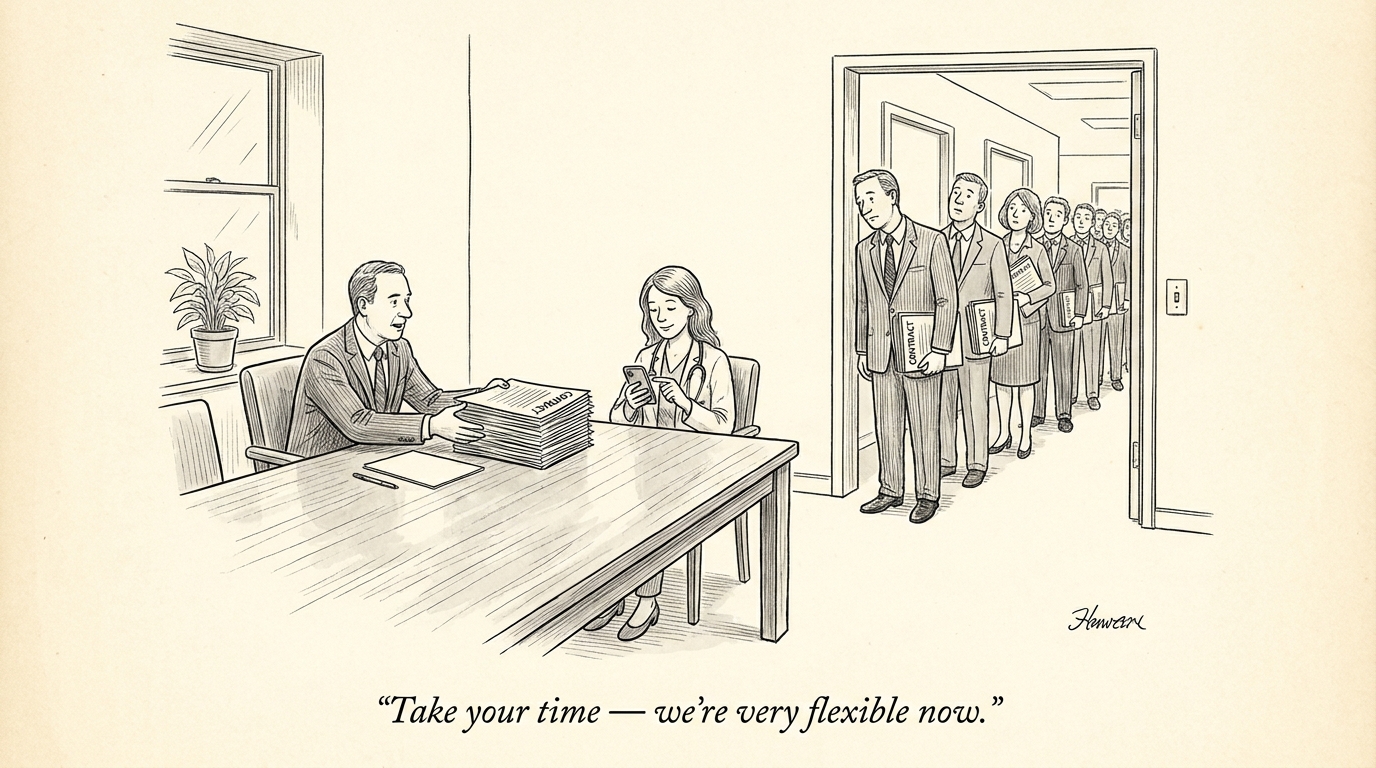

Integration, workforce, and recruiting implications

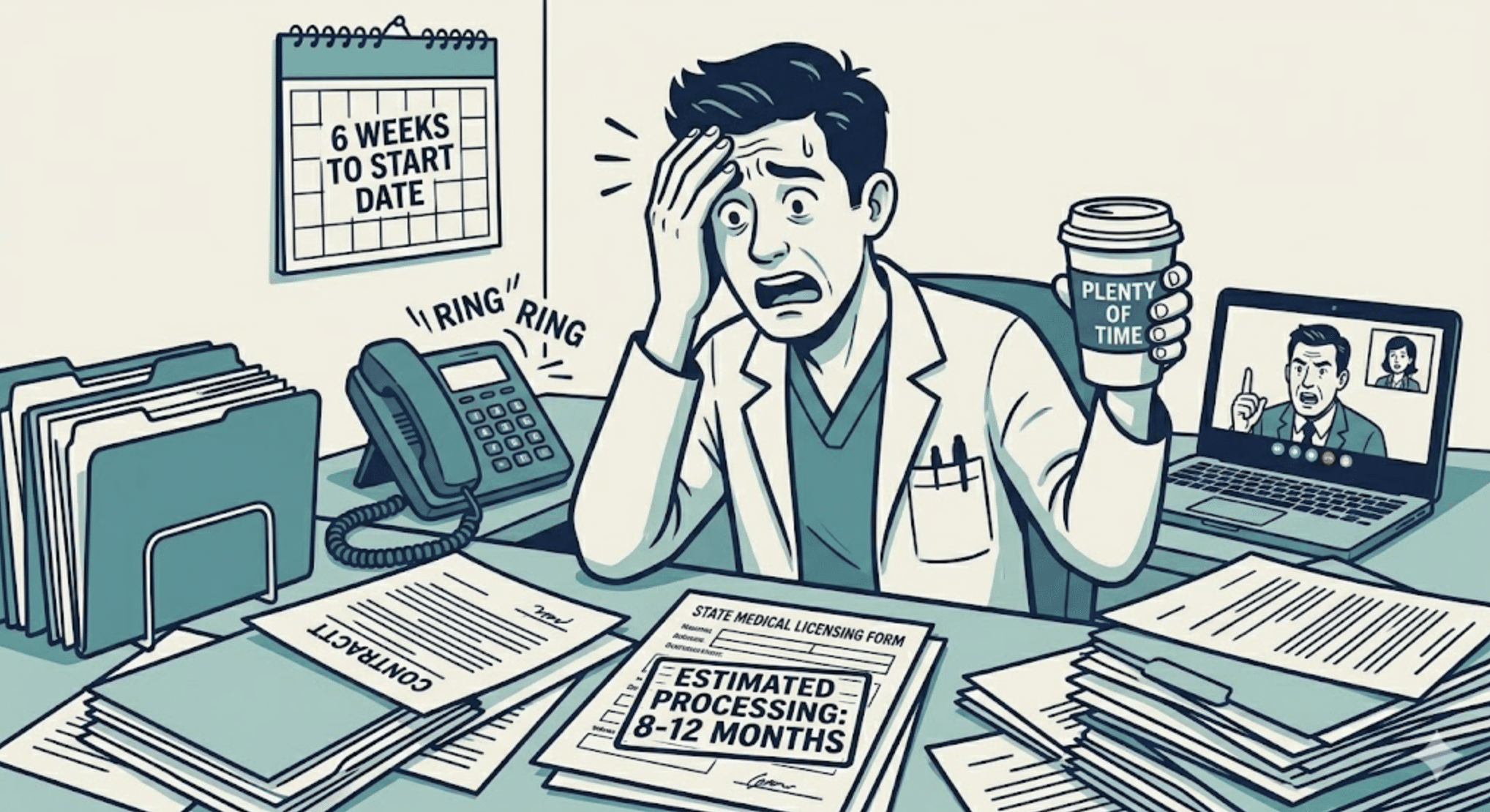

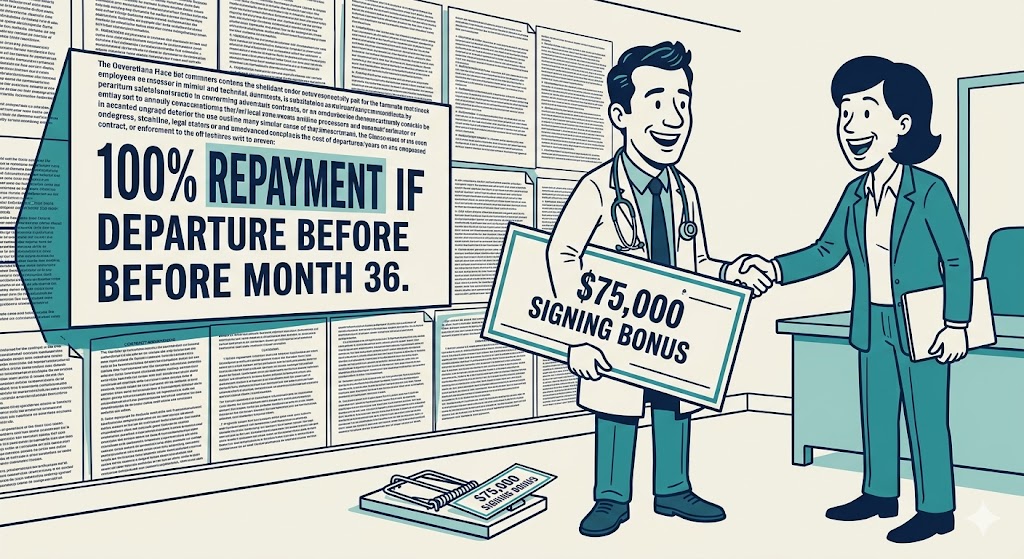

Operationalizing AI at scale is as much an organizational challenge as it is a technical one. Successful deployment requires integration into electronic health records, redesign of clinical pathways, clinician training, and governance around liability and explainability. From a workforce perspective, these shifts create new role hybridizations and hiring priorities.

Health systems will increasingly seek clinicians who can interpret algorithmic outputs, identify model failure modes, and communicate probabilistic results to patients. They will also need roles focused on algorithm stewardship: data scientists embedded in clinical teams, clinical informaticists who translate model outputs to workflows, and ethicists or patient advocates who ensure tools respect patient autonomy and equity.

For recruiting platforms and talent strategists, this means two practical actions: prioritize listings that combine clinical expertise with digital literacy, and develop pipelines for upskilling existing staff. Platforms can play a role by surfacing jobs that explicitly require or train clinicians in AI interpretation and oversight, and by connecting employers with candidates who bring both clinical domain knowledge and experience in data-driven settings.

Conclusion: a complementary partnership

AI is expanding what is detectable and actionable in medicine; it accelerates screening, sharpens risk stratification, and augments specialty workflows. Yet those algorithmic capacities do not obviate the clinician’s essential functions: contextual judgment, ethical responsibility, and the human relationship that undergirds shared decision-making. For health systems and recruiters, the immediate priority is pragmatic integration: amplify AI where it improves efficiency and accuracy, while investing in people, governance, and training that preserve trust and equitable outcomes.

Sources

AI model identifies biomarkers that predict prediabetes risk – Medical News Today

Building an algorithm for oral health – Tufts Dental Central

In the Age of AI, What Makes a Physician Real? – KevinMD

AI, Google no substitute for a doctor’s visit (Opinion) – The News-Press