Why this matters now

The simultaneous uptake of consumer-facing and clinic-facing AI is reshaping access, workflows, and expectations across health services. Conversational agents and automated reception systems are moving from pilots to routine use, so questions that were once hypothetical now affect day-to-day operations.

For health system leaders, clinicians, and talent teams, these parallel trends raise practical questions about safety, data governance, and workforce readiness. Patient-facing tools can expand immediate access to support, while clinic-facing automation promises cost reductions—yet both introduce new vectors for error, inequity, and fragmented care if implemented without coordinated oversight.

Patient adoption: AI as an on-demand mental health entry point

Young adults and other digitally native groups are increasingly turning to chat-based AI for mental health support because these tools lower friction—offering anonymity, 24/7 availability, and rapid coping strategies when clinical services are delayed or inaccessible. Many of these systems combine symptom triage, psychoeducational prompts, and cognitive-behavioral techniques to provide near-term relief and self-management advice.

These capabilities expand reach, but their clinical boundaries are clear: algorithmic conversations are designed for scale and immediacy rather than longitudinal assessment or complex diagnosis. Without enforced escalation pathways, transparent limits of scope, and mechanisms to document and share critical safety information with care teams, patient-facing AI risks delaying necessary clinical care or providing inappropriate reassurance.

Call Out: AI conversational tools broaden immediate access and reduce barriers to help-seeking, but they must embed clear triage and escalation protocols and support documentation flows to ensure vulnerable users receive timely clinical intervention.
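To make the idea of an enforced escalation pathway concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a description of any real product: the phrase lists, the `Risk` tiers, the action names, and the `EscalationRecord` structure are hypothetical, and simple phrase matching is not a clinically validated risk screen. The sketch shows only the shape of the flow: classify a message, map the risk tier to a fixed action, and emit a record that could be shared with a care team.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Risk(Enum):
    LOW = 1
    ELEVATED = 2
    CRISIS = 3


# Hypothetical phrase lists, for illustration only. Not clinically validated.
CRISIS_PHRASES = ("kill myself", "end my life", "suicide")
ELEVATED_PHRASES = ("hopeless", "can't cope", "panic attack")

# Each risk tier maps to exactly one action, so escalation is enforced by
# the routing table rather than left to the conversational model.
ACTIONS = {
    Risk.CRISIS: "handoff_to_human_and_show_crisis_resources",
    Risk.ELEVATED: "offer_clinician_followup",
    Risk.LOW: "continue_self_help",
}


@dataclass
class EscalationRecord:
    """Audit record that a care team could review later (assumed schema)."""
    session_id: str
    risk: Risk
    action: str
    matched: list
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def classify_risk(message: str):
    """Return the highest risk tier whose phrases appear in the message."""
    text = message.lower()
    hits = [p for p in CRISIS_PHRASES if p in text]
    if hits:
        return Risk.CRISIS, hits
    hits = [p for p in ELEVATED_PHRASES if p in text]
    if hits:
        return Risk.ELEVATED, hits
    return Risk.LOW, []


def triage(session_id: str, message: str) -> EscalationRecord:
    """Classify one message and document the resulting action."""
    risk, hits = classify_risk(message)
    return EscalationRecord(session_id, risk, ACTIONS[risk], hits)
```

The design choice worth noting is that the record is produced for every message, including low-risk ones, so that the documentation flow does not depend on an escalation having been triggered.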

Clinic adoption: Automating front-desk and administrative workflows

Private clinics and smaller practices are piloting AI receptionists and virtual intake agents to reduce administrative staffing costs and improve throughput. These systems can handle appointment booking, basic intake questions, and routine patient communications—functions that traditionally consume significant staff time and contribute to operational drag.

However, front-desk automation intersects with sensitive operational and clinical processes: verifying patient identity, reconciling insurance eligibility, and capturing accurate intake histories. Failures in these domains produce downstream consequences—missed or incorrect appointments, billing errors, and gaps in clinical handoff—that can degrade both revenue integrity and patient safety.
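One way to limit these downstream failures is to have the virtual intake agent flag incomplete or unverified records for staff review instead of booking automatically. The Python sketch below illustrates that gate under stated assumptions: the `IntakeRecord` fields, the flag names, and the thresholds are hypothetical, and a real system would check eligibility against a payer or EHR service rather than merely testing that a member ID is present.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class IntakeRecord:
    """Hypothetical intake fields collected by a virtual front-desk agent."""
    full_name: str
    date_of_birth: Optional[date]
    insurance_member_id: Optional[str]
    identity_verified: bool
    reason_for_visit: str


def review_flags(record: IntakeRecord, today: Optional[date] = None) -> List[str]:
    """Return the reasons a record needs human review (empty list if none)."""
    today = today or date.today()
    flags = []
    if not record.identity_verified:
        flags.append("identity_not_verified")
    if record.date_of_birth is None or record.date_of_birth > today:
        flags.append("invalid_date_of_birth")
    if not (record.insurance_member_id or "").strip():
        flags.append("missing_insurance_member_id")
    if len(record.reason_for_visit.strip()) < 3:
        flags.append("reason_for_visit_too_short")
    return flags


def can_auto_book(record: IntakeRecord, today: Optional[date] = None) -> bool:
    """Only records with no review flags skip the staff queue."""
    return not review_flags(record, today)
```

Returning the full list of flags, rather than a single pass or fail value, gives front-desk staff a specific reason for each handoff and leaves a trail that can be audited later.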

Comparative analysis: Shared technology, divergent priorities

Both patient-facing chatbots and clinic reception AIs are built on similar technical layers—natural language understanding, intent classification, and workflow orchestration—but they optimize for different outcomes. Consumer tools emphasize engagement, access, and rapid response; clinic systems emphasize reliability, regulatory compliance, and interoperability with EHRs and billing systems.
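The shared layering can be sketched in a few lines of Python. This is a toy under loud assumptions: the keyword sets, intent names, handler functions, and the 0.2 confidence threshold are all invented for illustration, and keyword overlap stands in for what would in practice be a trained language-understanding model. The point is structural: one classifier feeds an orchestration step, and each deployment supplies its own handler table and falls back to a human when the intent is unsupported or the confidence is low.

```python
import re
from typing import Callable, Dict, Tuple

# Hypothetical intents and keywords; a production system would use a trained model.
INTENT_KEYWORDS = {
    "book_appointment": {"book", "appointment", "schedule", "reschedule"},
    "billing_question": {"bill", "invoice", "insurance", "copay"},
    "coping_support": {"anxious", "stressed", "overwhelmed", "sleep"},
}


def classify_intent(utterance: str) -> Tuple[str, float]:
    """Score each intent by the fraction of distinct tokens matching its keywords."""
    tokens = set(re.findall(r"[a-z']+", utterance.lower()))
    best, best_score = "unknown", 0.0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(tokens & keywords) / len(tokens) if tokens else 0.0
        if score > best_score:
            best, best_score = intent, score
    return best, best_score


def route(
    utterance: str,
    handlers: Dict[str, Callable[[str], str]],
    min_confidence: float = 0.2,
) -> Tuple[str, str]:
    """Orchestration step: run a handler, or hand off to a person."""
    intent, confidence = classify_intent(utterance)
    if intent not in handlers or confidence < min_confidence:
        return "human_handoff", f"Transferring to staff: {utterance!r}"
    return intent, handlers[intent](utterance)


# A clinic deployment registers only administrative workflows (assumed set),
# so mental health requests are never answered by the reception agent.
CLINIC_HANDLERS = {
    "book_appointment": lambda u: "Starting the scheduling workflow",
    "billing_question": lambda u: "Opening a billing inquiry",
}
```

For example, `route("I want to book an appointment", CLINIC_HANDLERS)` selects the scheduling handler, while `route("I feel anxious and overwhelmed", CLINIC_HANDLERS)` falls through to a human handoff because the clinic table has no handler for that intent. A consumer tool could reuse `classify_intent` with a different handler table and a different threshold, which is where the divergent priorities show up in code.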

Trust dynamics diverge as well. Patients often trade some accuracy for immediacy and perceived privacy, while clinics must prioritize auditability and liability management. This misalignment creates two distinct governance problems: consumer tools may operate outside clinical oversight, and clinic tools must meet higher standards for documentation and data protection. The regulatory and compliance ecosystem has not yet harmonized standards across these use cases, producing inconsistent risk profiles for patients and providers.

Call Out: The same NLP foundations power both mental health chatbots and AI receptionists; aligning on shared safety metrics, data governance, and escalation protocols is essential if these tools are to augment, rather than fragment, clinical care.

Implications for care quality, workforce, and recruiting

Adopting AI at both the patient and clinic edges changes the competencies health organizations must recruit and cultivate. Clinical staff and administrators will need practical literacy in AI behavior, failure modes, and monitoring approaches. Organizations should create roles responsible for continuous performance monitoring, safety auditing, and vendor oversight to ensure systems meet clinical standards.

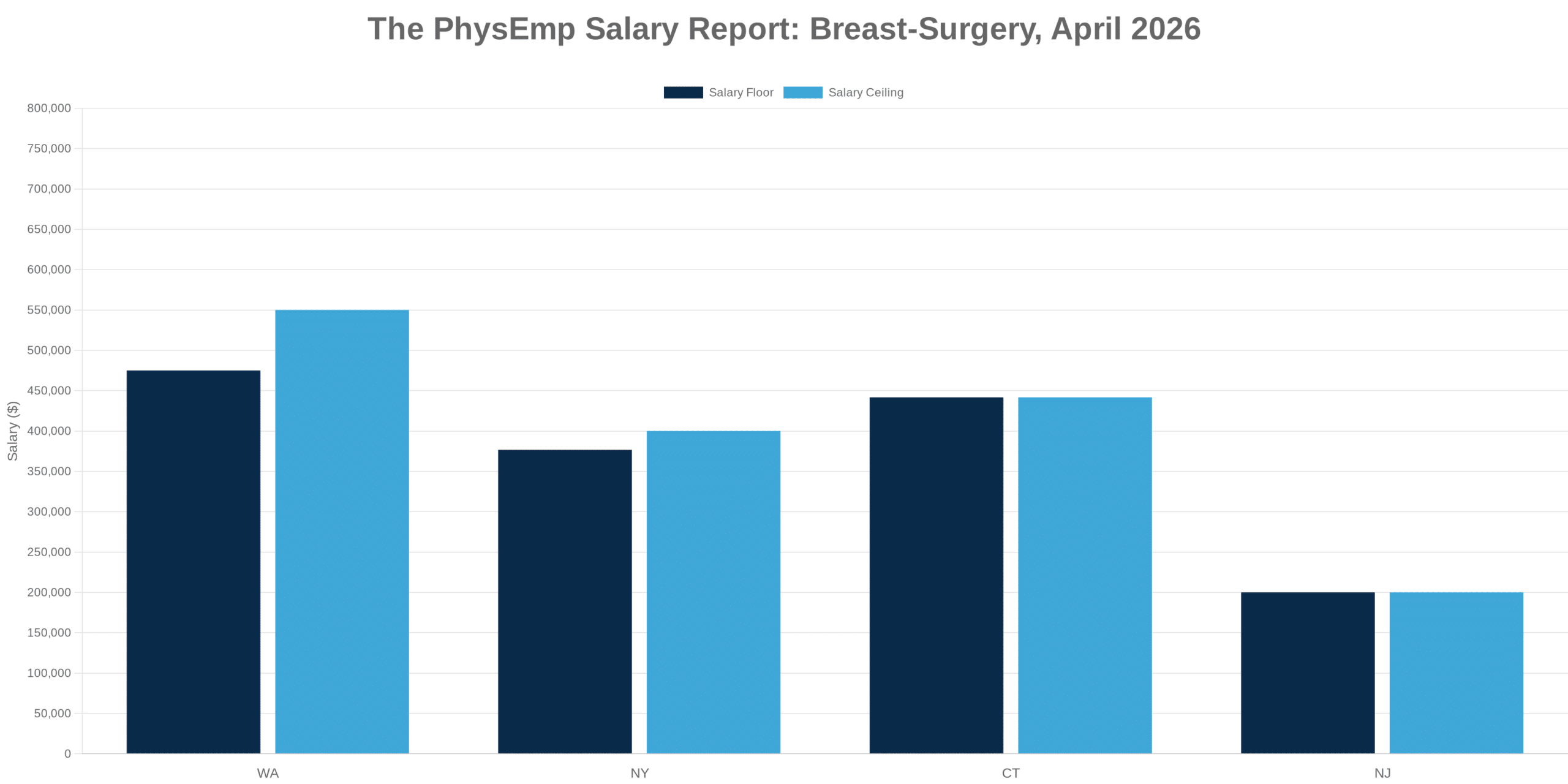

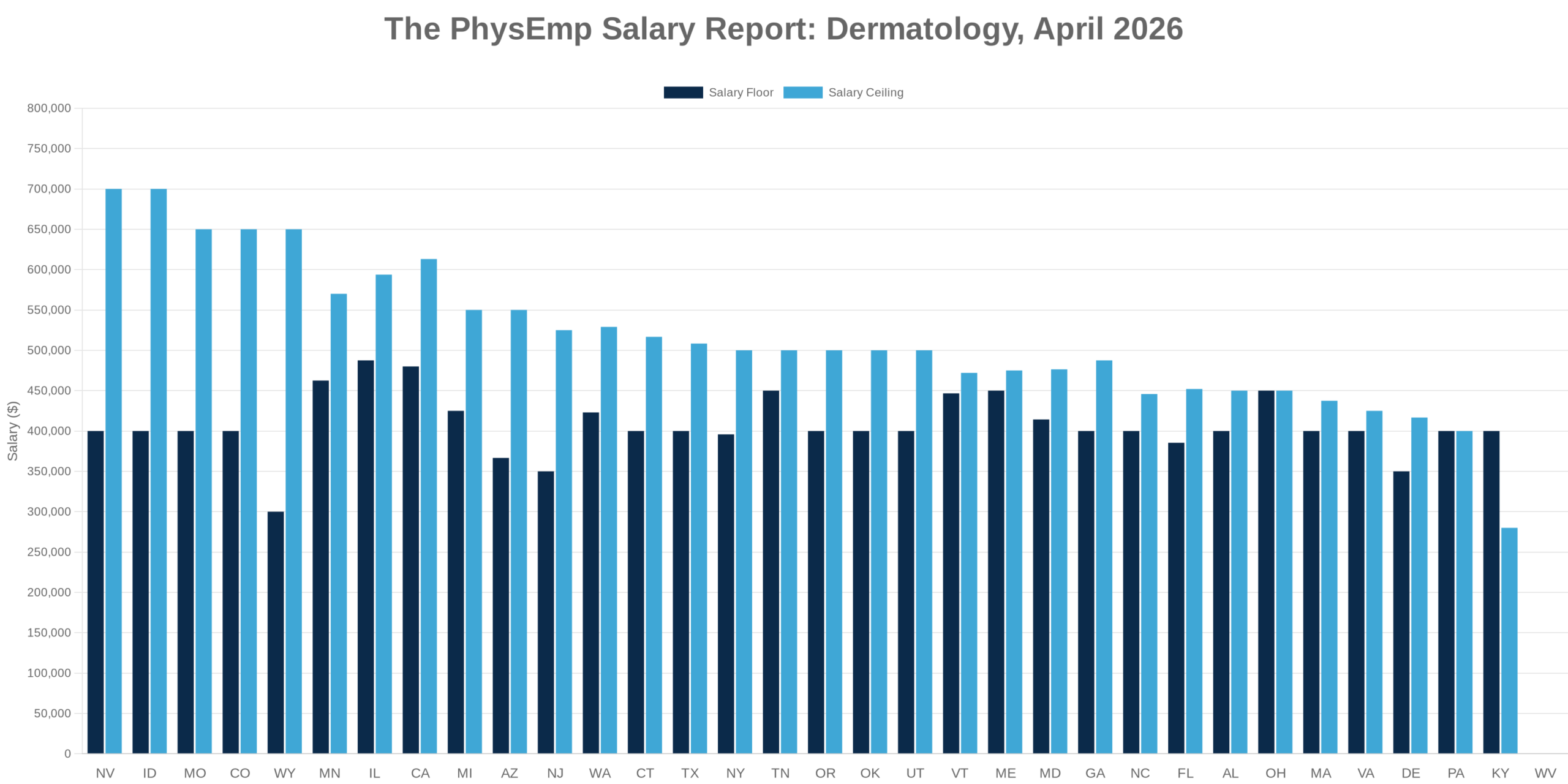

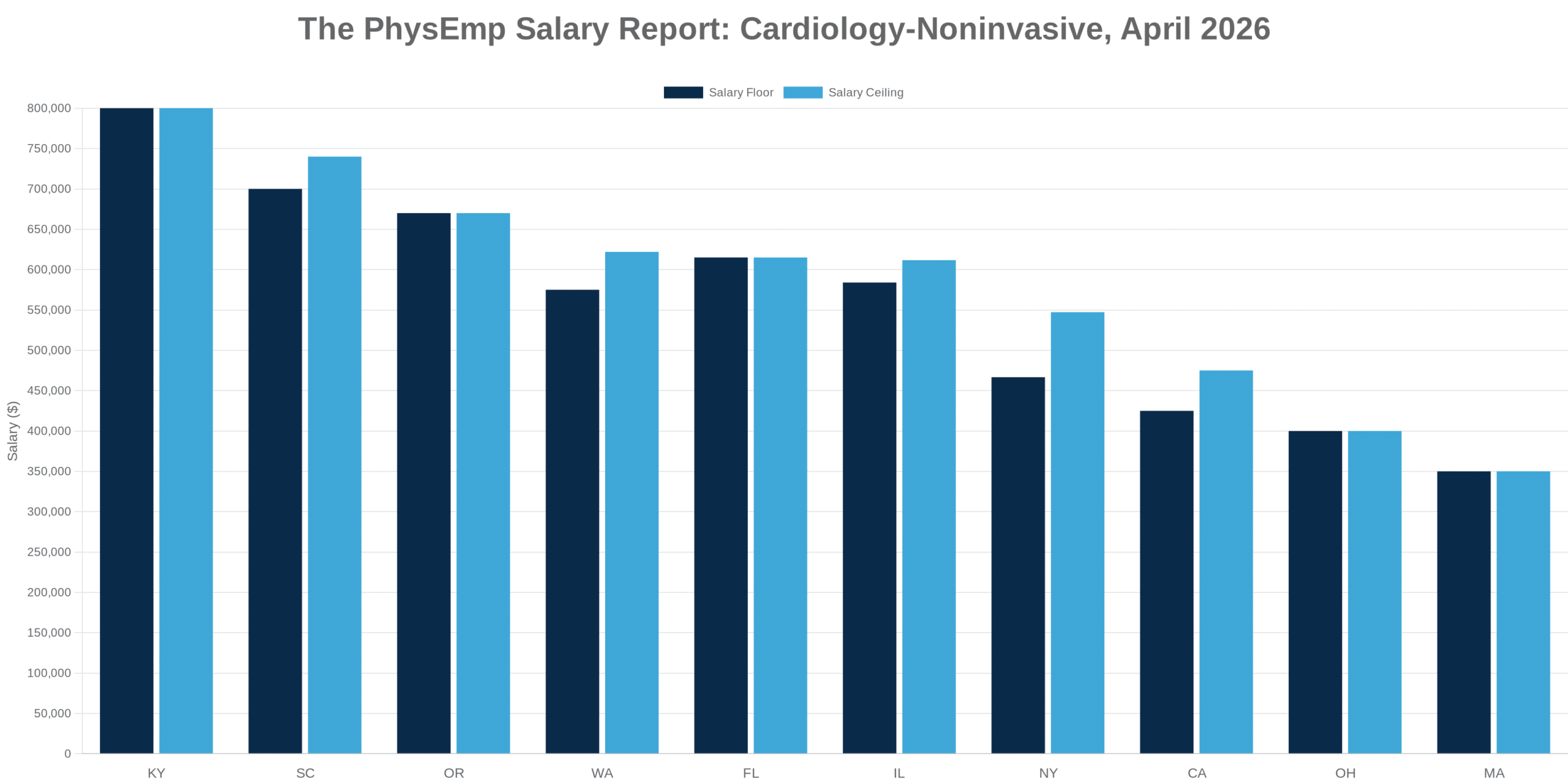

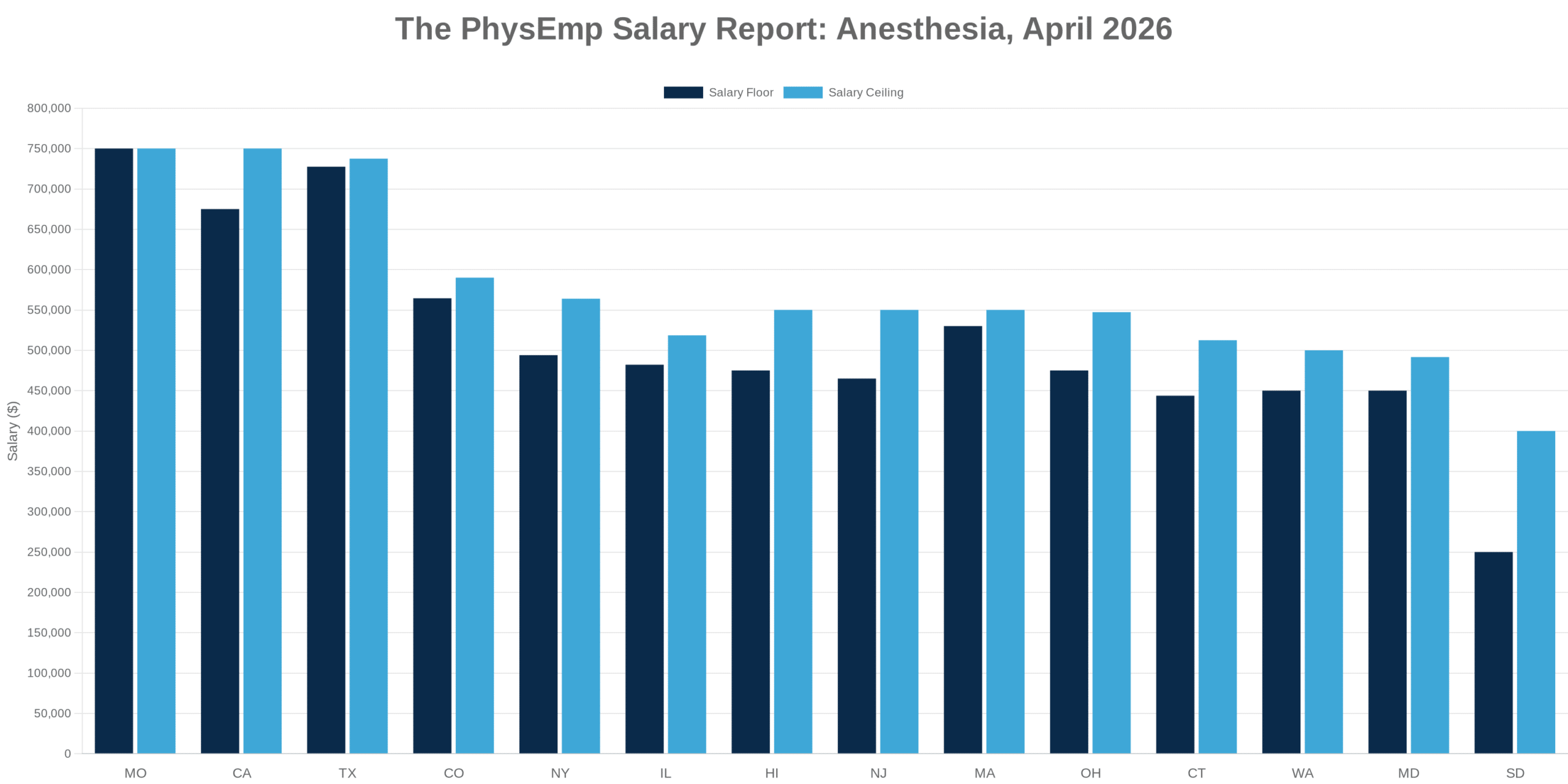

Recruiting strategies must evolve accordingly. Employers should prioritize candidates who can operate within AI-augmented workflows, interpret algorithmic outputs, and translate those signals into safe clinical decisions. In addition, cross-functional hires who bridge clinical expertise, informatics, and compliance will become valuable. For example, platforms like PhysEmp can help connect hiring teams with clinicians experienced in AI-enabled clinical and administrative settings, supporting more targeted recruitment for these evolving needs.

Conclusion: Integrate with guardrails, measure outcomes

The dual adoption of AI—by patients seeking immediate mental health support and by clinics automating administrative tasks—offers tangible benefits in access and efficiency. Realizing those benefits at scale requires intentional governance: interoperable escalation pathways, clear safety metrics, robust privacy protections, and workforce development plans that build AI fluency.

Where stakeholders align on evaluation standards and monitoring, AI can improve access and reduce operational burden. Absent that alignment, these technologies risk amplifying fragmentation, creating safety blind spots, and shifting burden onto clinicians and administrative staff without commensurate resources or oversight.