Why this theme matters now

Healthcare organizations are deploying artificial intelligence at an accelerating pace, from clinical decision support to operational automation. That momentum creates opportunity, but it also raises the stakes on three interdependent conditions that determine whether AI improves outcomes or magnifies harm: a digitally literate workforce, robust cybersecurity and operational resilience, and clinician leadership that shapes governance and trust. These prerequisites must be built into how AI is structured in physician employment and clinical practice; they are no longer optional technicalities but strategic levers that determine program success, patient safety, and regulatory exposure.

Digital literacy: workforce capability as a limiting factor

Adoption succeeds or fails at the human-technology interface. Clinical teams, IT staff, and operational leaders must understand not only AI outputs but also their limitations, failure modes, and appropriate contexts for use. Without that baseline competency, organizations risk misinterpreting model outputs, misconfiguring systems, and failing to detect drift or bias. Digital literacy reduces misuse, speeds troubleshooting, and contributes to more meaningful data collection, all of which improve model performance over time.

Digital literacy is less an HR checkbox than a continuous capability development program: measuring comprehension, embedding explainability in workflows, and creating rapid feedback loops between front-line users and model owners.

Cybersecurity and resilience: protecting AI’s integrity

Introducing AI creates new attack surfaces. Models trained on sensitive health data or integrated into clinical workflows become targets for data exfiltration, model inversion, and adversarial manipulation. Moreover, supply-chain risks emerge when third-party models or cloud services are used without rigorous vetting. Cyber incidents that compromise model inputs or outputs can produce direct clinical harm or degrade clinician trust, even if patient data is not exposed.

Security for healthcare AI must be proactive: threat modeling for model-specific attacks, continuous monitoring for anomalous predictions, and layered controls across data, model, and deployment endpoints.
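As one illustration of continuous monitoring for anomalous predictions, the sketch below tracks the share of positive predictions per batch and flags a batch that departs sharply from recent history. It is a minimal example under stated assumptions, not a recommended control: the class name, the 30-batch window, the z-score threshold of 3, and the minimum spread of 0.01 are all hypothetical choices that a security or model-operations team would tune for its own setting.

```python
from collections import deque
from statistics import mean, pstdev


class PredictionRateMonitor:
    """Flags batches whose positive-prediction rate departs from recent history.

    All defaults are illustrative assumptions, not clinical or security guidance.
    """

    def __init__(self, window=30, z_threshold=3.0, min_history=5, min_sigma=0.01):
        self.history = deque(maxlen=window)  # recent per-batch positive rates
        self.z_threshold = z_threshold
        self.min_history = min_history
        self.min_sigma = min_sigma  # floor so a perfectly stable history is not hypersensitive

    def check(self, predictions):
        """Return True if the batch looks anomalous; normal batches join the history."""
        if not predictions:
            raise ValueError("empty batch")
        rate = sum(predictions) / len(predictions)
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = max(pstdev(self.history), self.min_sigma)
            anomalous = abs(rate - mu) / sigma > self.z_threshold
        if not anomalous:
            # Only normal batches update the baseline, so an attack cannot
            # quietly shift what counts as "usual".
            self.history.append(rate)
        return anomalous


monitor = PredictionRateMonitor()
for _ in range(10):
    monitor.check([1] * 10 + [0] * 90)      # typical batches: 10% positive
flagged = monitor.check([1] * 90 + [0] * 10)  # sudden jump to 90% positive
```

A flagged batch would feed the escalation path rather than trigger automatic action; a sudden shift can reflect manipulated inputs, an upstream data fault, or a genuine change in the patient population, and telling these apart requires human review.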

Clinician leadership: governance, trust, and accountability

Clinicians are the translators between algorithmic insight and patient care. Their involvement in procurement, validation, and governance frameworks determines whether AI tools are clinically meaningful and ethically deployed. When clinicians lead, governance focuses on clinical validity, workflow fit, and patient-centered outcomes rather than purely technical metrics. Leadership also provides a credible human interface to patients and regulators — essential for informed consent and shared decision-making.

Operationalizing clinician leadership

Practical mechanisms include multidisciplinary AI review boards, clinician-run pilots with defined outcome measures, and formal escalation pathways for unexpected model behavior. Embedding clinicians in vendor negotiations and SOWs (statements of work) ensures contractual accountability for performance and safety, including post-deployment monitoring obligations.

How the three prerequisites interact

These three factors are mutually reinforcing. A digitally literate clinician base detects anomalies sooner and escalates them, enabling security teams to respond. Strong cybersecurity reduces the number of incidents that would erode clinician trust. Clinician-governed validation establishes the acceptance criteria that digital literacy programs teach clinicians to monitor. When one area is weak, the others bear increased burden: inadequate training forces clinicians to rely on vendor claims; poor security generates incidents that training cannot anticipate.

Implications for procurement, deployment, and talent

Healthcare leaders should reframe AI initiatives as institutional capability-building rather than point technology purchases. Procurement should require evidence beyond accuracy metrics: independent validation in relevant clinical populations, documented adversarial testing, and commitments for post-deployment monitoring and model updates. Contracts must allocate responsibilities for incident response and require explainability and audit trails.

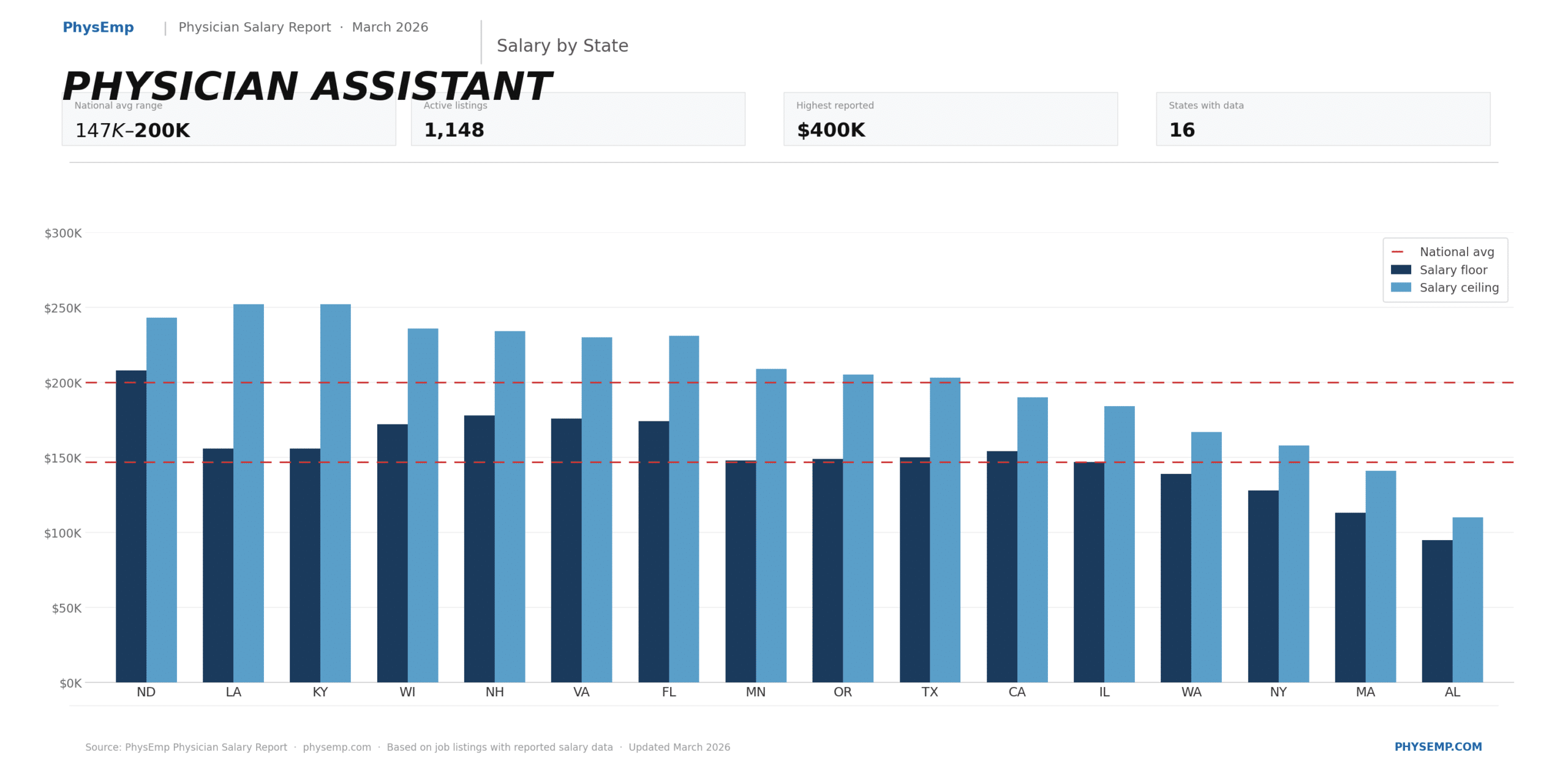

From a talent perspective, hiring priorities should shift. Organizations need clinicians who understand AI’s operational impacts, data scientists with domain expertise, and cybersecurity engineers versed in model-specific threats. This cross-functional talent profile is scarce; building it requires targeted recruitment, role redefinition, and retention strategies. Platforms that connect AI-aware clinicians with technical experts can help match candidates to these needs quickly, a gap that specialist job boards and marketplaces are positioned to fill. Consider aligning workforce-development investments with recruitment: certification programs, protected time for AI governance work, and career paths that combine clinical practice with informatics responsibilities.

Regulatory and governance outlook

Regulators are increasingly focused on safety, explainability, and monitoring. Anticipate requirements for systematic reporting of adverse events, clearer expectations for real-world performance monitoring, and stronger vendor accountability. Governance frameworks should therefore define performance baselines, drift detection thresholds, and reporting workflows that align with regulatory expectations and payer scrutiny.
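To make "drift detection thresholds" concrete, the sketch below compares a baseline sample of model risk scores with a recent sample using the population stability index (PSI), one common drift statistic among several. It assumes scores lie in [0, 1]; the function names are hypothetical, and the 0.1 and 0.25 cut-offs are a widely used rule of thumb rather than a regulatory requirement, so a governance board would set its own values.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of scores in [0, 1], using equal-width bins."""

    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            idx = min(int(s * bins), bins - 1)  # a score of 1.0 goes in the last bin
            counts[idx] += 1
        total = len(scores)
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-4) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_status(psi, warn=0.1, alert=0.25):
    """Map a PSI value to a reporting tier; the thresholds are illustrative assumptions."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"


baseline_scores = [i / 100 for i in range(100)]  # scores spread across the range
recent_scores = [0.95] * 100                     # scores collapsed near the top
status = drift_status(population_stability_index(baseline_scores, recent_scores))
```

In a governance framework, each tier would map to a defined reporting workflow, for example a "warn" prompting review by the model owner and an "alert" triggering the formal escalation pathway.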

Implications for the healthcare industry and recruiting

For health systems and vendors, the lesson is that responsible AI adoption is multidisciplinary and long-term. Organizations that invest early in workforce digital literacy, embed clinician leadership in procurement and governance, and harden AI deployments against cyber threats will not only reduce risk but also accelerate value realization. Failure to invest in these areas will slow adoption, increase total cost of ownership, and expose institutions to reputational and regulatory risk.

For recruiting specifically, demand will grow for hybrid professionals: clinicians with digital fluency, engineers familiar with regulated health environments, and cybersecurity specialists who understand model threats. Recruiters and job platforms should surface role descriptions that emphasize governance experience, evidence of clinical validation work, and capabilities in continuous monitoring. Employers should package development pathways and governance responsibilities into roles to attract talent that values mission-driven, safety-first AI work.

Conclusion

AI’s promise in healthcare depends less on raw algorithmic performance and more on institutional readiness: digitally fluent users, defenses that protect model integrity, and clinician-led governance that keeps patient welfare central. These are strategic investments that determine whether AI deployments deliver sustainable clinical and operational benefits, or become expensive liabilities. Organizations that treat these prerequisites as core capabilities—not optional add-ons—will lead the next wave of safe, effective AI in healthcare.

Sources

AI adoption in healthcare requires digital literacy – MobiHealthNews

AI Adoption in Healthcare Doubles, But Cybersecurity Risks Loom Large – HIT Consultant

Clinicians must lead on AI in healthcare, say medical bodies – Rama on Healthcare