Why this theme matters now

After years of promising prototypes and pilot deployments, artificial intelligence in diagnostic imaging is producing reproducible, clinically meaningful results. Multiple recent large trials and implementation studies now show improvements not only in algorithm metrics but in patient-centered outcomes and day-to-day radiology workflows. That shift marks a maturation of AI in healthcare, changing how health systems, vendors, and talent teams approach adoption and oversight.

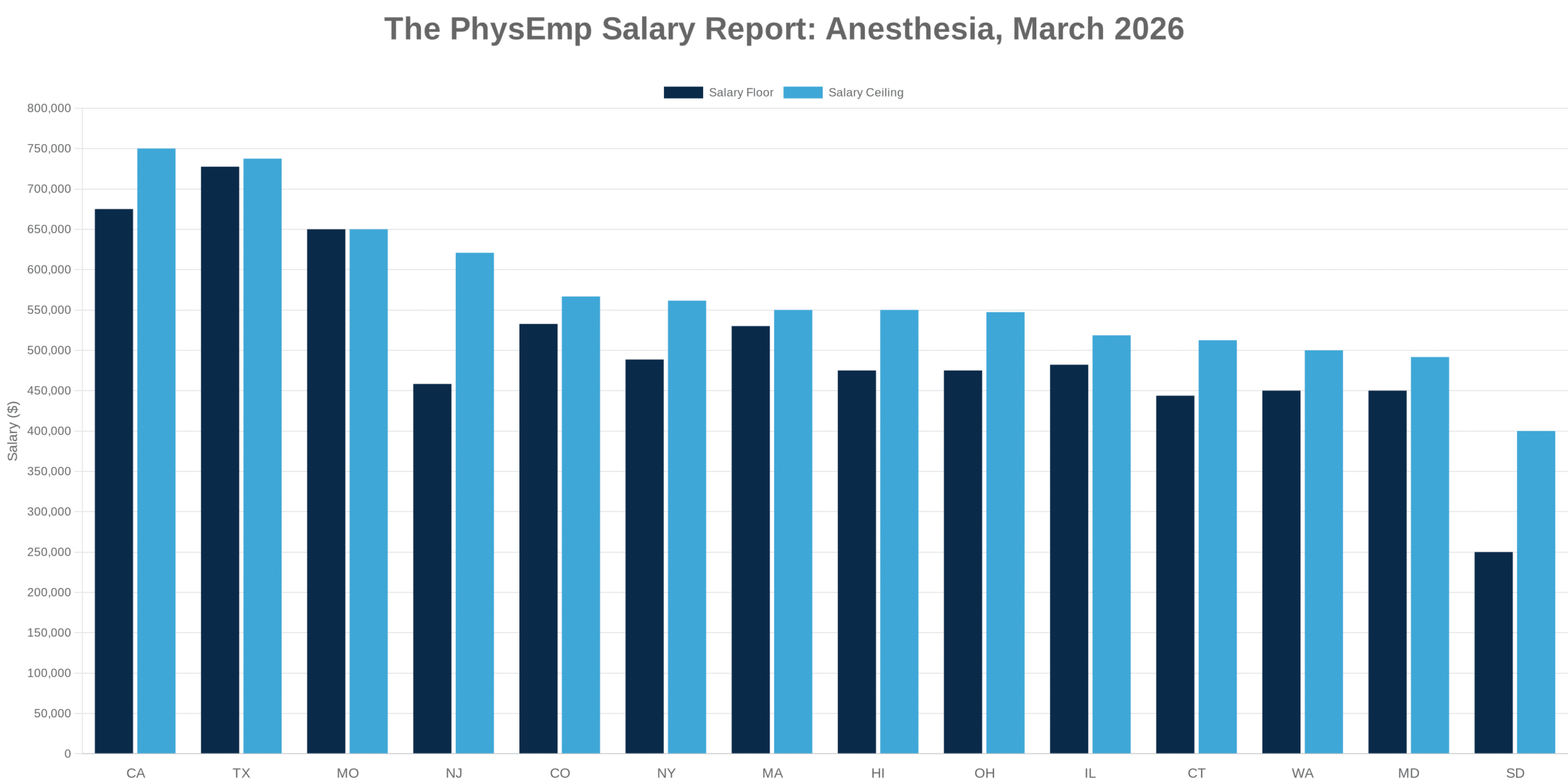

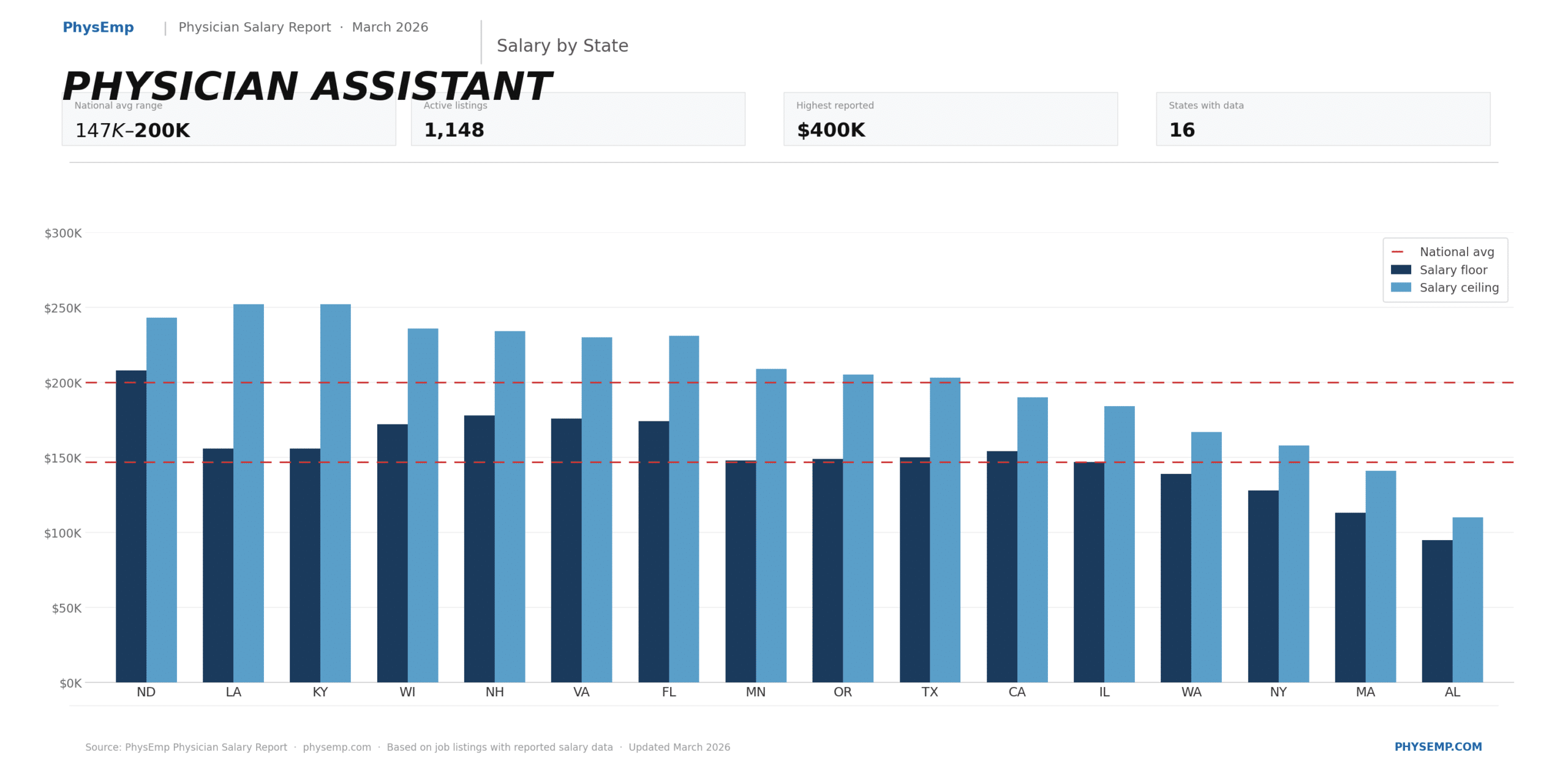


Clinical validation in breast screening: moving beyond retrospective claims

Recent randomized and large-scale prospective evaluations have tested AI as an adjunct to population mammography screening rather than as a standalone lab benchmark. These studies report that adding AI to radiologist reading increases the number of cancers detected at screening and decreases the number diagnosed between scheduled screens (interval cancers). Importantly, these results come from controlled trials with patient-level outcomes, a higher evidentiary standard than typical retrospective performance studies.

That level of validation matters for regulators and payers: demonstrating an impact on interval cancer rates and real-world detection changes the calculus for reimbursement, clinical pathways, and liability frameworks. For radiology practices, validated adjunctive tools can be positioned as quality-improvement interventions rather than speculative productivity hacks.

Call Out: Trials that link AI to patient outcomes unlock a shift from vendor-led adoption to clinician-guided implementation—creating leverage for health systems to demand robust post-deployment monitoring and performance guarantees.

Interpretability and diagnostic specificity: reducing false positives

One thread across recent research is the emphasis on interpretable models that stratify risk instead of producing opaque binary labels. In breast and MRI contexts, explainable approaches help separate high-risk findings from benign ones, which reduces false-positive diagnoses and unnecessary follow-up workups. Fewer false positives bring two benefits: less anxiety for patients, and lower costs and less imaging-cascade pressure on radiology departments.

Interpretability also matters for adoption. Clinicians are more likely to accept assistance when they can inspect the model’s reasoning or risk stratification. From a governance perspective, interpretable outputs support audit trails and targeted re-review when algorithm performance diverges from expectations.

Workflow augmentation: structured reporting and chest radiography

Beyond cancer detection, AI is showing measurable gains in everyday imaging workflows. Structured-reporting tools combined with automated image triage and natural-language assistance can standardize chest x-ray interpretation, accelerate report generation, and route urgent findings more reliably. These workflow-oriented gains address two perennial problems: variability in report content and delays in communicating critical results.
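To make the triage idea concrete, here is a minimal sketch of a reading worklist that surfaces the most urgent studies first. It is an illustration only, not how any particular product works: the `Worklist` class, the study IDs, and the 0-to-1 `urgency` score are all hypothetical, and a real system would take its scores from a validated model and its ordering rules from clinical policy.

```python
import heapq
from itertools import count


class Worklist:
    """Hypothetical reading worklist ordered by an AI urgency score."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker: earlier arrivals are read first

    def add(self, study_id, urgency):
        # `urgency` is an assumed model output in [0, 1]; higher means more urgent.
        if not 0.0 <= urgency <= 1.0:
            raise ValueError("urgency must be between 0 and 1")
        # heapq is a min-heap, so the score is negated to pop the highest first.
        heapq.heappush(self._heap, (-urgency, next(self._arrival), study_id))

    def next_study(self):
        # Return the most urgent study; equal scores come out in arrival order.
        _, _, study_id = heapq.heappop(self._heap)
        return study_id

    def __len__(self):
        return len(self._heap)


worklist = Worklist()
worklist.add("CXR-001", 0.10)  # likely routine
worklist.add("CXR-002", 0.92)  # possible critical finding
worklist.add("CXR-003", 0.92)  # same score, arrived later

while len(worklist):
    print(worklist.next_study())
```

The arrival counter is a deliberate choice: without it, studies with identical scores would be ordered by their IDs rather than by how long they have been waiting.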

Workforce effects here are subtle but significant. When AI handles routine prioritization and templated reporting, radiologists can spend more time on complex cases and consultative work. That rebalancing changes how productivity should be measured: fewer routine reads per shift does not necessarily mean lower output if complexity and value per case increase.

Call Out: Workflow AI that standardizes reporting and prioritizes critical studies can improve throughput without sacrificing diagnostic quality—this creates an operational opportunity to redesign radiology roles around high-value interpretation and clinical collaboration.

Agentic AI and the boundary of autonomy

Discussion of ‘agentic’ AI—systems with more autonomous decision-making and action—has intensified alongside these clinical results. While the current evidence base supports augmented, human-centered tools, the prospect of AI that initiates workflows or autonomously flags and communicates findings raises regulatory, ethical, and liability questions. Establishing clear boundaries for autonomy and human oversight will be critical as vendors push features that offload more routine decisions to software.

For hospitals and radiology groups, this means investing in governance frameworks that define acceptable automation, monitor real-world performance, and specify fail-safes. It also shapes contracting: procurement should include service-level expectations and shared-responsibility clauses for downstream harms related to automated actions.
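As one illustration of what monitoring real-world performance with a fail-safe could look like, the sketch below checks whether a tool's observed sensitivity over a batch of confirmed cases has fallen below an agreed baseline. Everything here is an assumption made for the example: the `Case` record, the function names, and the baseline and tolerance values are hypothetical, and an actual program would set its metrics, thresholds, and escalation steps through clinical governance.

```python
from dataclasses import dataclass


@dataclass
class Case:
    ai_flagged: bool          # did the tool flag this study?
    confirmed_positive: bool  # was disease later confirmed?


def sensitivity(cases):
    """Share of confirmed-positive cases that the tool flagged."""
    positives = [c for c in cases if c.confirmed_positive]
    if not positives:
        return None  # no confirmed positives yet, so nothing to measure
    return sum(c.ai_flagged for c in positives) / len(positives)


def needs_review(cases, baseline, tolerance):
    """True when observed sensitivity drops below baseline minus tolerance."""
    observed = sensitivity(cases)
    if observed is None:
        return False
    return observed < baseline - tolerance


# Hypothetical review window: 10 confirmed cancers, 7 flagged by the tool.
window = [Case(True, True)] * 7 + [Case(False, True)] * 3 + [Case(False, False)] * 40

print(sensitivity(window))
print(needs_review(window, baseline=0.90, tolerance=0.05))
```

A check like this is only as good as its follow-up data: sensitivity cannot be measured until outcomes are confirmed, which is one reason longitudinal surveillance needs dedicated staff and budget.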

Implications for the healthcare industry and recruiting

The shift from experimental to evidence-based AI in radiology changes talent needs. Health systems will increasingly require clinicians with AI literacy, informaticians who can validate and monitor models in production, and operations managers who can redesign workflows around augmented interpretation. Recruiters should expect new combined roles: clinical-AI leads, deployment engineers embedded in imaging departments, and regulatory/compliance specialists versed in algorithm governance.

For prospective hires, the value proposition changes: expertise in model evaluation, data-shift detection, and human-AI interface design becomes as valuable as traditional subspecialty skills. Organizations that move early to hire cross-functional teams that include radiologists, data scientists, and quality experts will be better positioned to translate trial-level benefits into consistent clinical practice.

At the same time, vendors and health systems should plan for longitudinal monitoring budgets and staff positions dedicated to post-deployment surveillance. The most successful implementations will treat AI as an ongoing clinical service—requiring training, governance, and iterative improvement—rather than a one-time software purchase.

Conclusion

Recent high-quality studies mark a turning point: AI in radiology is graduating from promising algorithms to tools with documented clinical effects and operational value. That evidence creates both an opportunity and an obligation. Opportunity, because validated tools can improve detection, reduce false positives, and streamline workflows; obligation, because integration demands robust governance, new skill sets, and continuous performance monitoring.

For hiring teams and leaders, the implication is clear: build multidisciplinary capabilities now. Recruit clinicians who can evaluate and guide AI, technologists who can sustain and monitor deployed models, and managers who can rework workflows to realize the value demonstrated in trials. For employers and candidates exploring these roles, resources and job connections can be found via PhysEmp.

Sources

Agentic AI in Radiology – Radiology (RSNA Journals)

Structured reporting, AI improve chest x-ray workflows – AuntMinnie