Why this theme matters now

Leading academic health systems are no longer experimenting with AI as a novelty; they are formalizing repeatable processes for deploying AI in clinical settings. That shift matters because AI’s promise (efficiency gains, improved diagnostics, and decision support) collides with complex risks: patient safety, workflow disruption, biased outcomes, and regulatory uncertainty. How top institutions navigate those trade-offs will shape standards, vendor behavior, and hiring priorities across the health sector.

Governance and staged rollout: building guardrails before scale

Top medical centers are instituting cross-functional governance frameworks to manage AI adoption. These frameworks commonly define who signs off on pilots, how risk thresholds are set, and what monitoring is required post-deployment. Rather than pushing models directly into high-stakes use, institutions favor phased deployments: retrospective validation, prospective shadow testing, clinician-in-the-loop pilots, then limited clinical use with continuous outcome monitoring. This staged approach reduces the chance of unanticipated harms and creates a roadmap for measuring impact.
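To make the phased approach concrete, here is a minimal Python sketch of a stage gate. Every name in it (`Stage`, `GateReview`, `next_stage`) and the three gate criteria are illustrative assumptions, not a description of any institution's actual process. The point is only that a model advances one stage at a time, and only when an explicit review passes.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    """The four phases named in the text, in order."""
    RETROSPECTIVE_VALIDATION = 1
    SHADOW_TESTING = 2
    CLINICIAN_IN_THE_LOOP_PILOT = 3
    LIMITED_CLINICAL_USE = 4


@dataclass
class GateReview:
    """Hypothetical evidence a governance committee reviews at each gate."""
    signed_off_by_committee: bool
    observed_metric: float       # e.g. sensitivity measured in this stage
    required_metric: float       # risk threshold set by governance
    open_safety_incidents: int


def next_stage(current: Stage, review: GateReview) -> Stage:
    """Advance exactly one stage if every gate criterion is met, else stay put."""
    passed = (
        review.signed_off_by_committee
        and review.observed_metric >= review.required_metric
        and review.open_safety_incidents == 0
    )
    if not passed or current is Stage.LIMITED_CLINICAL_USE:
        return current
    return Stage(current + 1)
```

In this sketch a model in shadow testing that misses its threshold simply stays in shadow testing. Real gates would likely weigh more evidence than a single metric, but the one-step-at-a-time structure is the part that matters.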

Call Out: Institutional governance that enforces phased testing and continuous monitoring reduces downstream harm and creates a defensible path for wider AI deployment—transforming AI from a point solution into an operational capability.

Clinician engagement and education: making AI a team sport

Deliberate adopters treat clinicians as partners, not just end users. Successful programs invest in training that explains model limitations, appropriate use cases, and how to interpret probabilistic outputs within clinical reasoning. They also build channels for clinician feedback—structured incident reporting and model performance dashboards—that feed back into model refinement. This two-way engagement addresses two common failure modes: mismatch with real-world workflows and clinician mistrust that leads to under- or over-reliance.

Designing workflows, not widgets

Effective integration prioritizes workflow fit. That means embedding alerts and recommendations into existing EHR pathways with attention to timing, cognitive load, and escalation rules. When AI augmentations feel like added noise, clinicians ignore them; when they are tightly integrated and transparently explain their logic and confidence, uptake improves and utility becomes measurable.

Validation, safety, and bias mitigation: operationalizing rigor

Academic centers are converging on rigorous validation practices that resemble clinical trial methodology more than traditional software testing. This includes pre-deployment performance characterization across patient subgroups, prospective evaluation of clinical endpoints where feasible, and explicit plans for model drift detection. Safety engineering practices—such as rollback mechanisms, human overrides, and incident response playbooks—are baked into deployment plans to contain unanticipated failure modes.
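As a rough illustration of two of these checks, the Python sketch below computes sensitivity per patient subgroup and a population stability index (PSI) as one possible drift signal. PSI is an assumption chosen for illustration; the practices described here do not prescribe a specific drift metric. The function names and the record layout are likewise hypothetical.

```python
import math
from collections import defaultdict


def sensitivity_by_subgroup(records):
    """records: iterable of (subgroup, y_true, y_pred) with 0/1 labels.

    Returns {subgroup: sensitivity} for subgroups with at least one positive case.
    """
    tp = defaultdict(int)  # true positives per subgroup
    fn = defaultdict(int)  # false negatives per subgroup
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    groups = set(tp) | set(fn)
    return {g: tp[g] / (tp[g] + fn[g]) for g in groups}


def population_stability_index(expected_fracs, actual_fracs, eps=1e-6):
    """PSI between two binned distributions given as lists of bin fractions.

    Zero means the distributions match; larger values mean more shift.
    eps guards against log(0) when a bin is empty.
    """
    psi = 0.0
    for e, a in zip(expected_fracs, actual_fracs):
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi
```

A large gap in sensitivity between subgroups, or a PSI that rises over time on a key input feature, would be the kind of signal that triggers review. The thresholds that count as "large" are a governance decision and are deliberately left out of this sketch.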

Call Out: Requiring subgroup performance checks and drift monitoring as part of any deployment contract forces vendors to treat robustness and equity as first-order deliverables, not optional features.

Data stewardship and interoperability: the unsung enabler

Reliable AI requires curated, interoperable data pipelines. Institutions approaching AI thoughtfully invest in data governance: provenance tracking, standardized data definitions, and infrastructure for labeled, annotated datasets that are suitable for both development and independent validation. They also negotiate vendor responsibilities for data handling, ensuring that model updates don’t introduce silent shifts in behavior when upstream data changes.

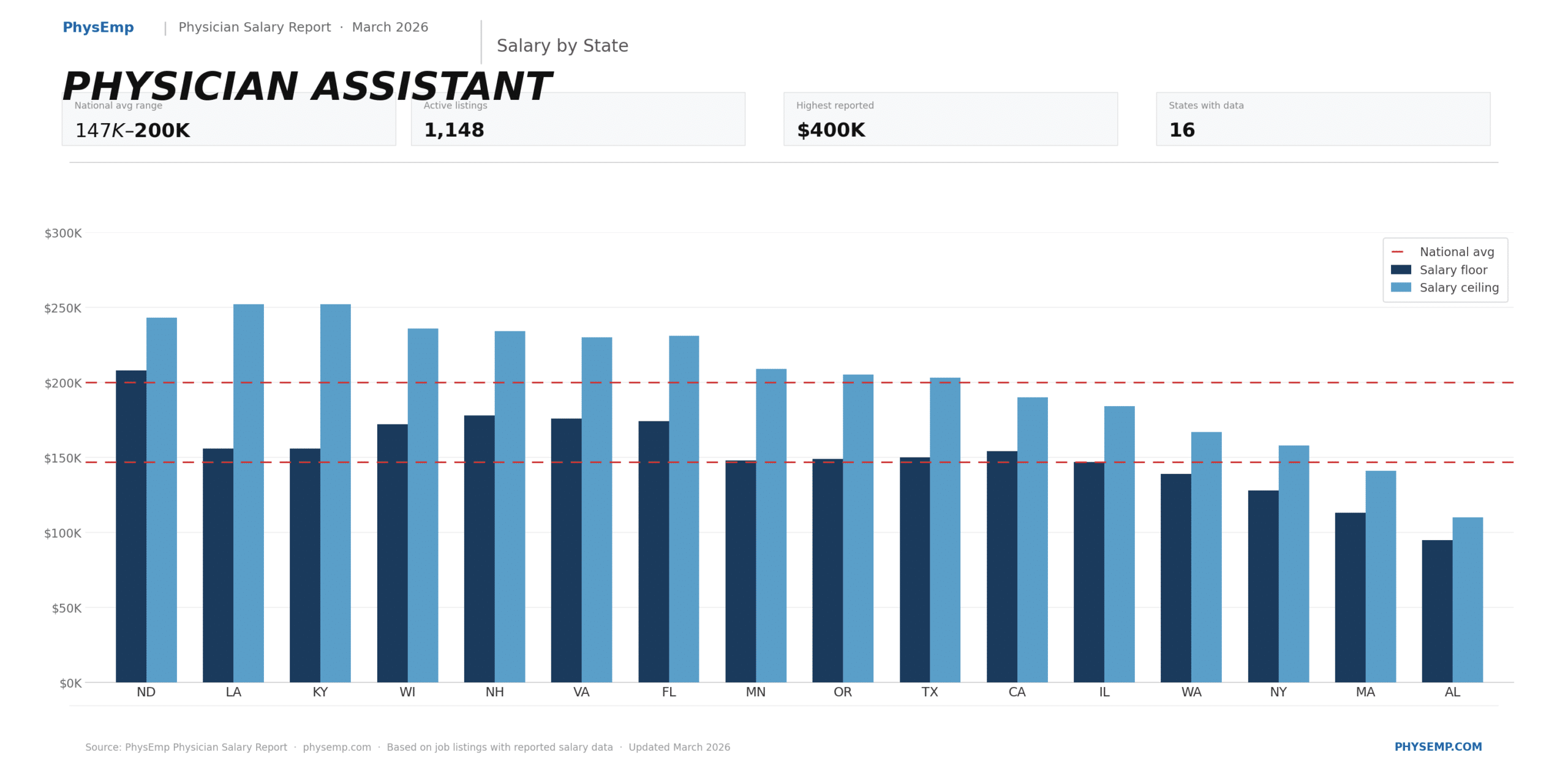

Workforce and recruiting implications

These institutional practices change the kind of talent health systems need. Recruiting priorities now include clinician-data scientists, health-focused ML engineers, implementation scientists, and roles such as AI safety officers and clinical informaticists who can translate between technical teams and front-line providers. Organizations that hire broadly across these disciplines will move faster and more safely; those that lack this multidisciplinary bench risk slower, riskier deployments.

For hiring teams, the shift is practical: evaluate candidates for skills in clinical context translation, post-deployment monitoring, and ethical risk assessment—beyond traditional model-building prowess.

Implications for healthcare leaders and recruiters

Deliberate adoption creates a competitive advantage: it reduces downstream remediation costs, accelerates safe scaling, and enhances stakeholder trust. For executives, this means investing early in governance, validation infrastructure, clinician education, and multidisciplinary hiring. For recruiting leaders, it means redefining job descriptions, prioritizing candidates with hybrid profiles, and partnering with specialized job platforms to source talent who understand both clinical complexity and ML lifecycle management.

Regulators and payors will watch how leading academic centers operationalize safety and measurement. Institutions that codify transparent validation outcomes and post-market surveillance data are better positioned to influence coverage decisions and contribute to pragmatic standards that others can adopt.

Conclusion: from pilot projects to resilient capability

Academic medical centers are moving from isolated AI pilots to institutional capabilities that balance innovation with patient safety. That transition is not automatic; it requires intentional governance, clinician partnership, rigorous validation, and new kinds of hires. The centers that succeed will be those that view AI adoption as an organizational competence—one that demands process, people, and persistent measurement—rather than a one-off technology procurement.