Why this theme matters now

Healthcare is on the cusp of an operational shift: machine learning models and decision-support tools are moving from pilot projects into routine clinical workflows. As these tools proliferate, risk shifts from purely technical model performance to how clinicians and systems use, monitor, and govern them. Academic medical centers are uniquely positioned to lead because they can embed training within structured governance and assurance programs, the systems that determine whether AI augments care safely and equitably.

Comparing educational approaches: degree programs vs. embedded training

Universities are experimenting with different curricular vehicles to raise AI literacy across the workforce. One path is formal, degree-based programs that provide deep, structured instruction in AI methods applied to biomedical problems. These programs typically cover data science fundamentals, translational research methods, and domain-specific applications — creating graduates who can design, validate, and shepherd models through clinical translation.

The complementary approach embeds AI training into existing clinical education through short courses, fellowships, and modular upskilling for clinicians and allied health professionals. This model emphasizes applied competence: understanding model outputs, recognizing failure modes, and integrating AI-driven insights into clinical decision-making, without requiring clinicians to become data scientists.

The two approaches serve different needs. Degree programs supply technical depth and a pipeline of specialized talent; embedded training spreads baseline competence widely across clinical staff. Health systems facing immediate operational needs should prioritize scalable upskilling, while academic centers can cultivate deeper expertise through master’s and postgraduate offerings that replenish the pipeline over several years.

Call Out: Institutions should treat AI education as a two-track enterprise: broad-based clinician literacy to ensure safe adoption, plus concentrated technical programs to develop the engineers and clinician-scientists who will build and validate the tools.

Governance and assurance: building systems, not just skills

Training clinicians to use AI matters, but it’s insufficient on its own. Governance frameworks — policy, testing, monitoring, and accountability — are required to ensure models perform as intended in heterogeneous clinical settings. New assurance labs and multidisciplinary oversight committees combine expertise in informatics, ethics, biostatistics, and clinical operations to operationalize validation standards, continuous monitoring, and incident response processes.

These governance units act as intermediaries between vendors, researchers, and frontline clinicians. Their functions include pre-deployment risk assessment, post-deployment surveillance for drift and bias, and education for end users. This institutional infrastructure changes procurement and implementation workflows: models are treated not as software packages but as clinical interventions that require lifecycle management.
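Post-deployment surveillance for drift can be made concrete with a small example. The sketch below is an illustration under stated assumptions, not a method described in the source. It assumes an assurance team keeps a baseline sample of a model's inputs or risk scores from validation and compares it with recent production data. The comparison uses the population stability index (PSI), one common drift statistic. The function names are hypothetical. The 0.2 alert threshold is a widely used rule of thumb, not a clinical standard; a local governance committee would set its own.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline (validation-time) sample and a recent production sample."""
    # Equal-width bin edges are derived from the baseline distribution only.
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        eps = 1e-6  # avoid log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    # PSI = sum over bins of (actual - expected) * ln(actual / expected)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(psi: float, threshold: float = 0.2) -> bool:
    # Hypothetical alert rule; the threshold is an assumed convention, not a standard.
    return psi >= threshold


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]        # illustrative scores at validation
    recent = [0.9 + i / 1000 for i in range(100)]   # illustrative shifted production scores
    psi = population_stability_index(baseline, recent)
    print(f"PSI={psi:.3f}, alert={drift_alert(psi)}")
```

In practice, a check like this would run on a schedule for each monitored input or output. An alert would route to the oversight committee for review rather than trigger automatic action. This mirrors the shift from shipping software to managing a clinical intervention over its lifecycle.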

Workforce implications: new roles and shifting competencies

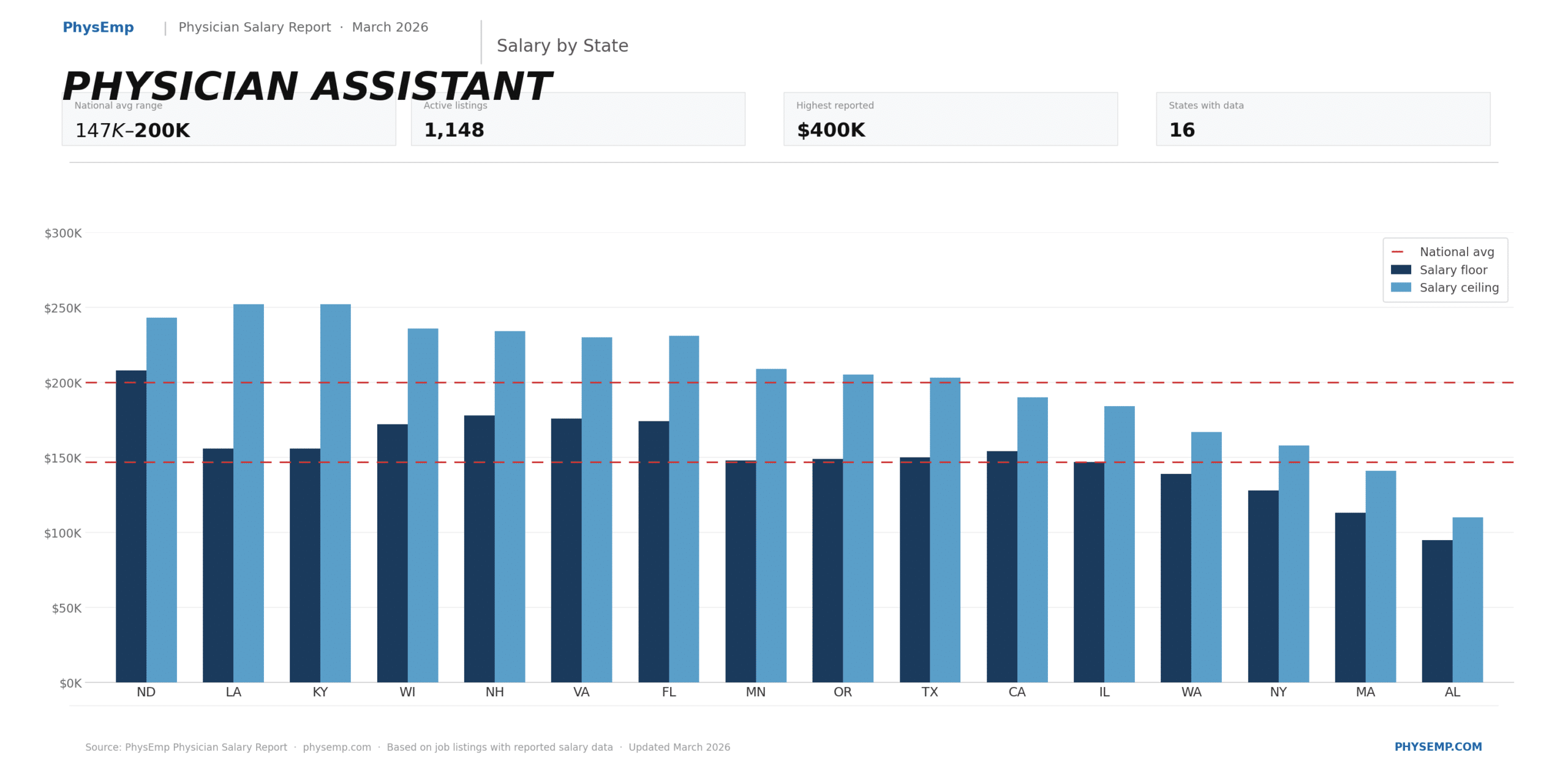

As academic programs and assurance structures mature, the healthcare labor market will evolve along three vectors. First, demand will grow for hybrid professionals — clinicians with rigorous data-science training who can bridge clinical needs and model development. Second, new operational roles will emerge: AI stewards, model governance officers, and clinical informaticists focused on model lifecycle management. Third, baseline hiring criteria will shift: employers will increasingly value demonstrable competency in AI literacy for many clinical and administrative roles.

Recruiters and workforce planners must adjust role definitions, competency frameworks, and continuing-education budgets. Organizations that proactively develop internal certification pathways, apprenticeship programs, or partnerships with academic centers will have a recruiting advantage and will be better positioned to manage risk when deploying AI at scale.

Call Out: Expect hiring rubrics to combine clinical experience with verified AI competence — whether via degree programs, certification, or documented on-the-job achievements — making structured academic offerings an important signal in the labor market.

Operationalizing partnerships between academia and health systems

Academic medical centers can act as hubs connecting education, governance, and clinical practice. Effective partnerships establish shared data governance agreements, co-design curricula tied to real-world workflows, and create secondment opportunities so clinicians can rotate through assurance labs. These ties accelerate translational work and ensure that educational programs teach skills matching operational needs.

However, partnerships require clarity on intellectual property, data stewardship, and liability. Institutions that explicitly define these terms and build joint governance structures reduce friction and speed implementation. For healthcare employers, formal relationships with academic centers become a reliable source of vetted talent and best practices.

Implications for the healthcare industry and recruiting

The shift toward institutionalized AI education and assurance has immediate recruiting consequences. Employers will need to identify and attract candidates who demonstrate both clinical credibility and AI competency. Job descriptions should specify the types of AI literacy required (interpretation, monitoring, co-design) rather than using vague statements about familiarity with AI.

Organizations should also invest in internal career ladders that recognize AI-related competencies, from clinical champions who guide adoption to technically trained clinician-scientists who lead model development. Job boards and recruiting platforms that can surface verified AI credentials and connect employers with candidates trained through recognized academic programs will add measurable value.

From a systems perspective, institutions that invest now in education and assurance reduce long-term risk: better-trained users, clearer governance, and a talent pipeline aligned with operational needs lower the probability of harmful deployments and improve adoption outcomes.

Sources

UF College of Medicine launches AI in biomedical and health sciences master’s program – UF CLAS News