This analysis synthesizes 15 sources published the week ending May 5, 2026. Editorial analysis by the PhysEmp Editorial Team.

A Harvard study showing OpenAI’s o1 model outperformed emergency physicians on diagnostic accuracy has generated a week of breathless coverage. Almost none of it engages with what the finding actually means for how physicians get hired, paid, and scheduled. The headline number, 59% diagnostic accuracy for the model versus 44% for physicians on complex emergency cases, is being treated as a science story. For anyone tracking AI in physician employment and clinical practice, it’s a hiring story.

The dominant frame is binary: will AI replace doctors? That question is already obsolete. AI diagnostic tools are reshaping what hospitals expect from physicians, how clinical teams are structured, and which metrics define physician value—well before any replacement conversation gets serious.

Beyond Replacement: The Productivity Recalibration

The Harvard study matters less for the accuracy gap than for the methodology it validated. Researchers tested o1 against real ED conditions: time pressure, incomplete information, diagnostic ambiguity. This wasn’t a tidy academic exercise. It was closer to a proof of concept for clinical testing and, eventually, operational deployment.

For hospital administrators, that creates immediate pressure to integrate AI diagnostic support into emergency workflows. The math is simple: the model scored 15 percentage points higher than physicians (59% versus 44%), so if anything like that gap holds up in practice, systems that don’t adopt such tools carry both quality and liability exposure. But integration isn’t replacement. It’s workflow redesign that alters what the physician is actually doing on shift.

Health systems will start evaluating emergency physicians less on standalone diagnostic accuracy and more on how well they synthesize AI recommendations, manage edge cases, and keep throughput moving. That’s a different competency profile than the one EM hiring has historically rewarded.

EM compensation has long been tied to high-acuity decision-making under pressure. If AI takes the first pass on diagnostic reasoning, the physician’s value shifts toward procedures, patient communication, and exception management. Recruiters should expect downward pressure on base guarantees, with AI-collaboration metrics quietly appearing in productivity bonus structures.

The Liability Paradox and Staffing Implications

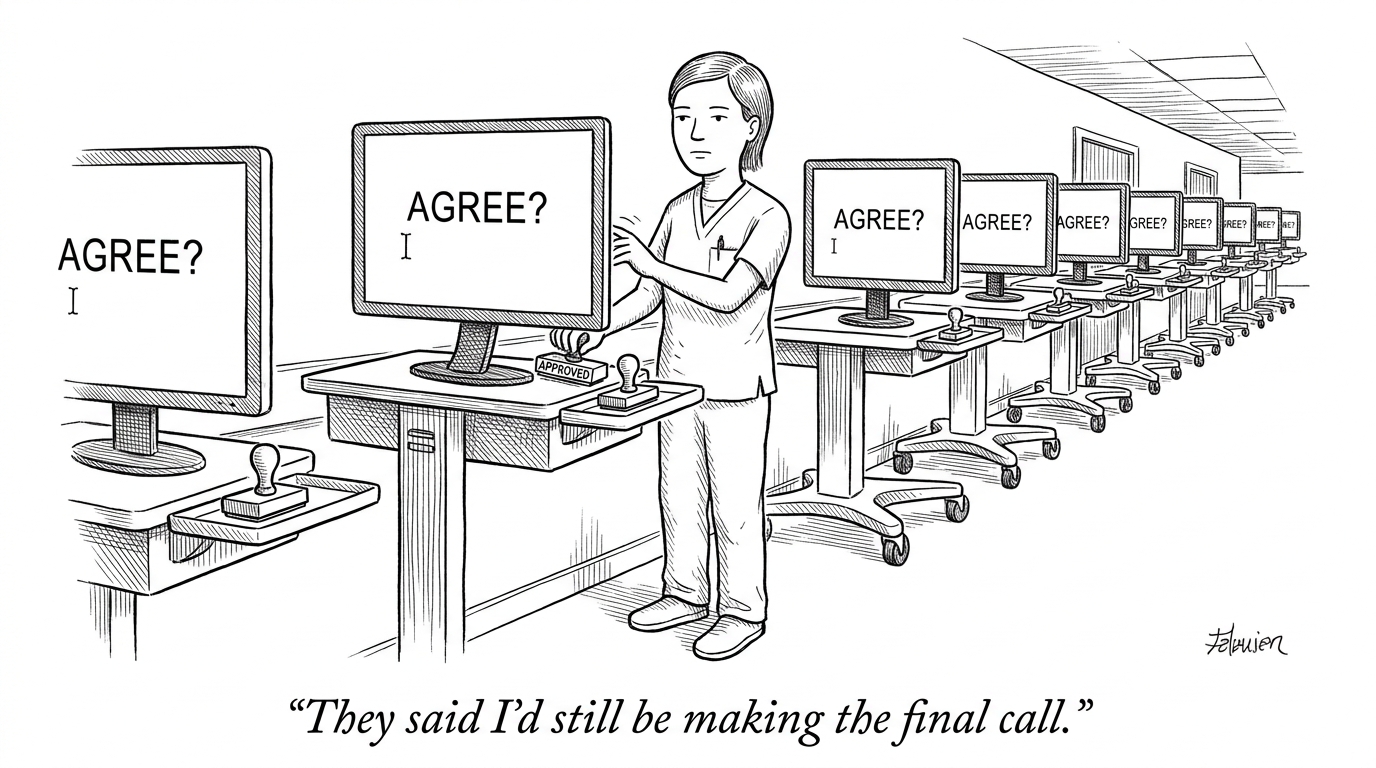

Most coverage skips the liability architecture, which is where this gets interesting. The Harvard study showed AI superiority in a research context. Clinical deployment requires a physician to accept or reject each AI recommendation. So the physician becomes legally responsible for supervising a system that demonstrably outperforms their unassisted judgment. Sit with that for a moment.

This will reshape staffing in predictable ways: AI handles initial diagnostic workup, mid-level providers run routine cases with AI support, and physicians concentrate on complex presentations, procedures, and the liability-bearing final call. The physician headcount story isn’t elimination. It’s role concentration.

Implications for Physician Demand

EDs face chronic staffing shortages. AI diagnostic support doesn’t fix the shortage—it changes its shape. Some systems will need fewer physicians per shift if AI-assisted workflows raise throughput. Others will keep headcount flat and absorb more volume. Either way, the supply-demand dynamics that have pushed EM compensation up over the past decade are about to look different.

Physicians evaluating EM offers should ask whether prospective employers have an AI integration roadmap, and how that roadmap affects expected patient volumes, shift structures, and productivity expectations. A strong comp package without an AI strategy attached may not survive the term of the contract.

Documentation and the Expanding AI Footprint

The diagnostic accuracy findings land alongside fast-accelerating deployment of ambient documentation tools. The convergence is the point. If AI handles diagnostic reasoning and clinical documentation, the physician’s workflow compresses to physical exam, procedures, and patient interaction.

That compression has compensation implications well beyond emergency medicine. Specialties where cognitive work—diagnosis, treatment planning, documentation—is the primary value contribution face the most restructuring pressure. Radiology, pathology, and the diagnostic component of primary care all sit inside AI’s expanding capability envelope.

Hospital executives building physician compensation models should expect AI-augmented productivity metrics to become standard within roughly 24 months. Contracts negotiated today without AI-adjustment provisions will likely require costly renegotiation as integration accelerates.

The Collaboration Model Takes Shape

Several outlets argued that AI’s diagnostic edge doesn’t reduce the need for physicians—it intensifies the need for physicians who can work alongside AI. True, but incomplete. The collaboration model emerging from the Harvard work positions physicians as quality assurance layers and exception handlers, not primary diagnostic engines. That’s a meaningful demotion in cognitive labor, even if the title and the salary don’t change immediately.

For candidates, this shift demands new skills. Comfort with AI interfaces, a feel for where these models fail, and the ability to override algorithmic recommendations efficiently will start showing up as differentiating factors in hiring. Physicians who resist AI integration, or who lack fluency with the tools, will see their options narrow.

For recruiters and executives, the collaboration model means rethinking credentialing and onboarding. AI-collaboration competency should be a standard evaluation dimension. Existing staff need a path to proficiency. The investment is substantial. It’s also no longer optional.

Strategic Positioning for the AI-Integrated Future

The Harvard findings will accelerate AI diagnostic deployment whether individual systems welcome it or not. Competitive pressure, liability exposure, and quality benchmarks will drive adoption. The strategic question isn’t whether to integrate. It’s how to structure physician roles, compensation, and workflows around AI capabilities that keep getting better.

Systems that move early will pick up a recruiting advantage by offering physicians clearly defined roles in AI-augmented environments instead of ambiguous transitions. Physicians who develop AI-collaboration skills now will command premium positioning when health systems start competing seriously for that talent.

What’s coming is neither physician replacement nor business as usual. It’s a restructuring of what physician work means, how it gets measured, and what it pays. Somewhere in a Boston ED right now, an attending is overruling o1 on a chest pain workup and signing the chart anyway. That’s the job, until it isn’t.

Sources

AI outperforms doctors in Harvard trial of emergency triage diagnoses – The Guardian

AI is starting to beat doctors at making correct diagnoses – Science

Study suggests AI is ‘good enough’ at diagnosing complex medical cases to warrant clinical testing – Harvard Medical School

AI Outperforms Doctors Harvard Study Finds – Harvard Magazine

In Harvard study AI offered more accurate diagnoses than emergency room doctors – TechCrunch

AI outscores doctors in ER diagnosis study finds – San Francisco Chronicle

In real-world test an AI model did better than ER doctors at diagnosing patients – Aspen Public Radio

AI doctors openai patient care diagnosis – NPR

AI helps diagnose emergency-department patients at hospital – The Boston Globe

AI outperforms human physicians on emergency diagnoses study – Becker’s Hospital Review

AI Is Starting to Outperform Doctors — Here’s Why Doctors Are Needed Now More Than Ever – Forbes

Could AI Surpass Doctors at Clinical Reasoning? – Inside Precision Medicine

Could AI surpass doctors clinical reasoning – HealthLeaders Media

Harvard study AI outdiagnose doctors OpenAI O1 preview – Fortune

An AI Just Beat Doctors at Diagnosing ER Patients – Singularity Hub