This analysis synthesizes 13 sources published the week ending Mar 24, 2026. Editorial analysis by the PhysEmp Editorial Team.

The physician workforce is caught in a paradox: the same AI tools that promise liberation from documentation burden may be quietly eroding the clinical skills that define physician value. With 81% of physicians now reporting AI use and ambient scribes proliferating across health systems, a structural tension is emerging between productivity gains and professional competency preservation. This dynamic sits at the heart of AI in Physician Employment & Clinical Practice, where the tools designed to reduce burnout are simultaneously raising fundamental questions about what it means to practice medicine—and how physicians will be compensated, evaluated, and recruited in an AI-augmented landscape.

The latest AMA survey data and Doximity’s 2026 specialty-level analysis confirm what many health systems already know: physician AI adoption has moved from experimentation to expectation. But beneath the efficiency metrics lies a growing unease among clinicians themselves—one that mainstream AI coverage consistently fails to address. The employment and labor-market implications of automation bias, deskilling, and clinical judgment erosion are not peripheral concerns; they are central to how physician careers, compensation structures, and professional autonomy will evolve over the next decade.

The Deskilling Dilemma: Productivity vs. Proficiency

“Deskilling” has emerged as the dominant concern in physician AI discourse, appearing across multiple analyses this week. That 81% of physicians report using AI tools while simultaneously flagging deskilling as their top concern looks like a contradiction, but it is an instructive one. Physicians are not rejecting AI; they are warning that uncritical adoption may degrade the very competencies that justify physician-level compensation and clinical authority.

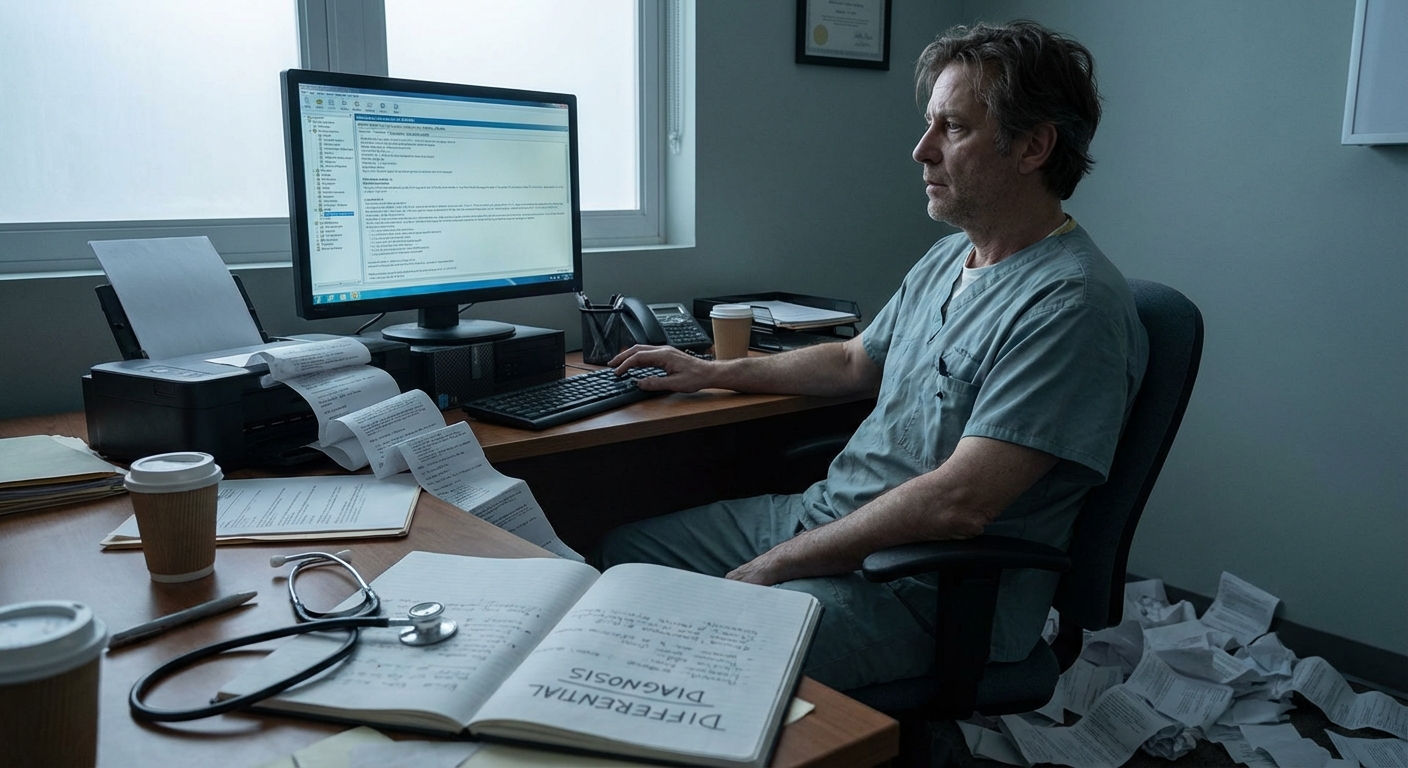

The “autopilot” metaphor now circulating in clinical circles captures the risk precisely. Ambient AI scribes that passively document encounters, suggest diagnoses, and pre-populate treatment plans reduce cognitive load—but they also reduce cognitive engagement. Over time, physicians who rely heavily on AI-generated documentation may find their differential diagnosis skills atrophying, their pattern recognition dulling, and their ability to catch AI errors diminishing. This is not speculative; it mirrors documented phenomena in aviation and other high-stakes fields where automation has been studied for decades.

For employed physicians, deskilling represents a career risk that productivity metrics alone cannot capture. A physician who becomes dependent on AI tools may find their professional mobility constrained—unable to practice effectively in settings without equivalent technology, and vulnerable to replacement by less experienced clinicians augmented by the same tools.

Hospital executives and recruiters must recognize that deskilling is not merely a quality concern—it is a workforce planning issue. Health systems investing heavily in AI documentation tools are simultaneously creating physician workforces whose skills may be tethered to specific technology stacks. This has implications for recruitment, retention, and the portability of physician talent across organizations.

Automation Bias: The Hidden Liability in AI-Assisted Care

Beyond deskilling, automation bias represents an acute patient safety and liability concern that is only beginning to receive appropriate attention. When AI scribes generate clinical notes, physicians face a cognitive trap: the documentation appears authoritative, professionally formatted, and comprehensive. The temptation to approve with minimal review is substantial, particularly for time-pressured clinicians seeing 20+ patients daily.

The risk is not that AI documentation is consistently wrong—it is that errors, when they occur, are more likely to go undetected. A physician who dictates notes develops an intimate familiarity with their documentation. A physician who reviews AI-generated notes is performing a different cognitive task entirely—one that research suggests humans do poorly. We are pattern-matchers, not error-detectors, and AI-generated text can easily pass the “looks right” test while containing clinically significant omissions or inaccuracies.

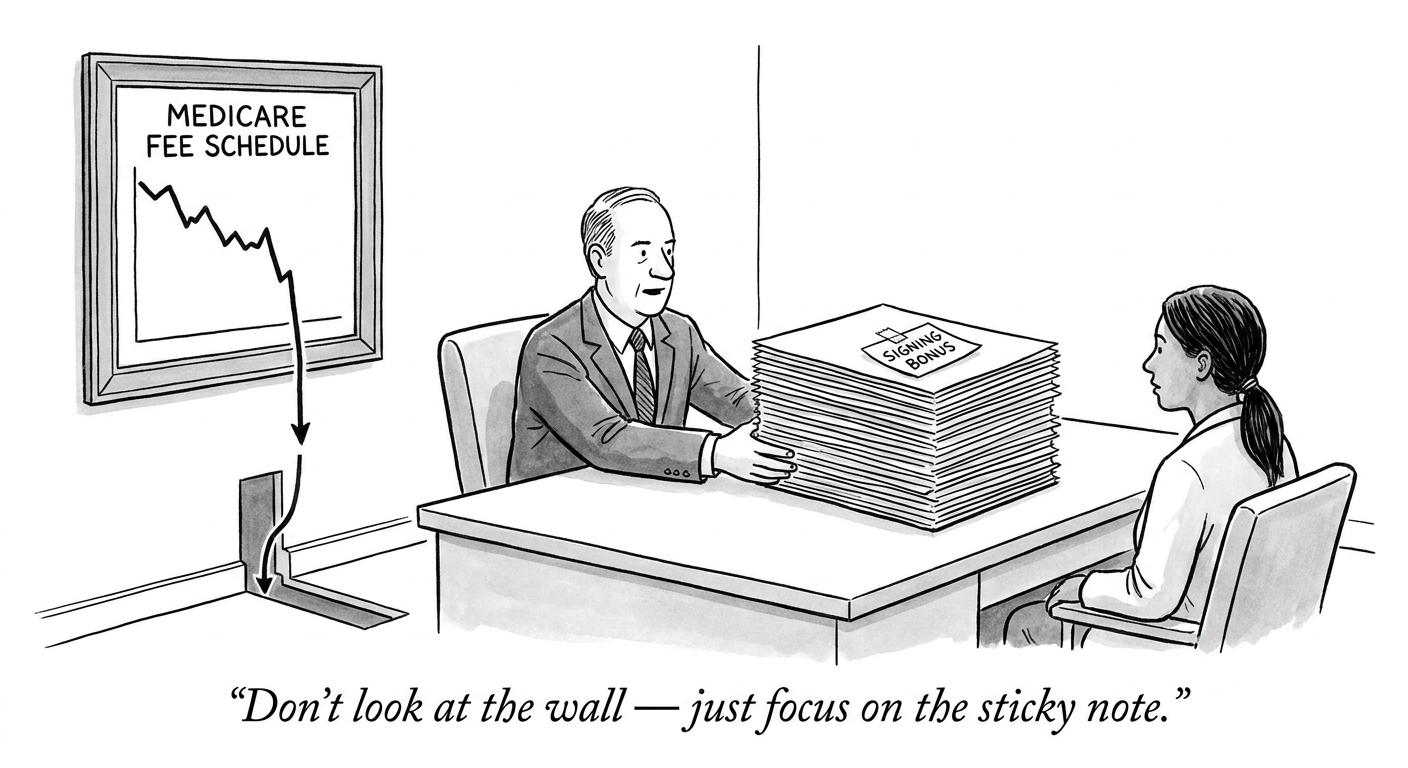

Implications for Compensation and Liability

The liability question remains unresolved and represents a significant gap in current employment contracts. When an AI scribe generates documentation that a physician approves, and that documentation later contributes to an adverse outcome, legal and professional responsibility falls, in practice, on the physician who approved it. Yet compensation models have not adjusted to reflect this new category of risk. Physicians are being asked to assume liability for AI-generated content while being compensated as if they were producing documentation themselves.

This asymmetry should concern both physicians evaluating employment opportunities and executives designing compensation structures. Productivity-based models that reward patient volume may inadvertently incentivize rushed AI documentation review, compounding automation bias risks. Health systems that fail to account for the cognitive demands of AI oversight in their productivity expectations may be setting up both their physicians and their organizations for quality failures.

The Clinical Education Crisis Within the Crisis

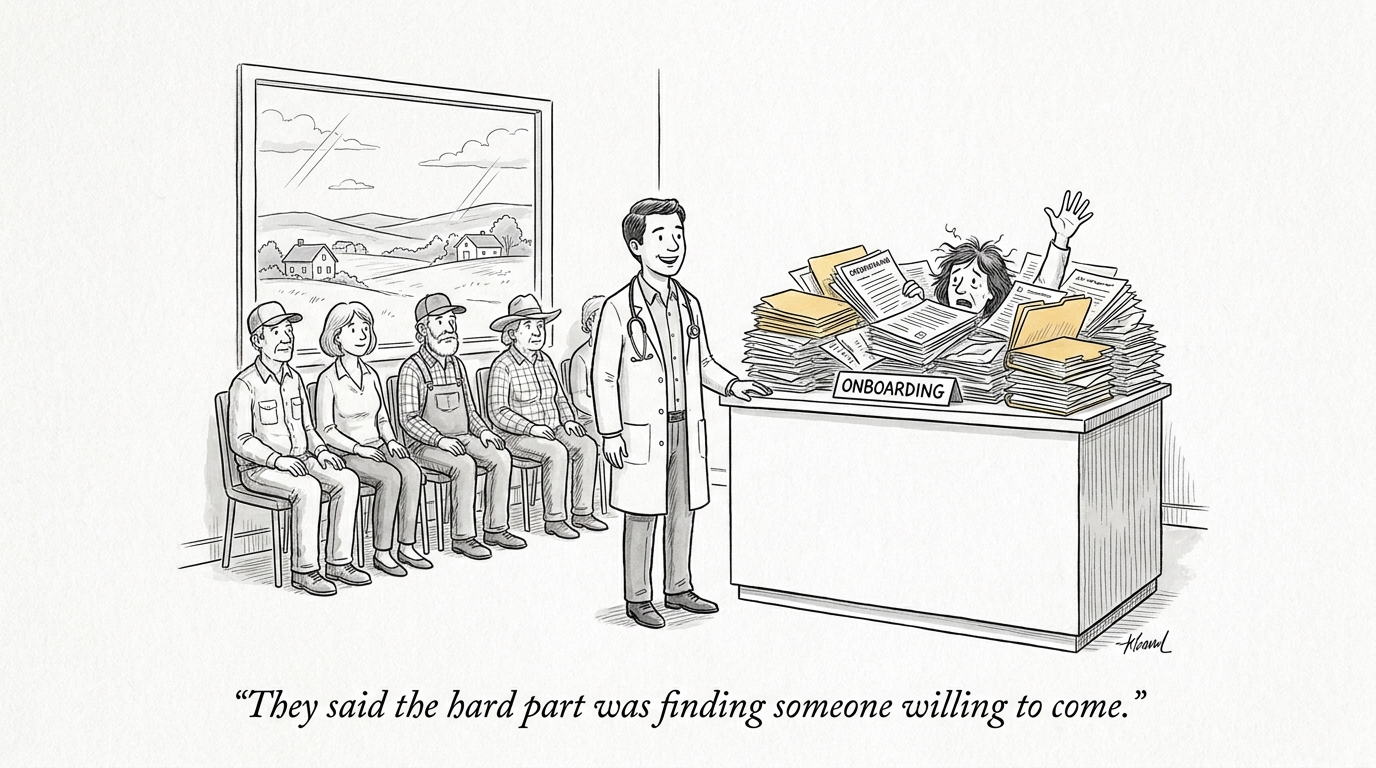

One underexplored dimension of AI scribe adoption is its impact on clinical education and the physician pipeline. Documentation has historically served a dual purpose: it creates a legal record of care, but it also forces physicians—particularly trainees—to synthesize clinical information, articulate diagnostic reasoning, and develop the narrative skills essential to effective practice.

The argument that AI scribes can “rescue clinical education from burnout” by freeing trainees from documentation burden contains a dangerous assumption: that documentation is purely administrative overhead with no educational value. This framing misunderstands how clinical reasoning develops. The struggle to articulate a patient’s presentation, to organize information into a coherent narrative, to commit to a diagnosis in writing—these are formative experiences that shape physician cognition.

Health systems deploying AI scribes at scale must consider the long-term workforce implications. Today’s residents trained with AI documentation assistance may become tomorrow’s attendings with underdeveloped clinical reasoning skills—a problem that will manifest in quality metrics, malpractice exposure, and ultimately, in the perceived value of physician labor.

Regulatory Vacuum and the “Wild West” Problem

The calls to regulate the “wild west” of medical AI scribes reflect a genuine governance gap. Unlike pharmaceuticals or medical devices, AI documentation tools have entered clinical practice without rigorous validation requirements, standardized accuracy metrics, or clear liability frameworks. This regulatory vacuum creates asymmetric risk: health systems capture productivity gains while physicians absorb professional and legal exposure.

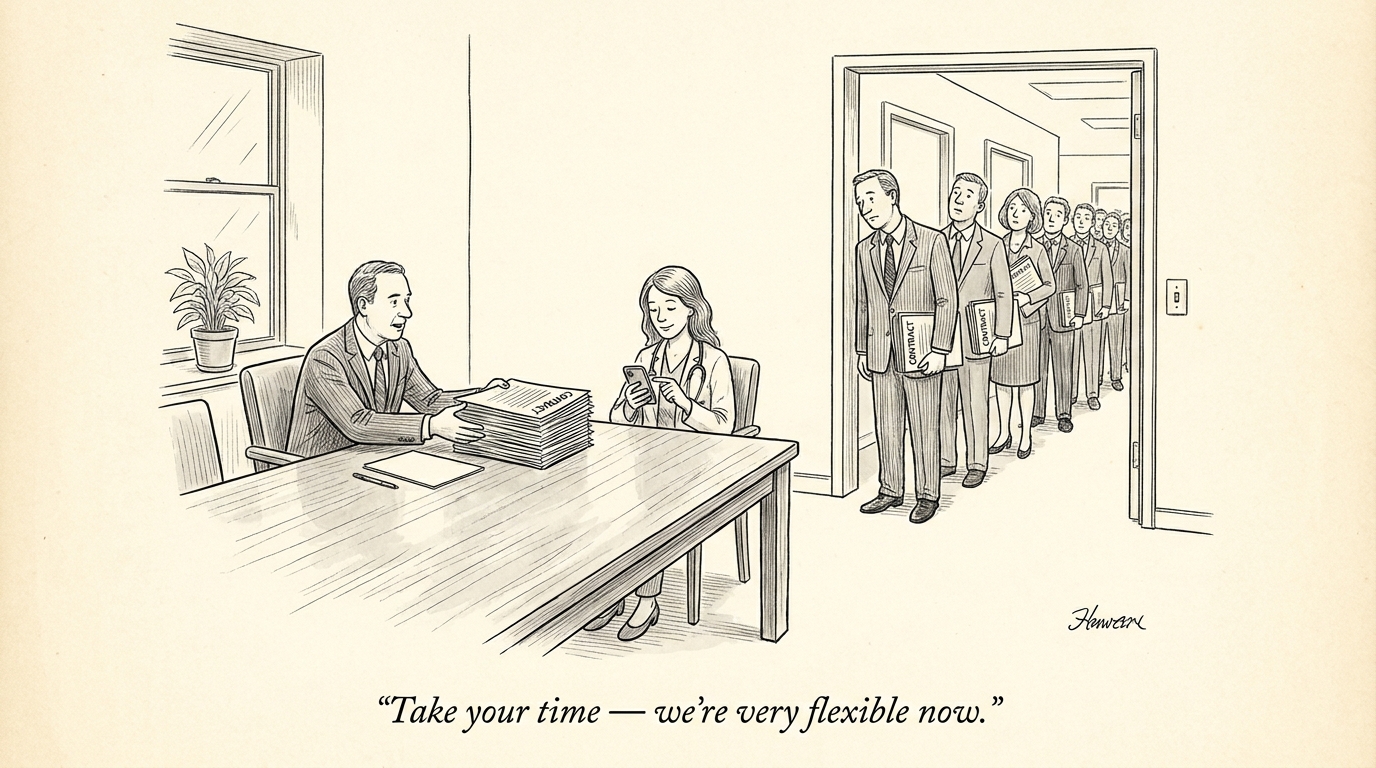

For physicians evaluating employment opportunities, the AI governance posture of potential employers should be a material consideration. Organizations with thoughtful AI implementation frameworks—including physician training requirements, documentation review standards, and clear liability policies—represent lower-risk environments than those pursuing aggressive AI deployment without corresponding safeguards.

Hospital executives and recruiters should recognize that AI governance is becoming a competitive differentiator in physician recruitment. Sophisticated candidates are increasingly asking about AI policies, documentation review expectations, and liability coverage. Organizations that can articulate a balanced approach—capturing AI efficiency while protecting physician autonomy and managing risk—will have advantages in attracting top talent.

Navigating the Integration Without Losing Professional Identity

The path forward requires rejecting both uncritical AI enthusiasm and reflexive resistance. Physicians must develop what might be called “AI fluency”—the ability to leverage AI tools effectively while maintaining the clinical judgment and skills that define physician value. This is not a passive process; it requires deliberate practice, ongoing skill maintenance, and clear boundaries around AI reliance.

For employed physicians, this means actively negotiating the terms of AI integration in their practice. What documentation review time is built into productivity expectations? What training is provided on AI limitations and error patterns? What liability protections exist for AI-assisted documentation? These questions should be as central to employment negotiations as compensation and call schedules.

The mainstream narrative around AI in healthcare focuses heavily on efficiency gains and burnout reduction—legitimate benefits that deserve recognition. But this coverage consistently misses the employment and labor-market dimensions: how AI changes the skills physicians need, how it shifts liability and risk, how it affects compensation models, and how it may ultimately reshape which physicians are recruited and how they are valued. These are not secondary considerations; they are the structural forces that will determine physician careers for the next generation.

Health systems that approach AI integration as purely a technology deployment problem will find themselves managing workforce crises they did not anticipate. Those that recognize AI adoption as fundamentally a physician workforce strategy—requiring attention to skills development, liability management, compensation alignment, and professional identity preservation—will build sustainable competitive advantages in both care quality and talent acquisition.

Sources

Ambient scribes lower physician workload but introduce new challenges – DocWire News

AI in Clinical Documentation: The Hidden Risk of Automation Bias – KevinMD

Ambient AI in Clinical Practice: Clarifying the Boundaries of Physician Judgment – Rama on Healthcare

81% of doctors report AI use — ‘deskilling’ top concern – eMarketer

AMA Survey Finds Rapid Growth in Physician AI Adoption – The ASCO Post

Using medical AI as ‘autopilot’ risks deskilling of clinicians – Ophthalmology Times

Doximity Releases 2026 Report on Physician AI Adoption Across 15 Medical Specialties – Rama on Healthcare

How AI Scribes Can Rescue Clinical Education From Burnout – KevinMD

The Gray Area of Doctors, AI and Patient Safety – Rama on Healthcare

Clinicians Fear AI Is the New EHR — Let’s Not Prove Them Right (Viewpoint) – Chief Healthcare Executive

4 must-haves for health execs deploying ambient AI scribes at scale – HealthExec

We Need to Regulate the Wild West of Medical AI Scribes – Rama on Healthcare

From Burden to Breakthrough: Why Ambient AI Is the New Frontline of Clinician Support – Rama on Healthcare