AI initiatives in healthcare promise faster diagnoses, improved workflows, and reduced clinician burden, yet a large share never progress beyond pilots. That matters because stalled projects consume scarce clinical time, hiring bandwidth, and capital without delivering sustained value.

Moving from prototype to production requires more than an accurate model; it demands repeatable operational practices, aligned economics, and organizational will. Below I synthesize the recurring barriers that cause AI efforts across health systems, pharma, and startups to stall, then outline practical signals and levers leaders and recruiting teams can use to improve adoption chances.

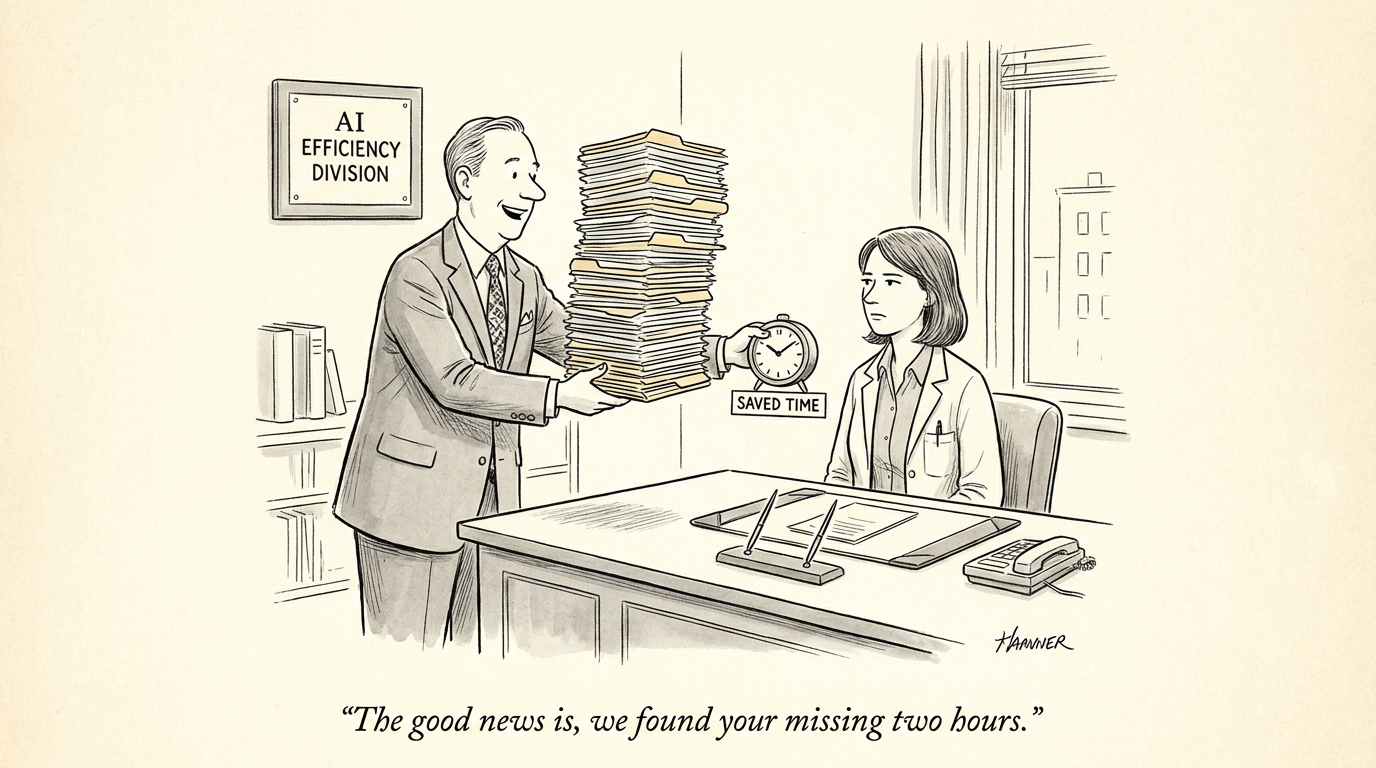

Implementation and the “pilot purgatory”

Pilots are useful for learning, but when treated as isolated experiments they rarely create durable change. Many pilots validate technical feasibility on curated datasets and under controlled conditions; the moment a solution encounters routine clinical variability (differing EMR configurations, fluctuating staff capacity, or simultaneous initiatives), friction multiplies. A common failure mode is the absence of an operational pathway: no product owner, limited integration budget, unclear uptime or maintenance expectations, and no handoff to an operations team. Without those elements, pilots become academic exercises rather than deployable products.

Call Out:

Signal to watch: a pilot with no assigned product owner, no uptime requirement, and no integration budget is unlikely to scale. Treat pilots as product development cycles, not one-off experiments.

Data quality, drift, and governance gaps

Data is both the enabler and the Achilles’ heel of clinical AI. Models that perform well on historical or lab-annotated data often degrade when exposed to different patient populations, new device vendors, or evolving coding practices. Robust operationalization requires pipelines that harmonize data from disparate sources, continuous monitoring to detect distributional shifts, and clear procedures for model retraining and validation. Equally critical is governance: documented decision logs, change control, and transparency for clinicians and compliance reviewers. When governance is weak, clinical teams rightly distrust black-box outputs and compliance teams apply conservative brakes that halt rollout.
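To make "continuous monitoring to detect distributional shifts" concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), applied to a single numeric input feature. The function name, the equal-width binning, and the floor applied to empty bins are illustrative assumptions, not a method taken from any of the sources; a production monitor would cover many features, categorical values, and model outputs.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample (e.g. validation data) and a
    current sample (e.g. last week's production inputs) of one feature.

    Hypothetical helper for illustration only.
    """
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread to bin")
    width = (hi - lo) / bins  # equal-width bins over the reference range

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range fall into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty (assumed value).
        eps = 1e-6
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Illustrative data: the current sample is shifted upward by 50 units.
reference_sample = [float(v) for v in range(100)]
shifted_sample = [v + 50.0 for v in reference_sample]
psi_same = population_stability_index(reference_sample, reference_sample)
psi_shifted = population_stability_index(reference_sample, shifted_sample)
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating. That cutoff is a convention, not a clinically validated threshold, so any alerting level should be agreed with the governance process described above.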

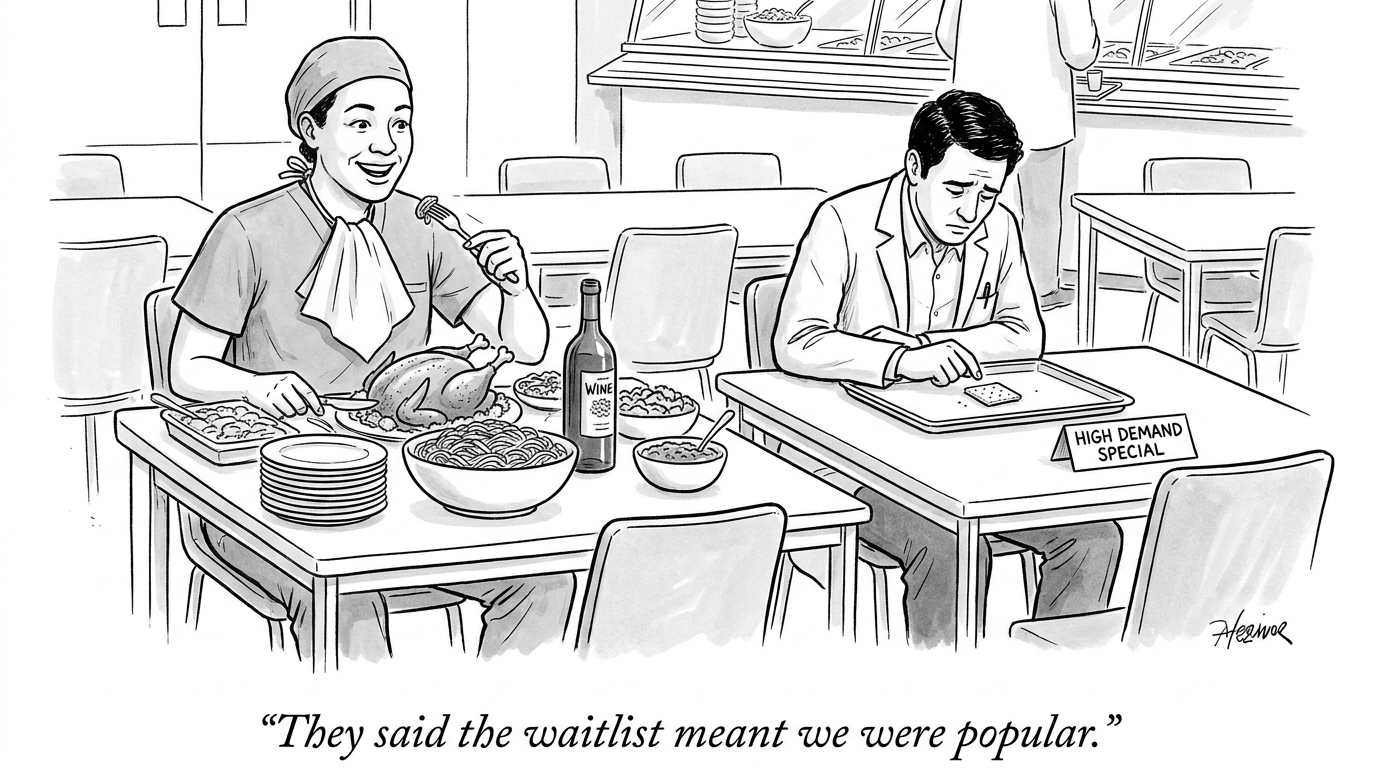

Economic misalignment and startup sustainability

Financial incentives heavily influence whether a product gets integrated. For vendors, high customer acquisition costs, prolonged validation periods, and uncertain reimbursement push early-stage companies into precarious economics. For buyers, the total cost of ownership — integration engineering, local validation, staff training, and ongoing support — must be justified against near-term operational benefits. When expected benefits are diffuse, delayed, or difficult to measure, procurement slows, contracts become conservative, or pilots end without expansion. This dynamic favors solutions with clear, measurable ROI or payment models that share risk between vendor and purchaser.

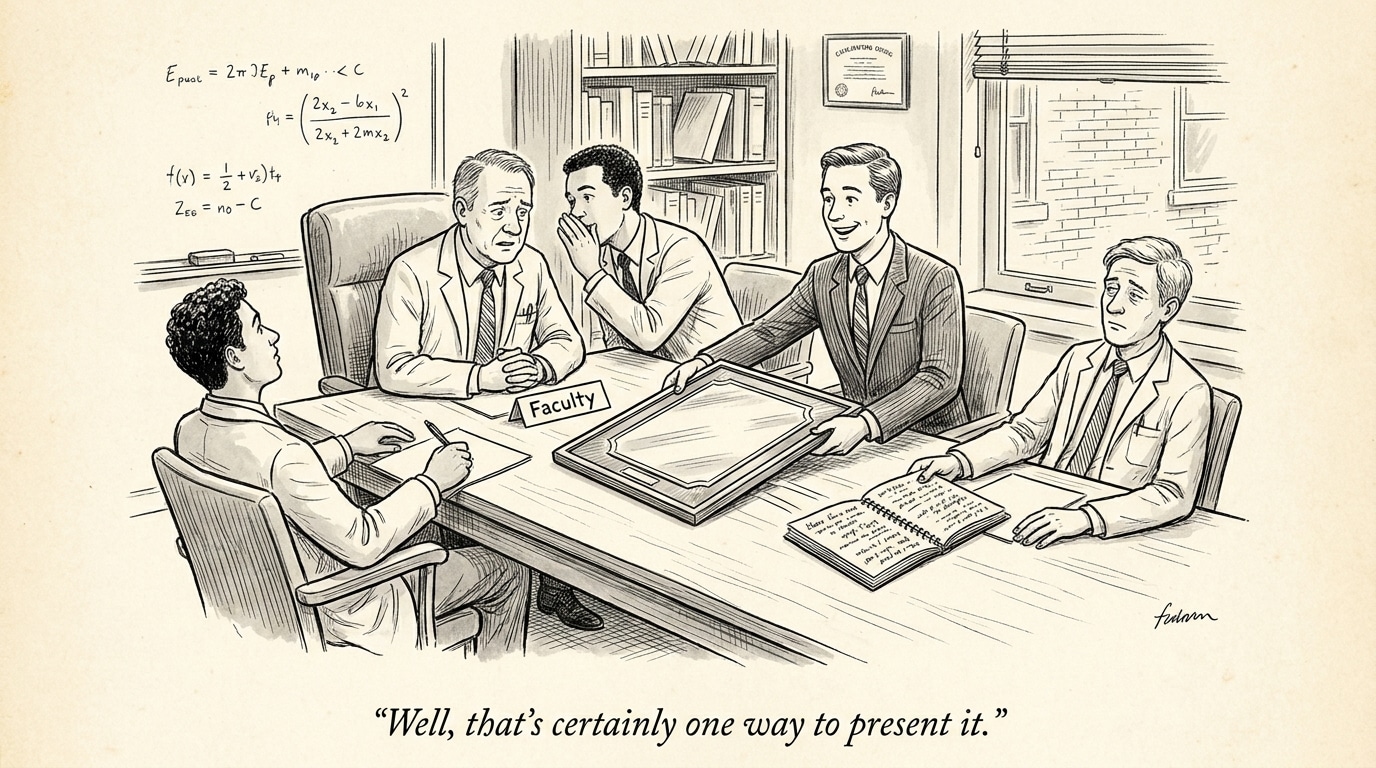

Organizational adoption and cultural friction

Even technically sound solutions fail if they do not align with clinical workflows or address a felt need. Clinicians will adopt tools that demonstrably save time, reduce cognitive burden, or mitigate safety risks. By contrast, initiatives that require substantial workflow change for marginal gains encounter resistance. Successful adoption strategies emphasize early clinician involvement in design, transparent performance reporting, and staged rollouts that produce visible, repeatable wins. Treating AI projects primarily as technical deployments rather than change-management efforts underestimates the human and institutional work required.

Operational levers that unlock scaling

There are pragmatic steps organizations can take to reduce the risk of stalling. Define deployment criteria up front: clinical or operational KPIs, integration milestones, and an explicit budget for engineering and support. Create cross-functional teams that include product managers who understand clinical context, data engineers who maintain pipelines, and compliance professionals who codify governance. Invest in modular integration patterns and retraining workflows so models can be updated safely. Consider commercial terms that align incentives — phased payments or outcome-based contracts — to lower the buyer’s threshold for commitment.
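One way to make "define deployment criteria up front" operational is to record them as data and check for gaps before a pilot starts. The sketch below is a hypothetical checklist: the `PilotPlan` fields and the `readiness_gaps` function are assumptions chosen to mirror the criteria named in this article, not an established standard.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PilotPlan:
    """Hypothetical record of the commitments behind a pilot."""
    name: str
    product_owner: Optional[str] = None
    uptime_target_pct: Optional[float] = None
    integration_budget_usd: float = 0.0
    kpis: List[str] = field(default_factory=list)
    ops_handoff_team: Optional[str] = None


def readiness_gaps(plan: PilotPlan) -> List[str]:
    """Return the missing elements that make a pilot unlikely to scale."""
    gaps = []
    if not plan.product_owner:
        gaps.append("no product owner assigned")
    if plan.uptime_target_pct is None:
        gaps.append("no uptime requirement defined")
    if plan.integration_budget_usd <= 0:
        gaps.append("no integration budget")
    if not plan.kpis:
        gaps.append("no clinical or operational KPIs defined")
    if not plan.ops_handoff_team:
        gaps.append("no operations team named for handoff")
    return gaps


# Illustrative values only.
bare_pilot = PilotPlan(name="sepsis-alert-pilot")
funded_pilot = PilotPlan(
    name="sepsis-alert-pilot",
    product_owner="clinical informatics lead",
    uptime_target_pct=99.5,
    integration_budget_usd=150_000,
    kpis=["median time-to-decision", "clinician hours reclaimed per week"],
    ops_handoff_team="health-IT operations",
)
print(readiness_gaps(bare_pilot))
print(readiness_gaps(funded_pilot))
```

An empty list does not guarantee success; it only confirms that the basic ownership, budget, and measurement commitments exist before the pilot begins.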

KPI discipline: what to measure

Prioritize metrics that reflect clinical and operational value, not just statistical performance. Examples: reduction in time-to-decision, measurable decreases in adverse events, percentage of tasks automated without an increase in false positives, or clinician time reclaimed per week. Measuring these during pilot stages helps build the business case for scale.
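Two of these metrics reduce to simple arithmetic once the pilot logs the right fields. The sketch below assumes a pilot records time-to-decision in minutes before and after deployment, plus minutes saved per case and weekly case volume; the function names and sample numbers are purely illustrative.

```python
from statistics import median
from typing import Sequence


def time_to_decision_reduction(
    baseline_minutes: Sequence[float], pilot_minutes: Sequence[float]
) -> float:
    """Fractional reduction in median time-to-decision (0.2 means 20%)."""
    base = median(baseline_minutes)
    if base <= 0:
        raise ValueError("baseline median must be positive")
    return (base - median(pilot_minutes)) / base


def clinician_hours_reclaimed_per_week(
    minutes_saved_per_case: float, cases_per_week: int
) -> float:
    """Convert per-case minutes saved into hours reclaimed per week."""
    return minutes_saved_per_case * cases_per_week / 60.0


# Illustrative numbers, not data from any real deployment.
reduction = time_to_decision_reduction([40, 50, 60], [30, 40, 50])
hours = clinician_hours_reclaimed_per_week(5, 120)
print(f"time-to-decision reduction: {reduction:.0%}")
print(f"clinician hours reclaimed per week: {hours:.1f}")
```

The median is used here because decision times are often skewed by a few long cases; a team might reasonably prefer a mean or a percentile depending on what the clinical stakeholders care about.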

Call Out:

Adoption hinges on perceived clinical benefit and reliability, not novelty. Prioritize demonstrations that reduce clinician burden or measurably lower safety risks; these create the political and operational momentum to scale.

Implications for healthcare leaders and recruiting

Hiring and budgeting decisions should reflect the work of taking AI into production. Recruit for hybrid competencies: product managers who can translate clinical needs into technical requirements, reliability engineers with health-IT experience, and data stewards who can maintain lineage and quality. Candidates with demonstrated experience shipping clinical software or operating within regulated environments are disproportionately valuable. For health system leaders, shifting budget allocation toward lifecycle operations — integration, monitoring, and support — reduces the chance that AI investments become sunk costs.

For investors and startups, the implication is clear: design business models and cash plans that account for long validation cycles and expensive integration. Shared-risk contracting and tight partnerships with early adopter systems can de-risk deployments and demonstrate replicable value. For policymakers, clearer reimbursement pathways and incentives for measurable outcomes would lower systemic friction and accelerate meaningful adoption.
