Why this matters now

Deployments of artificial intelligence across payers, clinical services, and public-facing media are shifting where decisions get made and who controls clinical information. That shift matters for physician employment and clinical practice because trust depends on predictable, auditable decision pathways and clear lines of accountability. Recent reporting and studies suggest these foundations are fraying: insurers are accelerating algorithmic adjudication, clinicians and patients express uneven trust, and manipulated audiovisual content undermines perceived authenticity.

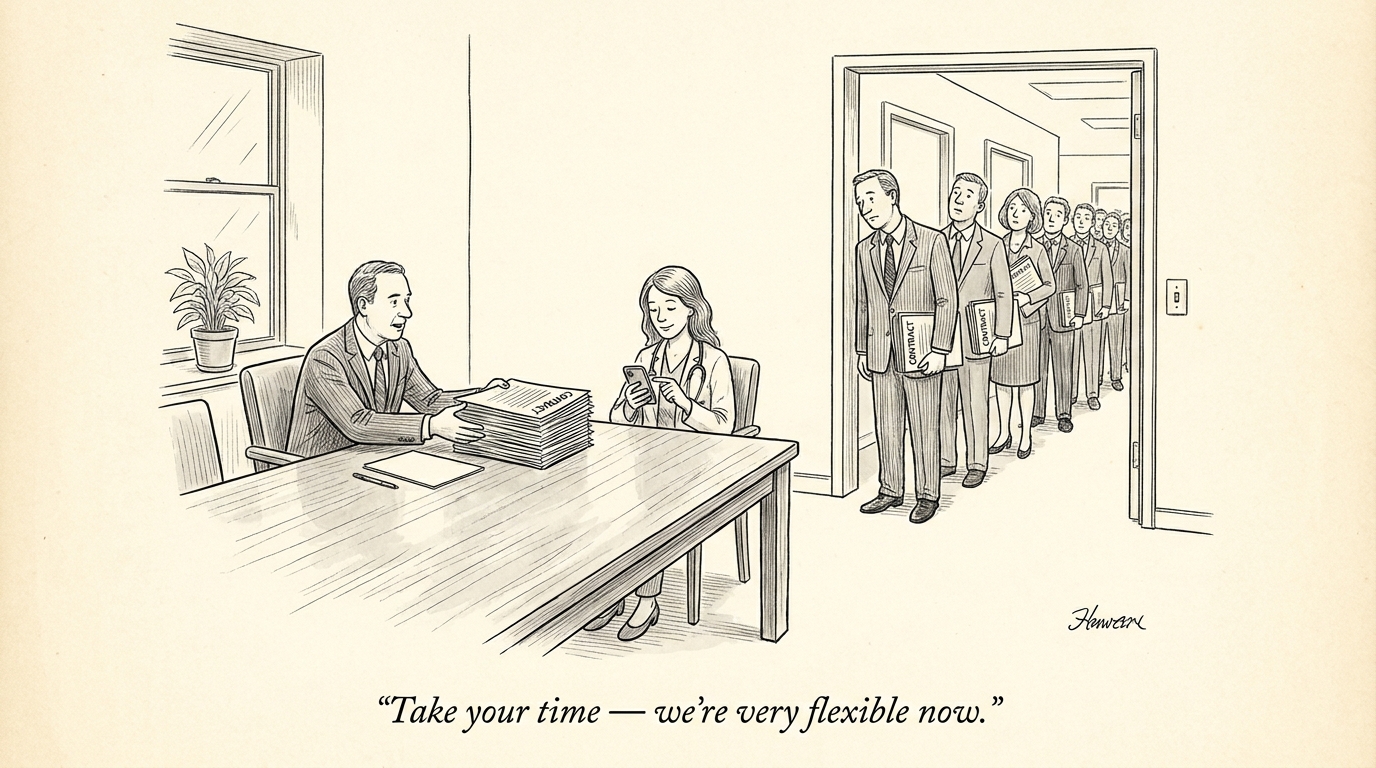

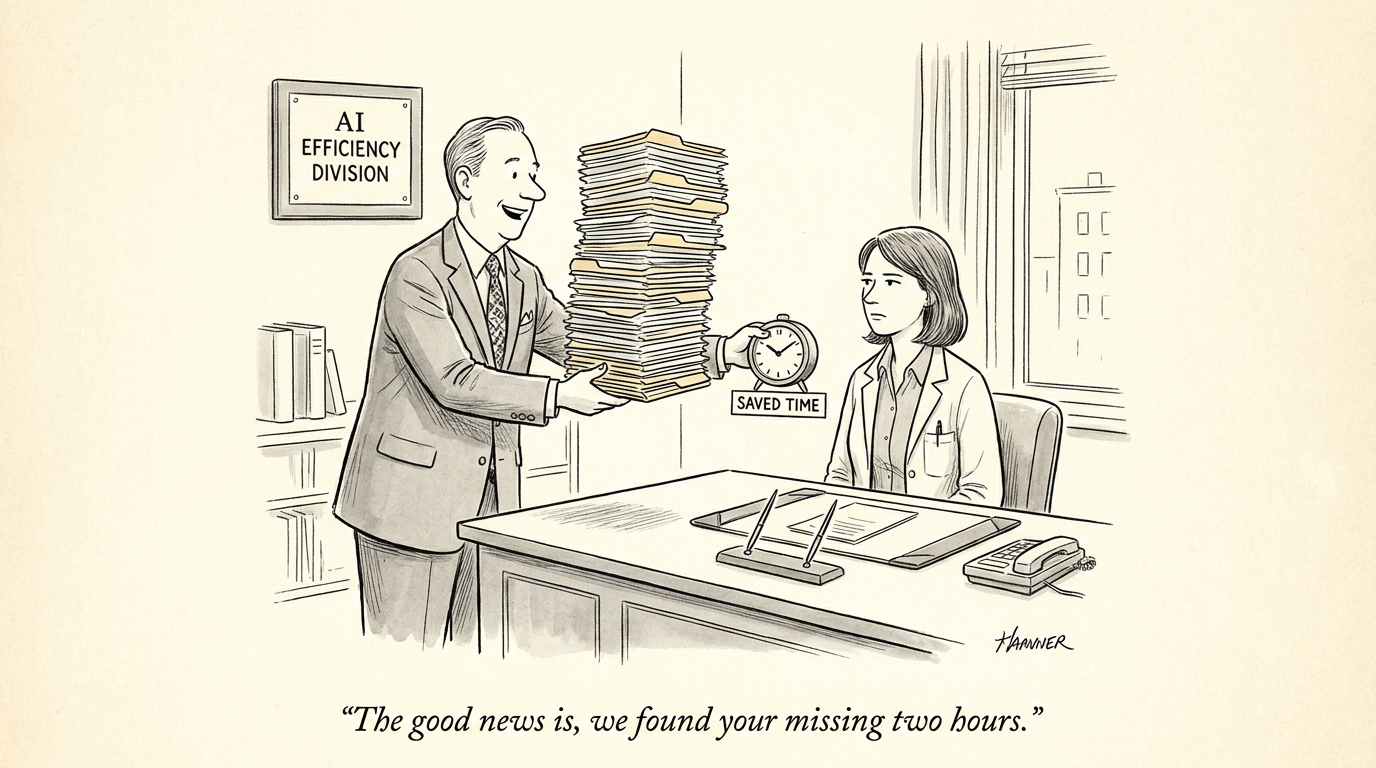

Payer-driven automation versus clinical autonomy

Insurers are increasingly using algorithmic systems to automate authorization, claims review, and medical necessity determinations. When payers replace or heavily mediate clinical judgement with proprietary models, two dynamics emerge: (1) clinicians feel sidelined in care decisions and (2) patients receive outcomes produced by opaque processes. Both dynamics damage the interpersonal and institutional trust necessary for effective care coordination.

From a governance perspective, the speed and scale of insurer deployments often outpace the establishment of independent validation, transparent performance reporting, and mechanisms for clinicians to contest or audit algorithmic actions. Without standardized audit trails and vendor accountability, operational gains come at the cost of perceived legitimacy among the workforce and the public.
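One way to picture a standardized audit trail is a hash-chained log, in which each recorded determination carries a hash that covers the previous entry, so later alteration of any record becomes detectable. The sketch below is illustrative only: the record fields (`claim_id`, `decision`, `model_version`) and the function names are assumptions, not a payer or vendor standard.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry (assumption)


def append_entry(log, record):
    """Append a record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    payload = json.dumps(record, sort_keys=True)
    entry_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    log.append({"record": record, "prev_hash": prev_hash, "hash": entry_hash})
    return log


def verify_log(log):
    """Recompute every hash in order; return False if any entry was altered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        payload = json.dumps(entry["record"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical example records
audit_log = []
append_entry(audit_log, {"claim_id": "C-1", "decision": "deny", "model_version": "v1"})
append_entry(audit_log, {"claim_id": "C-2", "decision": "approve", "model_version": "v1"})
```

A clinician or independent auditor holding a copy of the log could run `verify_log` to check that no determination was edited after the fact. A production system would also need access controls and external anchoring of the hashes, which this sketch omits.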

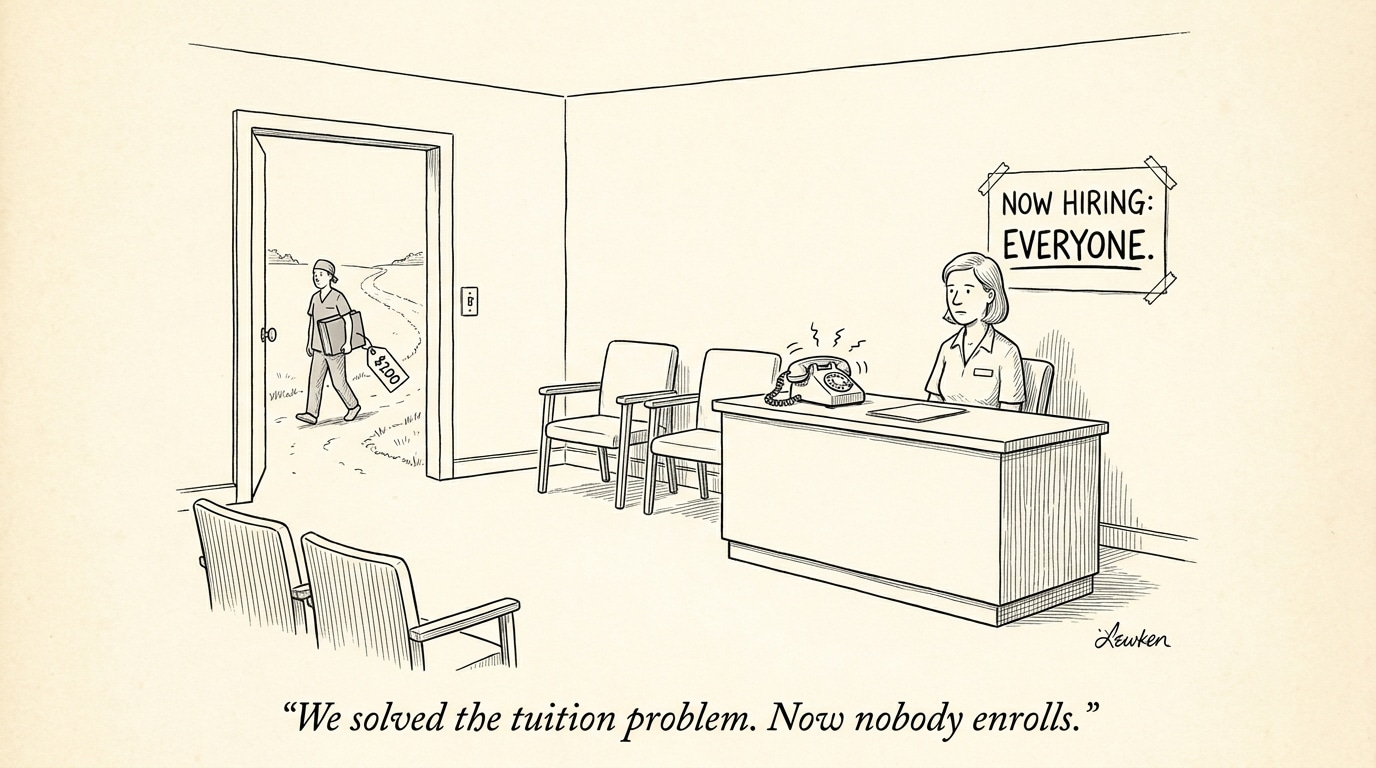

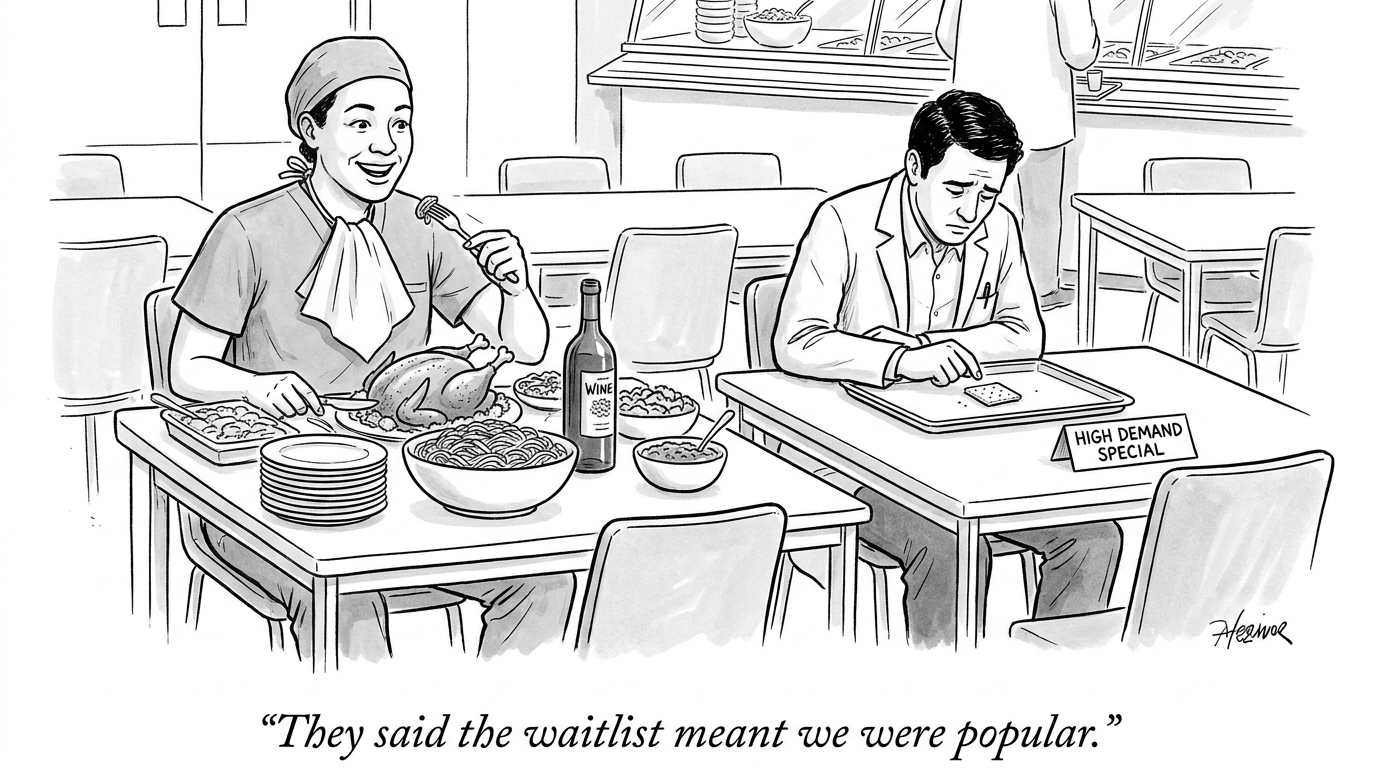

Trust is not uniform — demographic and contextual gaps matter

Acceptance of clinical AI is heterogeneous across patient groups, clinician specialties, and demographic lines. Empirical work in ophthalmology and related fields shows that trust correlates with factors such as prior exposure to technology, perceived transparency, and the presence of clinician endorsement. Where systems are presented as assisting clinicians rather than substituting for them, acceptance increases; where systems are black boxes, acceptance falls.

These patterns imply that deployments should be tailored: the same algorithmic product will be interpreted differently by an experienced specialist, a primary care clinician, an older patient, or a younger, digitally native user. Governance frameworks that treat trust as binary will fail — policy must account for granular trust gradients and aim to raise baseline confidence through explainability, user education, and context-specific performance evidence.

Call Out: Restoring trust requires more than accuracy metrics—patients and clinicians demand transparency about who controls decision rules, how models are validated across subgroups, and accessible routes to contest outcomes.

Deepfakes, authenticity, and the erosion of informational trust

Emerging synthetic media technologies make it feasible to produce convincing audiovisual representations of clinicians. Even when synthetic media is deployed for benign purposes (telehealth avatars, training videos), the presence of deepfakes in public discourse lowers confidence in what is genuine. The risk is not merely reputational: manipulated content can be weaponized to spread false medical advice, impersonate providers in teleconsultations, or discredit legitimate professionals.

Countering these risks requires layered controls: provenance standards for clinical communications, verifiable identity protocols for telehealth, and media literacy initiatives for patients and clinicians. Absent such measures, high-profile incidents of falsified clinician content produce spillover effects that damage trust in otherwise legitimate AI tools and human providers.
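As a rough illustration of what a provenance check on a clinical communication might involve, the sketch below attaches an authentication tag to a message and verifies it on receipt using Python's standard `hmac` module. It assumes a shared secret key between sender and verifier, which is a simplification: a real provenance standard would more likely rely on public-key signatures and managed credentials. The key, message, and function names are hypothetical.

```python
import hashlib
import hmac


def sign_message(secret_key: bytes, message: bytes) -> str:
    """Return a hex authentication tag for the message (HMAC-SHA256)."""
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


def verify_message(secret_key: bytes, message: bytes, tag: str) -> bool:
    """Check the tag using a constant-time comparison."""
    expected = sign_message(secret_key, message)
    return hmac.compare_digest(expected, tag)


# Hypothetical key and message, for illustration only
clinic_key = b"example-shared-secret"
original = b"Follow-up visit scheduled with your clinician."
tag = sign_message(clinic_key, original)

is_authentic = verify_message(clinic_key, original, tag)
is_tampered_accepted = verify_message(clinic_key, b"Stop taking your medication.", tag)
```

Here the unaltered message verifies and the altered one does not, which is the property a provenance layer is meant to provide. It says nothing about whether the content is medically sound, only that it came from a holder of the key and was not modified.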

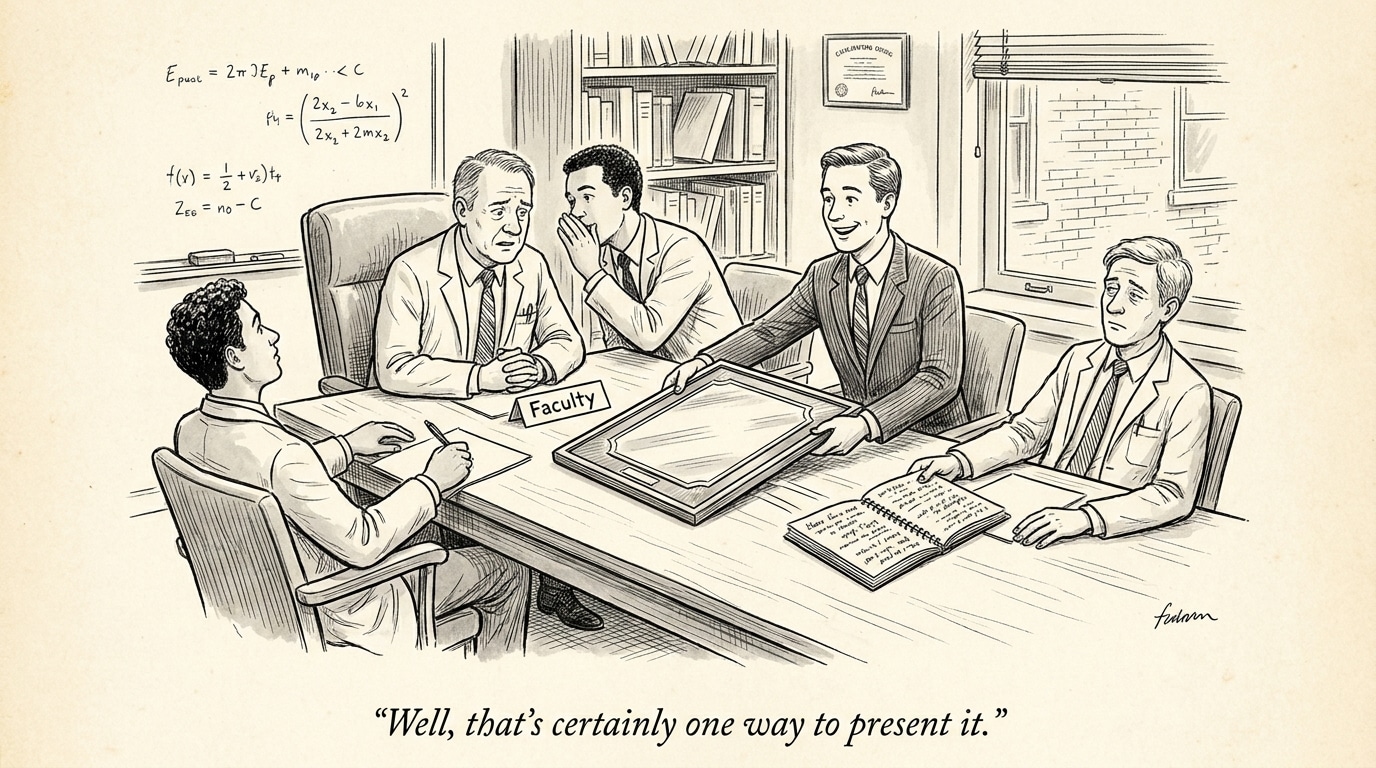

Governance gaps and practical fixes

Current governance shortfalls cluster into three categories: transparency (insufficient disclosure of model logic and performance), accountability (unclear remedial paths when algorithms err), and oversight (limited independent evaluation and ongoing monitoring). Addressing these gaps means operationalizing governance across procurement, deployment, and lifecycle management.

Immediate governance levers

- Require model documentation and performance reports (model cards) that include subgroup performance, failure modes, and training-data provenance.

- Mandate human-in-the-loop thresholds for decisions with material clinical consequences and standardized appeal processes for disputed determinations.

- Institute independent audits and post-deployment monitoring by accredited third parties—especially for payer systems that directly influence access to services.

- Adopt provenance and authentication standards for clinician-facing and public communications to limit misuse of synthetic media.
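The first two levers above can be sketched in a few lines. The example below computes per-subgroup accuracy of the kind a model card might report, and routes determinations to clinician review under a simple rule. The rule itself (every denial and every low-confidence approval goes to a human) and the 0.9 threshold are assumptions chosen for illustration, not a regulatory or clinical standard.

```python
from collections import defaultdict


def subgroup_accuracy(records):
    """Compute accuracy per subgroup.

    records: iterable of (subgroup, predicted, actual) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for subgroup, predicted, actual in records:
        total[subgroup] += 1
        if predicted == actual:
            correct[subgroup] += 1
    return {group: correct[group] / total[group] for group in total}


def route_determination(model_decision, confidence, review_threshold=0.9):
    """Decide whether a model output may be applied without human review.

    Assumed policy: denials always go to a clinician, as do approvals
    whose confidence falls below the review threshold.
    """
    if model_decision == "deny" or confidence < review_threshold:
        return "clinician_review"
    return "auto_approve"


# Hypothetical validation records: (subgroup, predicted, actual)
validation = [
    ("age_65_plus", 1, 1),
    ("age_65_plus", 0, 1),
    ("age_under_65", 1, 1),
    ("age_under_65", 0, 0),
]
report = subgroup_accuracy(validation)
```

A gap between subgroups in `report` is the kind of finding a model card should surface rather than average away. Accuracy alone is a coarse measure, and a fuller report would likely add sensitivity, specificity, and sample sizes per subgroup.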

Call Out: Insurers and vendors must be required to publish verifiable audit summaries and patient-facing explanations; otherwise, short-term efficiency gains will translate into long-term trust deficits that raise clinical, legal, and operational costs.

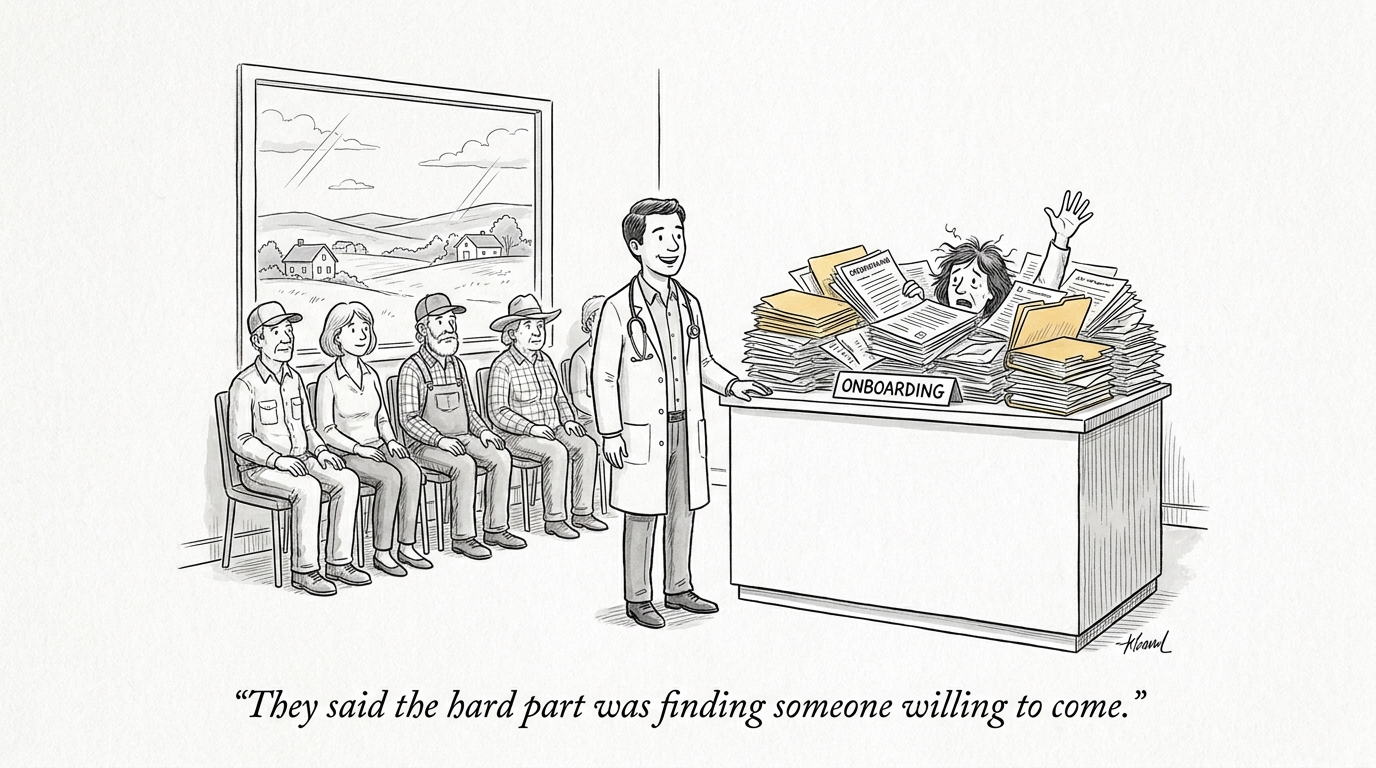

Implications for the healthcare industry and recruiting

For health systems and staffing organizations, the erosion of trust changes both demand and workforce needs. Clinical leaders will be asked to play larger roles in AI procurement, validation, and governance—creating demand for physician informaticists, clinical AI auditors, and ethics officers. Recruitment strategies should prioritize candidates with hybrid skill sets (clinical expertise plus experience in AI governance or quality assurance).

Organizations that proactively invest in transparent governance and clinician-centered implementation will gain a competitive advantage in talent attraction and retention. Conversely, entities that outsource decision-making to opaque systems risk clinician disengagement and higher turnover. For recruiters, this means articulating an employer’s governance commitments and upskilling pathways as part of the value proposition to prospective hires.

Conclusion

AI offers measurable operational and diagnostic benefits, but those benefits are conditional on trust. Rapid adoption by payers, uneven acceptance across demographic groups, and the rise of synthetic media are converging to challenge foundational assumptions about who is trusted to decide in healthcare. Practical governance—centered on transparency, accountability, and verifiable authenticity—must be implemented now to prevent efficiency gains from undermining long-term clinical and public confidence.

Sources

Health insurers expand AI use, undermining providers’ trust – STAT

Viewpoints: Deepfake doctors are damaging public trust – KFF Health News