Why this matters now

The rapid spread of large language models and clinical decision algorithms into everyday practice has turned a technical curiosity into an operational imperative. Health leaders now face practical choices about when to deploy AI and how to protect patients and staff. This discussion falls under our core pillar, AI in healthcare, and it calls for clearer evaluation criteria, deployment guardrails, and workforce planning tied to clinical safety.

Recent analyses point to a growing tension between demonstrable gains, such as automation of routine tasks and improved throughput, and clear risks, especially when conversational agents provide open‑ended advice. The immediate question for health systems and recruiters is not whether AI will matter, but how to adopt tools that improve outcomes without creating new sources of error or mistrust.

Comparing risk profiles: narrow tools versus open‑ended chatbots

AI applications in medicine fall into two broad risk buckets. Narrow, task‑focused systems (e.g., medication reconciliation, imaging triage, or structured decision support) have constrained inputs and outputs and are therefore easier to validate and monitor. They typically support clinicians by reducing repetitive work and highlighting clinically relevant items for human review.

Conversational chatbots and generalist LLM interfaces present the opposite profile: broad capabilities, high variability, and a propensity to generate plausible but unverified statements. When these systems are used as direct patient interfaces for triage or treatment guidance without robust safety nets, they can produce incorrect, outdated, or misapplied advice that is presented with unwarranted confidence.

Why provenance and uncertainty matter

Two technical deficits drive many unsafe outcomes: lack of provenance and opaque uncertainty. Provenance — a clear trail showing which data and guidelines supported a recommendation — matters because it lets clinicians judge relevance and correctness. Uncertainty quantification is equally important; models that provide calibrated confidence scores and that flag cases outside their training distribution help users decide when escalation is necessary.
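As a rough illustration of what "calibrated" means here, the sketch below computes an expected calibration error: the weighted gap between the confidence a model states and the accuracy actually observed at that confidence level. The function name, the bin count, and the input format are assumptions made for this example; they are not taken from any specific product or study.

```python
from typing import List, Tuple


def expected_calibration_error(
    confidences: List[float], correct: List[bool], n_bins: int = 5
) -> float:
    """Weighted average gap between stated confidence and observed accuracy.

    Hypothetical helper for illustration; inputs are assumed to be
    confidences in [0, 1] paired with whether each prediction was right.
    """
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and the same length")

    # Group predictions into equal-width confidence bins.
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so a confidence of exactly 1.0 falls in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        # Each bin contributes in proportion to how many cases it holds.
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece
```

A value near zero suggests that stated confidence tracks observed accuracy on the evaluated cases; a large value suggests the scores should not be read as probabilities without recalibration.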

Systems that omit provenance or present deterministic answers from probabilistic models shift the burden of error onto clinicians and patients. Effective implementations expose limitations, require clinician confirmation for consequential actions, and make it simple to trace and rectify mistakes.
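One way to picture these safeguards is a small routing rule that refuses to present a recommendation as actionable unless it carries provenance, a sufficiently high confidence, and no out-of-distribution flag. The sketch below is a minimal example under stated assumptions: the `Recommendation` fields, the `Route` values, and the 0.8 threshold are all hypothetical and would need to be set by local clinical governance.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Route(Enum):
    SHOW_AS_ADVISORY = "show_as_advisory"
    REQUIRE_CLINICIAN_CONFIRMATION = "require_clinician_confirmation"
    ESCALATE_TO_CLINICIAN = "escalate_to_clinician"


@dataclass
class Recommendation:
    text: str
    confidence: float          # assumed to be a calibrated score in [0, 1]
    out_of_distribution: bool  # assumed to be set by an upstream detector
    consequential: bool        # e.g. would change an order or a triage level
    sources: List[str] = field(default_factory=list)  # provenance trail


def route(rec: Recommendation, min_confidence: float = 0.8) -> Route:
    """Decide how a model output reaches the user (illustrative policy only)."""
    # Missing provenance, an unfamiliar case, or low confidence:
    # hand the case to a clinician instead of showing a confident answer.
    if not rec.sources or rec.out_of_distribution or rec.confidence < min_confidence:
        return Route.ESCALATE_TO_CLINICIAN
    # Consequential actions always need explicit clinician sign-off.
    if rec.consequential:
        return Route.REQUIRE_CLINICIAN_CONFIRMATION
    return Route.SHOW_AS_ADVISORY
```

The ordering is a design choice: the checks for missing provenance and uncertainty run first, so a consequential recommendation with no traceable source is escalated rather than merely queued for confirmation.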

Call Out: Deploy only where failure modes are visible and manageable — for example, in documentation automation, prioritization workflows, or narrowly scoped decision support — and avoid using generalist chatbots for unsupervised patient triage until rigorous provenance and uncertainty mechanisms exist.

Validation and governance: operational requirements

Safe adoption demands a governance stack that integrates validation, monitoring, and lifecycle management. Pre‑deployment validation should test models on representative clinical cases, checking for performance across demographics and atypical presentations. Post‑deployment monitoring must capture errors, clinician overrides, and drift in inputs or outcomes so organizations can detect degradation early.
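The two checks described above can be sketched in a few lines: sensitivity broken down by patient subgroup for pre-deployment validation, and a comparison of clinician override rates between a baseline period and a recent window for post-deployment monitoring. The function names, the tuple layout, and the thresholds are assumptions for illustration; real programs would also need confidence intervals, adequate sample sizes, and locally agreed performance floors.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def sensitivity_by_group(
    cases: Iterable[Tuple[str, bool, bool]]
) -> Dict[str, float]:
    """Per-group sensitivity from (group, model_flagged, condition_present)."""
    hits: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, flagged, present in cases:
        if present:  # sensitivity only counts true positive cases
            positives[group] += 1
            if flagged:
                hits[group] += 1
    return {g: hits[g] / n for g, n in positives.items()}


def groups_below_floor(sens: Dict[str, float], floor: float) -> List[str]:
    """Subgroups whose sensitivity falls under an agreed minimum."""
    return sorted(g for g, s in sens.items() if s < floor)


def override_rate(overridden: List[bool]) -> float:
    """Share of model outputs that clinicians overrode."""
    return sum(overridden) / len(overridden) if overridden else 0.0


def override_alert(
    baseline: List[bool], recent: List[bool], max_increase: float = 0.10
) -> bool:
    """True when the recent override rate exceeds baseline by more than max_increase."""
    return override_rate(recent) - override_rate(baseline) > max_increase
```

A rising override rate does not say why clinicians disagree with the model, only that something has changed; it works as a trigger for case review rather than as a diagnosis of drift.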

Governance also requires clear assignment of accountability. Clinical teams need documented workflows that define when model outputs are advisory versus actionable, and vendor contracts should codify responsibilities for updates, known limitations, and incident response. Human‑in‑the‑loop designs — where clinicians retain final decision authority and explanations accompany machine recommendations — are a practical default for high‑stakes contexts.

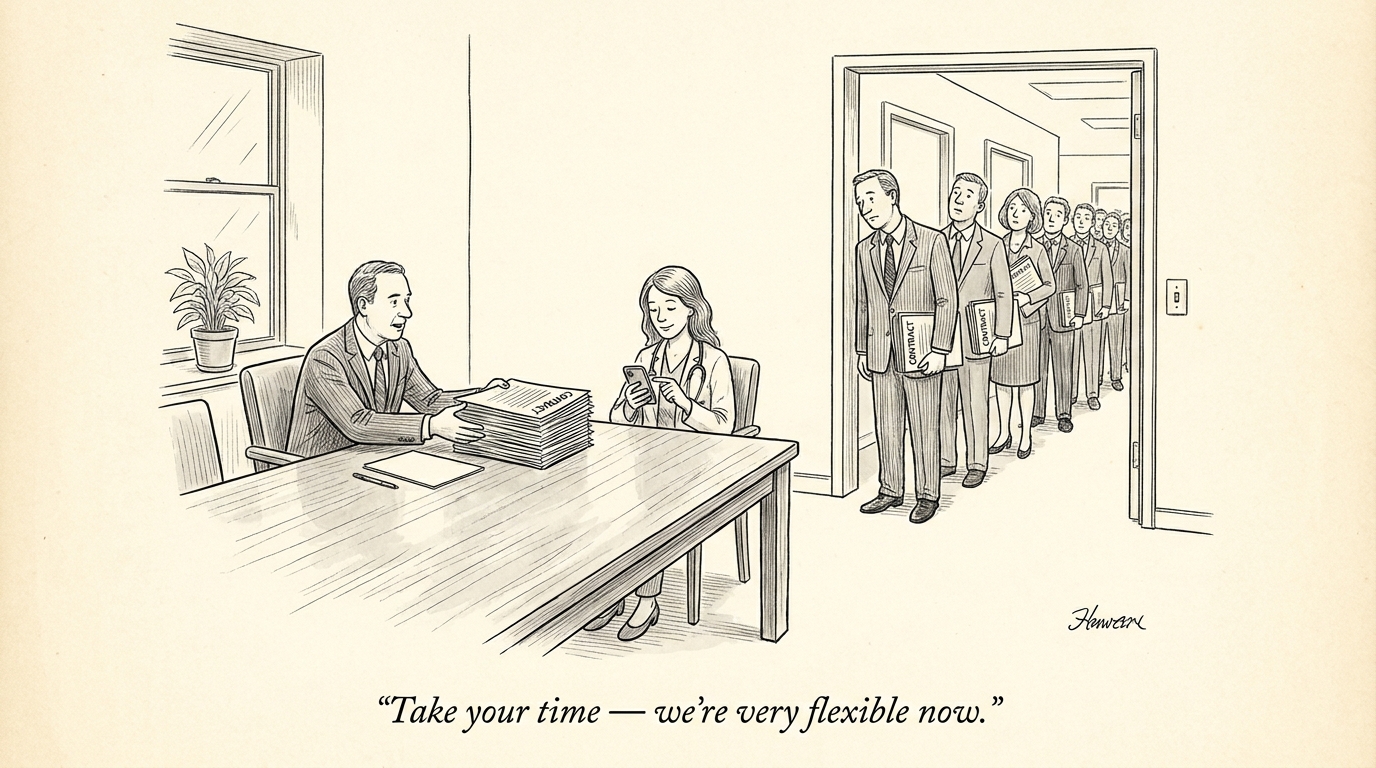

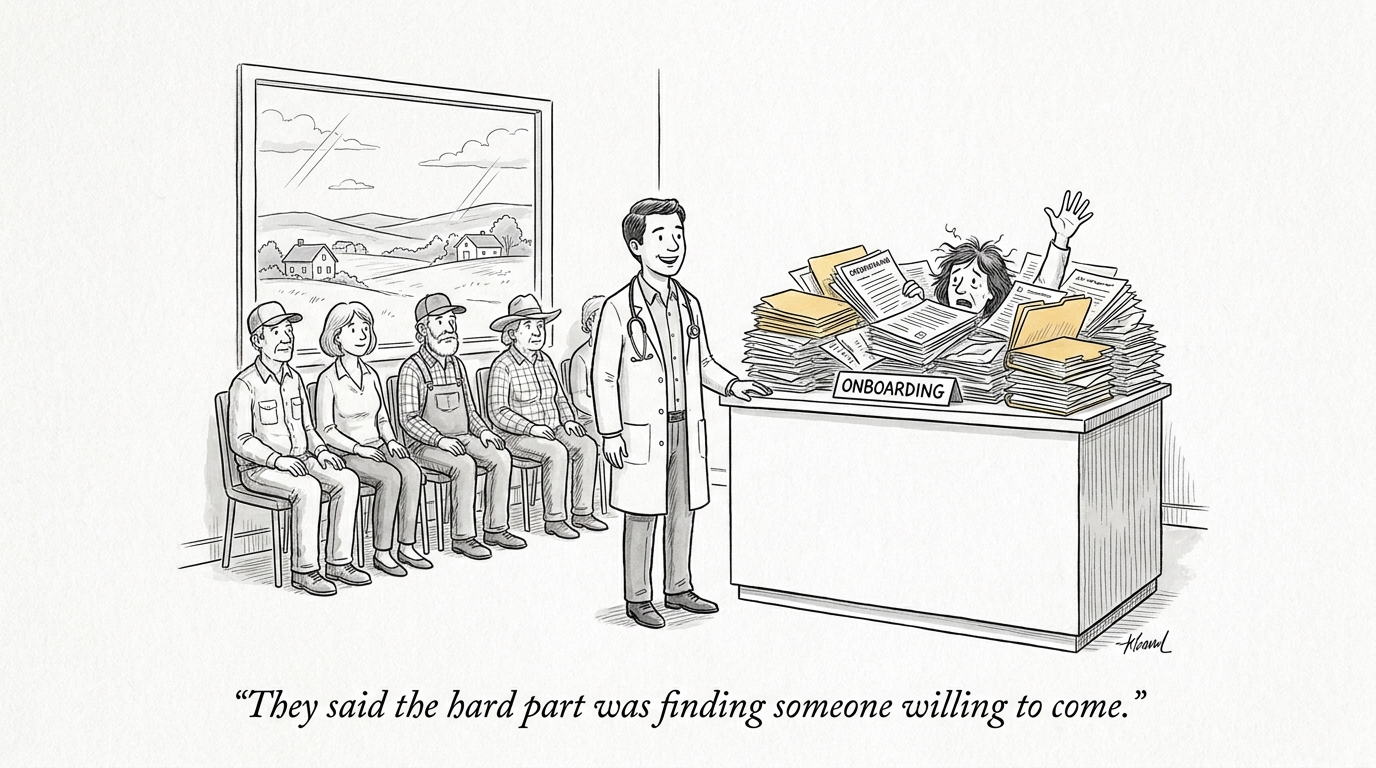

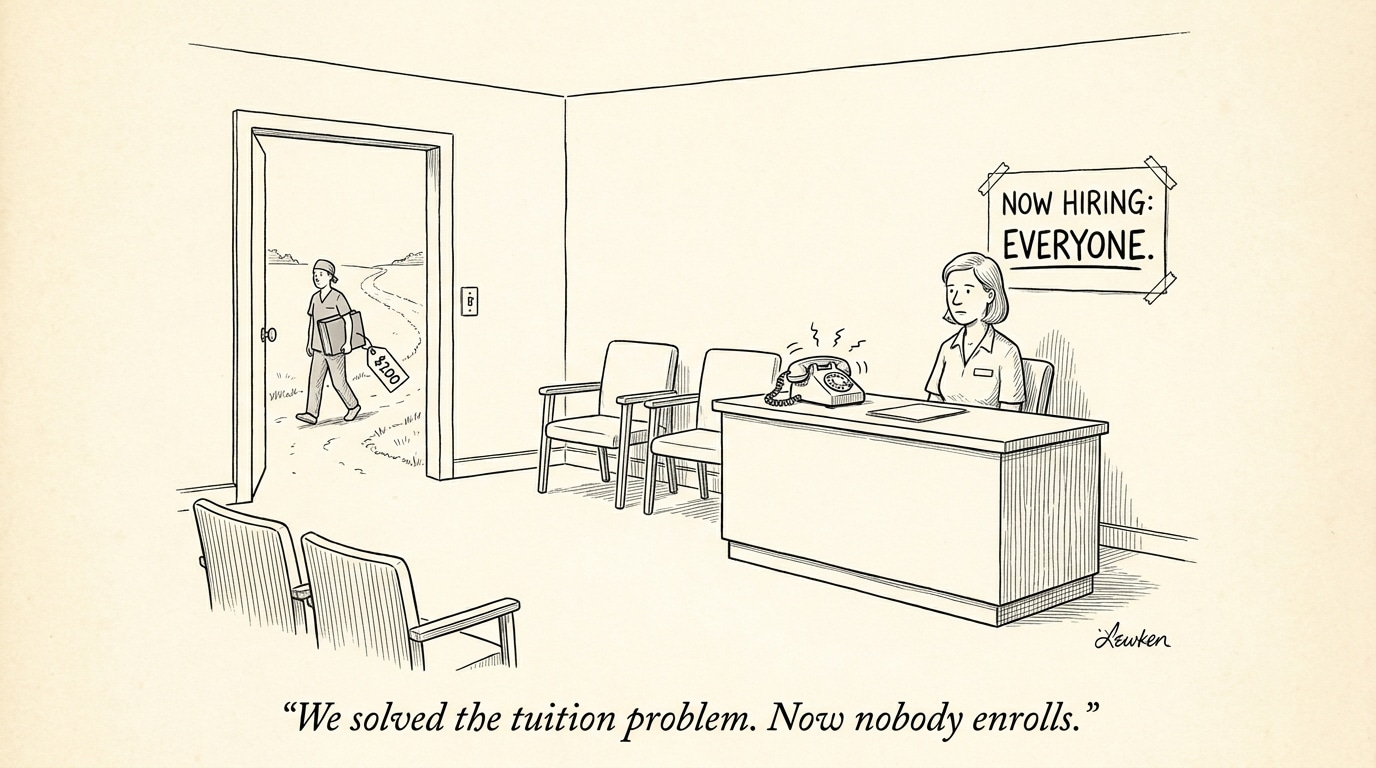

Workforce implications: hiring, training, and roles

As health systems incorporate AI, the mix of skills they require will shift. Routine documentation and low‑complexity triage work will decline in relative importance, while demand will increase for clinicians skilled in complex judgment, care coordination, and quality oversight of algorithms. Recruiting should reflect this transition.

Prioritize clinicians with digital‑health competence and quality‑improvement experience — candidates who can evaluate model outputs, participate in validation exercises, and translate algorithmic findings into safe care plans. Equally important are hires with operational experience who can design workflows that integrate AI outputs without disrupting clinical responsibility.

Call Out: Recruiting and training must be proactive — hire clinicians who can partner with technologists, run validation studies, and lead continuous monitoring so AI enhances care rather than creating hidden failure points.

Conclusion: a pragmatic adoption pathway

The debate about AI in clinical medicine should move from abstract promises and fears to operational criteria: where are benefits measurable, and where are risks controllable? The pragmatic path is to validate narrowly, monitor continuously, keep humans responsible for high‑stakes choices, and evolve hiring practices to support that model. Doing so preserves patient safety, maintains clinician trust, and ensures that investments in technology and people reinforce one another.

In short: adopt AI where outcomes can be measured and failure modes are transparent; constrain or defer deployment where ambiguity and patient risk remain high. That approach aligns procurement, clinical governance, and recruiting so that innovation advances care rather than undermining it.

Sources

What On Earth Is Wrong With Intelligent Medicine? – Forbes

What on Earth is Wrong with Intelligent Medicine? – Rama on Healthcare

Ask the Doctors: Thoughtful adoption of some AI systems may improve care – Times‑Standard