Why this theme matters now

Health systems are moving from proof-of-concept AI pilots to broad operational deployments: predictive triage, documentation assistants, revenue-cycle automation and even agentic tools that can take multi-step actions. That shift exposes a persistent gap: technology capability is outpacing workforce readiness. Without deliberate investment in clinician and staff training, organizations risk wasted spend, clinician frustration, patient safety issues and stalled return on investment (ROI). Organizations that treat AI as a plug-and-play product will discover that success depends on human orchestration as much as on algorithmic performance. That is a core lesson for scaling AI in healthcare beyond isolated pilots.

From pilots to practice: the adoption gap

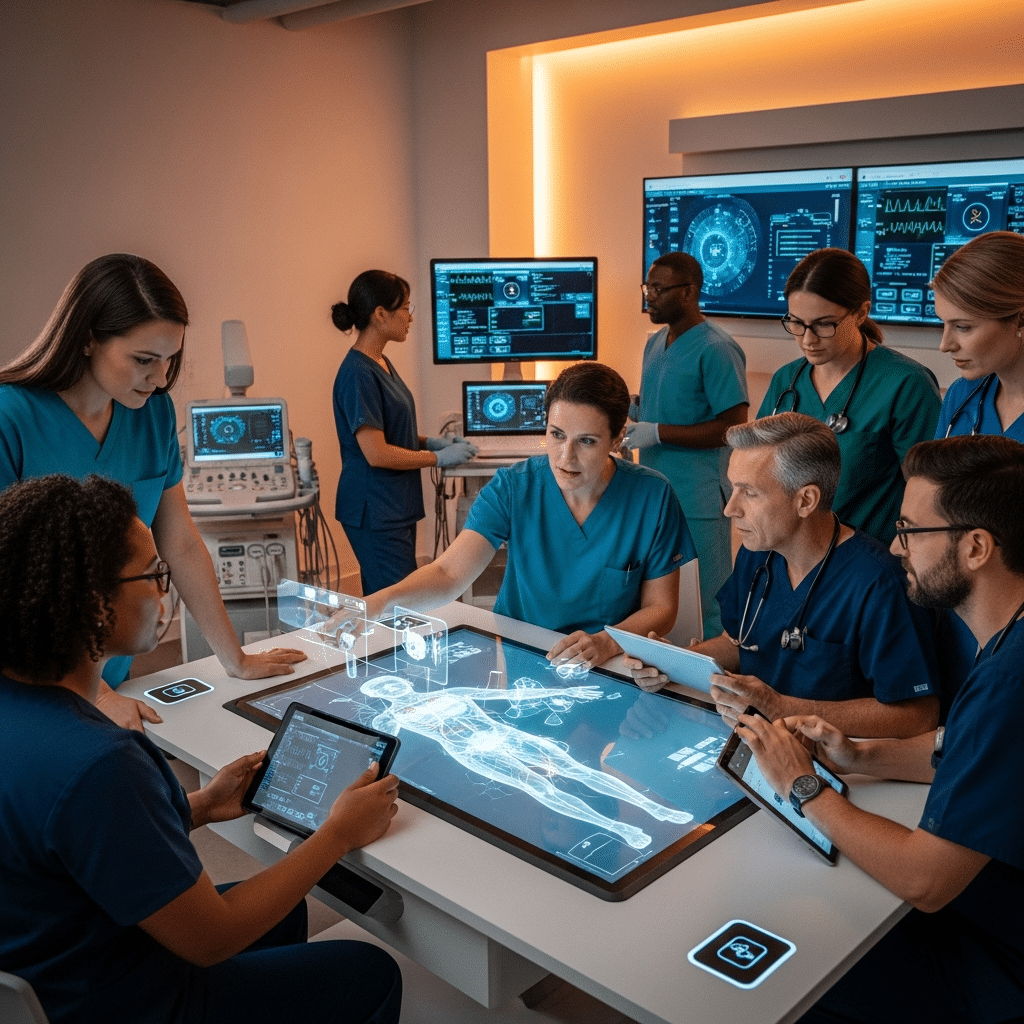

Early AI projects frequently succeed in controlled settings where engineers, data scientists and clinical champions are closely involved. Scaling requires thousands of clinicians, nurses, and operations staff to adopt new interfaces, learn when to trust probabilistic outputs, and change their workflows. The barrier is rarely the model. It’s the distributed, daily work of integrating AI recommendations into care plans, escalating when models are uncertain, and maintaining situational awareness when tools make autonomous decisions.

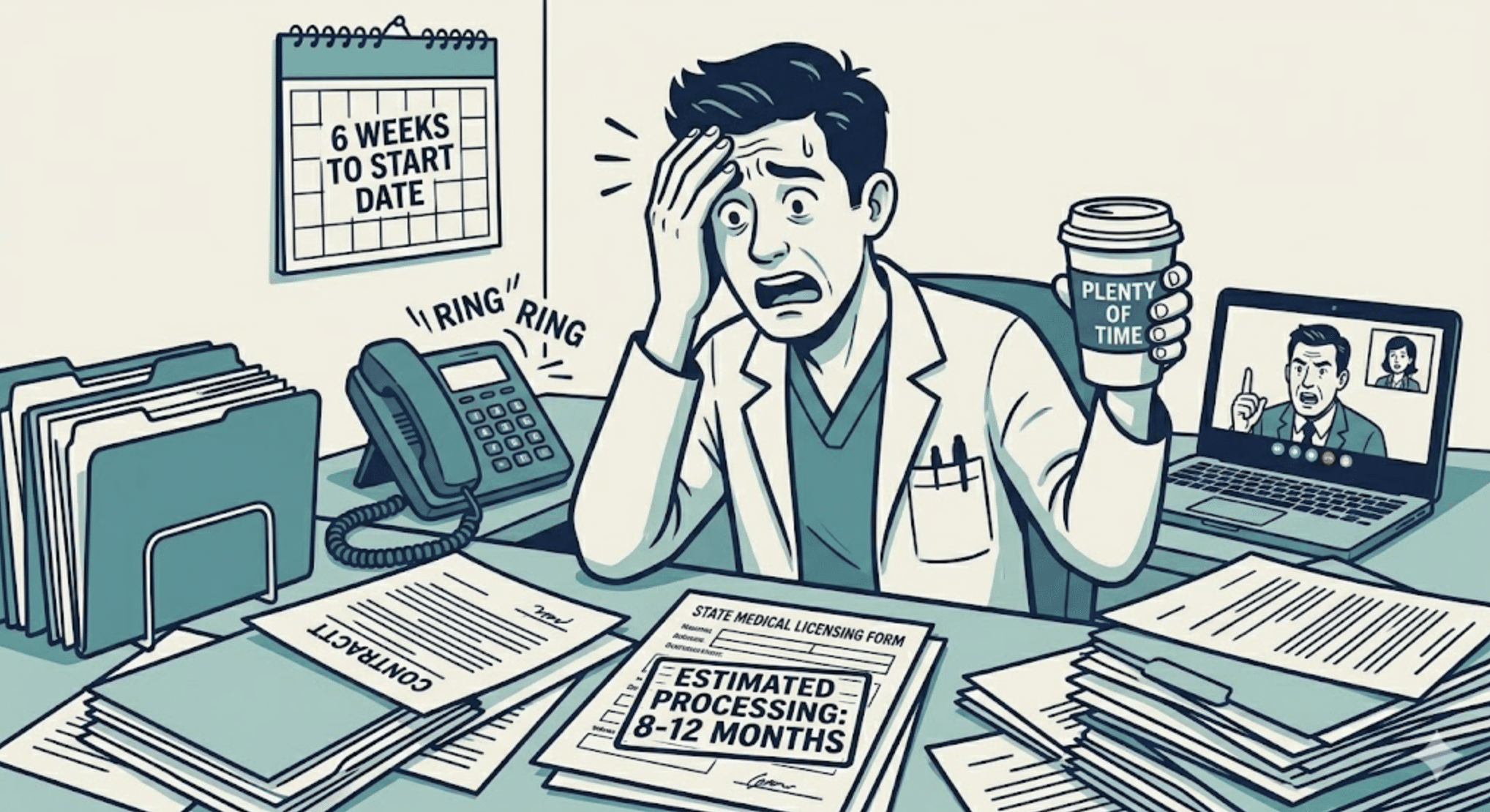

Practical implication: deployment timelines must budget as much time for training cycles as they do for model validation. Systems that bundle education, change management and local clinician governance into rollout plans tend to see smoother transitions than those that rely on vendor manuals and ad-hoc town halls.

What effective AI literacy programs teach

AI literacy for healthcare personnel needs to be pragmatic and role-specific. Core domains include:

- Conceptual understanding: what models can and cannot do, sources of bias, and how uncertainty is expressed.

- Workflow integration: where in the clinical process the tool fits, who acts on outputs, and how escalation pathways operate.

- Safety and governance: recognizing failure modes, reporting mechanisms, and how to document AI-influenced decisions.

- Ethics and communication: explaining AI-driven recommendations to patients and obtaining informed consent where appropriate.

Training that combines brief, targeted modules with hands-on simulations is more likely to change behavior than long, lecture-based sessions. Simulation-based scenarios expose edge cases and help clinicians develop practical heuristics they can use at the bedside.

Call Out: Targeted, role-based training beats generic AI overviews. Equip clinicians with decision-check heuristics and escalation pathways — not just theory — to reduce misuse and accelerate safe adoption.

Models for building AI competency

Health systems are experimenting with multiple approaches to grow AI capability:

- Distributed champions: training a cohort of clinician ‘AI ambassadors’ who provide peer-to-peer coaching and local troubleshooting.

- Centralized academies: cross-disciplinary learning hubs (IT, clinical, legal) that produce standardized certifications and run periodic refreshers.

- Vendor-enabled upskilling: curricula co-developed with technology partners that map product features to clinical cases, supplemented by simulation labs.

- Microlearning and just-in-time support: embedded help, tooltips, and short refresher modules delivered at the point of care.

Each model has trade-offs. Distributed champions increase local credibility but require coordination and sustained incentives. Centralized academies ensure consistency but may struggle to keep curricula current as tools change quickly. Hybrid strategies that combine local mentorship with centralized governance appear most resilient in large systems.

Measuring returns: what success looks like

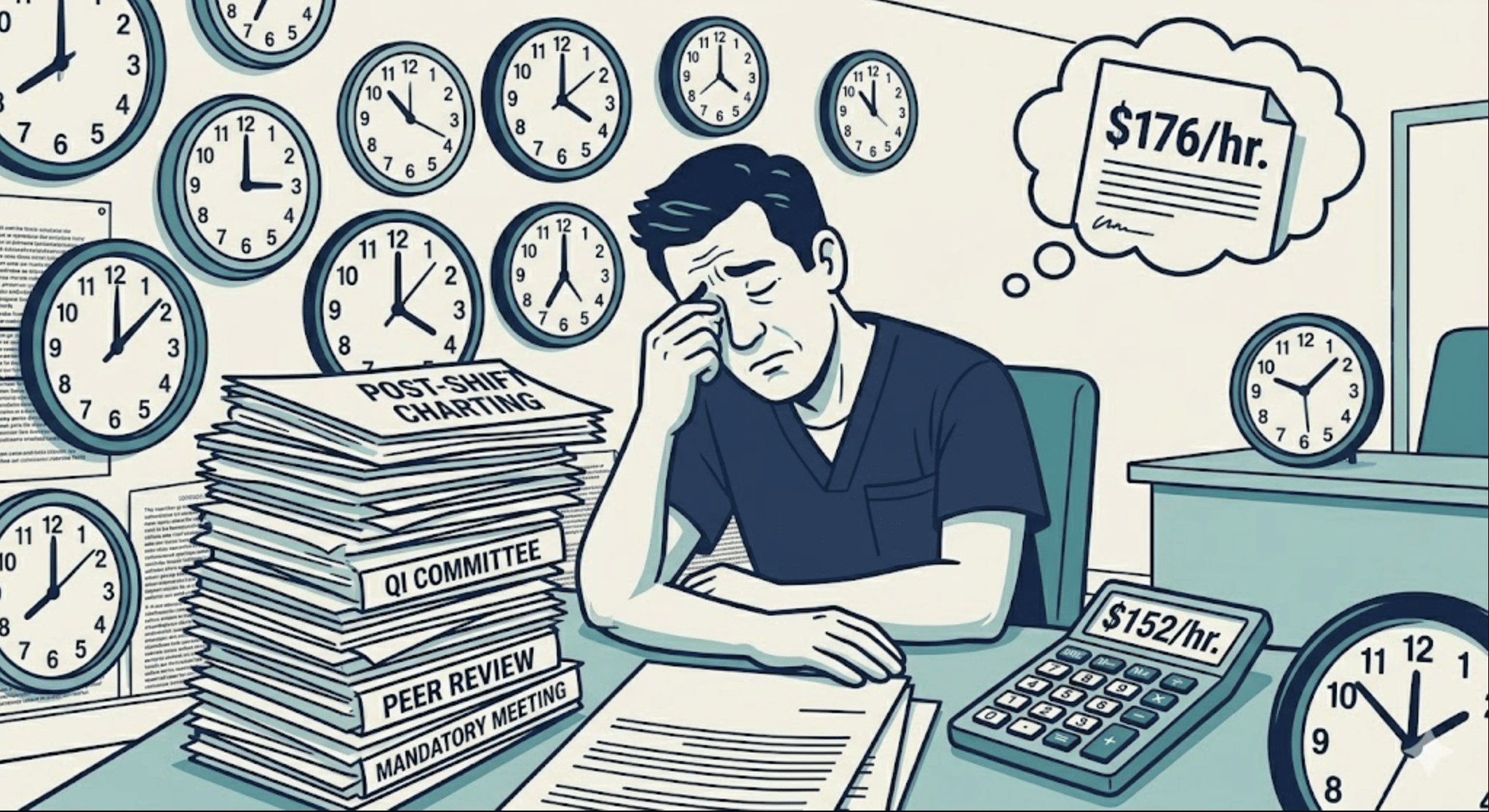

To know whether training is working, systems must measure both human and technical indicators. Useful metrics include:

- Adoption and sustained use rates by role and site.

- Changes in task time and clinician cognitive load when using AI-augmented workflows.

- Frequency and severity of AI-related safety incidents and near-misses.

- Quality measures tied to AI use (e.g., accuracy of triage decisions, documentation completeness).

- Clinician perceived trust and confidence, captured through regular surveys and focus groups.

Linking these indicators back to specific training interventions enables continuous improvement. When a spike in overrides or errors is detected for a particular role or site, teams can dispatch targeted retraining or an interface redesign rather than a broad, unfocused education campaign.
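
As a rough illustration of the override-spike check, here is a minimal Python sketch. It assumes a hypothetical event log in which each record notes the site, the user's role, a reporting period ("baseline" or "current") and whether the clinician overrode the AI recommendation. The `AIEvent`, `override_rates` and `flag_spikes` names, and the default thresholds (a 1.5x relative increase plus at least 5 percentage points), are illustrative assumptions, not part of any particular product or program.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AIEvent:
    # Hypothetical log record: one AI recommendation shown to one user.
    site: str
    role: str
    period: str       # assumed labels: "baseline" or "current"
    overridden: bool  # True if the clinician rejected the recommendation


def override_rates(events, period):
    """Return {(site, role): override rate} for events in one period."""
    shown = defaultdict(int)
    overridden = defaultdict(int)
    for e in events:
        if e.period != period:
            continue
        key = (e.site, e.role)
        shown[key] += 1
        overridden[key] += e.overridden  # bool counts as 0 or 1
    return {key: overridden[key] / shown[key] for key in shown}


def flag_spikes(events, ratio=1.5, min_increase=0.05):
    """Flag (site, role) groups whose current override rate exceeds the
    baseline by both a relative ratio and an absolute margin.
    Both thresholds are illustrative assumptions, not clinical standards."""
    base = override_rates(events, "baseline")
    curr = override_rates(events, "current")
    flagged = []
    for key, rate in curr.items():
        b = base.get(key)
        if b is None:
            continue  # no baseline for this group, so nothing to compare
        if rate >= b * ratio and rate - b >= min_increase:
            flagged.append((key, b, rate))
    return sorted(flagged)


if __name__ == "__main__":
    # Synthetic demo data: Site A nurses go from 1/10 to 4/10 overrides,
    # Site B physicians stay at 2/10 in both periods.
    events = (
        [AIEvent("Site A", "nurse", "baseline", i < 1) for i in range(10)]
        + [AIEvent("Site A", "nurse", "current", i < 4) for i in range(10)]
        + [AIEvent("Site B", "physician", "baseline", i < 2) for i in range(10)]
        + [AIEvent("Site B", "physician", "current", i < 2) for i in range(10)]
    )
    print(flag_spikes(events))  # [(('Site A', 'nurse'), 0.1, 0.4)]
```

Requiring both a relative and an absolute increase keeps groups with very low baseline rates from being flagged for trivial changes. A real deployment would likely also require a minimum number of events per group and tune thresholds against local data under clinical governance review.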

Call Out: Measurement closes the loop. Pair adoption metrics with safety and satisfaction data to determine whether training reduces risk, not just raises awareness.

Implications for health systems and recruiting

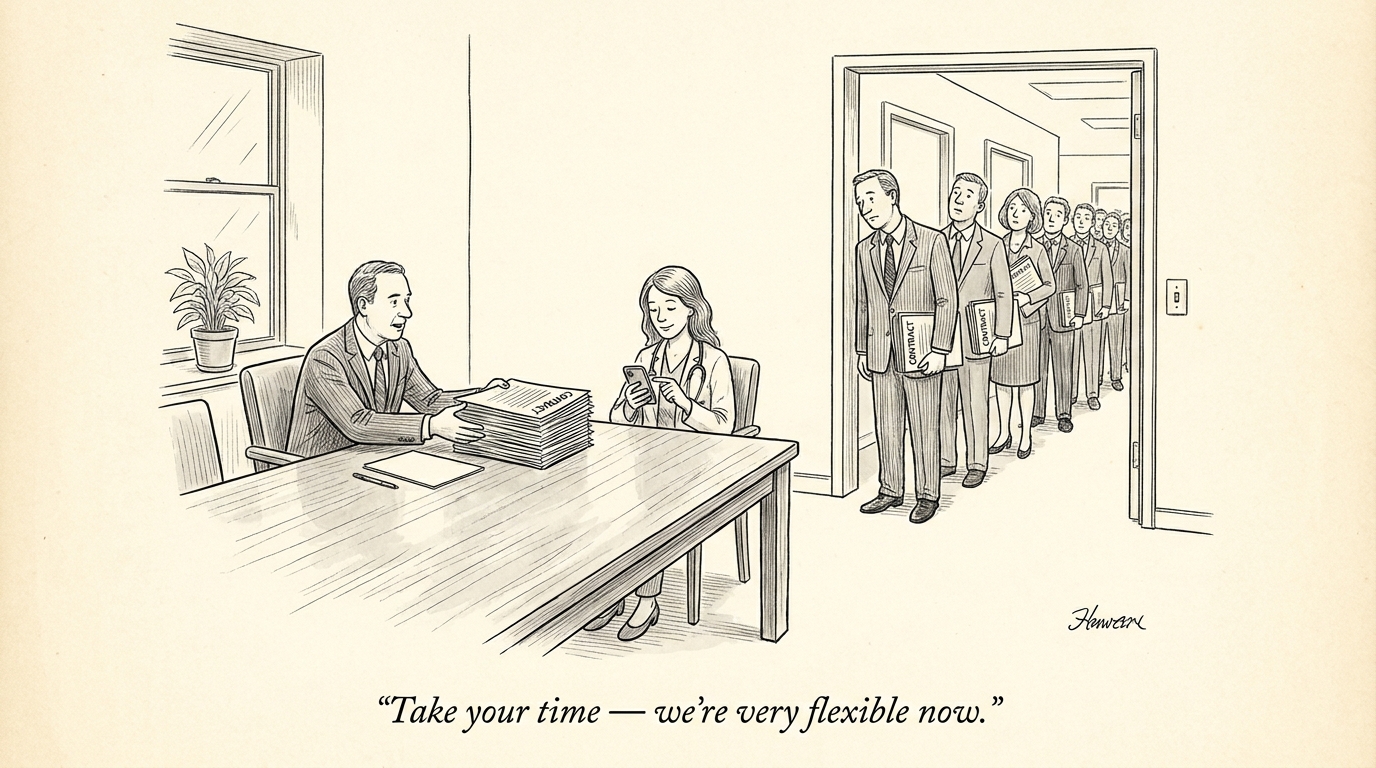

Scaling AI changes workforce needs. Recruiters and HR teams should expect demand for hybrid roles: clinicians who understand informatics, implementation specialists skilled in change management, and engineers fluent in clinical context. Job descriptions should emphasize collaboration skills, comfort with probabilistic outputs, and experience with clinical decision support systems.

For existing staff, organizations must offer career pathways tied to AI competency — certifications, protected time for training, and recognition for roles like AI steward or clinical model curator. Compensation frameworks and promotion criteria may need adjustment to value these new capabilities.

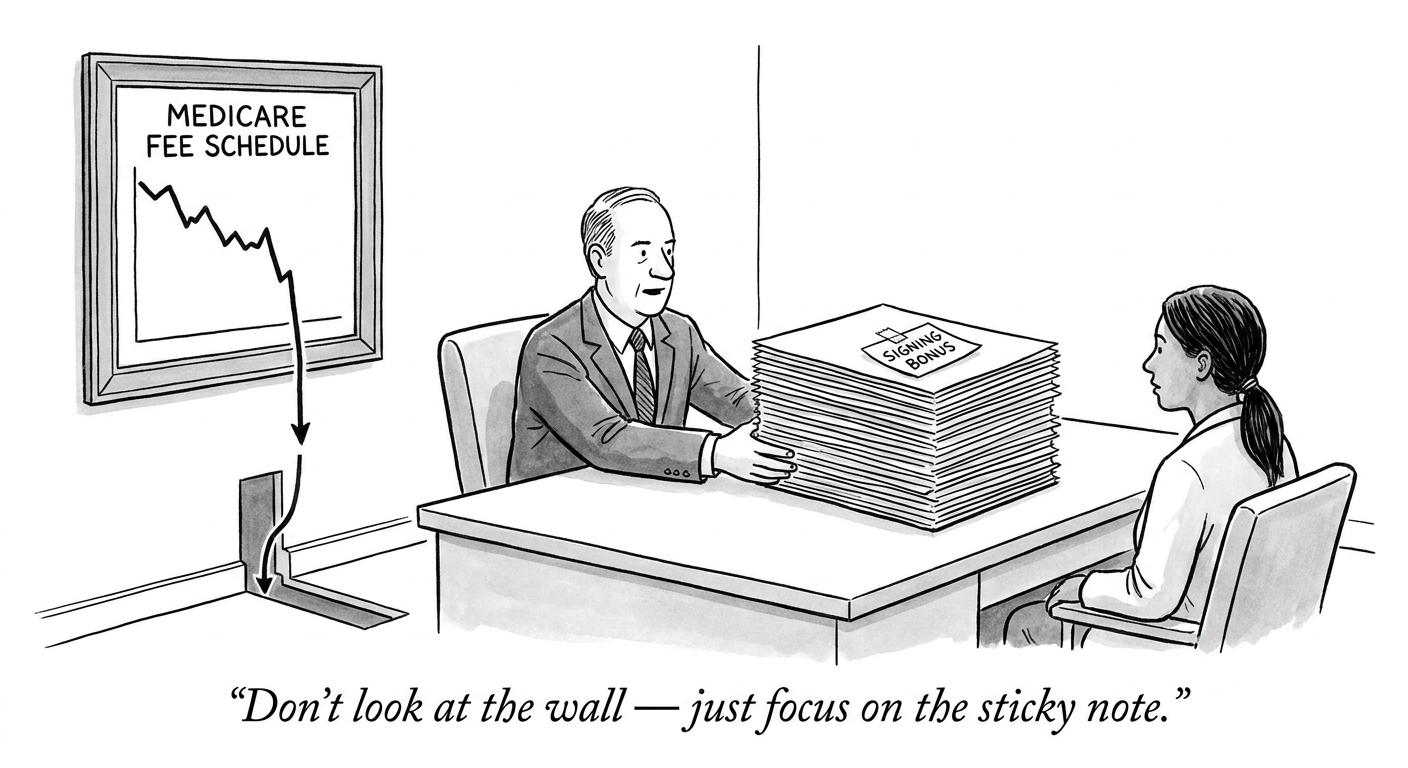

From a recruiting perspective, health systems that publicly invest in structured AI literacy programs are likely to have an advantage in attracting clinicians wary of technology-driven disruption. Candidates increasingly evaluate employers on how well they support digital transformation and protect clinician workflow integrity.

Conclusion: Treat training as infrastructure

AI adoption in healthcare is not a one-time implementation; it is an ongoing socio-technical transformation. Treating workforce education as infrastructure, with sustained funding, governance, measurement and career pathways, is what converts AI investments into reliable clinical value. Health systems that design training for daily clinical realities, measure outcomes and evolve curricula alongside technology will be best positioned to realize the promise of AI while reducing risk.

For recruiters and hiring teams, that means hiring for hybrid skills, creating structured upskilling pathways, and advertising concrete training commitments. Tools and models will continue to change; the durable advantage belongs to organizations that cultivate human expertise to orchestrate them.
