Why this theme matters now

Agentic AI, meaning systems that can make autonomous decisions or execute multi-step tasks with minimal human prompting, is moving from speculative research into hospital pilots and operational experiments, raising new demands for formal AI governance in healthcare as autonomy increases. Health system leaders are already exploring agentic models in clinical, administrative, and operational settings: 43% of healthcare leaders are piloting agentic AI, according to a study covered by Becker’s Hospital Review. That rapid movement creates a twofold urgency: organizations must establish governance and safety guardrails, and they must prepare clinicians, IT staff, and operational teams to work safely and effectively alongside systems that act with a degree of autonomy.

Adoption snapshot: pilots, priority areas, and risk tolerance

Across health systems, adoption is uneven but accelerating. Many organizations are running pilots in areas such as clinical documentation, care coordination, discharge planning, and administrative automation. Pilots let teams evaluate real-world performance, but they also expose differences in risk tolerance: some units view agentic automation as a way to reduce clinician administrative burden, while others treat it as an experimental tool requiring extensive human oversight. The current landscape is characterized by pockets of concentrated activity rather than broad, standardized deployment.

Readiness gaps: governance, validation, and accountability

Institutional readiness commonly lags behind technical enthusiasm. Three interdependent gaps stand out: governance frameworks to approve and monitor agentic AI use; rigorous processes for model validation in local workflows; and clear lines of accountability when an autonomous action influences patient care. Without standardized processes for approval, testing, and post-deployment monitoring, pilot programs risk producing inconsistent outcomes and undermining clinician trust.

Call Out: Governance matters more than hype. Establishing consistent approval pathways, safety checks, and audit trails before broad rollout lowers operational risk and preserves clinician confidence in agentic systems.

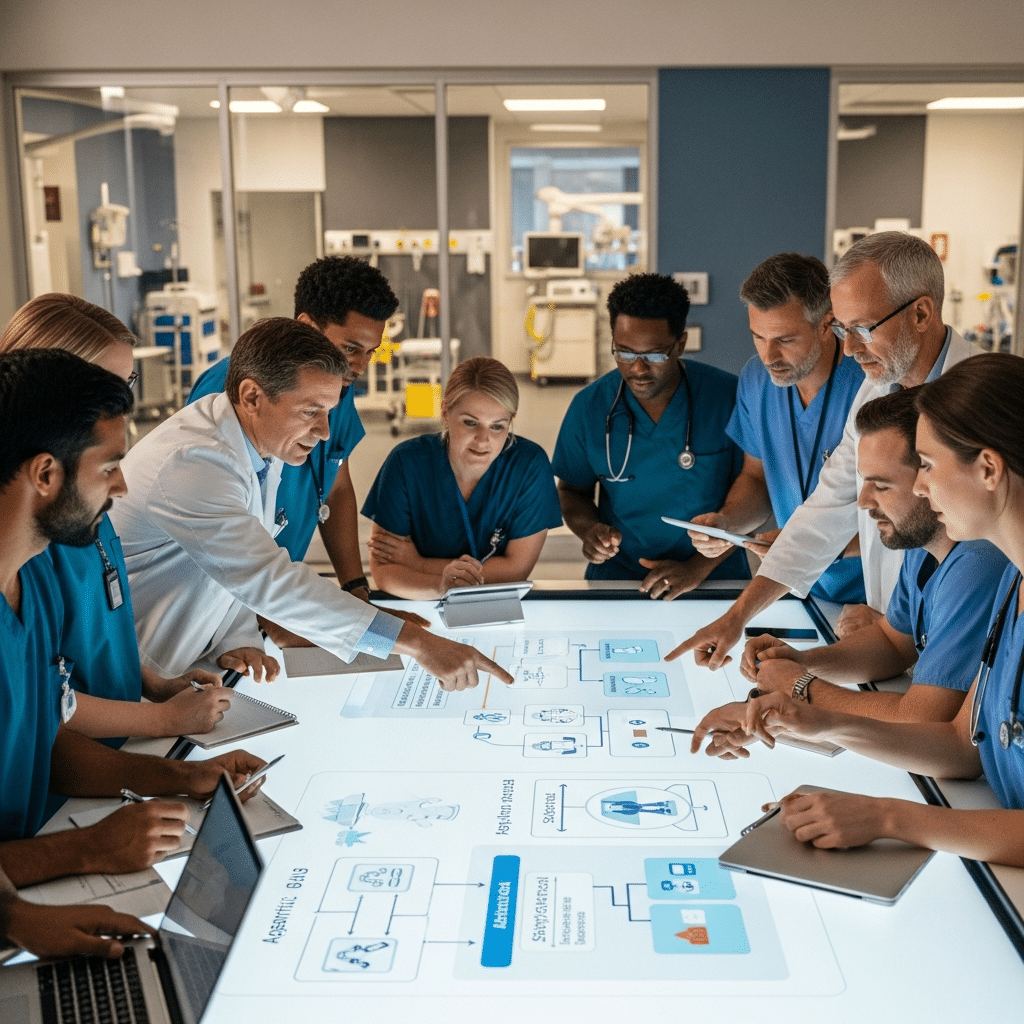

Workforce impacts: new skills, roles, and training priorities

Agentic AI changes the nature of several healthcare roles rather than simply replacing tasks. Clinicians will need skills in system oversight, exception management, and interpreting system rationale when available. IT and informatics teams require competencies in human-in-the-loop monitoring, model performance assessment, and rapid rollback procedures. New hybrid roles—such as clinical AI safety officers or model operations specialists—are increasingly relevant. Training programs should blend technical literacy, human factors, and scenario-based drills that simulate automation failures and edge cases.

Integration and operational safety: workflows, interfaces, and measurement

Successful integration depends on fit with existing workflows and clear user interfaces that minimize cognitive friction. Agentic actions must be transparent and explainable enough for clinicians to understand intent and limitations. Measurement frameworks should include not only traditional performance metrics (accuracy, throughput) but also safety indicators (near-miss frequency, escalation latency), clinician workload, and patient experience. Continuous monitoring with rapid feedback loops is essential to detect drift, emergent failure modes, or unintended downstream effects.
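For informatics teams, the two safety indicators named above can be made concrete with a small calculation over an action log. The sketch below is illustrative only: the `AgentAction` schema, its field names, the per-1,000-actions normalization, and the definition of escalation latency as the time from escalation to human acknowledgement are all assumptions, not an established standard or any vendor's format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class AgentAction:
    """One logged agentic action (hypothetical schema for illustration)."""
    action_id: str
    started_at: datetime
    near_miss: bool = False                      # flagged on review as a near miss
    escalated_at: Optional[datetime] = None      # when the system alerted a human
    acknowledged_at: Optional[datetime] = None   # when a human responded


def near_miss_rate(actions: List[AgentAction]) -> float:
    """Near misses per 1,000 agentic actions (assumed normalization)."""
    if not actions:
        return 0.0
    return 1000 * sum(a.near_miss for a in actions) / len(actions)


def median_escalation_latency(actions: List[AgentAction]) -> Optional[timedelta]:
    """Median time from escalation to acknowledgement.

    Escalations that were never acknowledged are excluded here; a real
    dashboard would likely report those separately.
    """
    latencies = [
        a.acknowledged_at - a.escalated_at
        for a in actions
        if a.escalated_at is not None and a.acknowledged_at is not None
    ]
    return median(latencies) if latencies else None
```

Tracking the median rather than the mean keeps a single very slow response from dominating the figure, though a team may reasonably prefer a high percentile if worst-case delays are the main concern.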

Call Out: Train for failure modes. Scenario-based training that simulates automation errors accelerates team readiness and exposes gaps in escalation and rollback procedures.

Organizational strategies to bridge the gaps

Health systems that are moving beyond pilots tend to adopt a few pragmatic strategies: centralized oversight bodies to set standards while enabling local adaptation; staged rollouts with mandatory safety checkpoints; investment in upskilling programs that combine on-the-job coaching with formal curricula; and mechanisms to capture frontline feedback quickly. Importantly, leaders who involve clinicians early—co-designing interfaces and governance—see faster acceptance and safer adoption.
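A staged rollout with mandatory safety checkpoints can be expressed as a simple gate that decides whether a deployment advances, holds, or rolls back. The following is a minimal sketch under stated assumptions: the stage names, the `Checkpoint` fields, and the rule that a near-miss rate above twice the threshold triggers a rollback are hypothetical choices for illustration, and an oversight body would set its own criteria.

```python
from dataclasses import dataclass

# Hypothetical rollout stages, from least to most exposure.
STAGES = ["shadow", "single_unit", "multi_unit", "system_wide"]


@dataclass(frozen=True)
class Checkpoint:
    """Criteria that must be met before advancing (illustrative values only)."""
    max_near_miss_rate: float      # near misses per 1,000 actions
    min_clinician_signoffs: int    # frontline reviewers who approved the stage


def evaluate_checkpoint(stage: str, near_miss_rate: float,
                        signoffs: int, checkpoint: Checkpoint) -> str:
    """Return the stage to run next: advance, hold, or roll back one stage."""
    idx = STAGES.index(stage)
    # Assumed rollback rule: safety signal is well beyond the threshold.
    if near_miss_rate > 2 * checkpoint.max_near_miss_rate:
        return STAGES[max(idx - 1, 0)]
    # Advance only when both the safety and the sign-off criteria are met.
    if (near_miss_rate <= checkpoint.max_near_miss_rate
            and signoffs >= checkpoint.min_clinician_signoffs):
        return STAGES[min(idx + 1, len(STAGES) - 1)]
    # Otherwise hold at the current stage.
    return stage
```

Requiring clinician sign-offs alongside the safety threshold mirrors the point about early clinician involvement: a stage does not advance on metrics alone.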

Implications for healthcare industry and recruiting

For health systems: the next 12–24 months will be decisive. Organizations that fail to invest in governance, clinical oversight roles, and structured training risk stalled deployments and potential patient safety incidents. For vendors and integrators: demonstrating robust validation processes, human-centered design, and clear rollback mechanisms is increasingly a market differentiator.

For recruiting and talent strategy: the demand for professionals who can bridge clinical, technical, and operational domains will rise. Job descriptions should reflect hybrid competencies—clinical credibility, data literacy, and process improvement experience.

Conclusion: practical priorities for leaders

Agentic AI presents real efficiency and care-quality opportunities, but its safe, scalable adoption depends on organizational readiness that extends beyond technology. Leaders should prioritize: building governance and validation pipelines, defining accountability and escalation pathways, redesigning roles and training for human–AI collaboration, and implementing measurement systems that capture safety and workflow impacts. Those investments will determine whether agentic AI becomes a reliable partner in care delivery or a fragmented set of pilots with inconsistent outcomes.

Sources

43% of healthcare leaders piloting agentic AI: study – Becker’s Hospital Review

How health systems are tackling AI’s workforce readiness gap – Becker’s Hospital Review