Why this theme matters now

Healthcare organizations face two pressures at once: executives and investors expect digital transformation to reduce costs and improve outcomes, while clinical teams want tools that actually help care delivery. That tension has converged on a single test: return on investment, which now sits at the center of AI adoption decisions in healthcare. With vendors pitching ambient AI platforms as foundational infrastructure and enterprise leaders pushing rapid pilots, the industry faces a choice between rushed adoption and disciplined evaluation. How organizations resolve that choice will shape budgets, procurement, workforce needs, and the credibility of AI in clinical settings for years to come.

Pressure versus proof: why adoption is accelerating

Three dynamics are driving rapid AI uptake. The first is competitive anxiety: leaders fear being overtaken by peers who automate workflows or extract new revenue streams. The second is vendor marketing that positions ambient or generative AI as core infrastructure that will unlock broad efficiencies. The third is visible executive endorsement of experimentation as a strategic imperative. Together these forces shorten decision cycles and push pilot programs into production faster than governance, measurement frameworks, and clinical validation processes can catch up.

Call Out — Strategic context: Rapid adoption without clear metrics shifts the risk from slow ROI to hidden long-term costs: increased clinician burden from poorly integrated tools, opaque downstream impacts on revenue and quality, and opportunity cost from misallocated funding.

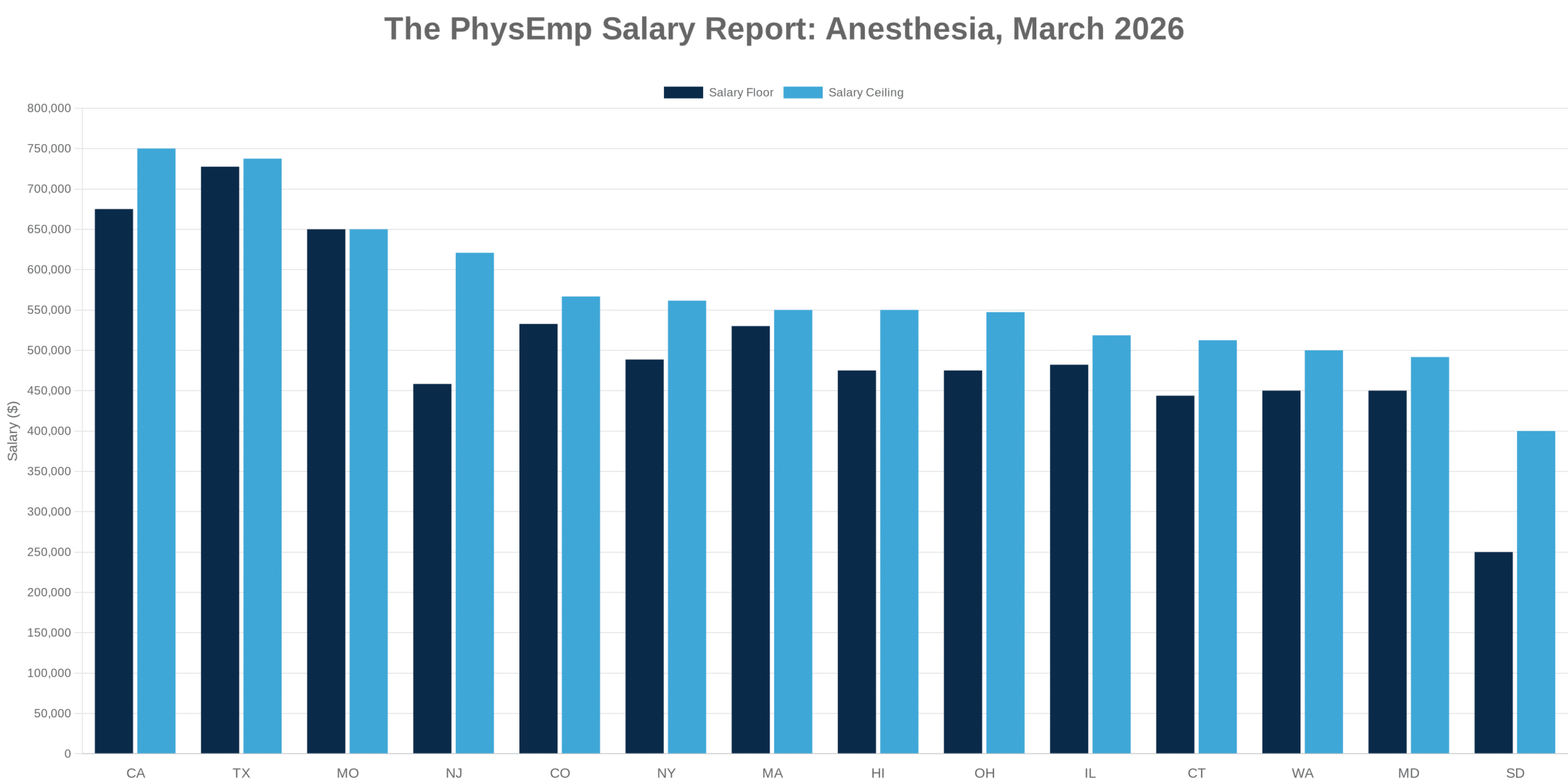

Operational ROI: lessons from revenue cycle deployments

Revenue cycle management is a practical proving ground. When organizations target claim denials, prior authorization, or coding accuracy, the value chain for measurement is tighter — you can map interventions to financial outcomes with reasonable confidence. Successful programs tie model outputs to specific operational workflows, instrument baseline KPIs, and iterate on human–AI handoffs.

Where deployments struggle, problems are predictable: models produce probabilistic outputs but are not embedded into task flows; teams lack clear change management plans; and ROI calculations omit maintenance, governance, and integration expenses. The healthcare groups that find durable benefit treat initial pilots as experiments with pre-specified success thresholds rather than open-ended feature launches.
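To make the cost-omission point concrete, here is a minimal sketch of an ROI check that counts maintenance, governance, and integration alongside licensing, and compares the result with a threshold fixed before the pilot starts. The class name, function names, and all dollar figures are hypothetical illustrations, not figures from any of the cited deployments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCosts:
    """Annual cost categories for a pilot (all values hypothetical)."""
    license: float
    integration: float
    maintenance: float
    governance: float

    def total(self) -> float:
        return self.license + self.integration + self.maintenance + self.governance


def roi(gross_benefit: float, costs: PilotCosts) -> float:
    """Net return per dollar spent: (benefit - total cost) / total cost."""
    total = costs.total()
    if total <= 0:
        raise ValueError("total cost must be positive")
    return (gross_benefit - total) / total


def meets_threshold(gross_benefit: float, costs: PilotCosts, threshold: float) -> bool:
    """Compare measured ROI with a threshold agreed before the pilot began."""
    return roi(gross_benefit, costs) >= threshold


# Hypothetical denial-reduction pilot with $500k in recovered revenue.
full_costs = PilotCosts(license=200_000, integration=80_000,
                        maintenance=60_000, governance=40_000)
license_only = PilotCosts(license=200_000, integration=0,
                          maintenance=0, governance=0)

print(f"ROI counting license only: {roi(500_000, license_only):.2f}")
print(f"ROI counting all costs:    {roi(500_000, full_costs):.2f}")
print("Meets a 0.5 threshold:", meets_threshold(500_000, full_costs, 0.5))
```

With these illustrative numbers, the license-only view reports an ROI of 1.5, while the fully loaded view comes out near 0.32 and misses a 0.5 threshold. The same pilot passes or fails depending on which costs the calculation includes.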

Infrastructure and visibility: closing the ROI gap

One recurring theme is visibility. When leaders report an “AI ROI gap,” the core issue often isn’t that AI can’t create value but that organizations cannot reliably observe where value accrues. Ambient AI platforms promise pervasive assistance — transcribing notes, summarizing charts, flagging actions — but without instrumentation, improvements in clinician time or coding accuracy remain invisible.

Addressing that requires investment in telemetry, A/B testing frameworks, and causal measurement strategies. Instrumentation lets teams compare matched cohorts, measure downstream effects on billing or utilization, and detect regressions. In short, infrastructure spending on monitoring and evaluation is itself an ROI enabler: it turns speculative gains into auditable returns.
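As one illustration of a causal measurement strategy, the sketch below computes a difference-in-differences estimate. It compares the before/after change in a cohort that received an AI tool with the change in a comparison cohort that did not. This technique is chosen here for illustration only; it is not named in the sources. It assumes both cohorts would have followed parallel trends without the tool. The metric (documentation minutes per encounter) and all values are hypothetical.

```python
from statistics import mean
from typing import Sequence


def diff_in_diff(
    treated_before: Sequence[float],
    treated_after: Sequence[float],
    control_before: Sequence[float],
    control_after: Sequence[float],
) -> float:
    """Change in the treated cohort minus change in the control cohort.

    Subtracting the control cohort's change removes shifts that affected
    everyone (seasonality, staffing changes, EHR upgrades), under the
    assumption that both cohorts would otherwise have trended alike.
    """
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical documentation minutes per encounter.
effect = diff_in_diff(
    treated_before=[16, 15, 17],
    treated_after=[12, 11, 13],
    control_before=[16, 16, 16],
    control_after=[15, 15, 15],
)
print("Estimated change attributable to the tool (minutes):", effect)
```

In this toy data the treated cohort improves by 4 minutes, but the control cohort also improves by 1 minute, so only 3 minutes of the gain is attributed to the tool. A production analysis would also need sample sizes large enough for confidence intervals and a check that the cohorts are comparable.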

Call Out — Measurement imperative: Treat measurement infrastructure as a first-class deliverable. Without baseline telemetry and experiment scaffolding, early wins cannot be scaled credibly and leaders will conflate activity with impact.

Leadership expectations, incentives, and experimentation

Executive views vary on what AI should deliver and when. Some senior leaders demand near-term financial payback to justify budgets; others emphasize exploratory testing and capability-building, accepting deferred or indirect returns. Both views have merit, but mismatched incentives create friction. When C-suite expectations emphasize immediate ROI, teams often narrow problem scopes to monetizable workflows and deprioritize clinical validation or clinician experience. When tolerance for long-term learning dominates, procurement can become directionless and budgets diffuse.

Clear policy helps: define which pilots are “value-first” (must meet ROI thresholds within a set window) and which are “capability-first” (intended to inform longer-term strategy). Governance should attach success criteria, measurement plans, and funding horizons to every program so teams aren’t left guessing what constitutes acceptable progress.
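One way to make such a policy checkable is to record each pilot's charter as structured data and flag missing governance fields before funding is approved. The sketch below is a hypothetical illustration: the field names, the `governance_gaps` helper, and the example pilot are assumptions, not an established standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class PilotType(Enum):
    VALUE_FIRST = "value-first"            # must hit an ROI threshold in a set window
    CAPABILITY_FIRST = "capability-first"  # intended to inform longer-term strategy


@dataclass(frozen=True)
class PilotCharter:
    name: str
    pilot_type: PilotType
    success_criteria: str
    measurement_plan: str
    funding_horizon_months: int
    roi_threshold: Optional[float] = None
    roi_window_months: Optional[int] = None


def governance_gaps(charter: PilotCharter) -> List[str]:
    """Return the governance items a charter is missing (empty if complete)."""
    gaps: List[str] = []
    if not charter.success_criteria.strip():
        gaps.append("missing success criteria")
    if not charter.measurement_plan.strip():
        gaps.append("missing measurement plan")
    if charter.funding_horizon_months <= 0:
        gaps.append("missing funding horizon")
    if charter.pilot_type is PilotType.VALUE_FIRST:
        if charter.roi_threshold is None:
            gaps.append("value-first pilot needs an ROI threshold")
        if charter.roi_window_months is None:
            gaps.append("value-first pilot needs an ROI window")
    return gaps


# Hypothetical value-first pilot submitted without ROI terms.
draft = PilotCharter(
    name="Prior authorization triage",
    pilot_type=PilotType.VALUE_FIRST,
    success_criteria="Reduce average authorization turnaround time",
    measurement_plan="Compare against pre-pilot baseline and a control site",
    funding_horizon_months=12,
)
for gap in governance_gaps(draft):
    print(gap)
```

A capability-first charter passes the same check without ROI terms, which keeps the two categories distinct while still requiring success criteria, a measurement plan, and a funding horizon for both.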

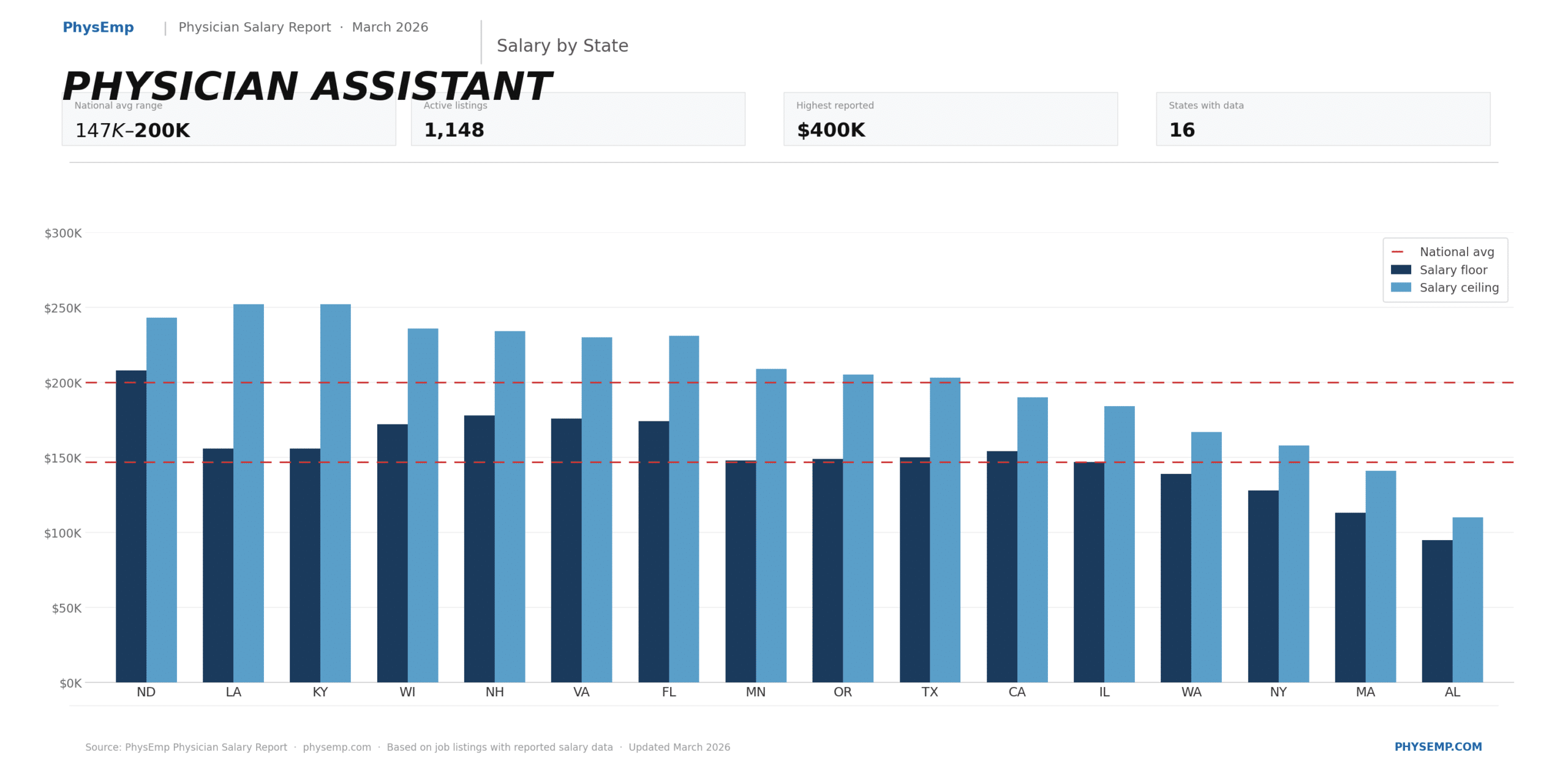

Implications for hiring and workforce planning

AI initiatives change the skills organizations need. Hiring is no longer only about data scientists and vendors; health systems require product managers who understand clinical workflows, implementation specialists who can integrate tools into EHRs, and analysts who can run causal evaluations. For recruiters and platforms that serve this market, demand will grow for hybrid profiles: clinicians with informatics experience, revenue cycle experts with analytics fluency, and project managers who can operationalize A/B tests.

Conclusion — practical next steps for healthcare leaders

AI in healthcare sits at an inflection point: the technology is sufficiently capable to alter operations, but organizational practices for capturing value must mature in parallel. Practical actions include: categorizing pilots by expected time-to-value; investing in instrumentation and experiment design; setting pre-defined success criteria; and aligning hiring to fill measurement and implementation gaps. Those who balance urgency with rigor — adopting promising technologies while building the systems to quantify their effects — will convert experimentation into sustainable returns.

Sources

Ambient AI Framed as Core Healthcare Infrastructure With ROI Focus – TipRanks

How NGHS balances revenue cycle AI adoption, ROI – TechTarget

Jensen Huang says demanding ROI from AI is like forcing a child to make a business plan – Fortune

Thoughts On Davos 2026: The AI ROI Gap Is A Visibility Gap – Forbes

Execs’ AI plans fueled by fear of falling behind, not ROI – HR Executive