Why this theme matters now

Healthcare organizations are increasingly deploying artificial intelligence across diagnostics, monitoring, and device-assisted care. Yet many models and embedded device algorithms underperform once they leave controlled development environments. As regulators tighten oversight and health systems invest in clinical AI at scale, the unresolved problems of missing clinical context, safety assurance, and governance are no longer theoretical: they affect patient outcomes, liability exposure, purchasing decisions, and workforce needs.

Context is not just data: the clinical signal problem

AI models trained on retrospective datasets often lack the situational signals clinicians rely on when making care decisions: evolving patient status, unstructured notes, local practice patterns, and transient operational constraints. Once deployed, models can underperform because they do not account for these contextual inputs or for the way clinicians triangulate information. The gap shows up as degraded predictive value, inappropriate alerts, and recommendations that don’t match bedside realities.

Fixing this requires model designs that embed contextual inputs (workflow metadata, temporality, clinician intent) and evaluation that mirrors real-time use. Prospective, context-aware validation and iterative refinement with clinician feedback are crucial steps in turning models that work in silico into reliable clinical partners.
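To make treating context as data more concrete, the sketch below turns a short series of timestamped vital signs plus one piece of workflow metadata into model inputs: the latest value, a trend, and how stale the last measurement is. It is a minimal illustration under stated assumptions, not a method from the sources: `build_context_features`, `ContextFeatures`, the six-hour lookback, and the care-setting codes are all hypothetical, and the trend is a crude first-to-last slope rather than a fitted one.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass
class ContextFeatures:
    latest_value: float          # most recent observation in the window
    slope_per_hour: float        # crude trend: change between first and last point, per hour
    hours_since_last_obs: float  # how stale the latest measurement is at prediction time
    care_setting: str            # workflow metadata, e.g. "icu" or "ward" (hypothetical codes)


def build_context_features(
    observations: List[Tuple[datetime, float]],
    now: datetime,
    care_setting: str,
    lookback: timedelta = timedelta(hours=6),  # hypothetical window length
) -> ContextFeatures:
    """Turn timestamped observations plus workflow metadata into model inputs."""
    # Keep only observations inside the lookback window, oldest first.
    window = sorted((t, v) for t, v in observations if now - lookback <= t <= now)
    if not window:
        raise ValueError("no observations inside the lookback window")
    (t_first, v_first), (t_last, v_last) = window[0], window[-1]
    span_hours = (t_last - t_first).total_seconds() / 3600
    slope = (v_last - v_first) / span_hours if span_hours > 0 else 0.0
    return ContextFeatures(
        latest_value=v_last,
        slope_per_hour=slope,
        hours_since_last_obs=(now - t_last).total_seconds() / 3600,
        care_setting=care_setting,
    )


# Example: hypothetical heart-rate readings over a morning on a general ward.
obs = [
    (datetime(2024, 1, 1, 8), 80.0),
    (datetime(2024, 1, 1, 10), 90.0),
    (datetime(2024, 1, 1, 12), 100.0),
]
features = build_context_features(obs, now=datetime(2024, 1, 1, 13), care_setting="ward")
print(features.slope_per_hour, features.hours_since_last_obs)  # 5.0 1.0
```

Staleness is included because a prediction made on a six-hour-old reading is not equivalent to one made on a reading from five minutes ago, even when the value is identical.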

Call Out — Clinical Context

Models that ignore temporality, workflow signals, or clinician reasoning will continue to misalign with bedside decision-making. Successful clinical AI must treat context as a data modality, not an afterthought, and validate performance in the live operational environment.

Device integration and safety: from component risk to system-level hazards

AI is not only software; it increasingly resides inside medical devices. That amplifies safety concerns because algorithmic behavior can interact with device hardware, user interfaces, and clinical processes in unpredictable ways. Traditional device testing often emphasizes component-level metrics, but algorithmic change, model drift, and data distribution shifts produce emergent risks that manifest only when the system is in routine use.

Manufacturers and health systems must extend safety engineering to include continuous monitoring, scenario-driven failure mode analysis, and human factors evaluation focused on how clinicians respond to AI outputs. That means complementing premarket evidence with robust post-market surveillance and clear mechanisms for graceful degradation or fail-safe behavior when model confidence or input quality is poor.
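Graceful degradation can be made explicit in software. The minimal sketch below, assuming the device exposes a model confidence score and an input-quality score in [0, 1], withholds the model's output and reverts to the standard clinical workflow when either falls below a floor. The class names, the `gate_output` function, and the 0.80 and 0.90 floors are hypothetical; real limits would be set from validation data and the device's risk analysis.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical floors; real values would come from the device's validation
# data and safety case, not from this sketch.
MIN_CONFIDENCE = 0.80
MIN_INPUT_QUALITY = 0.90


@dataclass
class ModelOutput:
    label: str         # the model's proposed finding
    confidence: float  # model-reported confidence in [0, 1]


@dataclass
class Decision:
    action: str           # "show_prediction" or "fallback"
    label: Optional[str]  # populated only when the prediction is shown
    reason: str           # recorded for post-market surveillance and audit


def gate_output(output: ModelOutput, input_quality: float) -> Decision:
    """Withhold the model output and revert to the standard workflow when
    input quality or model confidence falls below the configured floor."""
    if not 0.0 <= input_quality <= 1.0:
        return Decision("fallback", None, "input quality score out of range")
    if input_quality < MIN_INPUT_QUALITY:
        return Decision("fallback", None, f"input quality {input_quality:.2f} below floor")
    if output.confidence < MIN_CONFIDENCE:
        return Decision("fallback", None, f"confidence {output.confidence:.2f} below floor")
    return Decision("show_prediction", output.label, "within configured limits")


print(gate_output(ModelOutput("atrial fibrillation", 0.95), input_quality=0.97).action)  # show_prediction
print(gate_output(ModelOutput("atrial fibrillation", 0.95), input_quality=0.60).action)  # fallback
```

Recording the reason for every fallback gives post-market surveillance a signal to track: a rising fallback rate is itself an early warning that input data or the patient population has shifted.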

Call Out — Device Safety

AI in devices creates system-level hazards that require lifecycle safety strategies: real-world performance monitoring, clinician-centered interface design, and predefined fallback behaviors to prevent harm when algorithmic outputs are unreliable.

Trust, workflow, and the human–machine boundary

Trust is built when clinicians understand what an AI tool does, when it fails, and how it should influence decisions. Black-box outputs without provenance or uncertainty quantification erode adoption. Equally important is aligning AI behavior with clinical workflows: tools that interrupt, produce low-value alerts, or require time-consuming interpretation will be bypassed, regardless of technical performance.

Practical steps include transparent model cards, integrated uncertainty displays, and estimates of the marginal utility of model outputs at specific decision points. Organizationally, multidisciplinary governance that brings together clinicians, engineers, human factors experts, and legal teams helps ensure that deployment choices reflect clinical realities and the institution's tolerance for risk.
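The article does not prescribe a way to estimate marginal utility. One established option is decision curve analysis, which weighs true positives against false positives at the probability threshold where a clinician would act. The sketch below, using hypothetical data and function names, computes the model's net benefit and subtracts the better of two default strategies: acting on every patient or acting on none.

```python
from typing import Sequence


def _threshold_odds(threshold: float) -> float:
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    # Odds at the threshold weight each false positive relative to a true positive.
    return threshold / (1.0 - threshold)


def net_benefit(y_true: Sequence[int], y_score: Sequence[float], threshold: float) -> float:
    """Net benefit of acting on every patient whose score is >= threshold:
    TP/n - (FP/n) * threshold / (1 - threshold)."""
    odds = _threshold_odds(threshold)
    n = len(y_true)
    tp = sum(1 for y, s in zip(y_true, y_score) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(y_true, y_score) if s >= threshold and y == 0)
    return tp / n - (fp / n) * odds


def treat_all_net_benefit(y_true: Sequence[int], threshold: float) -> float:
    """Net benefit of acting on every patient regardless of the model."""
    odds = _threshold_odds(threshold)
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1.0 - prevalence) * odds


def marginal_net_benefit(y_true: Sequence[int], y_score: Sequence[float], threshold: float) -> float:
    """Gain over the better default: act on everyone, or act on no one (net benefit 0)."""
    baseline = max(treat_all_net_benefit(y_true, threshold), 0.0)
    return net_benefit(y_true, y_score, threshold) - baseline


# Hypothetical outcomes and model scores for ten patients.
y_true = [1, 0, 1, 0, 0, 0, 1, 0, 0, 0]
y_score = [0.9, 0.2, 0.7, 0.4, 0.1, 0.3, 0.6, 0.8, 0.2, 0.1]
print(round(marginal_net_benefit(y_true, y_score, threshold=0.5), 3))  # 0.2
```

A positive result means acting on the model at that threshold beats both defaults for this cohort; repeating the calculation across a range of clinically plausible thresholds shows where the tool adds value and where it does not.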

Governance, validation, and the post-deployment lifecycle

Governance must shift from a one-time approval mindset to continuous stewardship. Key elements include standardized evaluation frameworks that reflect clinical endpoints, mechanisms for detecting and responding to model drift, and clear accountability for updates. Regulatory pathways are evolving to demand real-world evidence and post-market controls; health systems that adopt robust monitoring and update policies will be better positioned to manage both safety and cost.
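Drift detection can start with something as simple as comparing the distribution of a model input or output in production against the distribution seen at validation. The sketch below uses the population stability index (PSI), a widely used distribution-shift statistic; it is one possible choice, not a method named in the sources. The binning scheme, sample data, and the 0.1 and 0.25 cut-offs (a commonly quoted rule of thumb) are assumptions.

```python
import math
from typing import List, Sequence


def _bin_proportions(values: Sequence[float], lo: float, width: float, bins: int) -> List[float]:
    """Share of values falling in each equal-width bin defined on the reference range."""
    counts = [0] * bins
    for v in values:
        idx = int((v - lo) / width)
        idx = min(max(idx, 0), bins - 1)  # clamp values outside the reference range into edge bins
        counts[idx] += 1
    eps = 1e-6  # floor for empty bins so the log term stays finite
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """PSI between a reference (e.g. validation-time) sample and a current production sample."""
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins
    ref_p = _bin_proportions(reference, lo, width, bins)
    cur_p = _bin_proportions(current, lo, width, bins)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def drift_status(psi: float) -> str:
    # Commonly quoted rule-of-thumb cut-offs; a deployment would set and justify its own.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"


reference = [i / 100 for i in range(100)]      # hypothetical score distribution at validation
current = [0.5 + i / 200 for i in range(100)]  # hypothetical production scores shifted upward
print(drift_status(population_stability_index(reference, reference)))  # stable
print(drift_status(population_stability_index(reference, current)))    # investigate
```

In practice the check would run on a schedule for each monitored feature and for the model's score distribution, with an "investigate" result routed to whoever holds accountability for updates. PSI detects shifts in inputs or scores; confirming a drop in clinical performance still requires outcome labels.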

Data governance also matters: datasets must capture population diversity and local practice variation to avoid biased recommendations. Contracts between device makers and health systems should specify responsibilities for retraining, incident response, and transparency around model changes.
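Checking for biased recommendations starts with reporting performance by subgroup instead of only in aggregate. The minimal sketch below computes sensitivity for each group and flags groups that trail the overall figure by more than a tolerance. The group labels, the 0.05 tolerance, and the choice of sensitivity as the metric are hypothetical; a real audit would also examine specificity and calibration and account for small subgroup sizes.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def sensitivity(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """True-positive rate: share of actual positives the model flagged."""
    preds_on_positives = [p for y, p in zip(y_true, y_pred) if y == 1]
    if not preds_on_positives:
        raise ValueError("no positive cases")
    return sum(1 for p in preds_on_positives if p == 1) / len(preds_on_positives)


def sensitivity_by_group(y_true: Sequence[int], y_pred: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    tp: Dict[str, int] = defaultdict(int)
    pos: Dict[str, int] = defaultdict(int)
    for y, p, g in zip(y_true, y_pred, groups):
        if y == 1:
            pos[g] += 1
            tp[g] += int(p == 1)
    return {g: tp[g] / pos[g] for g in pos}  # groups without positive cases are omitted


def flag_underperforming_groups(
    y_true: Sequence[int], y_pred: Sequence[int], groups: Sequence[str], max_gap: float = 0.05
) -> List[str]:
    """Groups whose sensitivity trails the overall sensitivity by more than max_gap."""
    overall = sensitivity(y_true, y_pred)
    per_group = sensitivity_by_group(y_true, y_pred, groups)
    return sorted(g for g, s in per_group.items() if overall - s > max_gap)


# Hypothetical labels, predictions, and group membership for eight patients.
y_true = [1, 1, 1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 1]
groups = ["group_a"] * 4 + ["group_b"] * 4
print(sensitivity_by_group(y_true, y_pred, groups))         # {'group_a': 1.0, 'group_b': 0.0}
print(flag_underperforming_groups(y_true, y_pred, groups))  # ['group_b']
```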

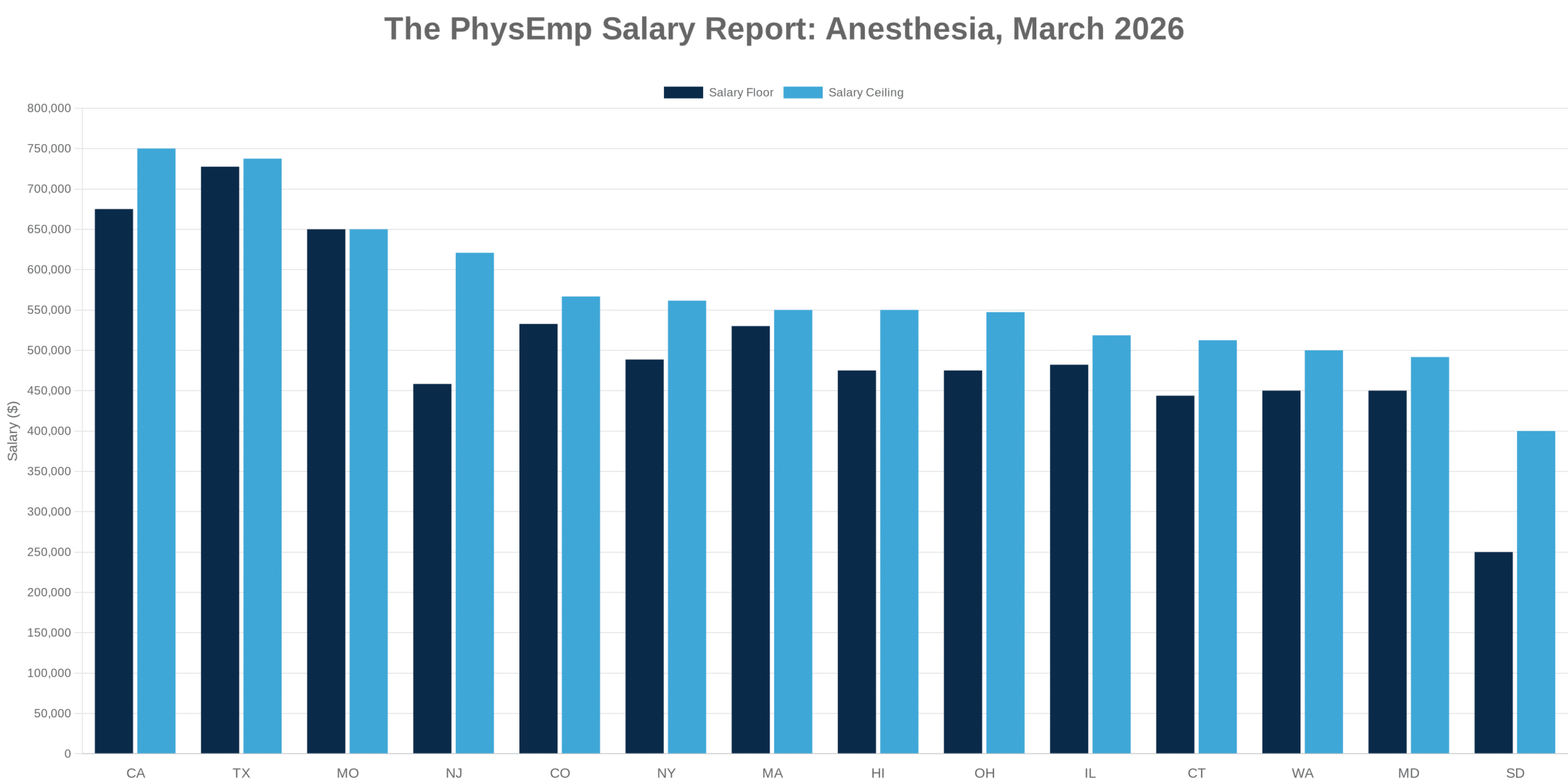

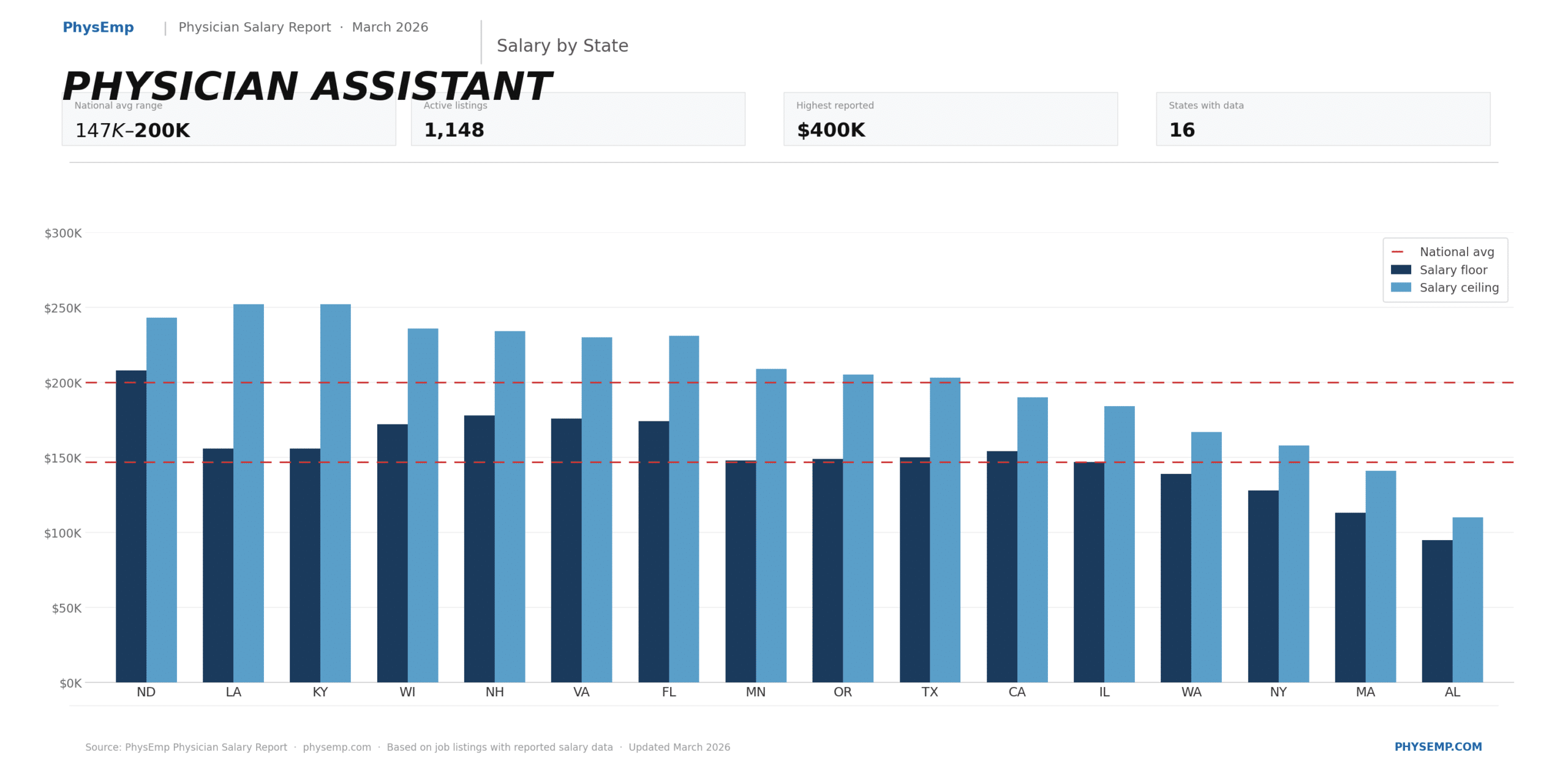

Implications for healthcare industry and recruiting

The clinical shortfalls of current AI create tangible hiring and organizational needs. Health systems must recruit personnel with expertise across a broader risk-management spectrum: clinician-informaticists, medical device safety engineers, human factors specialists, and regulatory experts who can translate model behavior into clinical policy. Vendors need teams that combine clinical domain knowledge, lifecycle safety engineering, and post-market surveillance capabilities.

For AI-minded professionals and recruiters, this means new role definitions and hiring criteria: demonstrated experience with prospective clinical validation, with multidisciplinary deployment projects, and with building monitoring pipelines will be at a premium. Organizations such as PhysEmp that specialize in matching AI talent to healthcare roles can play a constructive role by surfacing candidates with these hybrid competencies.

Conclusion — closing the gap with systems thinking

Delivering on the promise of clinical AI requires moving beyond siloed model development to systems-level thinking: treat context as data, design for safe device integration, build trust through transparency and workflow fit, and make governance continuous. Health systems and manufacturers that adopt these practices will reduce risk, improve clinical value, and accelerate adoption. The next wave of clinical AI success will be defined not by algorithmic novelty, but by durable, governed systems that perform reliably at the point of care.

Sources

Why Healthcare AI Keeps Falling Short At The Point Of Care – Forbes

Medical AI Models Need More Context To Prepare for the Clinic – HMS Harvard

AI in Medical Devices: Safety Questions the Industry Can’t Afford to Ignore – MedTech Intelligence