Why this matters now

Artificial intelligence is moving from controlled research environments and limited pilot programs into routine frontline clinical use. As deployment expands, policymakers are proposing operational guardrails, clinicians are integrating algorithmic tools into diagnostic and triage workflows, and legal experts are reassessing how liability frameworks apply when AI outputs influence care decisions.

These debates extend beyond theoretical governance: they directly affect procurement strategy, malpractice exposure, privileging standards, and workforce planning. Health systems must now reconcile the pace of innovation with accountability mechanisms that define who is responsible when AI-informed decisions shape patient outcomes.

These developments sit squarely within the broader transformation of AI in Physician Employment & Clinical Practice, where regulatory design, clinical authority, and employment structures converge to determine how AI-enabled care is implemented and governed at scale.

Policy responses: bans, boundaries, and unintended consequences

Some jurisdictions are exploring or enacting prohibitions on specific clinical uses of AI, particularly where algorithms would directly influence patient prioritization or allocation of scarce resources. Such measures are driven by concerns about opaque models, insufficient validation, and the risk of embedding systemic bias into time-sensitive decisions. But blunt restrictions can produce trade-offs: banning a class of tools may slow deployment of systems that could, if properly designed and monitored, improve throughput and safety. The core policy challenge is not simply whether to allow models, but under what governance, transparency, and validation conditions their use should be permitted.

Analytical note

Policy that focuses only on prohibitions risks pushing innovation into narrower, less-regulated channels or creating a compliance treadmill in which vendors optimize for minimal legal compliance rather than clinical performance and equity.

Bias and specialty gaps: where AI can help—and harm—women’s health

AI applications targeted at historically under-resourced areas of care—such as aspects of women’s health—promise to close diagnostic and access gaps. Yet training data, label conventions, and development choices can reproduce existing blind spots: underrepresentation of certain patient groups, reliance on proxy outcomes, and male-centric clinical benchmarks can all skew performance. The result is a dual possibility: well-designed models could surface patterns clinicians miss; poorly designed models could misclassify risk or deprioritize care for the very populations they were meant to serve.

Call Out: Careful dataset curation and continuous outcome monitoring are the practical levers that determine whether AI narrows or widens disparities in specialized care. Governance must mandate both diverse training data and real-world performance audits.
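A real-world performance audit of the kind described above can start with something very simple: computing the same metric separately for each patient subgroup and flagging groups that lag. The following is a minimal sketch, not a validated audit method. The record layout `(group, actual, predicted)`, the function names, and the 10-point gap threshold are all assumptions chosen for illustration.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity (true-positive rate) per subgroup.

    `records` is an iterable of (group, actual, predicted) tuples, where
    `actual` and `predicted` are truthy when the condition is present or
    flagged. This layout is hypothetical, not a standard format.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, actual, predicted in records:
        if not actual:
            continue  # sensitivity only considers cases where the condition is present
        if predicted:
            true_pos[group] += 1
        else:
            false_neg[group] += 1

    # Groups with no positive cases are omitted rather than reported as 0.
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def flag_gaps(sensitivities, max_gap=0.10):
    """Return subgroups whose sensitivity trails the best group by more than `max_gap`.

    The default threshold is an illustrative assumption, not a clinical standard.
    """
    best = max(sensitivities.values())
    return sorted(g for g, s in sensitivities.items() if best - s > max_gap)
```

Running a check like this on a schedule, against outcomes observed after deployment rather than the original validation set, is one way to turn "continuous outcome monitoring" into a routine report. Which subgroups and metrics matter is a clinical and governance decision that the code does not make.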

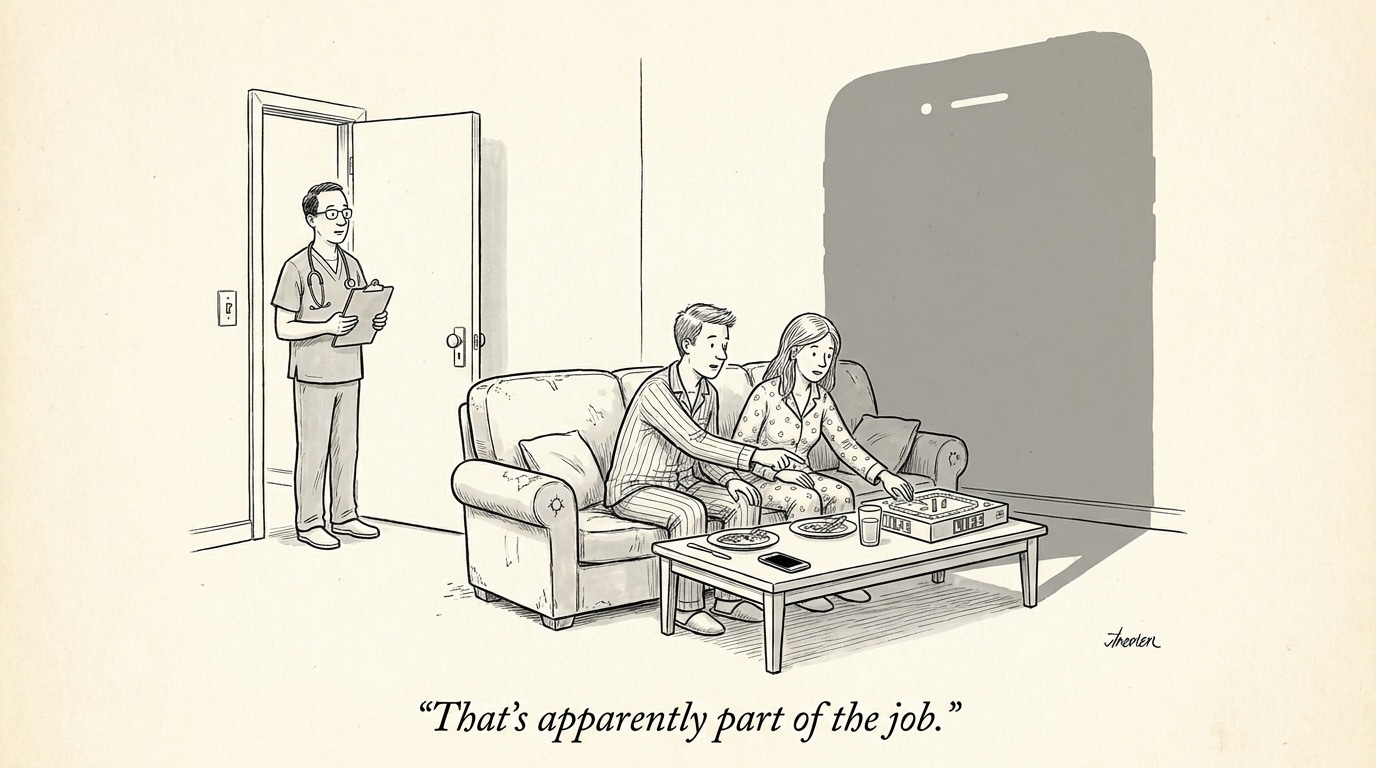

Accountability when AI speaks: legal and clinical responsibility

When an algorithm issues a recommendation, whether as text, a score, or an alert, the question of who is accountable becomes acute. Is it the vendor who built the model, the health system that deployed it, the clinician who acted on it, or some combination? Current regulatory and malpractice frameworks were not designed for decision-making ecosystems in which opaque statistical models can produce plausible but incorrect outputs. This ambiguity affects provider behavior in both directions: clinicians may over-rely on an algorithmic suggestion (automation bias), or fear of liability may lead them to ignore useful alerts.

Operational implications

Practically, institutions are likely to respond by layering governance: stricter procurement standards, requirements that tools be deployed only with a human in the loop, documentation trails recording how algorithmic outputs influenced decisions, and explicit informed-consent language where appropriate. Those measures shift workloads, creating new roles such as model stewards, validation engineers, and clinical-AI ethicists, and they change hiring criteria for care teams.
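To illustrate what a documentation trail for algorithmic influence might capture, here is a minimal sketch of an audit record. Every field name, the set of allowed clinician actions, and the JSON log format are hypothetical assumptions for illustration. A real system would follow the institution's own record-keeping and privacy requirements.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical vocabulary for how a clinician responded to the model output.
ALLOWED_ACTIONS = {"accepted", "modified", "overridden"}


@dataclass(frozen=True)
class AlgorithmicInfluenceRecord:
    """One entry in an audit trail linking a model output to a human decision."""
    model_id: str
    model_version: str
    output_summary: str
    clinician_id: str       # the human in the loop who made the decision
    clinician_action: str   # one of ALLOWED_ACTIONS
    rationale: str
    recorded_at: str        # ISO 8601 timestamp in UTC


def make_record(model_id, model_version, output_summary,
                clinician_id, clinician_action, rationale=""):
    """Build a record, rejecting entries that lack a named decision-maker."""
    if not clinician_id:
        raise ValueError("a human decision-maker must be recorded")
    if clinician_action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {clinician_action!r}")
    return AlgorithmicInfluenceRecord(
        model_id=model_id,
        model_version=model_version,
        output_summary=output_summary,
        clinician_id=clinician_id,
        clinician_action=clinician_action,
        rationale=rationale,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record):
    """Serialize a record as one JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Recording the model version alongside the clinician's action is the design choice that matters most here: it lets a later review distinguish a flawed model release from a flawed human decision.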

Call Out: Clear liability allocation combined with robust clinical governance reduces both legal exposure and clinician mistrust. Health systems that formalize roles for model validation and post-deployment monitoring will be better positioned to adopt AI safely.

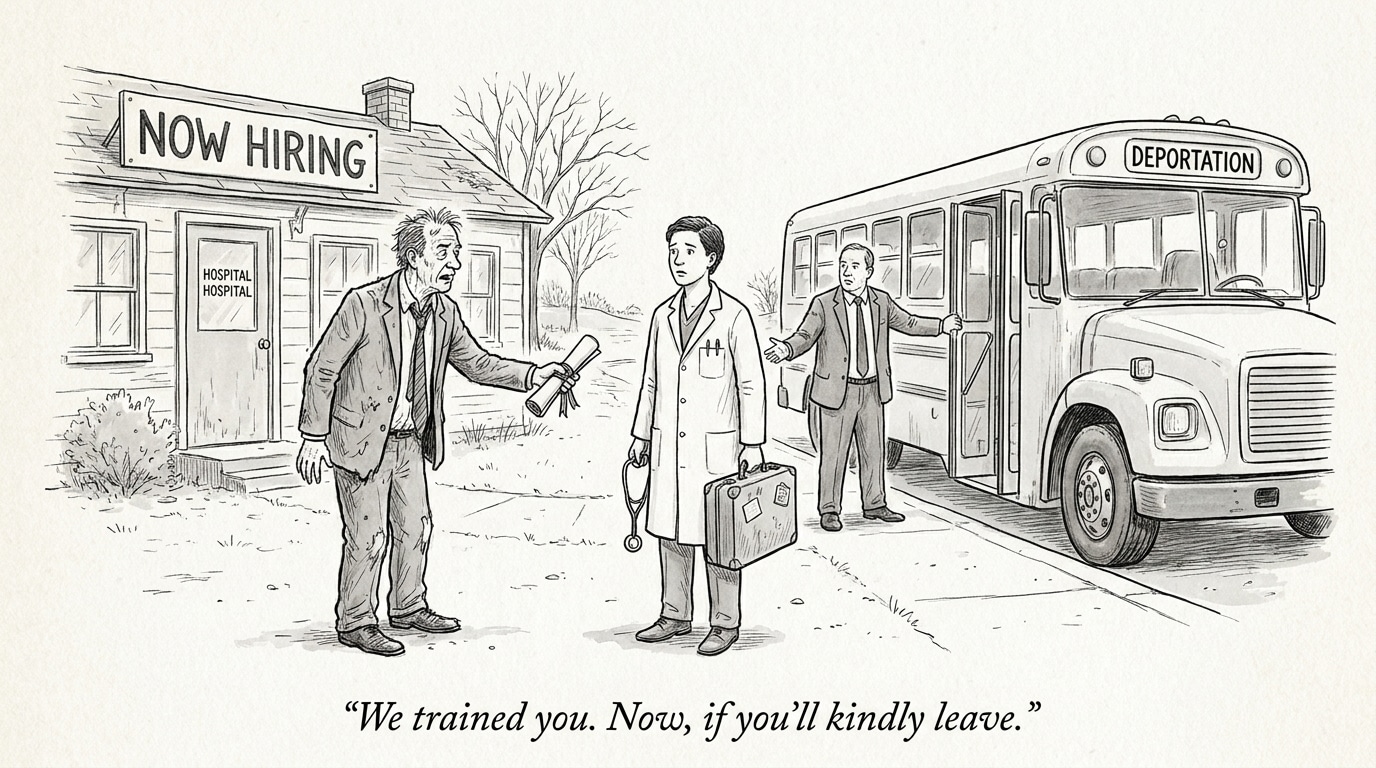

Workforce and recruitment implications

The confluence of policy scrutiny and technical complexity will reshape hiring needs. Health systems will need hybrid talent: clinicians who understand AI limits, data scientists fluent in clinical workflows, and compliance specialists who can translate regulation into operational policy. For recruiters, this creates demand for profiles that blend domain expertise with governance acumen. Job descriptions will increasingly call for demonstrated experience in model evaluation, bias mitigation, and cross-disciplinary communication.

Implications for the healthcare industry

Three practical implications follow for health systems, vendors, and regulators:

- Governance-first procurement. Healthcare organizations should make validated, auditable governance a precondition for procurement. Contracts must include performance thresholds, transparency requirements, and obligations for post-market surveillance.

- Measurement beyond accuracy. Evaluation should prioritize clinical outcomes, subgroup performance, and workflow impact rather than isolated technical metrics. Continuous monitoring with real-world feedback loops is essential.

- Workforce transformation. The adoption curve will favor organizations that invest in cross-functional teams and clear processes for human oversight. Recruiting priorities should shift to combine clinical judgment with capabilities in AI oversight.
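The contractual performance thresholds and post-market surveillance mentioned in the list above can be connected by a routine check that compares observed metrics against agreed minimums. The sketch below assumes hypothetical metric names and threshold values. Actual contract terms would be negotiated between the health system and the vendor.

```python
def check_thresholds(observed, thresholds):
    """Return breaches as (metric, observed_value, required_minimum) tuples.

    `thresholds` maps a metric name to its contractual minimum. A metric
    missing from `observed` is reported as a breach with value None, on the
    assumption that unreported performance should not pass silently.
    """
    breaches = []
    for metric, required in thresholds.items():
        value = observed.get(metric)
        if value is None or value < required:
            breaches.append((metric, value, required))
    return breaches


# Illustrative values only; these are not recommended clinical thresholds.
contract_minimums = {"sensitivity": 0.85, "specificity": 0.80}
monitored = {"sensitivity": 0.88, "specificity": 0.76}
breaches = check_thresholds(monitored, contract_minimums)
```

In this example, `breaches` contains a single entry for specificity, which would trigger whatever remediation the contract specifies.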

Conclusion

Debates about whether to restrict particular clinical AI uses reflect deeper questions about accountability, equity, and the role of automation in care. Policy interventions can correct market failures and protect patients, but they must be paired with operational standards that enable responsible innovation. For health systems and talent platforms, the imperative is clear: build governance, measure impact by real-world outcomes, and hire for the intersection of clinical expertise and AI stewardship. That combination will determine whether AI becomes a lever for more equitable care or a source of amplified risk.

Sources

Can AI Bridge the Gaps in Women’s Healthcare? A Responsible Path Forward – MedCity News