Why this matters now

Regulators and auditors are moving from theoretical guidance to concrete scrutiny of employer use of AI in hiring. New audits, emerging local laws, and updated best-practice guidance mean organizations that treat AI as a neutral efficiency tool risk legal exposure, reputational damage, and operational disruption. Health systems and healthcare employers must align their hiring practices with the broader trust expectations surrounding AI in physician employment and clinical practice.

1. Audit reality: documentation, traceability, and proof

Regulatory attention is increasingly procedural. Employers are being asked to demonstrate how AI systems make decisions, what data informed those models, and what steps were taken to check fairness. In practice, that means logging data sources, versioning models and prompts, preserving validation reports, and retaining correspondence with vendors. For healthcare employers, where credentialing, licensure checks, and clinical fit are scrutinized, this recordkeeping must integrate with existing HR and compliance systems so that AI-assisted hiring decisions are auditable end-to-end.

Call Out — Evidence over assertion: Regulators will favor demonstrable evidence of testing and mitigation over high-level policy statements. Employers must produce test artifacts, bias metrics, and contractual assurances to survive audits.
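As a rough illustration of the recordkeeping described above, the sketch below shows one way to capture a single AI-assisted screening decision as an audit record. The schema, field names, and identifiers are all hypothetical and not drawn from any regulation or vendor product; the hash is simply one possible way to make later edits to a record detectable.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ScreeningAuditRecord:
    """One entry per AI-assisted screening decision (hypothetical schema)."""
    candidate_ref: str              # pseudonymous ID rather than a name
    role: str
    model_version: str              # version of the screening model
    prompt_version: str             # version of the prompt or configuration
    data_sources: tuple[str, ...]   # where the model inputs came from
    ai_recommendation: str
    validation_report_ref: str      # pointer to the preserved validation report
    recorded_at: str                # ISO 8601 timestamp supplied by the caller

    def fingerprint(self) -> str:
        """SHA-256 of the canonical JSON form, so tampering is detectable."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Illustrative values only.
record = ScreeningAuditRecord(
    candidate_ref="cand-0001",
    role="hospitalist",
    model_version="screener-2.3.1",
    prompt_version="prompt-14",
    data_sources=("ats_application", "license_registry"),
    ai_recommendation="advance",
    validation_report_ref="validation/2026-q1.pdf",
    recorded_at="2026-01-15T12:00:00+00:00",
)

# One JSON line per decision, suitable for an append-only log.
log_line = json.dumps({**asdict(record), "sha256": record.fingerprint()})
print(log_line)
```

Storing a pseudonymous reference instead of candidate details keeps the log useful for audits without turning it into another copy of sensitive data.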

2. Bias testing isn’t optional; it must be methodical and context-aware

Surface-level fairness checks are insufficient. Effective bias assessment requires representative datasets, outcome-driven metrics, and attention to the specific harms relevant to clinical roles. For instance, disparate impact on protected groups is legally and ethically significant, but in healthcare hiring there’s an additional layer: tools must not systematically exclude candidates with uncommon but essential clinical backgrounds or nontraditional training paths. That calls for role-specific fairness thresholds and clinician-informed validation cohorts.
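One common starting point for a disparate impact check is to compare selection rates across groups. The sketch below is a minimal example under stated assumptions: the default 0.8 threshold follows the "four-fifths" rule of thumb from the US EEOC Uniform Guidelines, which is a screening heuristic rather than a legal determination, and the group labels and counts are invented for illustration. The `threshold` parameter is left adjustable so that role-specific thresholds can be applied.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, advanced) pairs. Returns {group: rate}."""
    totals, selected = Counter(), Counter()
    for group, advanced in outcomes:
        totals[group] += 1
        if advanced:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates advanced; ratios are undefined")
    return {g: r / best for g, r in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the chosen threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Invented data: group A advances 40 of 100, group B advances 24 of 80.
outcomes = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 56
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

Here group B's rate (0.30) is about 75% of group A's (0.40), so B is flagged for closer review. A ratio check like this says nothing about why the gap exists and does not cover the clinical-background concerns raised above, which still need clinician-informed validation cohorts.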

3. Vendor governance and contractual controls

Many organizations rely on third-party vendors for AI-enabled screening or interviewing. Contract language must allocate responsibilities for data provenance, model updates, re-training schedules, and remedial testing after changes. Contracts should require notifications before substantive model changes, access for independent audits, and definitions of liability. Procurement and legal teams must work with clinical leaders to define acceptable performance criteria tied to patient-safety-related hiring outcomes.

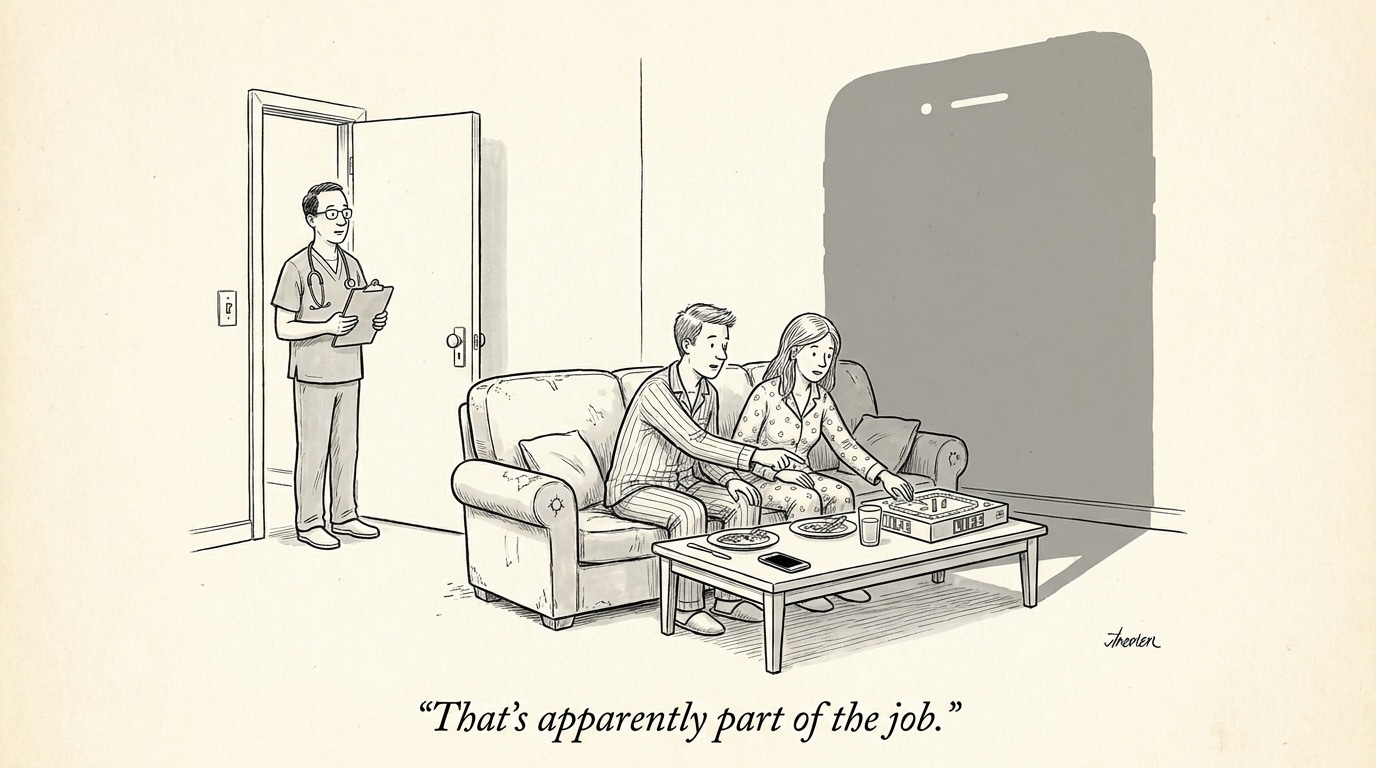

4. Human oversight: calibrated and documented

‘Human-in-the-loop’ cannot be a checkbox. Oversight needs to be systematic: define which decisions are automated vs. human-reviewed, establish criteria for when human review is triggered, and require reviewers to document decisions and rationale. In healthcare recruiting, that means credential verification, clinical scenario assessment, and judgment calls about cultural fit should remain areas where trained humans can override or supplement AI outputs—with the override data captured to inform future model improvements.

Call Out — Human oversight must be measurable: Establish measurable review triggers and track override rates to detect model drift, hidden bias, or operational gaps. Audit trails of overrides are prime evidence in regulatory reviews.
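Tracking override rates can be sketched in a few lines. The example below assumes each human review is stored with the model version, the AI recommendation, and the reviewer's final decision; those field names, the baseline, and the tolerance are hypothetical. It treats a rate that moves far from the baseline in either direction as worth a look, on the assumption that a spike may indicate model problems while a collapse toward zero may indicate rubber-stamping.

```python
from collections import defaultdict


def override_rates(reviews):
    """Share of reviews, per model version, where the human disagreed with the AI."""
    counts = defaultdict(lambda: [0, 0])  # version -> [overrides, total reviews]
    for review in reviews:
        tally = counts[review["model_version"]]
        tally[1] += 1
        if review["human_decision"] != review["ai_recommendation"]:
            tally[0] += 1
    return {version: o / t for version, (o, t) in counts.items()}


def versions_needing_review(rates, baseline, tolerance=0.10):
    """Versions whose override rate differs from the baseline by more than tolerance."""
    return sorted(v for v, rate in rates.items() if abs(rate - baseline) > tolerance)


def make_reviews(version, overrides, agreements):
    """Helper that builds invented review rows for the example."""
    rows = [{"model_version": version, "ai_recommendation": "reject",
             "human_decision": "advance"}] * overrides
    rows += [{"model_version": version, "ai_recommendation": "advance",
              "human_decision": "advance"}] * agreements
    return rows


# Invented data: v1 has 1 override in 10 reviews, v2 has 4 in 10.
reviews = make_reviews("v1", 1, 9) + make_reviews("v2", 4, 6)

rates = override_rates(reviews)
flagged = versions_needing_review(rates, baseline=0.10)
print(rates, flagged)
```

With a 10% baseline and a 10-point tolerance, only `v2` is flagged. In practice the same tally could be broken out by role or reviewer, provided each override is logged with its documented rationale.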

5. Privacy, consent, and candidate communications

Employers must balance operational needs with privacy and transparency obligations. Candidates should receive clear notice when AI tools materially influence hiring decisions, and organizations must explain the types of data used, retention periods, and avenues for redress. Healthcare organizations often process sensitive candidate information; privacy protections and data-minimization principles should guide how model inputs are collected, stored, and shared with vendors.

6. Organizational design: roles, training, and tooling

Navigating AI hiring risk requires multidisciplinary teams: legal, HR, data science, compliance, and clinical leadership. Create formal governance bodies (e.g., an AI hiring review board) to approve tool selection, define acceptable risk levels for different roles, and oversee ongoing monitoring. Training is essential: recruiters, hiring managers, and clinical interviewers must understand the limitations of AI, recognize the signals that should prompt them to question its outputs, and know how to document their own judgments.

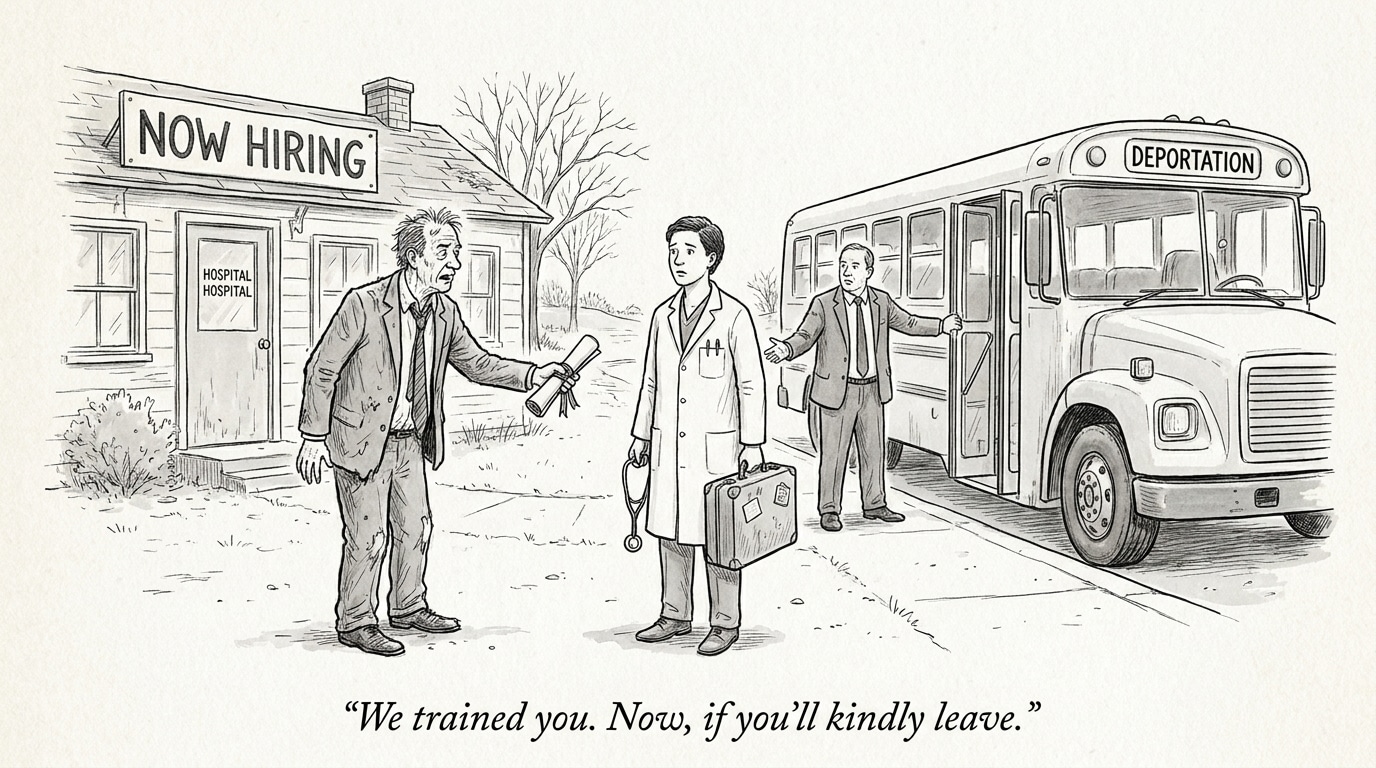

Implications for healthcare industry and recruiting

For health systems and clinics, noncompliance carries more than fines: it can degrade trust in hiring processes that indirectly affect patient care. Recruiters should consider slowing rollouts of automated decision systems until governance and monitoring are in place, or deploying AI in advisory, not determinative, roles. Hiring teams must invest in tooling that produces explainable metrics and supports integration with credentialing workflows.

Operationally, expect increased demand for roles that bridge clinical and AI governance skills—AI compliance officers, clinical data stewards, and vendor risk analysts. For staffing platforms and marketplaces, including AI governance signals in vendor evaluations will become a competitive differentiator.

Practical next steps for healthcare employers

- Inventory: map all automated hiring tools, data flows, and vendor obligations.

- Baseline testing: run role-specific bias and performance tests; document methods and outcomes.

- Contract remediation: update vendor agreements to require model-change notifications, audit access, and defined liability.

- Governance: create an AI hiring review board with clinical representation and formal approval processes.

- Monitoring: operationalize dashboards for fairness metrics, override rates, and candidate appeals.

Sources

AI Considerations and Best Practices for Employers in 2026 – National Law Review

An Employer’s 5-Step Guide to AI Interviewing and Hiring Tools – JD Supra