Why this matters now

Health systems are rapidly integrating AI tools (chatbots, clinical decision support, and patient apps) into workflows and care pathways. That pace of adoption has outstripped consensus on legal responsibility, auditability, and equitable performance. As providers lean on algorithmic assistance, questions about who is accountable when an AI makes a harmful recommendation are moving from academic debate into boardroom and courtroom reality. Resolving these questions requires stronger governance of AI in physician employment and clinical practice, particularly as AI becomes embedded in frontline care.

Bias in clinical AI: mechanisms and consequences

Algorithmic bias in healthcare is rarely an exotic failure mode; it usually arises from predictable sources: skewed training data, incomplete representation of subpopulations, and proxies that correlate with sensitive attributes. Bias can manifest in chatbots that under-recognize symptoms in certain demographic groups, in risk calculators calibrated on historical practice patterns, or in medication-management apps that assume uniform literacy and access.

The downstream consequences are clinical and systemic. Individually, biased outputs can delay diagnosis or generate unsafe care plans. At scale, biased tools can entrench disparities by automating historically unequal treatment patterns. Importantly, bias is not solved solely by adding more data — it requires intentional curation, fairness-aware modeling, and continuous performance monitoring across demographics and clinical contexts.
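To make "performance monitoring across demographics" concrete, the following is a minimal sketch of one possible check: computing sensitivity (true positive rate) per subgroup and the largest gap between groups. The function names, the record layout, and the toy data are all illustrative assumptions, not drawn from any particular tool or dataset, and sensitivity is only one of several fairness metrics a health system might track.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return the true positive rate per group.

    records: iterable of (group, y_true, y_pred) tuples with 0/1 labels.
    Groups with no positive cases are omitted, since sensitivity is
    undefined for them.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    rates = {}
    for group in set(true_pos) | set(false_neg):
        positives = true_pos[group] + false_neg[group]
        rates[group] = true_pos[group] / positives
    return rates


def max_sensitivity_gap(rates):
    """Largest difference in sensitivity between any two groups."""
    if len(rates) < 2:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: (group, actual condition, tool's flag).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 1),
]
rates = sensitivity_by_group(records)
gap = max_sensitivity_gap(rates)
print(rates, gap)
```

In this toy example the tool catches two of three true cases in group A but only one of three in group B. A gap of that size would warrant investigation before or after deployment; what threshold counts as acceptable is a clinical and governance decision, not something the code can settle.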

Call Out: Addressing bias demands lifecycle controls — from representative data selection and fairness metrics to post-deployment surveillance. Without those, AI can convert ingrained health inequities into automated, reproducible harm.

Liability architectures: who can be held responsible?

When an AI-driven recommendation contributes to harm, legal responsibility can fall into several buckets: the clinician making the final decision, the health system that deployed the tool, the vendor that designed and maintained the algorithm, or, less commonly, regulatory actors that authorized the product. Current legal frameworks were not written with distributed, adaptive AI systems in mind, which leaves it uncertain how responsibility will be divided among these parties.

Contract law and indemnities between providers and vendors are a first layer of allocation, but they do not eliminate exposure. Tort law (negligence, product liability) may apply depending on whether the AI is treated as a medical device or as a non-device decision aid. Courts will examine foreseeability, standard of care, warnings provided, and the clinician’s reliance on the tool. For institutions, governance failures (inadequate testing, training, or monitoring) can produce direct liability for the institution’s own negligence, in addition to any vicarious liability for clinicians’ actions, even if the vendor bears contractual indemnity.

Clinical workforce trust and the adoption problem

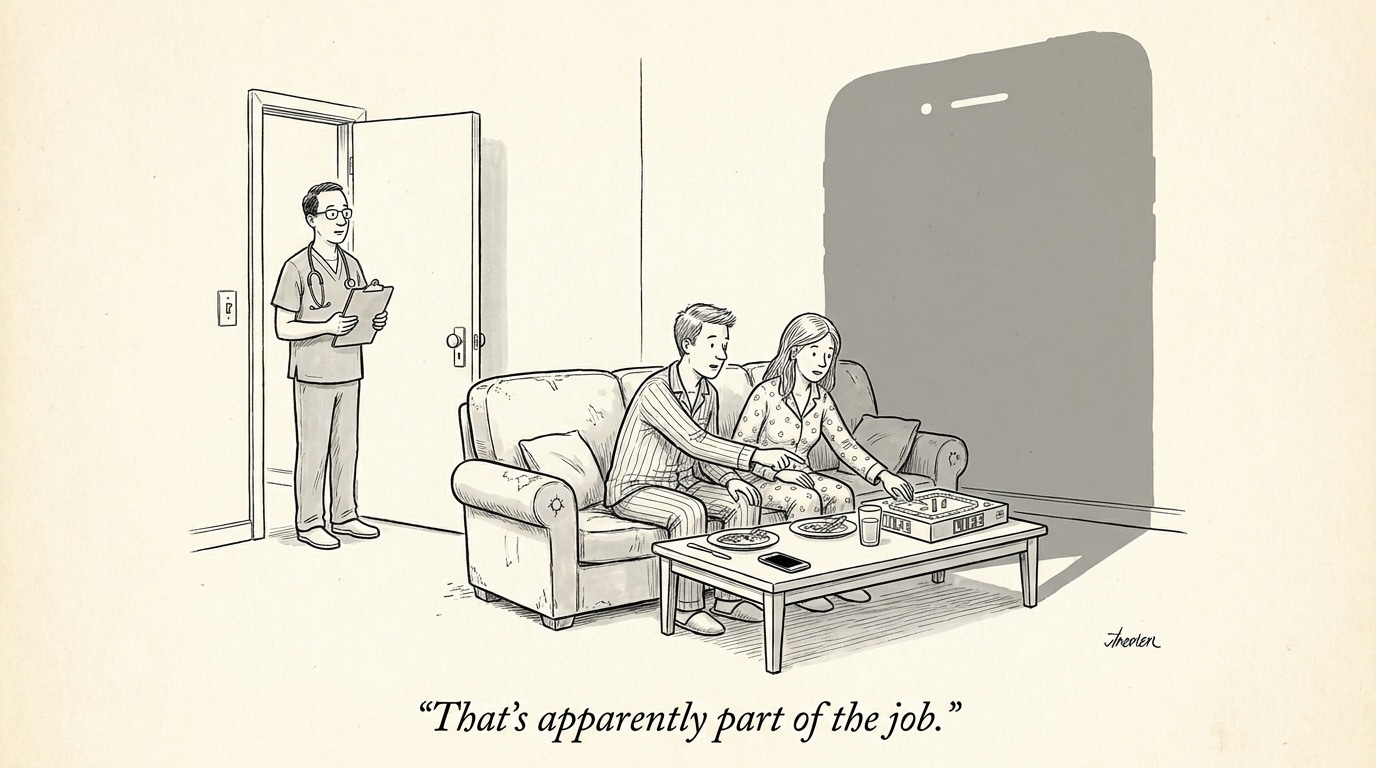

Clinicians are the final safety filter for algorithmic outputs. If they believe they will be blamed or inadequately protected should a tool err, they will resist adoption, second-guess recommendations, or disengage entirely. Recent workforce sentiment data show substantial concern among nurses and other clinicians about legal protection when AI-driven tools cause harm. That concern is consequential: reluctance to use validated tools delays benefits (e.g., early detection, medication reconciliation) and can lead to shadow workflows that reintroduce risk.

Training and clear policies about the role of AI in decision-making are necessary but insufficient. Trust-building requires transparent performance data, documented failure modes, and institutional commitments to how liability will be managed when tools fail. Without that, health systems face both underutilization of valuable aids and increased moral distress among staff.

Patient-facing apps and the shifting boundary of responsibility

As more patients use apps to manage medications, symptoms, and follow-up care, the line between clinical care and consumer-facing technology blurs. When a patient follows an app’s medication-reminder logic or dosing suggestion that leads to harm, the liability question becomes more complex: was the app acting as a recommendation engine or as a prescriber surrogate? Companies have incentives to position products as educational or informational to limit regulatory oversight, but that posture can complicate accountability when harm occurs.

Equally important is the role of digital literacy and access. Tools that assume certain technical skills or consistent connectivity will perform worse for vulnerable populations, entrenching disparate outcomes and opening new avenues for liability rooted in discriminatory impact rather than explicit error.

Call Out: Patient-facing AI moves some clinical decisions into consumer contexts. Healthcare organizations must extend governance to cover apps patients use outside clinical settings and understand how those tools interact with formal care pathways.

Governance and mitigation: practical steps for health systems

Risk reduction is achievable through a combination of contract diligence, clinical validation, deployment controls, and continuous monitoring. Contracts should clarify data rights, liability allocation, and obligations for updates and safety patches. Clinical validation must test for performance across demographic groups and relevant clinical subpopulations. Deployment controls — such as limited rollouts, human-in-the-loop requirements, and explicit escalation paths — reduce exposure while systems learn a tool’s behavior in situ. Post-deployment monitoring, incident reporting, and rapid rollback pathways complete the lifecycle.
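As one illustration of how post-deployment monitoring might feed an escalation path, the sketch below compares each subgroup's observed performance against its validation baseline and flags groups for review. Everything here is a hypothetical assumption for illustration: the `SubgroupCheck` structure, the `review_actions` function, the 0.05 tolerance, and the minimum sample size of 30. Real thresholds would need to be set by clinical and statistical review.

```python
from dataclasses import dataclass


@dataclass
class SubgroupCheck:
    group: str        # subgroup label used in validation
    baseline: float   # metric measured during clinical validation
    observed: float   # same metric measured in live use
    n: int            # number of live cases behind `observed`


def review_actions(checks, tolerance=0.05, min_n=30):
    """Map each subgroup to 'ok', 'escalate', or 'insufficient_data'.

    A group is escalated when its observed metric has dropped below the
    validation baseline by more than `tolerance`. Groups with fewer than
    `min_n` live cases are reported separately rather than judged.
    """
    actions = {}
    for check in checks:
        if check.n < min_n:
            actions[check.group] = "insufficient_data"
        elif check.baseline - check.observed > tolerance:
            actions[check.group] = "escalate"
        else:
            actions[check.group] = "ok"
    return actions


# Hypothetical monitoring snapshot.
checks = [
    SubgroupCheck("A", baseline=0.90, observed=0.89, n=200),
    SubgroupCheck("B", baseline=0.90, observed=0.80, n=150),
    SubgroupCheck("C", baseline=0.90, observed=0.70, n=12),
]
actions = review_actions(checks)
print(actions)
```

Reporting small groups as "insufficient_data" instead of silently passing them is a deliberate choice in this sketch: a subgroup with too few cases to evaluate is itself a monitoring gap that an oversight committee may want to see.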

Regulators and payers will increasingly expect these governance practices. Organizations that adopt them will not only reduce legal risk but also improve clinician confidence and patient outcomes. For health systems, establishing central AI oversight committees and integrating legal, compliance, clinical, and IT teams is no longer optional; it is essential for responsible adoption.

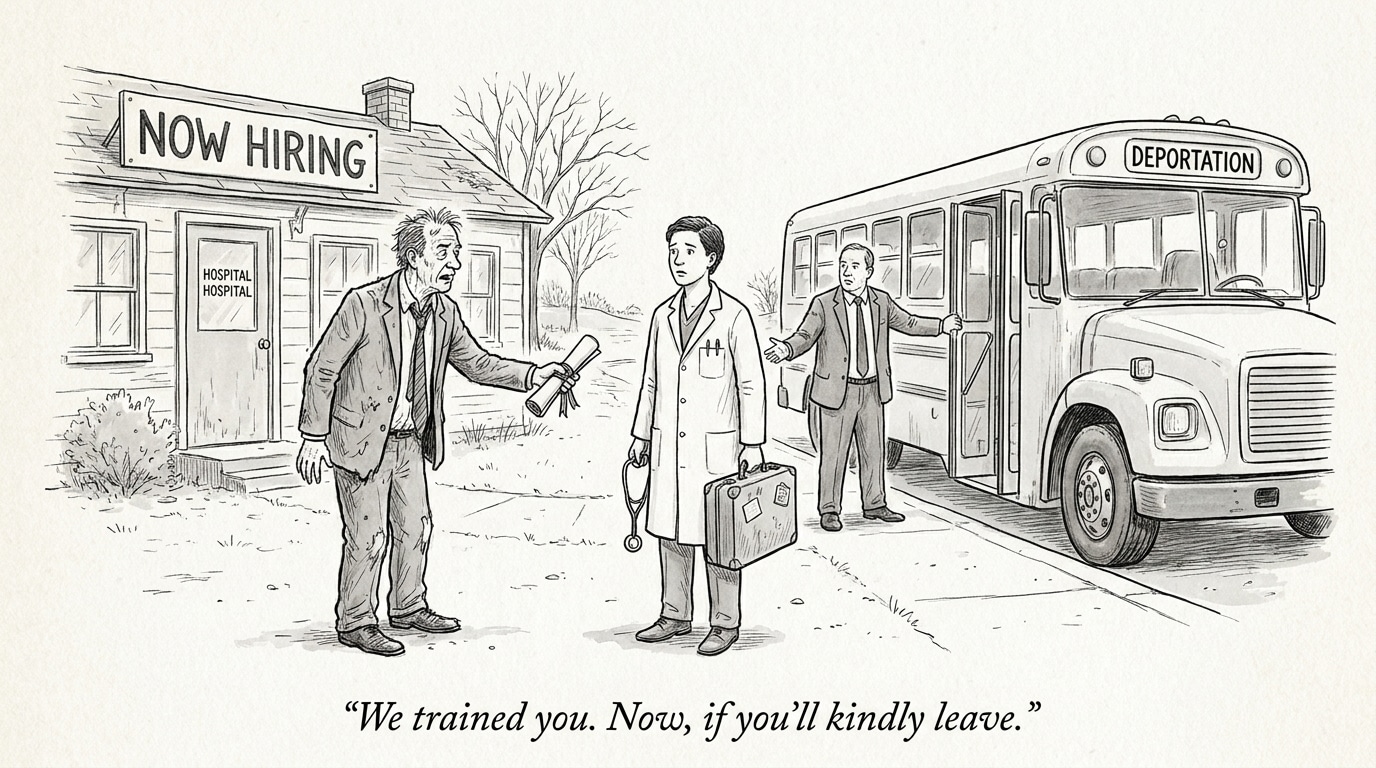

Implications for healthcare industry and recruiting

AI-related liability and bias concerns change the skills health systems must recruit for. Demand will rise for clinicians with data literacy, legal and compliance experts conversant in AI risk, product managers who can manage vendor relationships, and quality professionals skilled in algorithmic audit and monitoring.

Ultimately, resolving who bears responsibility when AI causes harm will require coordinated action across providers, vendors, regulators, and legal systems. Until then, health systems that proactively build governance, transparency, and workforce protections will be best positioned to deploy AI safely and maintain trust.